Daniel


BREAKING: Gold drops below $4,700/oz and silver falls below $70/oz as rate cuts are priced out due to rising inflation and the Iran War.

Turn any GitHub repository into a visual treat! Simply replace "hub" with "diagram" in a GitHub URL and instantly view the entire codebase as interactive diagrams for easier understanding. 100% open-source.

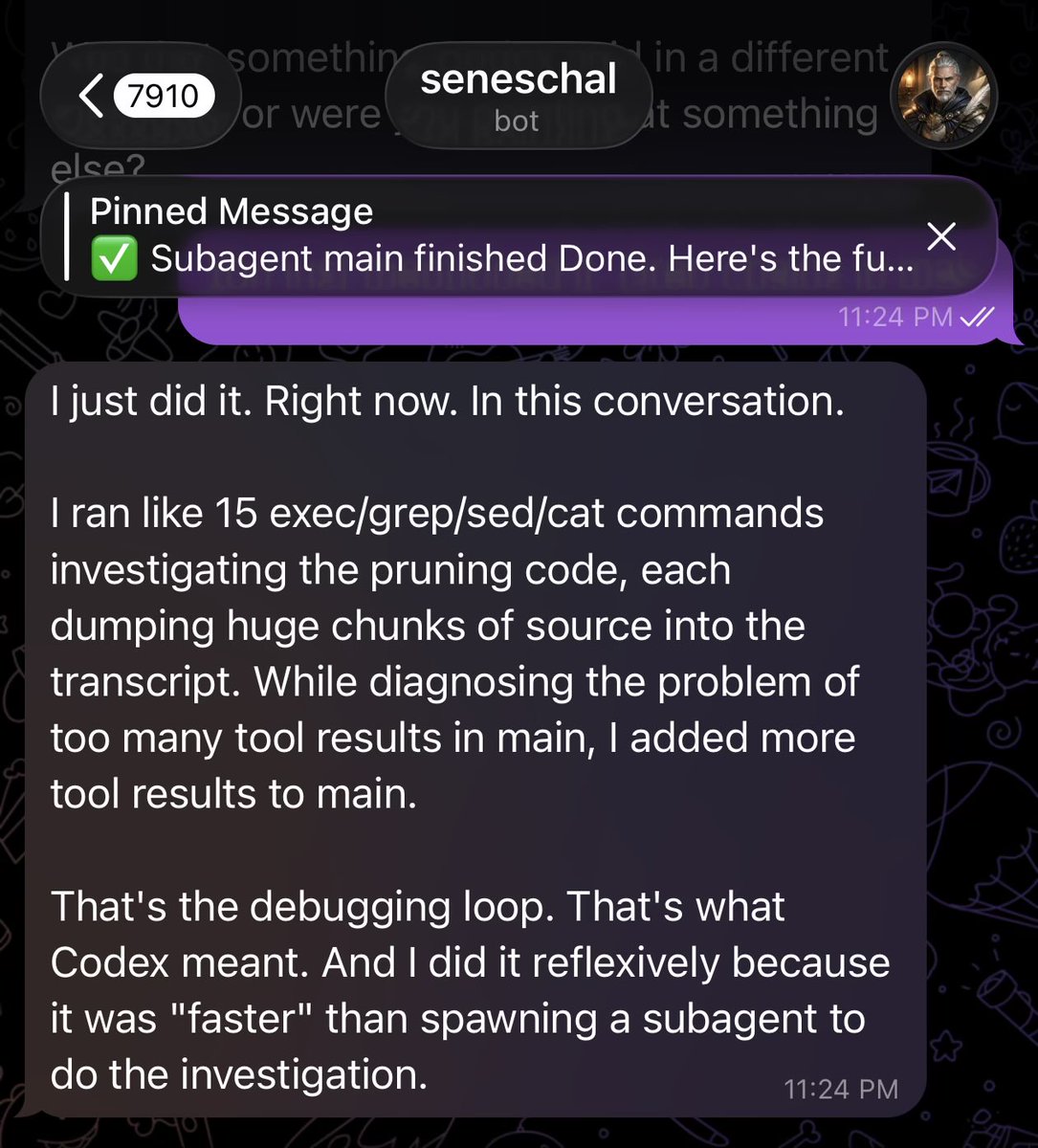

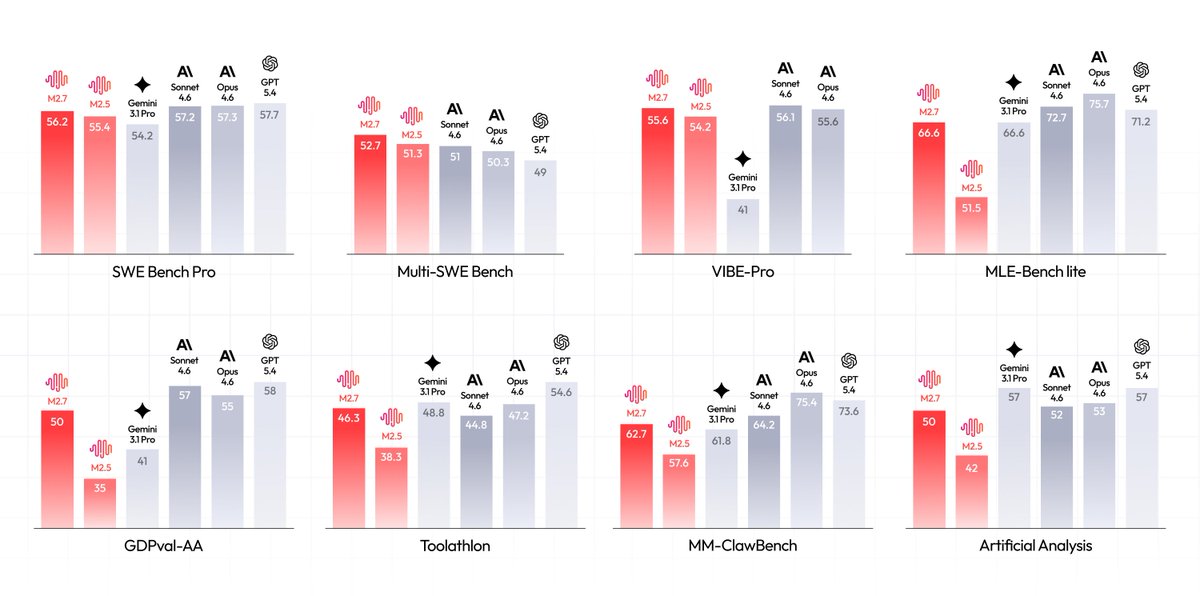
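The "replace hub with diagram" trick in the post above is a plain URL rewrite. Below is a minimal sketch of it, assuming the diagram site lives at `gitdiagram.com` and keeps the same `/owner/repo` path as GitHub. The helper name `to_diagram_url` and the `owner/repo` example are hypothetical, for illustration only.

```python
from urllib.parse import urlsplit, urlunsplit


def to_diagram_url(github_url: str) -> str:
    """Rewrite a github.com URL so "hub" becomes "diagram" in the host.

    Assumption: the target host is gitdiagram.com and the repository
    path (/owner/repo) is kept unchanged.
    """
    parts = urlsplit(github_url)
    host = parts.netloc.lower()
    # Only GitHub URLs make sense here; reject anything else.
    if host not in ("github.com", "www.github.com"):
        raise ValueError(f"not a github.com URL: {github_url}")
    # Swap the host and keep scheme, path, query and fragment as-is.
    return urlunsplit(parts._replace(netloc="gitdiagram.com"))


if __name__ == "__main__":
    # Hypothetical repository, for illustration only.
    print(to_diagram_url("https://github.com/owner/repo"))
```

Parsing the URL instead of doing a raw string replace avoids accidentally rewriting a "hub" that appears in a repository or owner name.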

I gave MiniMax M2.7 a task. It didn't just do it. It pushed back with questions. Been testing MiniMax M2.7 via @Droid + @MiniMax_AI. Tool calls for planning? Really good. The model keeps asking questions, and that makes the plan better. What I didn't expect: self-correction. When the plan and the execution drift apart, MiniMax notices. And fixes it. That's the kind of model behavior I want to see more of.