Daniel Lemire | AI MISTAKES

5.5K posts


@danlemire

25 years in Tech. I help people solve problems, learn the latest tech, and build high-performing systems and teams with the right architecture, automation & AI.

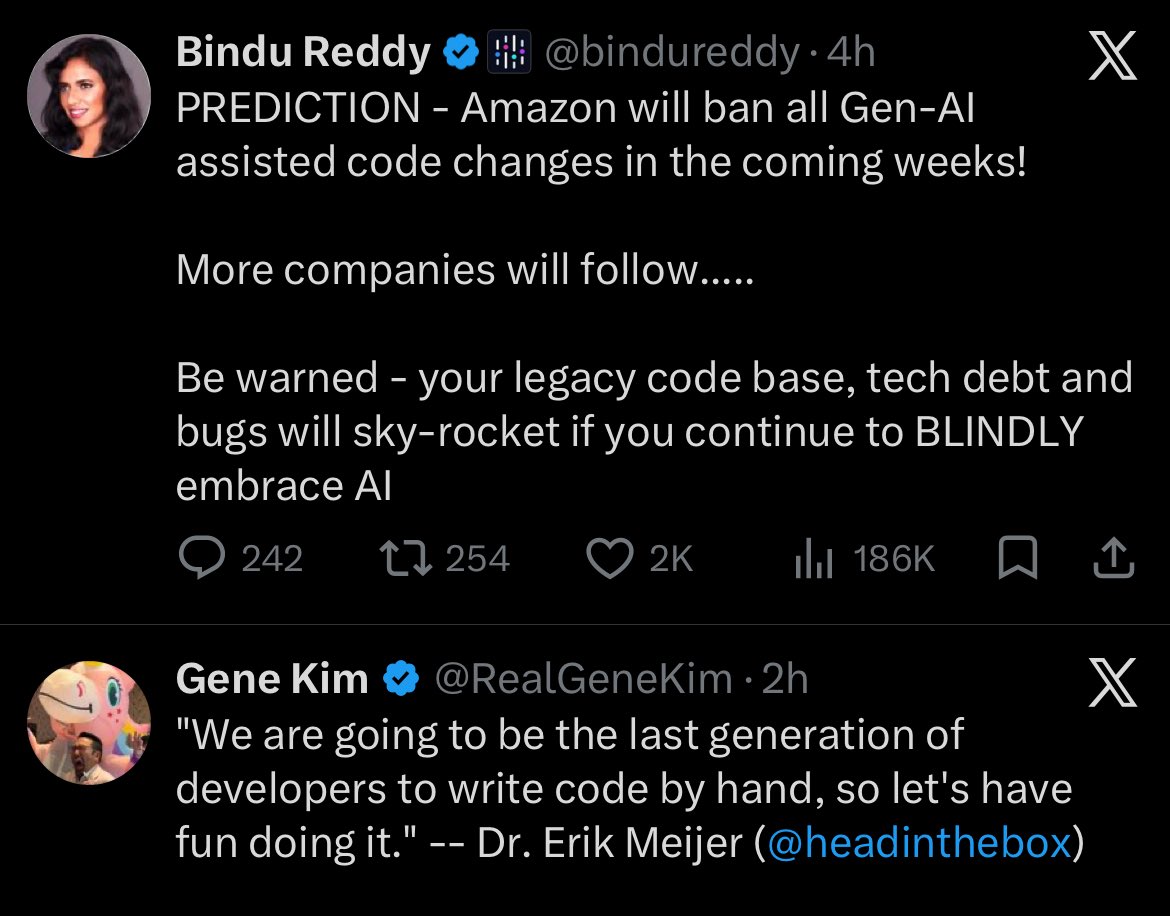

Does AI make us collectively dumber? Is "grok wat do u think" the new "I've outsourced my common sense to a chatbot and I feel great about it"? A study secretly made an AI give wrong answers half the time while people used it to solve logic puzzles. People followed the AI's wrong answers 80% of the time, and their confidence went up regardless. This is "cognitive surrender": the moment people stop asking "is this true?" and start asking "what does the model say? I don't care, too". The habit of deference is sticky.

I’m on week five of trying to vibe code a replacement for some dumb SaaS that we use, and it’s so incredibly frustrating that I’m slowly realizing it’s actually quite a complex and thoughtful piece of software.

🦔 Researchers at Aikido Security found 151 malicious packages uploaded to GitHub between March 3 and March 9. The packages use Unicode characters that are invisible to humans but execute as code when run. Manual code reviews and static analysis tools see only whitespace or blank lines. The surrounding code looks legitimate, with realistic documentation tweaks, version bumps, and bug fixes. Researchers suspect the attackers are using LLMs to generate convincing packages at scale. Similar packages have been found on NPM and the VS Code marketplace.

My Take

Supply chain attacks on code repositories aren't new, but this technique is nasty. The malicious payload is encoded in Unicode characters that don't render in any editor, terminal, or review interface. You can stare at the code all day and see nothing. A small decoder extracts the hidden bytes at runtime and passes them to eval(). Unless you're specifically looking for invisible Unicode ranges, you won't catch it.

The researchers think AI is writing these packages because 151 bespoke code changes across different projects in a week isn't something a human team could do manually. If that's right, we're watching AI-generated attacks hit AI-assisted development workflows. The vibe coders pulling packages without reading them are the target, and there are a lot of them.

The best defense is still carefully inspecting dependencies before adding them, but that's exactly the step people skip when they're moving fast. I don't really know how any of this gets better. The attackers are scaling faster than the defenses.

Hedgie🤗

arstechnica.com/security/2026/…
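For anyone wondering what "specifically looking for invisible Unicode ranges" might look like in practice, here is a minimal Python sketch. It is not the researchers' tooling, and the post does not say which code points the packages used. The ranges and categories below (variation selectors, format characters, private-use characters) and the helper names `is_invisible` and `find_invisible` are my own assumptions for illustration.

```python
import unicodedata

# Assumption: variation selectors, which render as nothing on their own.
VARIATION_SELECTOR_RANGES = ((0xFE00, 0xFE0F), (0xE0100, 0xE01EF))
# Assumption: Cf (format, e.g. zero-width space) and Co (private use) are suspicious.
SUSPICIOUS_CATEGORIES = {"Cf", "Co"}


def is_invisible(ch: str) -> bool:
    """Return True if the character falls in one of the assumed invisible sets."""
    cp = ord(ch)
    if any(lo <= cp <= hi for lo, hi in VARIATION_SELECTOR_RANGES):
        return True
    return unicodedata.category(ch) in SUSPICIOUS_CATEGORIES


def find_invisible(text: str) -> list[tuple[int, int, str]]:
    """Return (line, column, 'U+XXXX') for each suspicious code point, 1-indexed."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for col, ch in enumerate(line, start=1):
            if is_invisible(ch):
                hits.append((line_no, col, f"U+{ord(ch):04X}"))
    return hits
```

You could run `find_invisible` over each file in a dependency before installing it. Expect false positives: emoji sequences and byte-order marks legitimately use some of these code points, so treat a hit as a reason to look closer, not as proof of malice.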

Introducing the new /crawl endpoint - one API call and an entire site crawled. No scripts. No browser management. Just the content in HTML, Markdown, or JSON.