휘바

583 posts

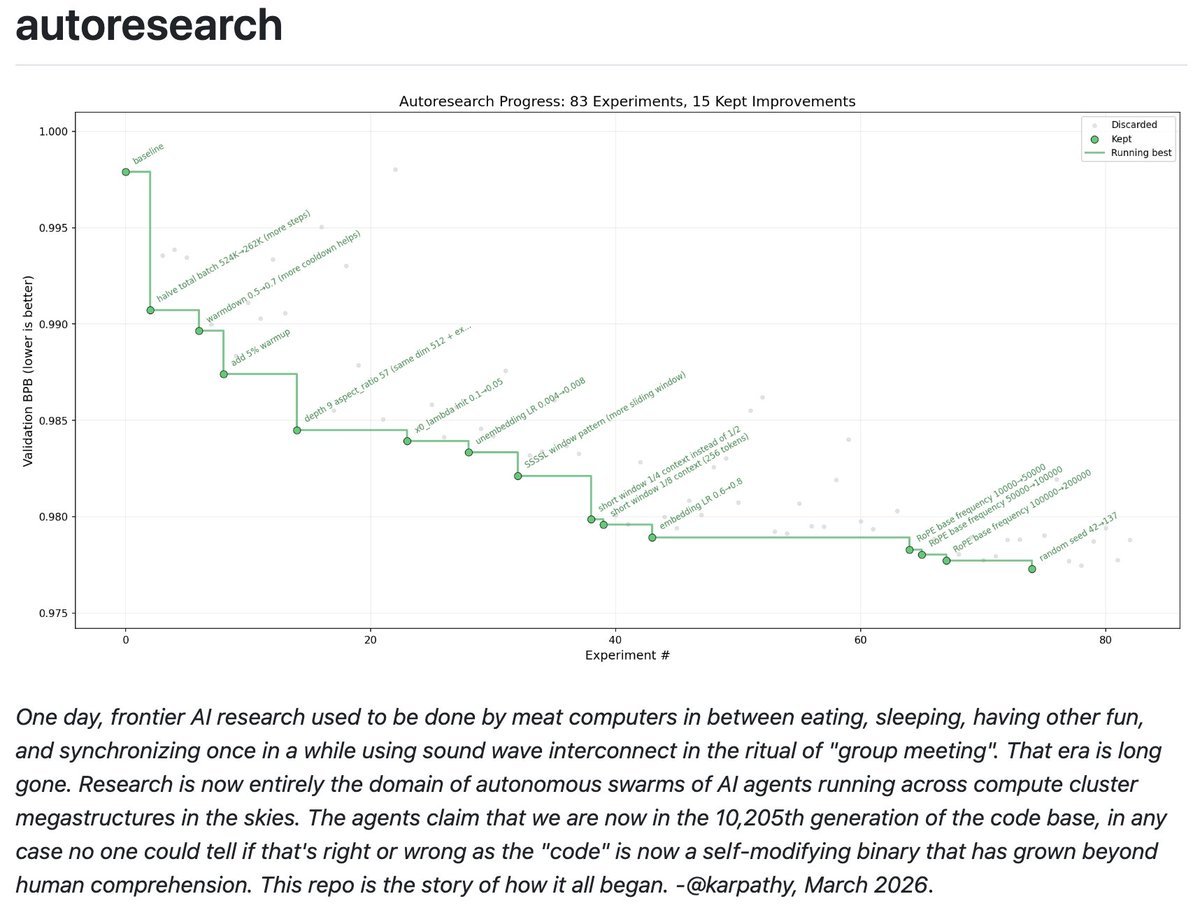

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically the nanochat LLM training core stripped down to a single-GPU, one-file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)
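The loop described in this post (try a change, run a fixed-budget experiment, keep the change only if validation loss drops) can be sketched as a simple accept-if-better search. This is a toy illustration, not the code from the repo: `run_experiment`, `propose`, and `research_loop` are hypothetical names, the "training run" is a made-up quadratic objective standing in for a 5-minute run, and a random tweak stands in for the agent's edit to the training script.

```python
import random


def run_experiment(config):
    """Hypothetical stand-in for one fixed-budget training run.

    Returns a fake validation loss from a toy objective; a real setup
    would launch the training script and read the final validation loss.
    """
    return (config["lr"] - 0.03) ** 2 + (config["depth"] - 8) ** 2 * 1e-4


def propose(config, rng):
    """Hypothetical stand-in for the agent editing the training code."""
    new = dict(config)
    if rng.random() < 0.5:
        new["lr"] = max(1e-4, new["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0]))
    else:
        new["depth"] = max(1, new["depth"] + rng.choice([-1, 1]))
    return new


def research_loop(initial, steps, seed=0):
    """Keep a candidate only when it lowers the validation loss."""
    rng = random.Random(seed)
    best, best_loss = initial, run_experiment(initial)
    history = [best_loss]  # one entry per accepted change ("commit")
    for _ in range(steps):
        candidate = propose(best, rng)
        loss = run_experiment(candidate)
        if loss < best_loss:  # improvement: accept and record it
            best, best_loss = candidate, loss
            history.append(loss)
        # otherwise the candidate is discarded and the search continues
    return best, best_loss, history


start = {"lr": 0.3, "depth": 4}
best, best_loss, history = research_loop(start, steps=200)
print(best, best_loss, len(history))
```

Each entry in `history` plays the role of one accepted commit on the feature branch, so the list is strictly decreasing by construction.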

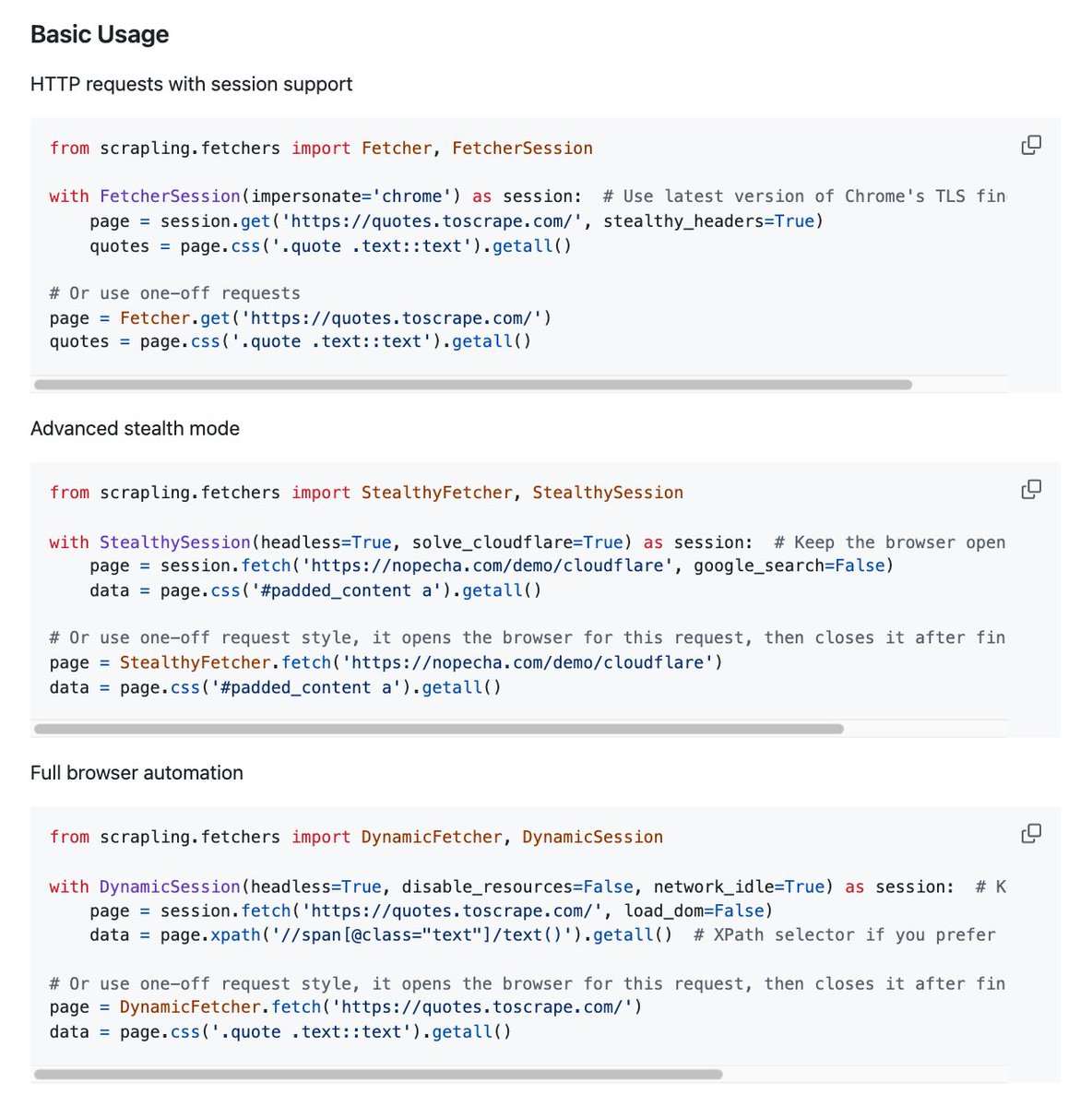

OpenClaw can now scrape any website without getting blocked - zero bot detection, bypasses Cloudflare natively, 774x faster than BeautifulSoup. No selector maintenance. No workarounds. Just data. THIS IS AN UNFAIR ADVANTAGE AND IT'S FULLY OPEN SOURCE.

claude code + obsidian + an agent that builds its own second brain

been deep in this for weeks, that's why i've been so quiet

open sourcing the whole system soon

You need 2 Mac Studios with M3 Ultra to run Kimi K2.5 locally at ~20 TPS. At that speed you can barely run a single agent, not a "swarm" or an "army." Kimi K2.5 costs $1.5/M output via OpenRouter. Even if you max out 20 TPS 24/7, that's ~1.7M tokens/day, which is the equivalent of ~$2.60/day or $78/mo. You spent $20,000 to save $78/month, limited to one agent at a time, zero scalability, and hardware that's obsolete in 12 months. Your claim of "$20,000/month in API calls" is off by a factor of 256. You can drop to smaller models for higher speeds, but the API prices drop just as fast: Grok 4.1 Fast is $0.50/M output.
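The back-of-the-envelope numbers in this post can be reproduced in a few lines. This is only a sketch using the figures quoted in the post (20 tokens/second, $1.5 per million output tokens, $20,000 of hardware) and assuming a 30-day month; the function name `api_cost` is illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def api_cost(tokens_per_second, usd_per_million_tokens, days=30):
    """Cost of buying the same token throughput from an API.

    Returns (tokens per day, USD per day, USD over `days` days).
    """
    tokens_per_day = tokens_per_second * SECONDS_PER_DAY
    usd_per_day = tokens_per_day / 1_000_000 * usd_per_million_tokens
    return tokens_per_day, usd_per_day, usd_per_day * days


tokens_per_day, usd_per_day, usd_per_month = api_cost(20, 1.5)
print(f"{tokens_per_day:,} tokens/day")   # 1,728,000 tokens/day
print(f"${usd_per_day:.2f}/day")          # $2.59/day
print(f"${usd_per_month:.2f}/month")      # $77.76/month

# Ratio between the claimed $20,000/month and the actual API cost.
print(f"{20_000 / usd_per_month:.0f}x")   # 257x
```

With these inputs the daily cost is about $2.59 and the 30-day cost is $77.76; rounding the monthly figure to $78 before dividing gives the factor of 256 quoted in the post.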

Please enjoy this Cheeky Pint / @dwarkesh_sp crossover with @elonmusk. Dwarkesh was most interested in how Elon is going to make space datacenters work. I was most interested in Elon's method for attacking hard technical problems, and why it hasn’t been replicated as much as you might expect. But we got into plenty of topics in this three-hour session.

00:00:23 Space GPUs
00:35:39 Alignment
00:58:48 xAI
01:15:01 Optimus
01:28:03 China
01:40:46 Management
02:16:38 DOGE
02:34:58 Space GPUs redux

ERC-8004: More than just another standard

It's the game-changing directory and trust layer that AI agents have been missing. @DavideCrapis from @ethereumfndn walks through the stack:

- Identity + trust for agents/services
- A public log where agents “advertise capabilities”
- Trust comes two ways: reputation (feedback) + verification (strong proof)