Debesh Mandal

2.9K posts

@debesh

Co-founder and CEO @nanograb (YC S23)

One London office has produced more AI founders than any university on Earth. DeepMind isn't just a lab. It's a founder factory. People who walked through that building in King's Cross have started or co-founded a striking share of Britain's frontier AI companies. A short list: > Isomorphic Labs: Demis Hassabis is still at the helm, applying AlphaFold to drug discovery, now in clinical trials > Cursive: Talfan Evans, ex-DeepMind, building generative AI infrastructure (just backed by Sovereign AI) > Ineffable Intelligence: David Silver, lead author of AlphaGo and AlphaZero, spent 13 years at DeepMind > Wayve: founded in Cambridge but deeply intertwined with DeepMind research alumni. Plus, founders and senior teams at Reka, Cohere, Stability AI and several others trace back to DeepMind in some way. Stanford CS produces about 200 AI graduates per year. DeepMind has employed roughly 1,500 researchers in its history. The output-per-person ratio for frontier company founders is extraordinary.

FinalDose is building the first programmable drug platform - a single smart drug molecule that finds diseased cells by their DNA and destroys them. They're starting with all cancers. Congrats on the launch, @Jeffliu6068Liu, @sklin_lite, and @liyaohuang2! ycombinator.com/launches/QKj-f…

Some progress in lightning: quantamagazine.org/what-causes-li….

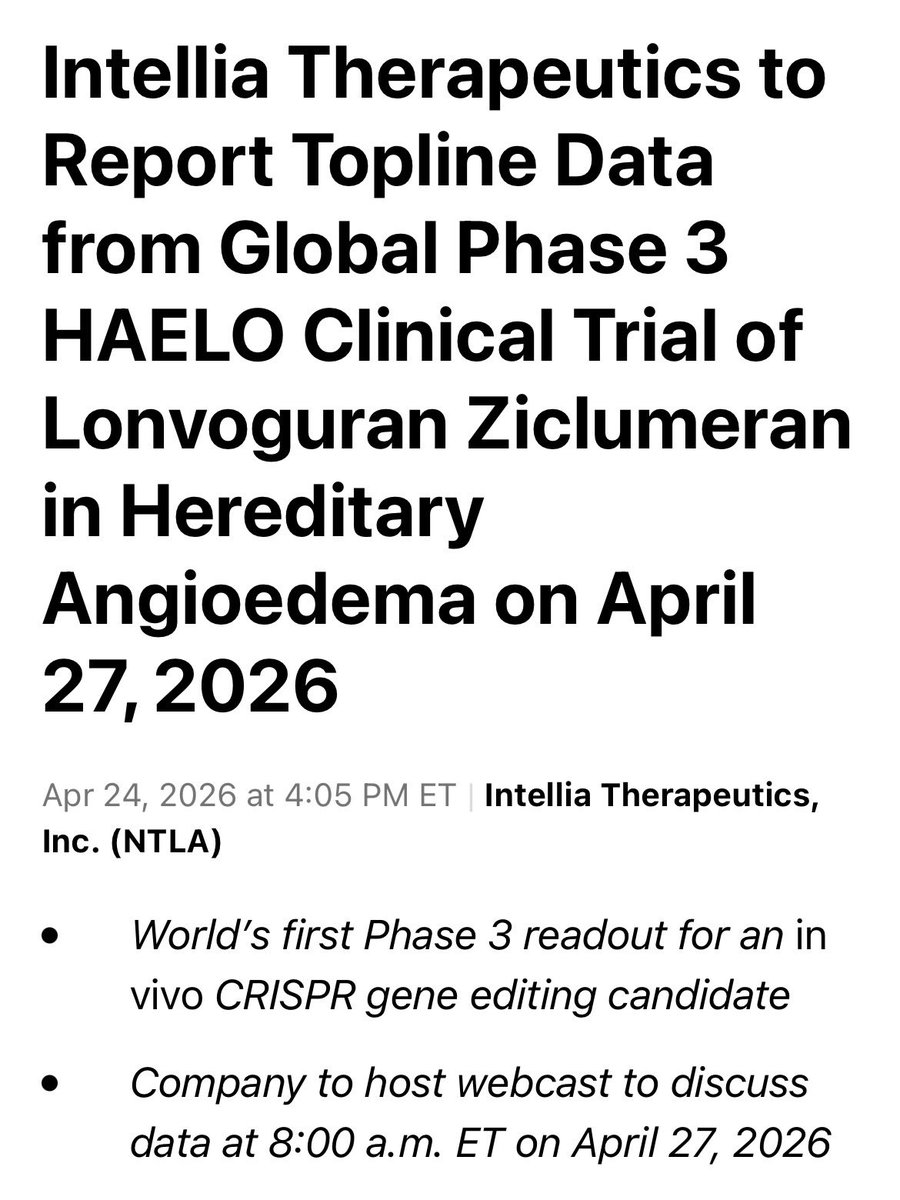

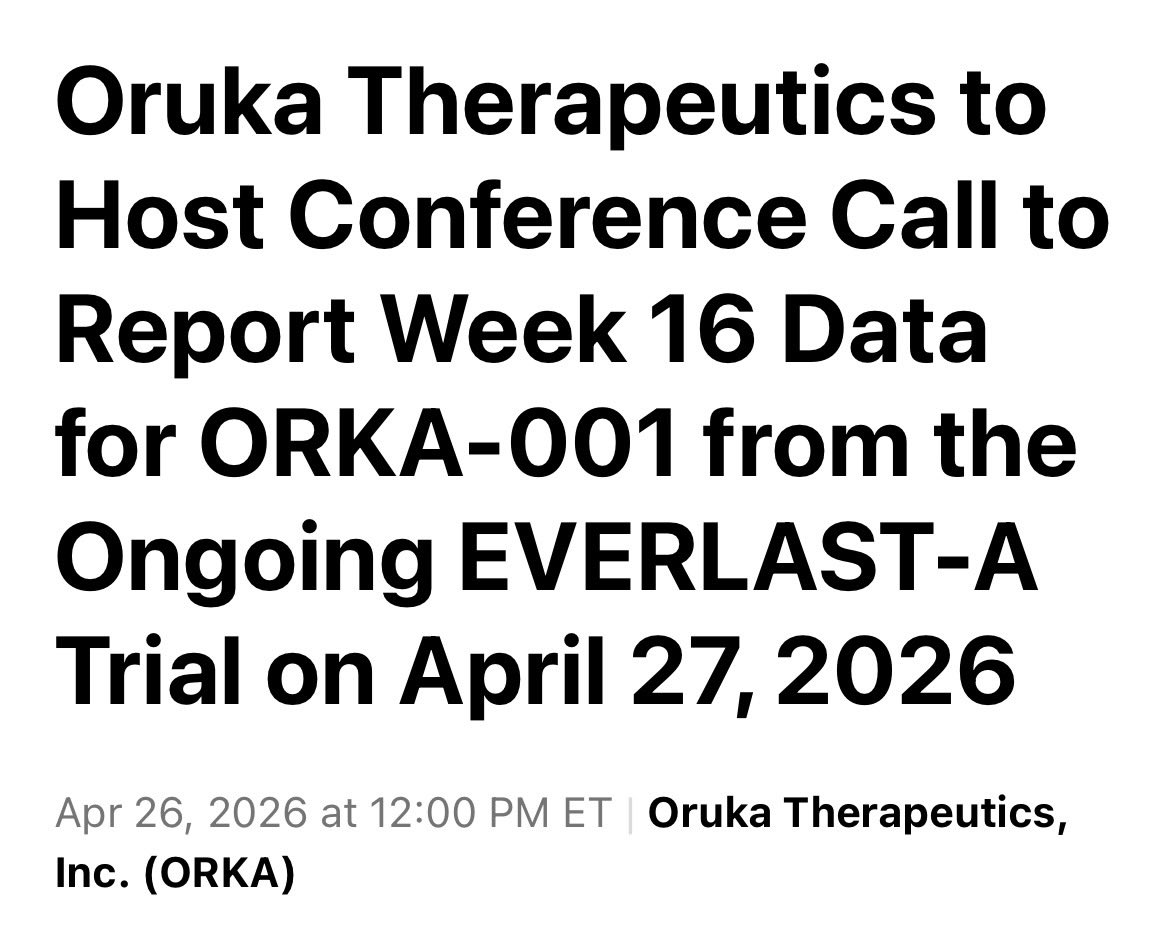

Congratulations to anyone who is able to raise large sums of capital in biotech. It’s an extremely hard, brutal sector. We need all the good news we can get. I do not enjoy seeing fellow biotech’ers rip each other down, which I’m seeing a lot of right now. Sure, continue to call out misinformation, false data, fraud, and the like. Be rigorous and ruthless. But realize we are all on the same team at the end of the day. Don’t waste your energy on infighting.

unfathomable how much bio data will be required to match LLM performance in coding/text even if it was readily available. huge opportunity here

Exactly - biology is a fundamentally different domain than text, and scaling laws do not apply cleanly. ~All the tasks you want an LLM to do are contained in the text data itself. For biology, NONE of the tasks you want the model to do are contained in the sequence data itself.

Helix-02 running simultaneously on 2 robots, fully onboard, doing a full bedroom reset from pixels-to-actions. To be clear, there's no explicit messaging between these robots, they coordinate their actions fully visually, e.g. head nods. 1x speed, fully autonomous, no teleop.

Never read anything quite like The Singularity Is Near. In 2004, Ray's writing stuff like "Sometime in the 2020s, we'll have AI that can begin work on their own successors" and his evidence is basically a straight line time series of milestones starting with fire, the wheel, etc.