Deepak Vijaykeerthy

@deepakvijayke

Research & Engineering @IBM

🤯🤯🤯! After battling a nasty NCCL bug, we finally have crazy, crazy results. That's MFU adjusted for the causal mask. I need to recheck my numbers because that's crazy high.

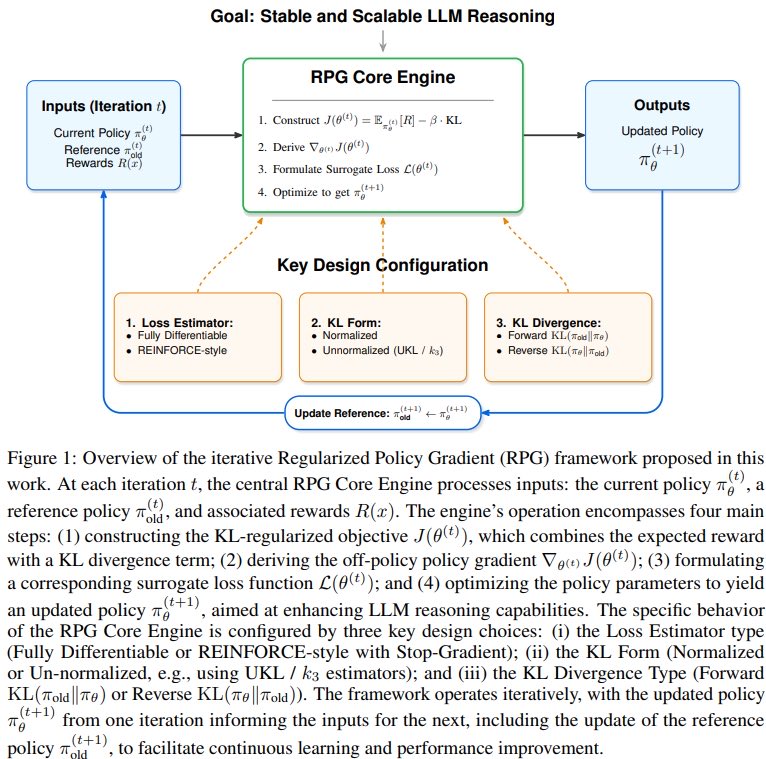

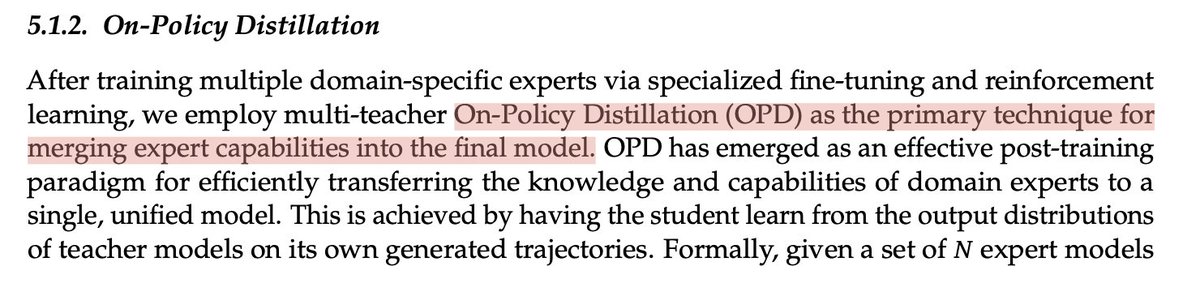

Entropy is H(p) = E_p[-log p(x)], your optimal expected code length when you know p. Shannon coding assigns symbol x a length of -log p(x), and on average you can't beat it.

Now suppose you don't know p. You believe it's q, so you build a code where x has length -log q(x). But the data is actually drawn from p, so your expected code length is:

H(p, q) = E_p[-log q(x)] = -sum over x of p(x) * log q(x)

That's cross-entropy: the bits you actually pay using q's codebook on p's data.

KL is just the gap between what you pay and the optimum:

KL(p || q) = H(p, q) - H(p) = sum over x of p(x) * log( p(x) / q(x) )

Or as a one-liner you could literally run:

kl = sum(p[x] * log(p[x] / q[x]) for x in support)

The story in three lines: H(p) is the floor, H(p, q) is what you actually pay with the wrong codebook, and KL is the penalty, which is always ≥ 0 because you can't beat the optimal code.

- Will Brown & Claude Opus 4.7
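To make the three quantities concrete, here is a minimal Python sketch that checks the identity KL(p || q) = H(p, q) - H(p) on a toy example. The distributions `p` and `q` and the function names are made up for illustration, logs are taken base 2 so the results are in bits, and the code assumes q(x) > 0 wherever p(x) > 0.

```python
import math

def entropy(p):
    """H(p): expected code length in bits with the optimal code for p."""
    return -sum(px * math.log2(px) for px in p.values() if px > 0)

def cross_entropy(p, q):
    """H(p, q): expected code length in bits using q's codebook on data from p.
    Assumes q[x] > 0 for every x with p[x] > 0."""
    return -sum(px * math.log2(q[x]) for x, px in p.items() if px > 0)

def kl_divergence(p, q):
    """KL(p || q): extra bits paid for coding with q instead of p."""
    return sum(px * math.log2(px / q[x]) for x, px in p.items() if px > 0)

# Hypothetical toy distributions over three symbols.
p = {"a": 0.5, "b": 0.25, "c": 0.25}   # true distribution
q = {"a": 0.25, "b": 0.25, "c": 0.5}   # mistaken belief

h_p = entropy(p)            # the floor
h_pq = cross_entropy(p, q)  # what you actually pay
kl = kl_divergence(p, q)    # the penalty

print(f"H(p)      = {h_p:.2f} bits")
print(f"H(p, q)   = {h_pq:.2f} bits")
print(f"KL(p||q)  = {kl:.2f} bits")
```

With these toy numbers the floor is 1.5 bits, the wrong codebook costs 1.75 bits, and the penalty is the 0.25-bit difference.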

Can RL agents learn directly from real-world feedback? Join us at the RLxF (RL from World Feedback) Workshop @ ICML 2026 (Jul 10) to find out! 📄 Submit full papers (8p) and proof-of-concept short papers (2–4p) by May 13 AoE. 🔗 sites.google.com/view/rlxf-icml…

Traditional inference wasn’t built for agentic coding. Agentic tools make hundreds of API calls per coding session, often with recomputed context, creating bottlenecks that drive up cost per token. NVIDIA Dynamo rebuilds the stack for agents with:

→ KV-aware routing
→ Agent-aware scheduling
→ Multi-tier caching
→ Unified orchestration

The result: higher cache hit rates, lower latency, and up to 7× more throughput: nvda.ws/3P1tO1N

Wish to build scaling laws for RL but not sure how to scale? Or what scales? Or would RL even scale predictably? We introduce: The Art of Scaling Reinforcement Learning Compute for LLMs