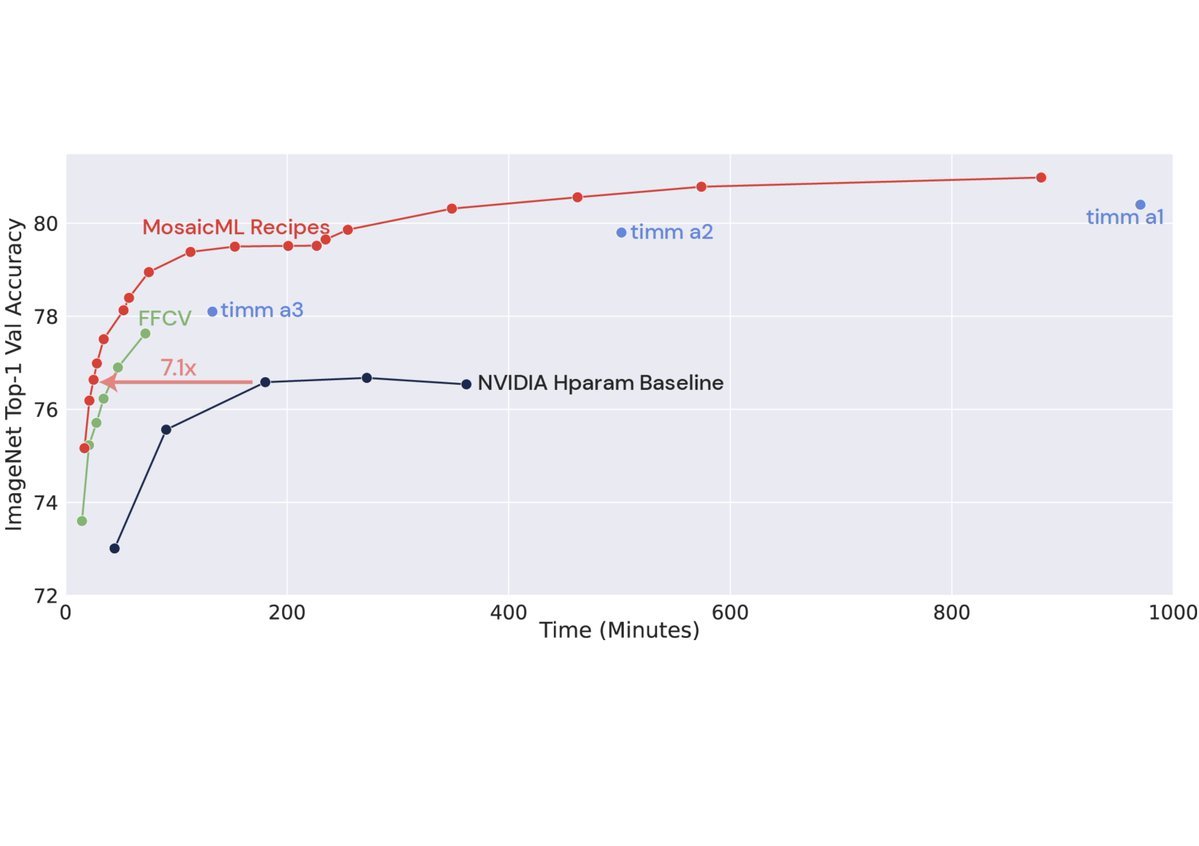

Muon is having its moment — Kimi K2, GLM 5, and now DeepSeek V4!

More broadly, it feels like the time for advanced optimizers is finally here, a reminder that they are an important component of efficient training systems at scale!

Our recent work: performant Muon/SOAP-class optimizers in NVIDIA NeMo/Megatron-Core, built on a layer-wise distributed optimizer, TP-aware Newton-Schulz, and SYRK kernels. Muon ≥ AdamW on GB300-NVL72.

developer.nvidia.com/blog/advancing…
