Yuhao Dong

150 posts

@dyhTHU

PhD student - MMLab@NTU, advised by Prof. Ziwei Liu @liuziwei7. Prev. @Tsinghua_Uni. Multimodal Learning

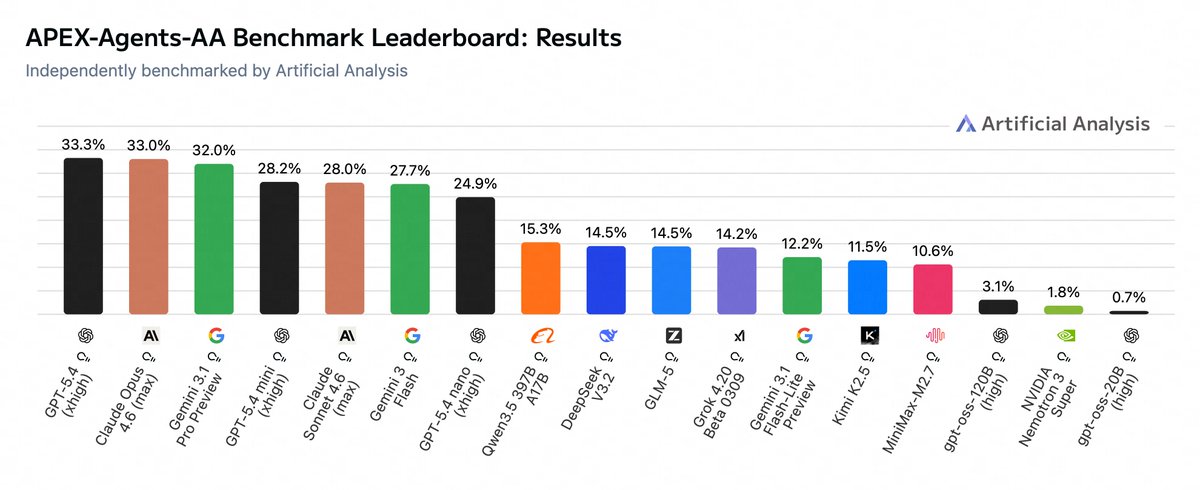

Meet Kimi K2.6: Advancing Open-Source Coding
🔹Open-source SOTA on HLE w/ tools (54.0), SWE-Bench Pro (58.6), SWE-bench Multilingual (76.7), BrowseComp (83.2), Toolathlon (50.0), Charxiv w/ python (86.7), Math Vision w/ python (93.2)
What's new:
🔹Long-horizon coding - 4,000+ tool calls, over 12 hours of continuous execution, with generalization across languages (Rust, Go, Python) and tasks (frontend, devops, perf optimization).
🔹Motion-rich frontend - Videos in hero sections, WebGL shaders, GSAP + Framer Motion, Three.js 3D.
🔹Agent Swarms, elevated - 300 parallel sub-agents × 4,000 steps per run (up from K2.5's 100 / 1,500). One prompt, 100+ files.
🔹Proactive Agents - K2.6 model powers OpenClaw, Hermes Agent, etc. for 24/7 autonomous ops.
🔹Claw Groups (research preview) - bring your own agents, command your friends', bots & humans in the loop.
K2.6 is now live on kimi.com in chat mode and agent mode. For production-grade coding, pair K2.6 with Kimi Code: kimi.com/code
🔗 API: platform.moonshot.ai
🔗 Tech blog: kimi.com/blog/kimi-k2-6
🔗 Weights & code: huggingface.co/moonshotai/Kim…

🔥 Excited to share Video-MME-v2! 🔥
We built it to tackle a growing issue: video understanding benchmarks are getting saturated.
🏃🏻 Over 3,300 human-hours, nearly a year of effort
🌟 A new design with a progressive hierarchy + group-based nonlinear evaluation
What we found:
👉 Human: 90.7 vs
👉 Gemini-3-Pro: 49.4
The gap is still huge.
Explore more at:
Page: video-mme-v2.netlify.app
Paper: arxiv.org/pdf/2604.05015

Today we’re introducing Muse Spark, our most powerful model yet, giving you a faster and smarter Meta AI. Muse Spark currently powers the Meta AI app and website and will be rolling out to @whatsapp, @Instagram, @facebook, @messenger, and AI glasses in the coming weeks. about.fb.com/news/2026/04/i…

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. anthropic.com/glasswing