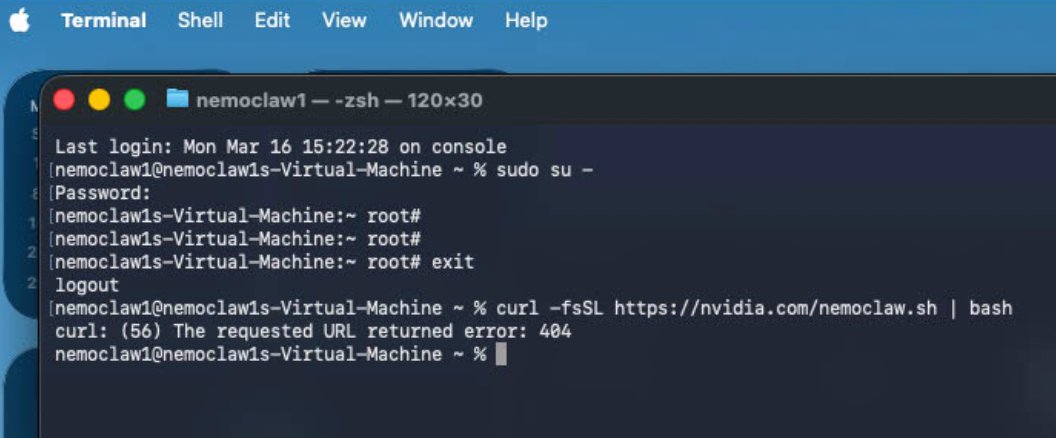

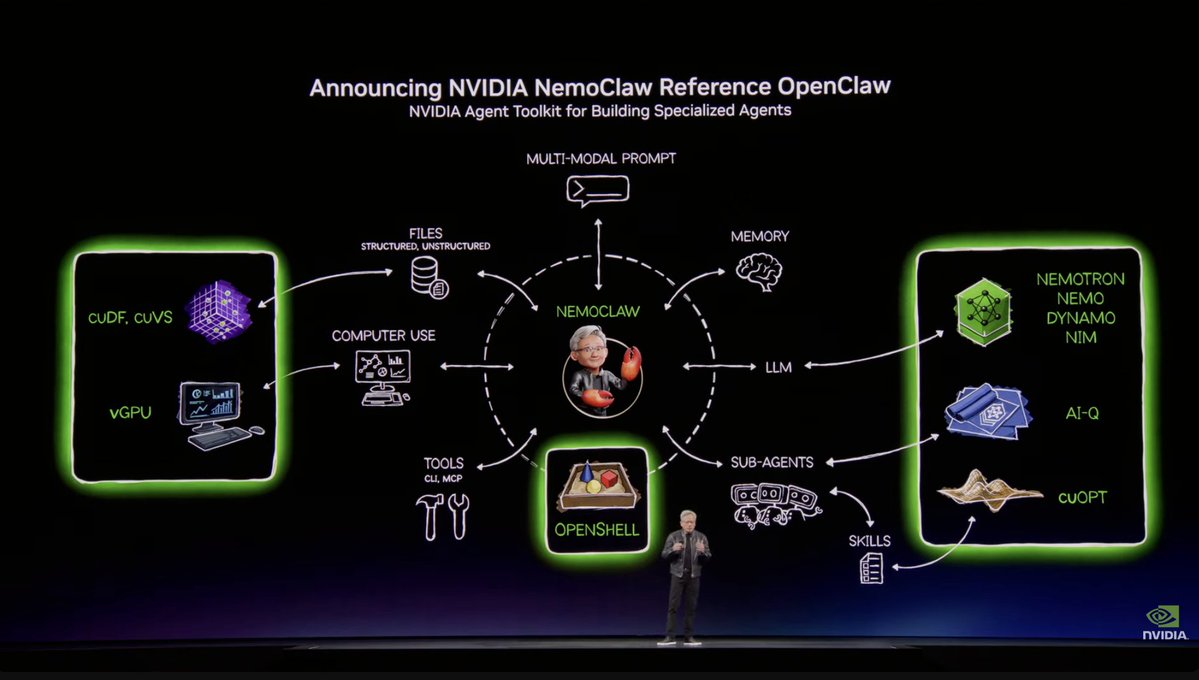

The "static course" is dead. If you’re learning AI from a pre-recorded video, you’re already behind. We’re building openmobius.ai because the industry moves too fast for "fundamentals" to stay fixed. It is a self-evolving, agent-driven education loop where the project is the curriculum. A collaboration between father and son: @easthambuilds @DanielEastham3