eavaria

7.6K posts

@eavaria

see my tweets :)

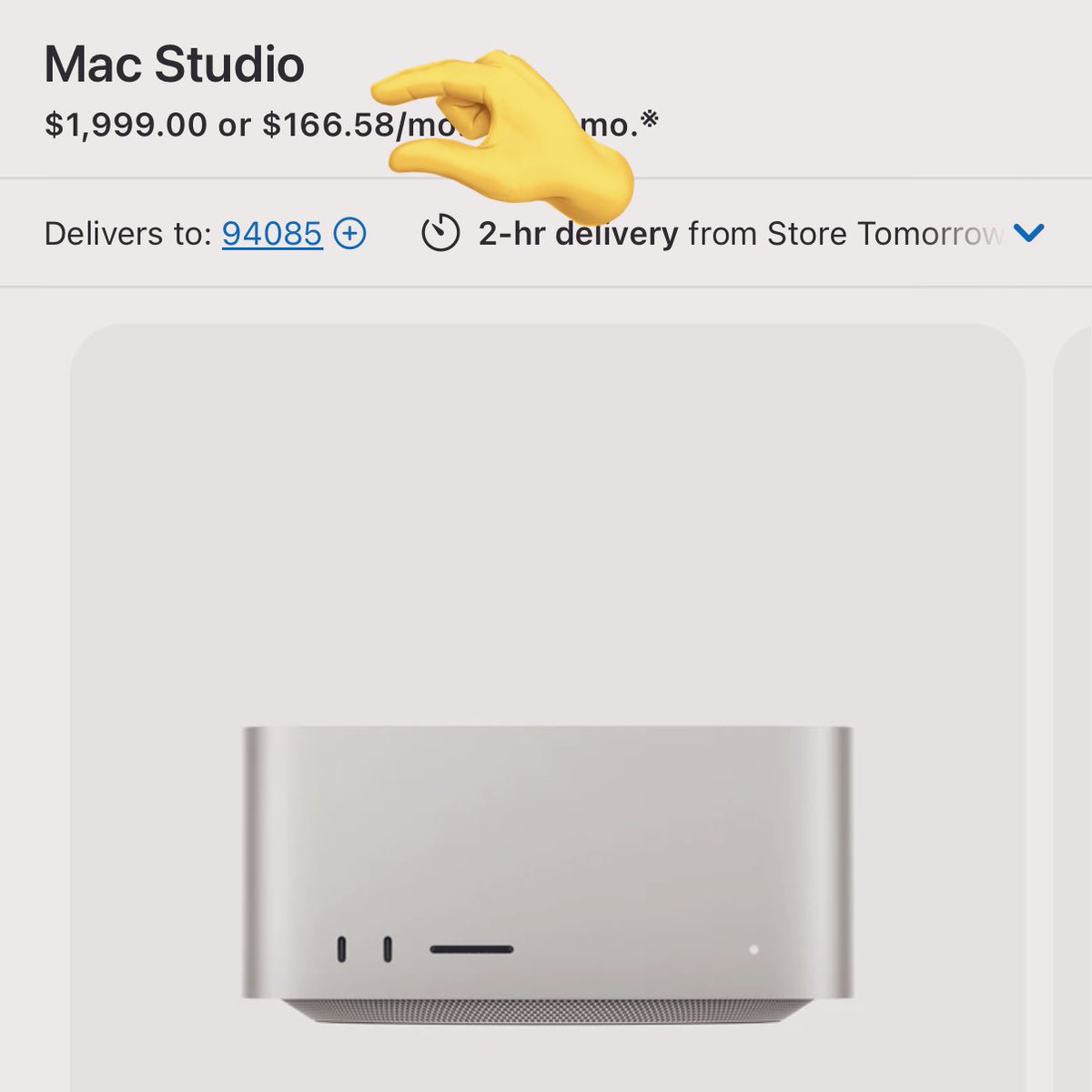

@TheAhmadOsman I just hit 53 tok/s with Qwen-3.5-35B-A3B (Q4_K_S) at FULL 1M token context running fully on a single 5090. Is this the new king of consumer inference? Thought you'd be proud! Settings in comments. OWN YOUR OWN COMPUTE! Well done @Alibaba_Qwen, can't say it enough!

tested Qwen3.5-35B-A3B on a single RTX 3090 and it flew. 112 tokens per second. zero tuning. default config. all 41 layers on GPU with 4GB VRAM to spare. for context: the 80B coder-next did 1.3 tok/s on this same card. needed two 3090s to hit 46 tok/s. this model just did 112 on one. same 3B active params. half the total weight. 19.7GB on disk instead of 45. the math was obvious but the result still caught me off guard. flash attention enabled itself automatically. KV cache quantization, expert offloading, thread tuning, none of that applied yet. this is baseline. full optimization breakdown and benchmark results dropping soon. if default settings do 112 tok/s, i want to see where the ceiling is. exact hardware specs in the image below.

JUNE 2028. The S&P is down 38% from its highs. Unemployment just printed 10.2%. Private credit is unraveling. Prime mortgages are cracking. AI didn’t disappoint. It exceeded every expectation. What happened? citriniresearch.com/p/2028gic

I don't meddle in other people's parenting. But when I see two-year-olds watching cartoons on a phone on the bus, in a café, or in a waiting room, I feel like slapping their parents.