Justin

@edtech808

educational technologist, gr 6-12

"We already have universal basic income. It's called knowledge work."

Max Schoening (@mschoening) is one of the deepest thinkers on how AI is changing how we build and use software. He's also what the future of product leadership looks like. He's head of product at @NotionHQ, was VP of Design (and a part-time engineer) at @GitHub, head of design at @Heroku, a PM at Google, and a 2x founder.

In our in-depth conversation, we discuss:

🔸 What’s worked in getting designers and PMs to fully embrace AI
🔸 Why agency—not skills—is the thing that separates people who will thrive
🔸 Max’s “tiny core” theory of great products: iPhone multitouch, the GitHub pull request, Dropbox’s menu bar icon
🔸 How the first 10% of every project is now “free,” and what that means for product development
🔸 Why the amount of software has exploded but the quality hasn’t, and why that gap creates opportunity

Listen now 👇 youtu.be/mCO-D3pkviM

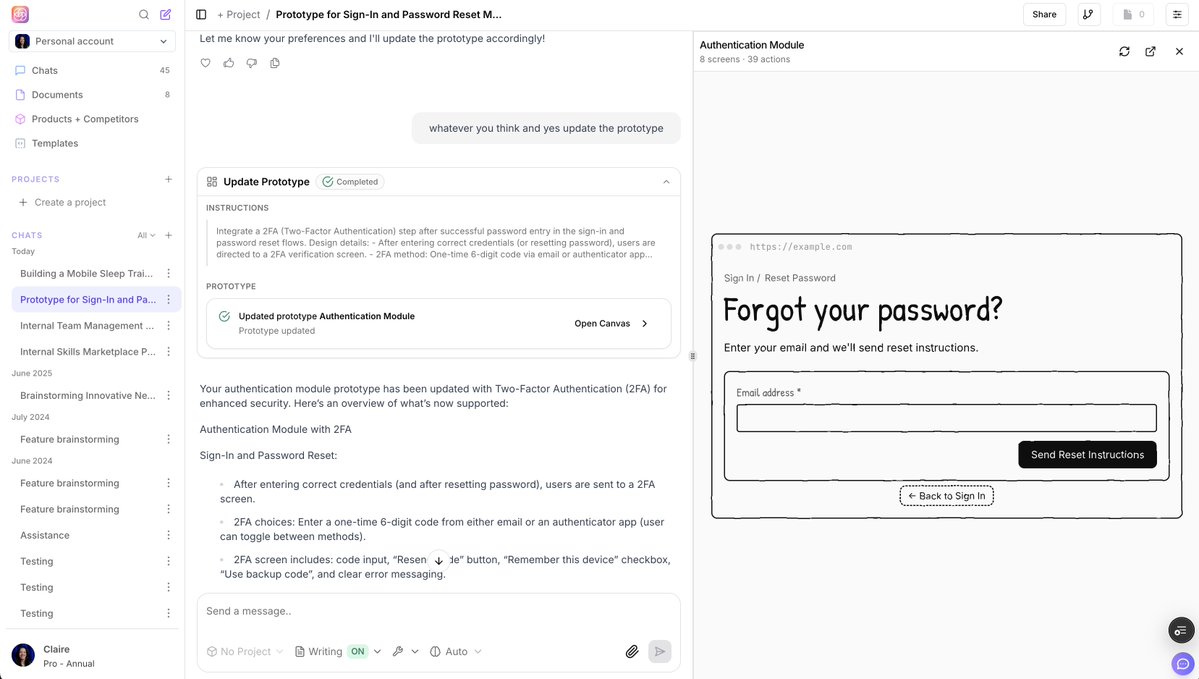

What if chat is the wrong interface for managing agents? In this talk, @steveruizok shows what changes when agents move onto a shared canvas instead of staying trapped in a linear thread. Using tldraw's Fairydraw experiment, where users collaborated with three "fairies" coordinating with both humans and each other, this is a practical look at what spatial interfaces make possible for agent workflows. youtube.com/watch?v=sPUjIB…

Self-soothing is a skill that can be taught and practiced (like explicitly taught, not just leaving kids alone to develop unhealthy coping mechanisms), and the better kids get at it, the calmer daily life becomes. One trick your kids won't see coming: When the kid says "I can't calm down!" offer them ice cream if they can be calm. You only need to do this once, because then any other time they say they can't calm down, you can remind them "Remember that time when I offered you ice cream and you immediately calmed down? That means you're capable of doing it. So let's do it now." (In the context of the original post, the answer is: dinner is now late/pizza so we can use this time to help the 2yo and 4yo practice their self-soothing skills)