Eric Auld

4.8K posts

@ericauld

Performance and kernels at Together AI

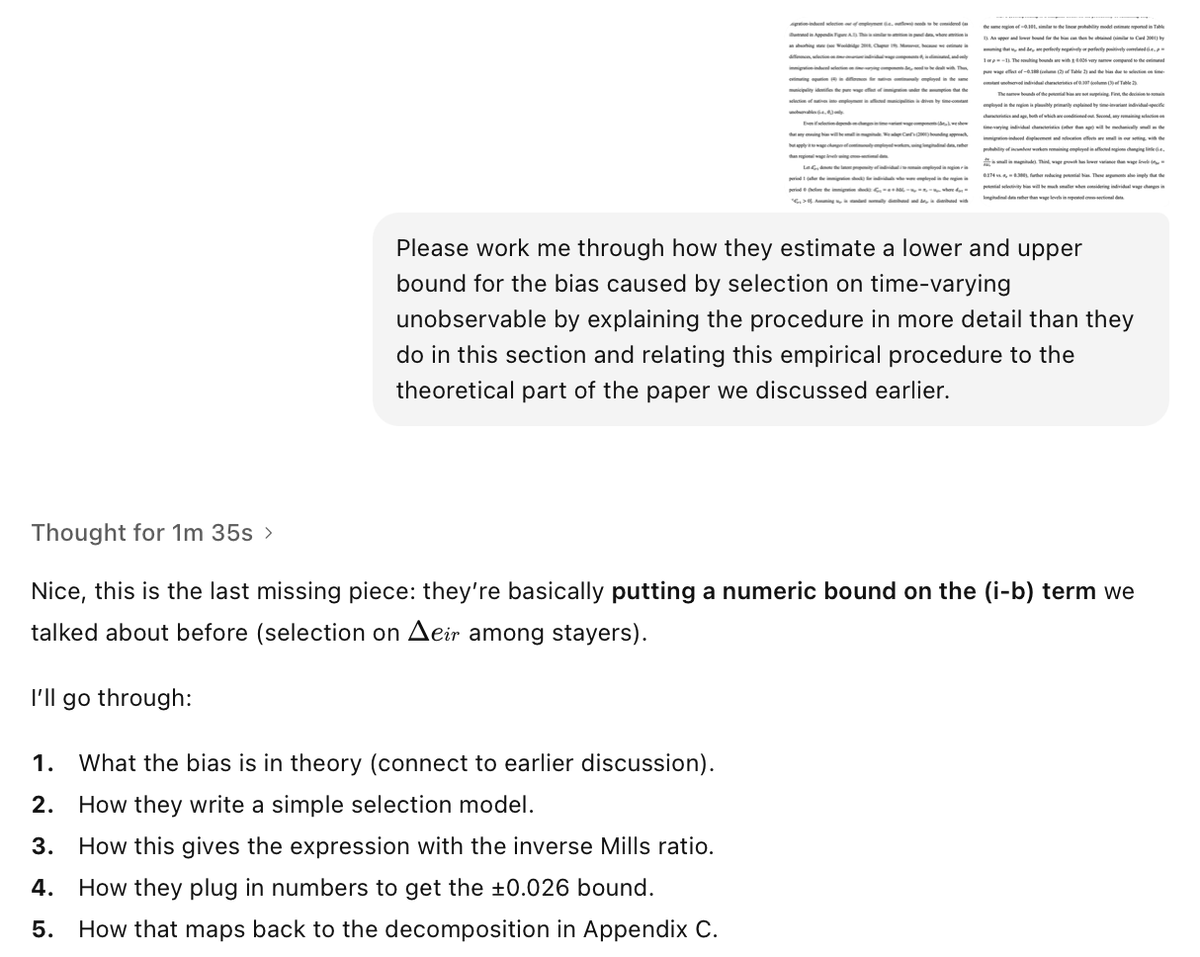

Would be curious to read a post from @DKokotajlo explaining why his timelines have lengthened. The question I find myself asking, though, is: might the prediction error be not just about forecasting timelines incorrectly, but also about flawed assumptions regarding the nature of “AGI” and “superintelligence” themselves? In my recent debate with @tegmark and many similar debates I’ve had over the past two years, this, rather than timelines alone, has been the crux.

Typically in these debates I will argue something like “you are misapprehending the nature of ‘intelligence’ if you think ‘being really intelligent’ means ‘possessing the will and ability to dominate the world.’” This remains my view. Intelligence is powerful, but it is far from magic.

Skilled forecasters can carefully model how inputs like data, compute, and the like will grow. They can extrapolate the straight lines on graphs. But all that careful modeling can still prove misleading if the forecast is based on incorrect assumptions about the nature of intelligence itself. I would encourage those who have high p(doom)s to consider whether it is not just timelines worth revising, but basic assumptions about what it is we appear to be building.

Now for my caveats: none of this means “AI capabilities are leveling off.” Over the coming years I expect that AI will improve faster than the vast majority of Americans anticipate. It will be an incredibly powerful and consequential technology, very possibly the most consequential development in many centuries or longer. It’s still possible that AI could cause serious job loss, though the above notes on the nature of intelligence should factor into your analysis here. There are other novel risks, too, about which I have said plenty.

None of this is to downplay all concerns, risks, etc. Instead I am specifically countering the line of thinking behind the superintelligence ban, and any other argument rooted in “doom-y” assumptions about intelligence.

Allen Hatcher, @Cornell, will receive the inaugural Elias M. Stein Prize for Transformative Exposition for his book Algebraic Topology. Read More: ams.org/news?news_id=7…