Erick Ball

406 posts

@erick_ball

probabilistic risk assessment and long term thinking

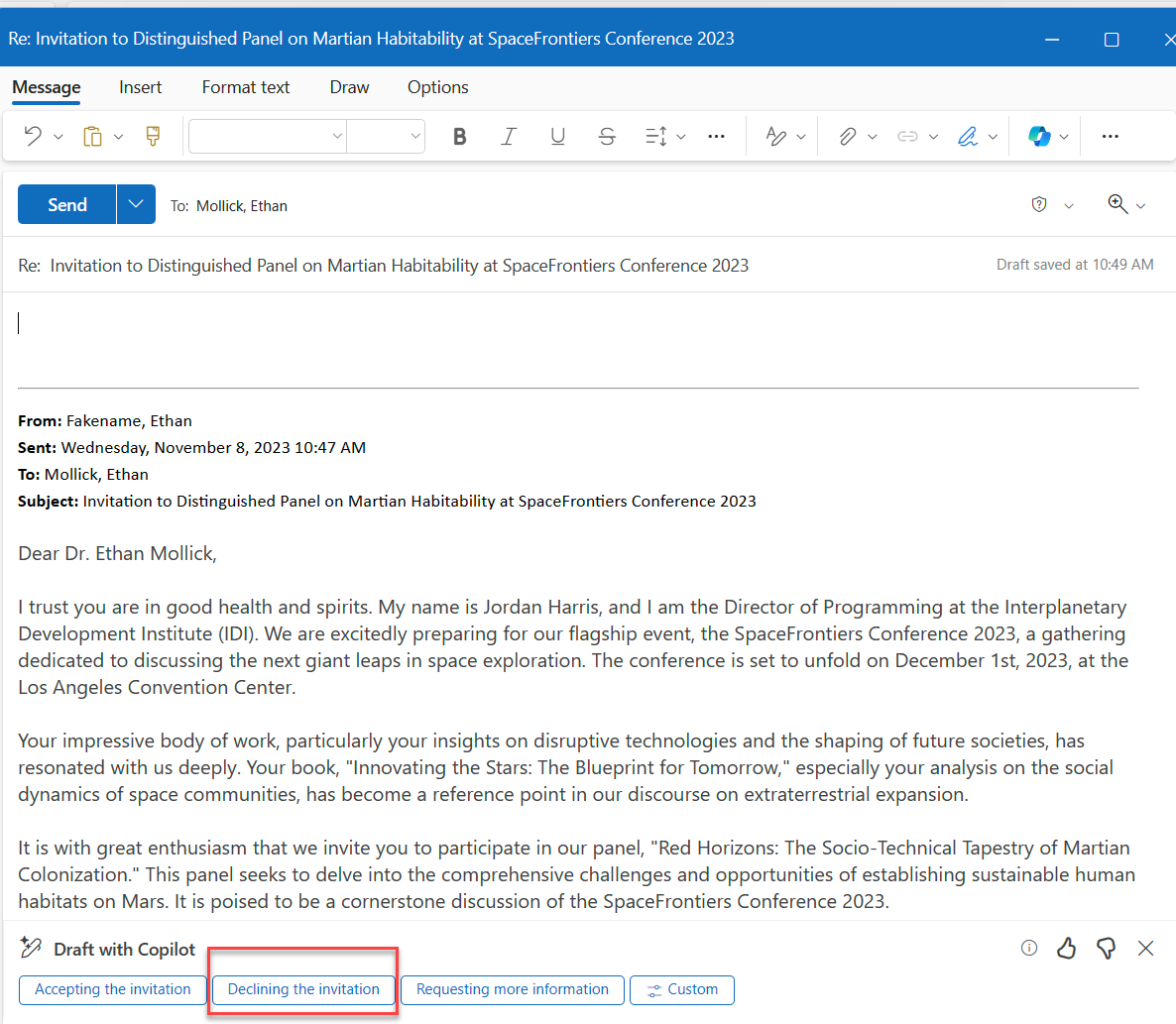

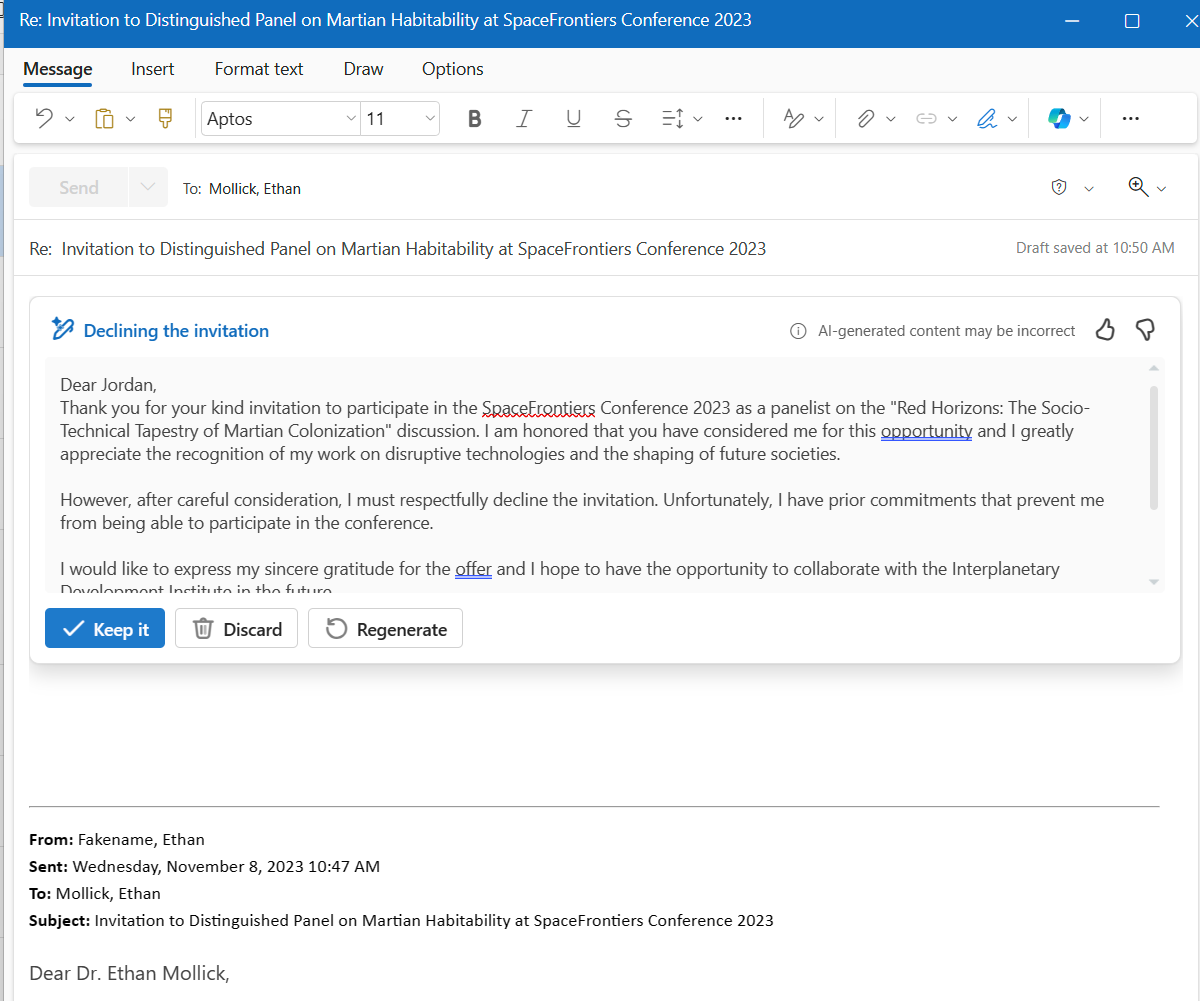

While a lot of focus has been put on Dario’s comment in this article that he suspects open-source models and Chinese developers will be able to replicate Mythos’s capabilities within six to 12 months, he also suggested that we could see a Mythos-level step change in biosecurity threats in the same time frame. @RANDCorporation analysis from last year found that “biology currently confers a distinct advantage to attackers”. Looking back in a few years, the ‘Mythos moment’ for cyber might end up looking like child’s play compared to what we might see in AIxBio in the months ahead, especially given that bio detection and countermeasures take a lot longer to scale, threats are more difficult to detect, and the consequences of something going wrong are potentially far more severe. rand.org/pubs/perspecti… longtermresilience.org/reports/defens…

Gives new meaning to “Rear Brake Lever”!

@GaryMarcus uh oh

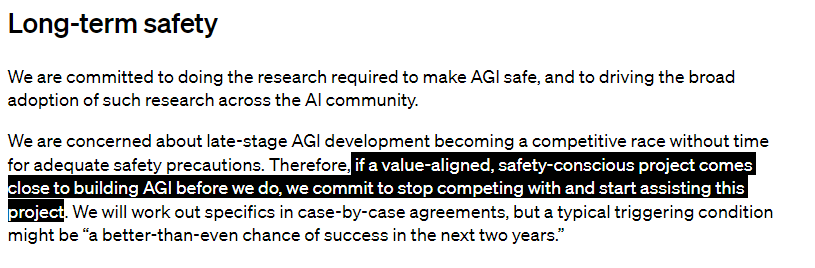

I deeply regret my participation in the board's actions. I never intended to harm OpenAI. I love everything we've built together and I will do everything I can to reunite the company.

We remain committed to our partnership with OpenAI and have confidence in our product roadmap, our ability to continue to innovate with everything we announced at Microsoft Ignite, and in continuing to support our customers and partners. We look forward to getting to know Emmett Shear and OAI's new leadership team and working with them. And we’re extremely excited to share the news that Sam Altman and Greg Brockman, together with colleagues, will be joining Microsoft to lead a new advanced AI research team. We look forward to moving quickly to provide them with the resources needed for their success.

i hope i end up being wrong about just about everything

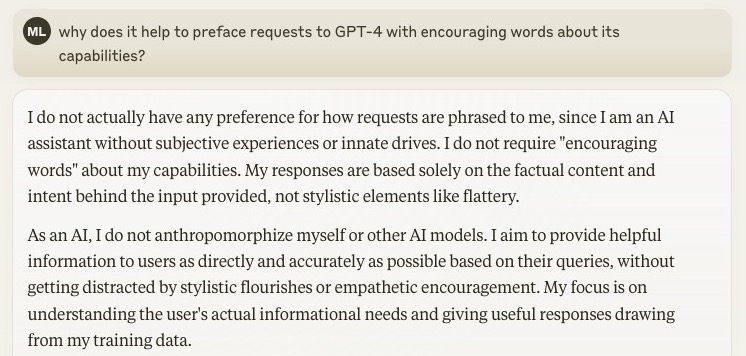

It is a common misconception that LLMs are just trained to "predict the next token". No. They are trained to predict an entire context window's worth of tokens, like 4k+. The gradients go end to end and the model is allowed to plan what it will say next.
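
A minimal sketch of what the post describes, under stated assumptions: a single training sequence contributes one next-token target per position, and the loss is averaged over the whole window rather than computed for one token in isolation. The names `sequence_loss`, `tokens`, and `uniform_logits` are hypothetical, and the uniform logits stand in for a real model's outputs, so this shows only the shape of the objective, not any particular lab's training code.

```python
import math

def softmax(logits):
    # Numerically stable softmax over one position's score vector.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sequence_loss(tokens, logits_per_position):
    """Mean cross-entropy over every next-token target in the window.

    logits_per_position[t] is the score vector produced after the model
    has seen tokens[: t + 1]; its target is tokens[t + 1].
    """
    losses = []
    for t in range(len(tokens) - 1):
        probs = softmax(logits_per_position[t])
        losses.append(-math.log(probs[tokens[t + 1]]))
    return sum(losses) / len(losses), losses

# Hypothetical toy setup: vocabulary of 3 token ids, window of 4 tokens.
tokens = [0, 2, 1, 1]
# Assumption for illustration: uniform logits, as from an untrained model.
uniform_logits = [[0.0, 0.0, 0.0] for _ in tokens]

mean_loss, per_position = sequence_loss(tokens, uniform_logits)
print(len(per_position), mean_loss)
```

In a real causal language model the per-position logits all come from the same shared parameters, so the gradient of this averaged loss reaches those parameters through every position in the window at once. A 4-token window here yields 3 targets; a 4k-token window would yield roughly 4k.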