Ericlamideas

9.8K posts

@ericlamideas

Building things on the computer

Since we open-sourced pi-autoresearch, @Shopify teams have been running it on everything. Results so far: Unit tests: 300x faster. React component mounting: 20% faster. CI build time: 65% reduction. Made pnpm run faster. Autoresearch never stops trying things you'd never have time to try. Repo: github.com/davebcn87/pi-a…

Introducing Steer AI. We made an AI that can't stop thinking about any concept you choose, by steering a model's internal representations at inference time. Ask it anything, and watch it bend reality around that concept. Available for one week only.

New Anthropic research: Emotion concepts and their function in a large language model. All LLMs sometimes act like they have emotions. But why? We found internal representations of emotion concepts that can drive Claude’s behavior, sometimes in surprising ways.

I’ve heard from 2 people in the last 2 days that internally Anthropic expects to have AGI in 6-12 months. That’s faster than Dario has stated publicly. Plan your business and personal finances appropriately.

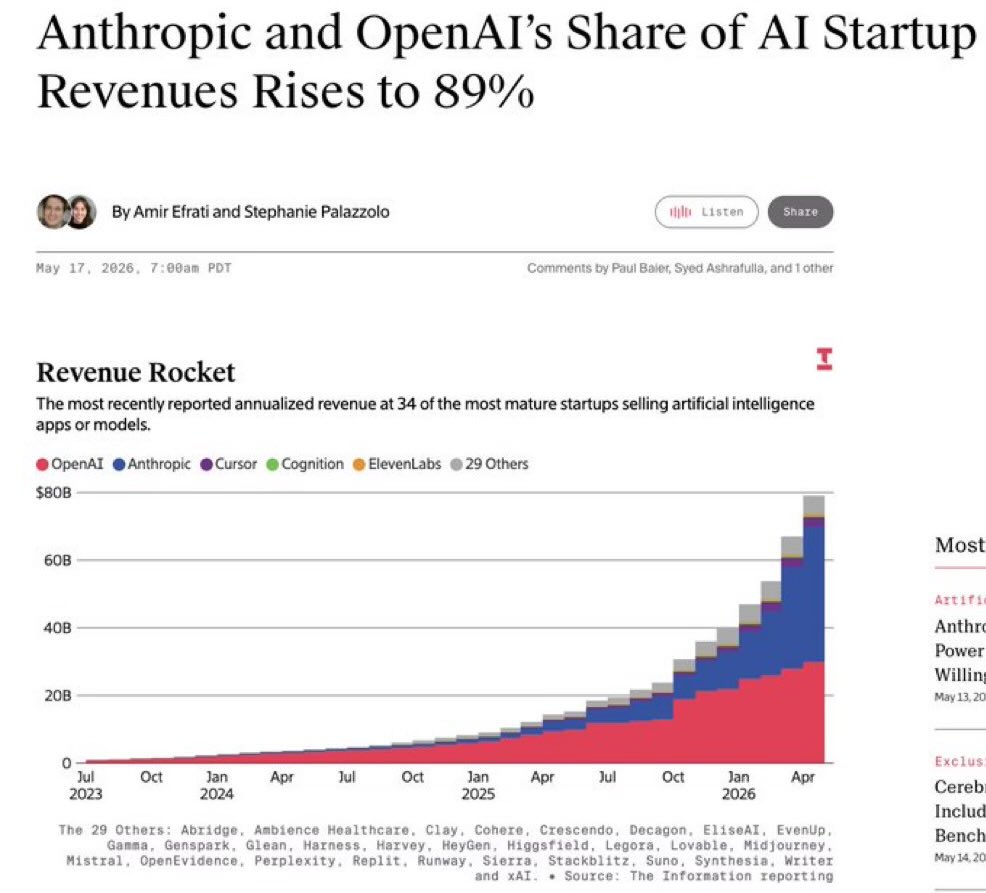

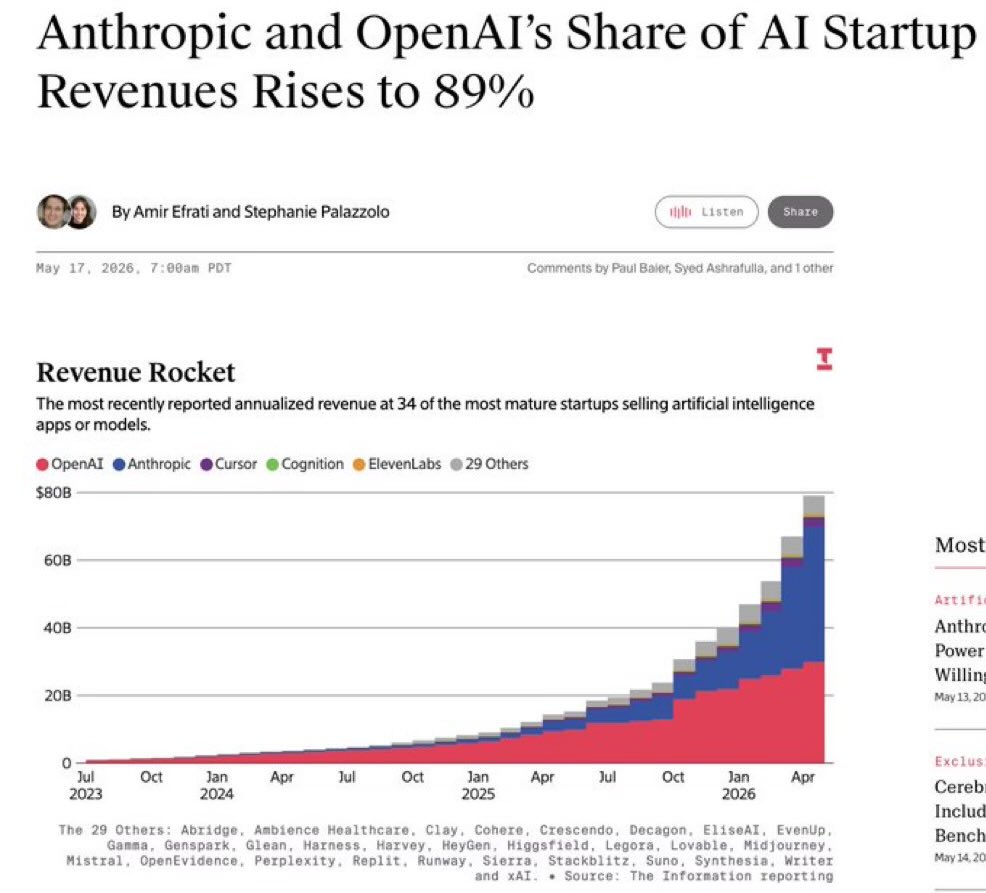

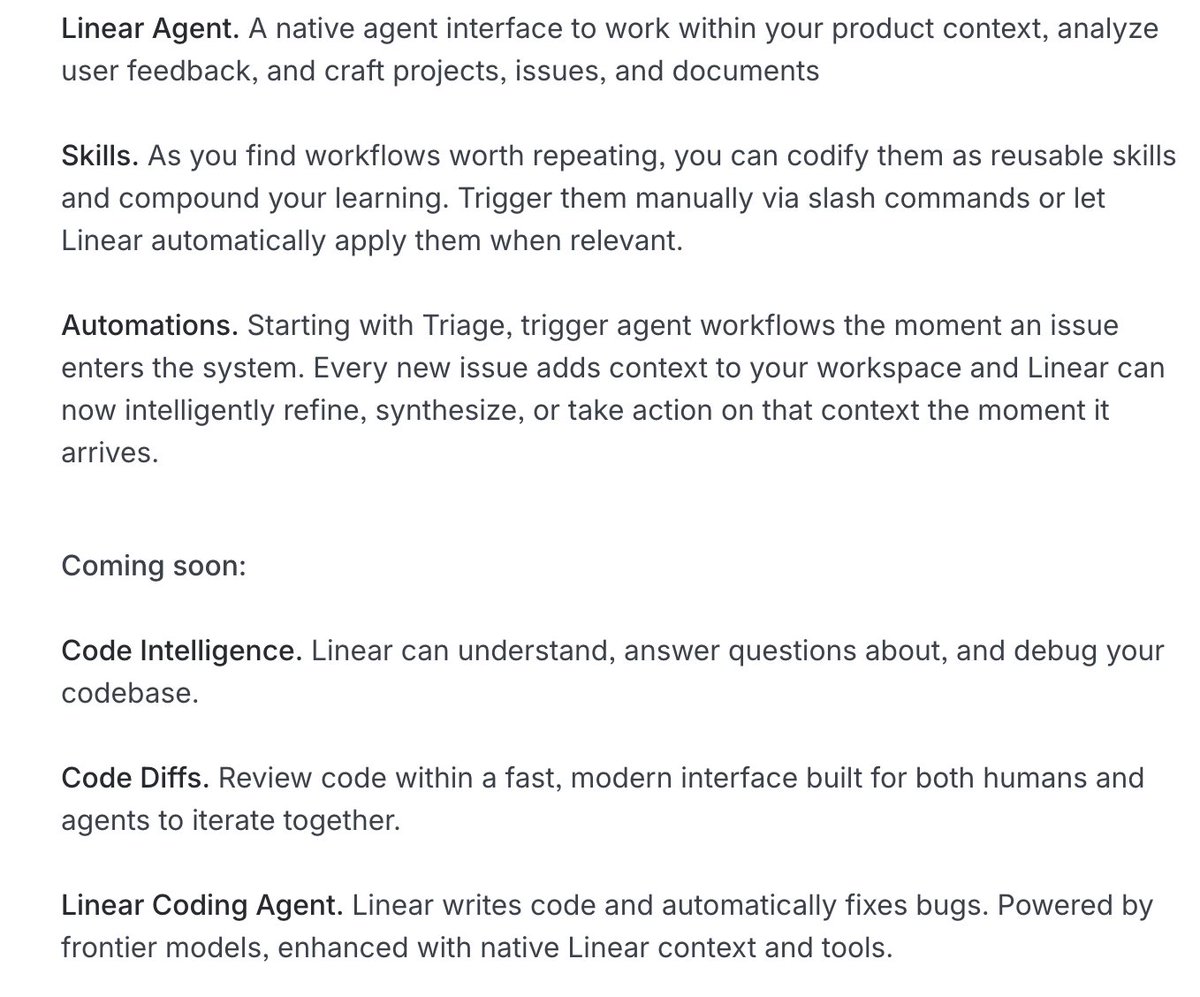

Issue tracking is dead. We are building what comes next. linear.app/next

@tobi Who knew early singularity could be this fun? :) I just confirmed that the improvements autoresearch found over the last 2 days of (~650) experiments on depth 12 model transfer well to depth 24 so nanochat is about to get a new leaderboard entry for “time to GPT-2” too. Works 🤷‍♂️