evanfeenstra

662 posts

@evanfeenstra

Creating a free future one line of code at a time

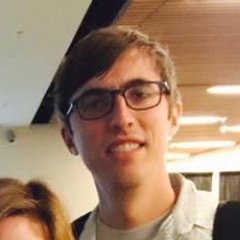

AI PROMPTING → AI VERIFYING

AI prompting scales, because prompting is just typing. But AI verifying doesn’t scale, because verifying AI output involves much more than just typing.

Sometimes you can verify by eye, which is why AI is great for frontend, images, and video. But for anything subtle, you need to read the code or text deeply, and that means knowing the topic well enough to correct the AI.

Researchers are well aware of this, which is why there’s so much work on evals and hallucination. However, the concept of verification as the bottleneck for AI users is under-discussed. Yes, you can try formal verification, or critic models where one AI checks another, or other techniques. But to even be aware of the issue as a first-class problem is half the battle.

For users: AI verifying is as important as AI prompting.

60 public companies can issue equity to buy #Bitcoin.

I used to have a perception very similar to yours (maybe less dramatic, in the sense that I would still consider the possibility of a god), but I also didn't believe, and I assumed that others only believed because they either grew up with these ideas, needed them to "cope with the challenges of life", or were simply uncomfortable with the concept of not being able to "explain everything" just yet.

In East Germany, religion was not very popular, and I think the very first time I met a person who openly expressed a belief in god was at the age of 19, when I met one of my first girlfriends (she was a Jehovah's Witness and dead serious about the idea of god being real). We weren't together for long, but it triggered my interest in the topic and I read most of the major religious texts to see if there was something to this idea. I even tried to proactively make friends with people from different religious groups so that I could join their gatherings and collect first-hand experience rather than just "reading about things".

It was super interesting to learn about all these ideas, and I still consider most of the people I met back then to be very close friends, but I personally concluded that all these texts were way too fuzzy and unspecific "for me" to not leave massive room for questions and interpretation. Even if there were conclusive proof for a god in these ancient texts, it seemed almost impossible to decide which "religious framework" to adopt, as they were all deeply intertwined with societal norms and values. To me, it seemed like a god would leave something behind that would be less "debatable" and that would not be denoted in "human language" that constantly changes its meaning.

Of course I heard quotes like "The first sip from the cup of natural science makes one an atheist, but at the bottom of the cup, God awaits." but I always thought that if this were true, then the scientists making these claims would surely be able to share their line of thought to allow others to arrive at a similar conclusion.

The reason why I am writing this entire text in the past tense is that I am starting to seriously question my PoV on this topic and I am starting to move away from the traditional western materialist view - not because I developed some weird desire for spirituality but because I believe that the scientific evidence we have collected over the last years points in exactly that direction.

Interestingly, I am not the only scientist / person interested in these topics who has recently expressed a "dramatic change of mind". Joscha Bach, for example, whom I consider to be one of the most brilliant thinkers of our times (especially in the realm of AI / consciousness research), posted the following tweet just 4 hours after I posted mine: x.com/plinz/status/1…

I think we are on the verge of a new scientific understanding of the cosmos that revolves around teleological and animist concepts, and we will eventually see more and more people commit to these ideas as they mature into precise theories with testable predictions.