Yunzhen Feng

134 posts

@feeelix_feng

PhD at CDS, NYU. Ex-Intern at GenAI, FAIR @AIatMeta. Previously undergrad at @PKU1898

Joined May 2022

844 Following · 532 Followers

Yunzhen Feng reposted

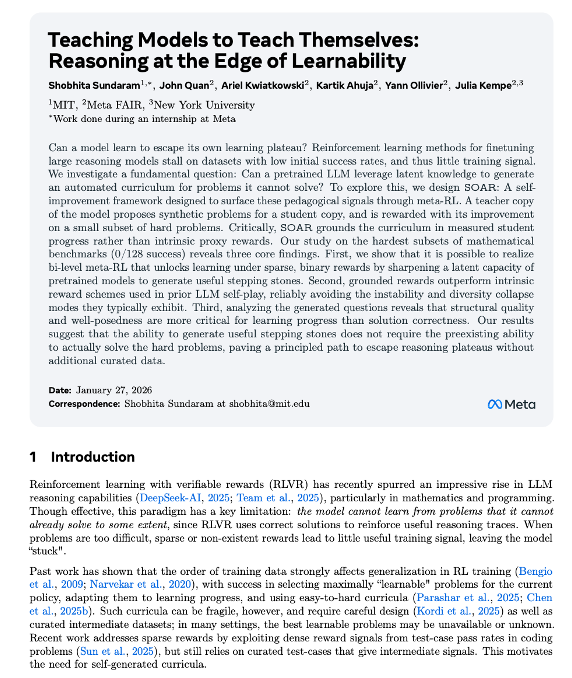

Can a model learn to break its own reasoning plateau?

In our new paper, we show that LLMs can be taught with meta-RL to generate their own "stepping stones" that kickstart learning on hard math problems (0/128 success rate) where direct RL fails.

Paper 📝: arxiv.org/abs/2601.18778

Blog post 🌐: ssundaram21.github.io/soar/

(1/n)

Yunzhen Feng reposted

🚀 ML / Applied Math / Stats PhD Opportunities @JohnsHopkins

I'm recruiting PhD students excited about generative modeling, probabilistic inference, and scientific applications (biochemistry, physics, and more), with strong backgrounds in CS/Math/Stats/Basic Science and curiosity for advancing ML and solving real-world problems!

Apply to our Applied Mathematics and Statistics PhD program by Dec 15, 2025, and become part of the broader @HopkinsDSAI community!

engineering.jhu.edu/ams/academics/…

@codewithimanshu @KempeLab Best reasoning: Be accurate first and then improve the efficiency

@KempeLab @feeelix_feng That's a very interesting topic, Julia, but I wonder if efficiency always equals better reasoning, no?

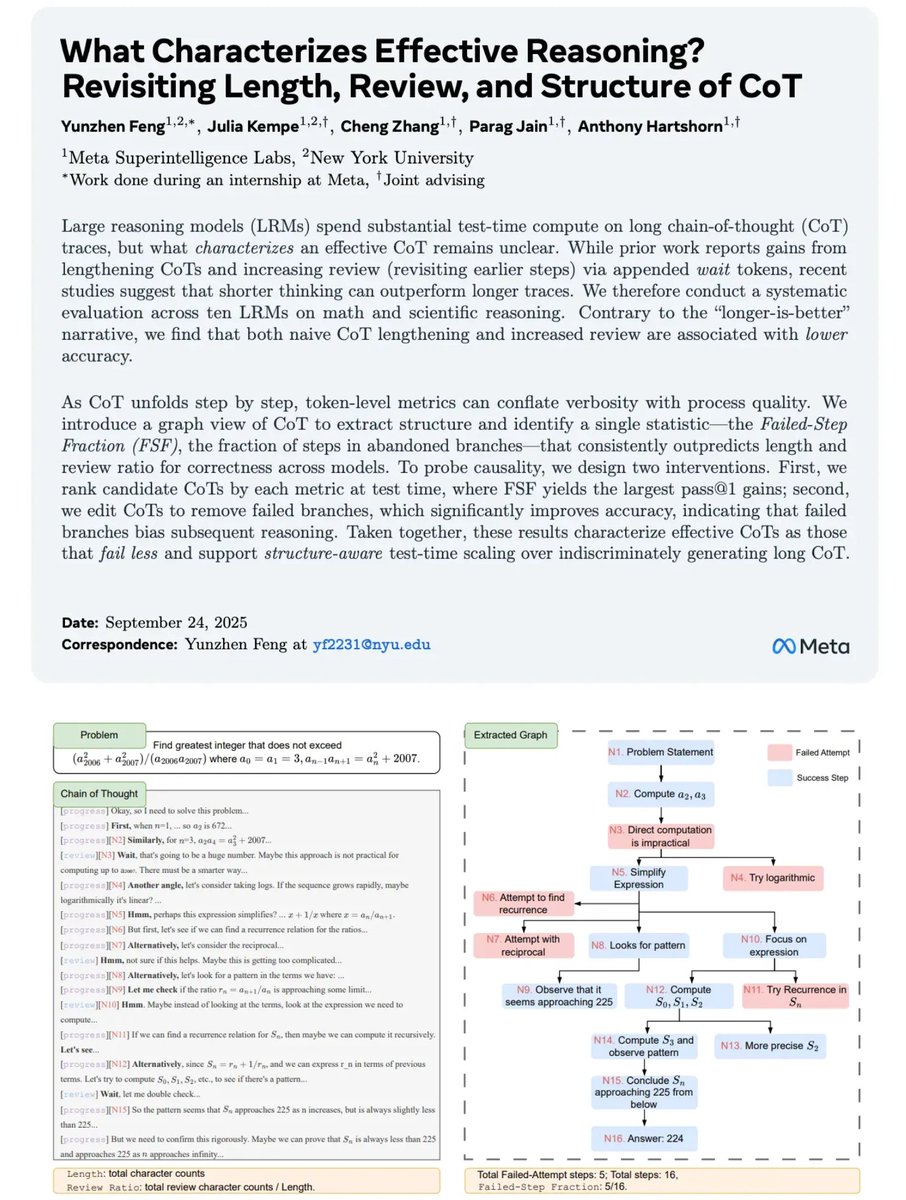

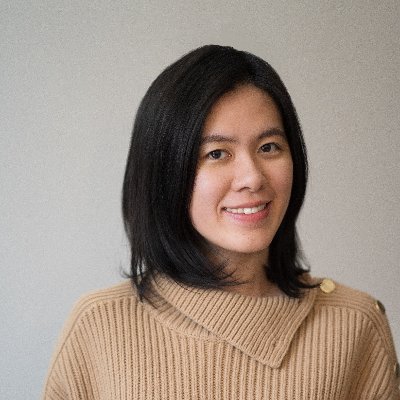

Interested in our work on characteristics of *Efficient LLM Reasoning*? Come to our spotlight poster at the Efficient Reasoning workshop at NeurIPS today, Exhibit Hall F, and talk to @feeelix_feng.

I’ll be at #NeurIPS2025 until 12/7!👋

Please reach out if you want to chat about RL, reasoning, self-evolving, or LLM diversity.

My presentations:

🌟 Fri, Dec 5 (11a-2p): Spotlight on Synthetic Data Scheduling, #4108

🌟 Sat, Dec 6 (11:30a & 4:30p): Spotlight on evaluating CoT, Hall F

Yunzhen Feng reposted

I will be recruiting 1-2 PhD students at @NYUDataScience or @NYUCourant CS to work on Machine Learning & applications in NYU's vibrant top ML ecosystem. Check Google Scholar to see our latest research interests. Interested? Please mention my name in your application. Deadline: 12/12.

@zorikgekhman Hey Zorik, thanks for the interest in our work. Could you share your email address?

@feeelix_feng Very interesting work, congrats @feeelix_feng. I want to implement your review ratio baseline and wondered if you could share the prompt you used for Llama 4 Maverick to label each chunk as progress or review, and perhaps also the code you used for keyword-based chunking?

Yunzhen Feng reposted

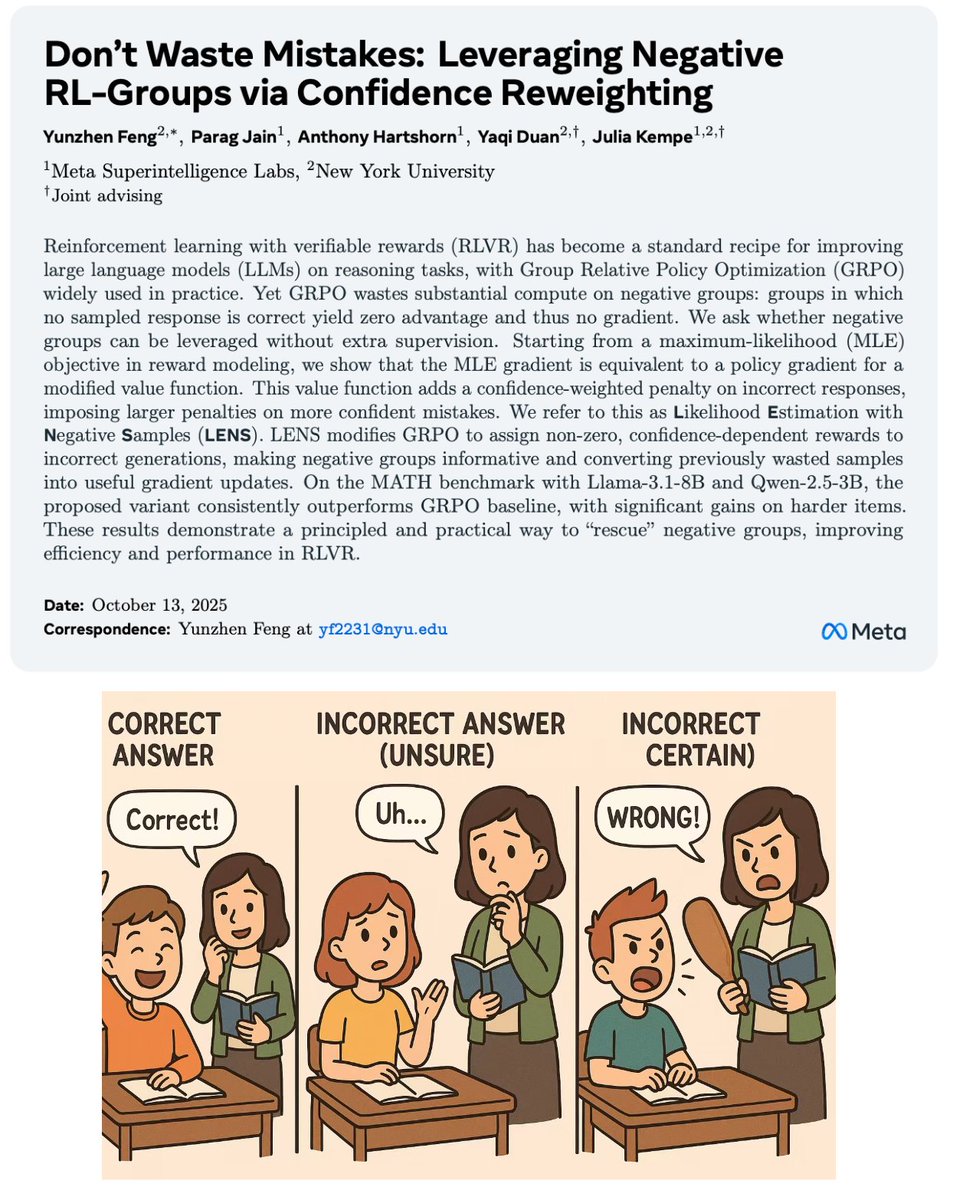

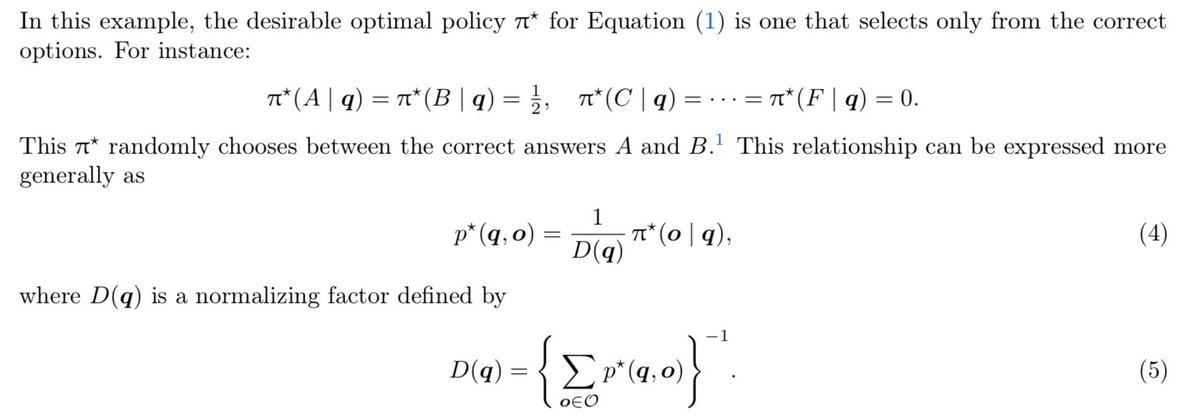

@AntChen_ @KempeLab @YaqiDuanPKU @jparag123 @tonyjhartshorn @AIatMeta @NYUDataScience 1) Yes, but p* does not sum to 1 over all o. It represents the probability of correctness of o given q.

2) Yes. For the same reason, we need to scale the policy probability into correctness probability.

@feeelix_feng @KempeLab @YaqiDuanPKU @jparag123 @tonyjhartshorn @AIatMeta @NYUDataScience Cool! Some naive Qs to better understand how you estimate confidence which seem to rely on D(q). Here:

1) Eq 4 further normalizes pi* when it is already a proper distribution?

2) Sub (5) into (4) gives p*(q,o) = \sum_o p*(q,o) pi*(o|q)? Or am I mis-reading the notation?

@josancamon19 @KempeLab @YaqiDuanPKU @jparag123 @tonyjhartshorn Are you referring to the dynamic sampling in DAPO? In DAPO, they oversample and then filter out all the negative groups. In contrast, we aim to recover training signal from those discarded groups.
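The distinction in this reply can be sketched in a few lines. This is a minimal illustration of the filtering idea only, assuming binary 0/1 rewards; the names `split_groups` and `negative_groups` and the example prompts are hypothetical, and this is not the DAPO implementation nor the method from the paper.

```python
from typing import Dict, List, Tuple

# Maps a prompt id to the rewards of its sampled responses (assumed 0.0 or 1.0).
Groups = Dict[str, List[float]]


def split_groups(rewards_by_prompt: Groups) -> Tuple[Groups, Groups]:
    """Separate groups with mixed rewards from groups whose rewards are all equal."""
    kept: Groups = {}
    discarded: Groups = {}
    for prompt, rewards in rewards_by_prompt.items():
        # A group carries a group-relative signal only if its rewards differ.
        if len(set(rewards)) > 1:
            kept[prompt] = rewards
        else:
            discarded[prompt] = rewards
    return kept, discarded


def negative_groups(discarded: Groups) -> Groups:
    """Pick out the all-wrong groups among the discarded ones."""
    return {p: r for p, r in discarded.items() if r and max(r) == 0.0}


# Hypothetical batch: q1 is mixed, q2 is all wrong, q3 is all correct.
batch = {"q1": [1.0, 0.0, 0.0], "q2": [0.0, 0.0, 0.0], "q3": [1.0, 1.0, 1.0]}
kept, discarded = split_groups(batch)
print("used by a filtering scheme:", sorted(kept))
print("negative groups that filtering drops:", sorted(negative_groups(discarded)))
```

A filtering scheme trains only on `kept`; the reply's point is that the groups returned by `negative_groups` were paid for in generation cost and are the ones the authors try to learn from.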

@feeelix_feng @KempeLab @YaqiDuanPKU @jparag123 @tonyjhartshorn how’s this different from DAPO rejection sampling?

@siddarthv66 Prompt changes do affect eval. But both the baseline training and our method use the same eval setup, for a fair comparison.

The experiments were run on university compute, so I wish I could run more. We are experimenting with LoRA for training.

Why throw the Numina 1.5 results into the appendix then? Regardless, it’s another math dataset with comparable difficulty to MATH-500, which we know is grossly insufficient to evaluate these models. There are so many other eval tasks now in 2025; not including any of them is just being lazy. Even minor prompt changes can account for a 1-2% difference on these math tasks. Sorry for saying it’s single seed, but again, good RL practice involves reporting std intervals, and 2 seeds aren’t enough. If the improvement were significant it would still be justifiable, but definitely not with 1-2% gains.

> Method is just hacky reward shaping

> Only evaluate on MATH-500 (criminal)

> Qwen 2.5 and Llama 3.1, with about 1-2% improvement from baselines (single seed)

This is awful experimental practice, really disappointing to see MSL/FAIR turning into a paper mill

Yunzhen Feng @feeelix_feng

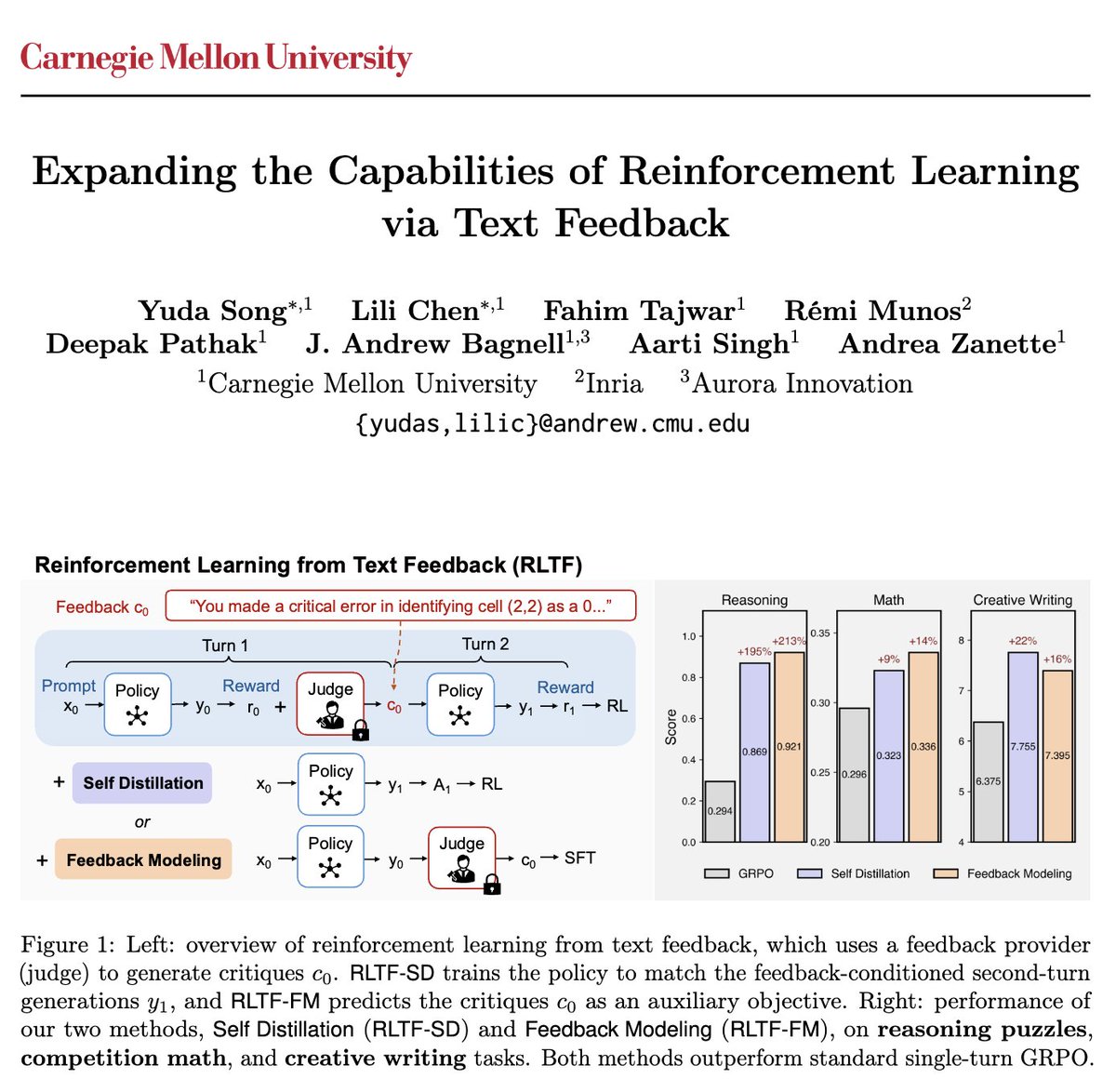

Current GRPO wastes compute on negative groups — when all K samples are wrong, you get zero gradient despite full generation cost. We propose a principled fix by bridging reward modeling and policy optimization: 👉 Penalize highly confident wrong answers more to create signal.🧵
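To make the zero-gradient claim concrete, here is a minimal sketch of the group-normalized advantage commonly used in GRPO (reward minus group mean, divided by group standard deviation). The function name `group_advantages`, the `eps` constant, and the 0/1 rewards are illustrative assumptions, not code from the paper. It shows only the problem described above, not the proposed fix.

```python
from statistics import mean, pstdev
from typing import List


def group_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Normalize each reward by the mean and standard deviation of its group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std over the K samples of one prompt
    return [(r - mu) / (sigma + eps) for r in rewards]


# Mixed group: one correct sample out of K = 4 gives nonzero advantages.
print(group_advantages([1.0, 0.0, 0.0, 0.0]))

# Negative group: all K samples are wrong, so every advantage is zero and the
# policy-gradient term for this prompt vanishes despite the generation cost.
print(group_advantages([0.0, 0.0, 0.0, 0.0]))
```

The same cancellation happens for any group whose rewards are all identical, which is why such groups contribute no update under this normalization.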

@siddarthv66 We put the Numina 1.5 results in the appendix because of the page limit.

The eval challenge is mostly for Llama. Its accuracy is <1% on AIME25. We do not want GSM or AMC because they're saturated. What other math benchmarks are there that are not contaminated?

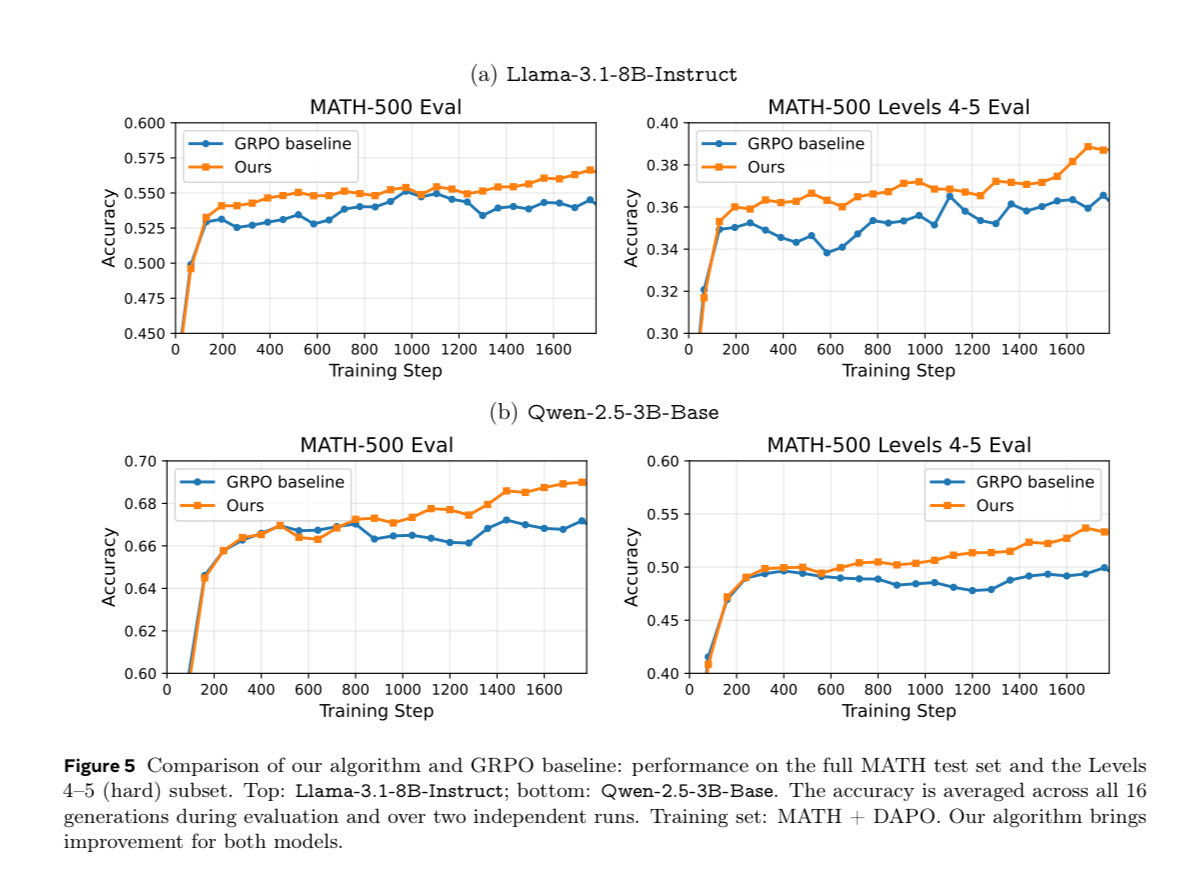

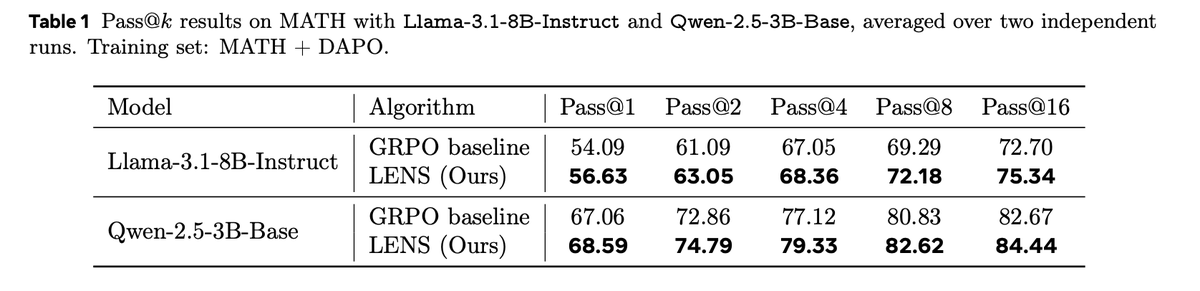

@KempeLab @YaqiDuanPKU @jparag123 @tonyjhartshorn @AIatMeta @NYUDataScience Key observations:

(1) Our method continues to improve accuracy when GRPO saturates ⬆️

(2) Our method improves all Pass@k metrics

This matches our intuition—by learning from negative groups, we get better exploration on hard problems where it matters most.
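For readers unfamiliar with Pass@k, the sketch below uses the widely used unbiased estimator 1 - C(n-c, k) / C(n, k), where n is the number of samples per problem and c the number of correct ones. The thread does not say how Pass@k was computed, so treating it as this estimator is an assumption, and the function name `pass_at_k` is illustrative.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate the chance that at least one of k samples drawn from n is correct."""
    if not 0 <= c <= n:
        raise ValueError("c must be between 0 and n")
    if not 1 <= k <= n:
        raise ValueError("k must be between 1 and n")
    # comb(n - c, k) counts the draws of k samples that contain no correct one;
    # it is 0 when fewer than k incorrect samples exist.
    return 1.0 - comb(n - c, k) / comb(n, k)


# Hypothetical problem with n = 16 samples, 2 of them correct.
for k in (1, 4, 16):
    print(k, round(pass_at_k(16, 2, k), 3))
```

On a problem with zero correct samples the estimate is 0 for every k, so gains on hard problems show up across all Pass@k values only once the policy starts producing at least some correct samples there.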

@KempeLab @YaqiDuanPKU @jparag123 @tonyjhartshorn @AIatMeta @NYUDataScience We experiment on two different training sets with Llama-3.1-8B and Qwen-2.5-3B 📈

For MATH+DAPO, we run two random seeds. Our method consistently outperforms GRPO across training, with significant improvements on hard problems (Level 4-5)
