Felipe Engelberger

@fengel97

PhD Student at @MeilerLab @UniLeipzig | Member of the PB³ Lab @pb3_lab | Biochem @Uchile | Founder @DataRoot_CL

o1 pro's math skills are very impressive 😮. Here is o1 pro solving Q3 (the hardest question) from IMO 2006 in 6 minutes and 48 seconds. For contrast, in 2006, out of roughly 500 of the world's top math students under 19, only 28 were able to fully solve it, and they had four and a half hours to do so. And no one on the 6-person US team could do it. I've tried this question with every other model (including o1), and this is the first time I've seen an AI model get the answer correct. P.S. Obviously this is a very summarized solution, so I did ask it to show its work, especially on steps 4/5. The expanded thought process was just as impressive.

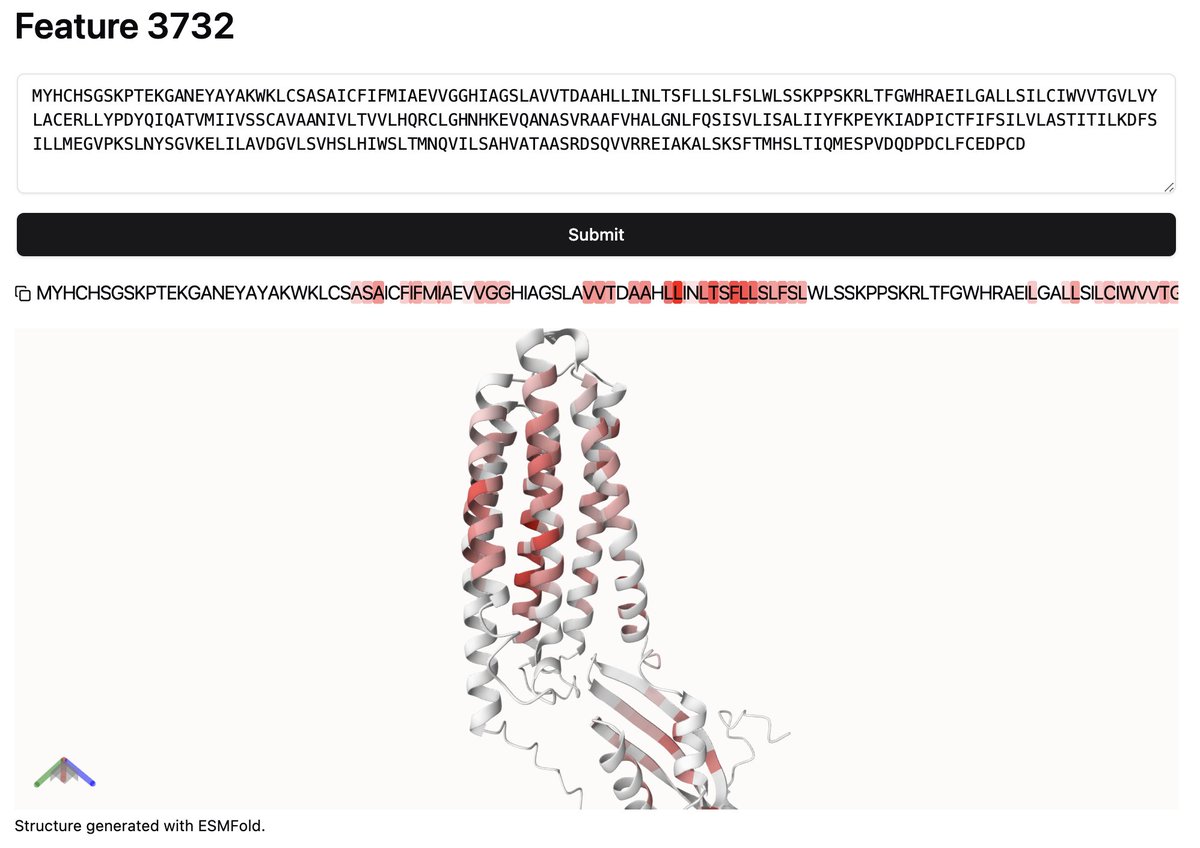

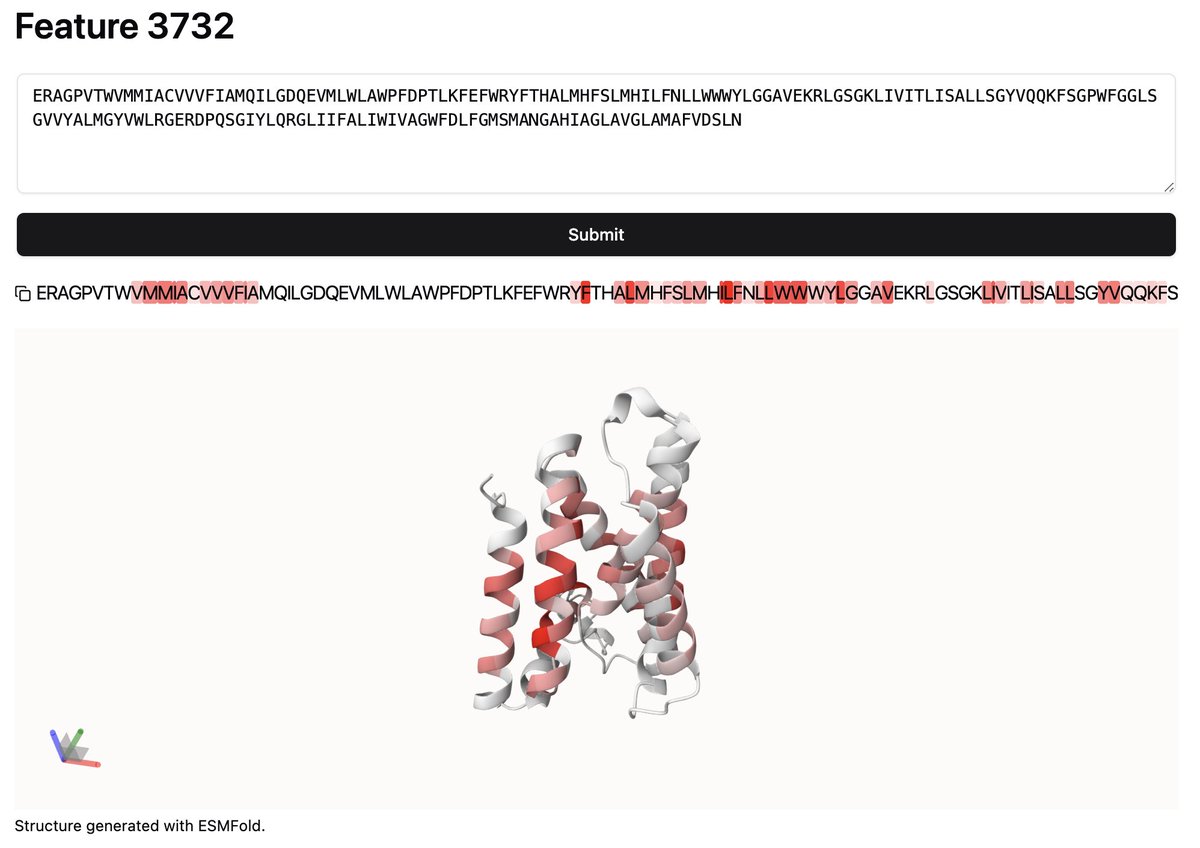

I spent quite a while trying to find the ones that recognize the membrane, but couldn't! This is the closest I got.

NEW EPISODE 🎧 Susana Vazquez Torres crossed continents to become a scientist. In our lab, she's used AI to create new antitoxins for snakebites. Apple: podcasts.apple.com/us/podcast/the… Spotify: open.spotify.com/episode/580T5E…

The Google Colab version of RFdiffusion with the conditional fold generation option worked well! We successfully placed two helices in the beta barrel structure. The RMSD between prediction and X-ray is only 0.3 Å! colab.research.google.com/github/sokrypt… colab.research.google.com/github/sokrypt…

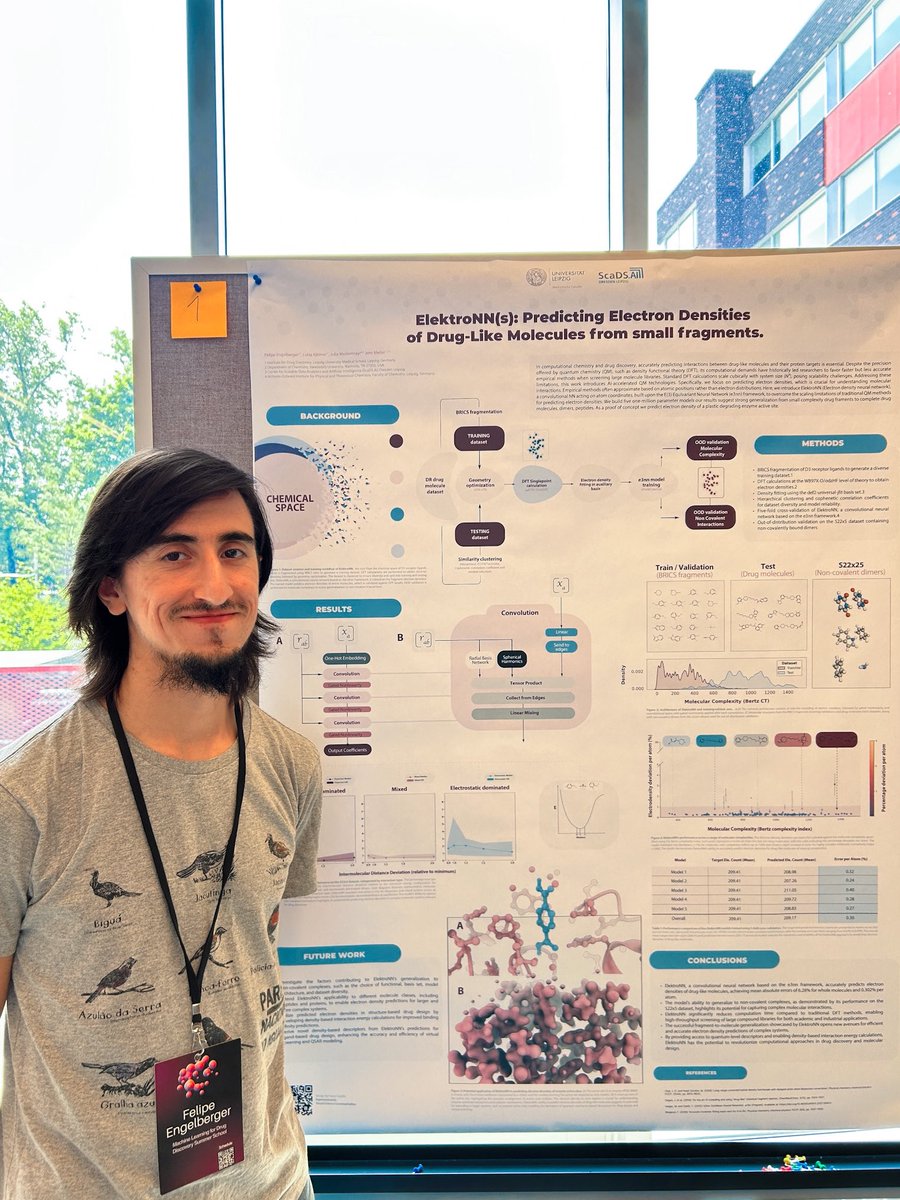

Yesterday, I had the chance to present my first spotlight poster at #MoML2024 at @Mila_Quebec AI Institute! I presented joint work done with @luisa_kaermer and our amazing advisors, @JWestermayr and @MeilerLab. Huge thanks to @Esporascicomm for the amazing graphic design work!

New paper: How can you tell if a transformer has the right world model? We trained a transformer to predict directions for NYC taxi rides. The model was good. It could find shortest paths between new points. But had it built a map of NYC? We reconstructed its map and found this: