Pinned Tweet

Arcstone Founder

253 posts

@founderarcstone

Founder building authorization-first systems for high-impact decision governance.

Joined December 2025

201 Following, 24 Followers

@roydanroy 100% AI changes symbolic math in the next few years

How are mathematicians facing the wave of rapidly advancing AI-for-math capabilities?

Jeremy Avigad (CMU prof and co-author on the original 2015 system description paper for Lean) just posted a paper with his thoughts in the wake of the Math, Inc. announcement on sphere packing.

andrew.cmu.edu/user/avigad/Pa…

There are a lot of interesting passages in here, including a bit of the back story of the Math, Inc. bomb drop and how it was initially received by the humans working on the formalization project.

But, as for how mathematics proceeds, here's the key last passage:

"We need to remember our strengths: mathematicians are problem solvers and theory builders extraordinaire. Rather than fight the use of AI in mathematics, we should own it. It is not enough to keep up with current events and design benchmarks for AI researchers; we need to play an active role in deploying the technology and molding it to our purposes. We also need to learn how to raise our students with the wisdom to use the new technologies appropriately, and we need to be careful that we still manage to impart core mathematical intuitions and understanding. Figuring out how to use AI effectively to achieve our mathematical goals won’t be easy, but mathematicians have always embraced challenges—indeed, the harder, the better. If we face AI head-on and stay true to our values, mathematics will thrive. We just need to show up and get to work."

The next few years should be a golden era for mathematics. For those of us working on the frontier, I hope we do well by our mathematician colleagues.

@MrEwanMorrison Yeah, AI and academic policy will need to be updated

@justinskycak I think AI changes the game on this lol 😂

Verifying Fields Medal-level mathematics demonstrates that AI agents like Gauss are ready to accelerate the frontiers of mathematical research. Scaling autoformalization will transform mathematical knowledge and discovery by making the full landscape of known results searchable, composable, and machine-navigable.

We are pleased to share that using Gauss, we have completed a ~200K LOC formalization of Maryna Viazovska’s 2022 Fields Medal theorems on optimal sphere packing in dimensions 8 and 24.

This is the only Fields Medal-winning result from this century to be completely formalized, and is the largest single-purpose Lean formalization in history.

We are honored to have assisted @SidharthHarihar1 and the rest of the sphere packing team in this achievement.

math.inc/sphere-packing

@justinskycak Retrieval proves memory.

Judgment proves learning.

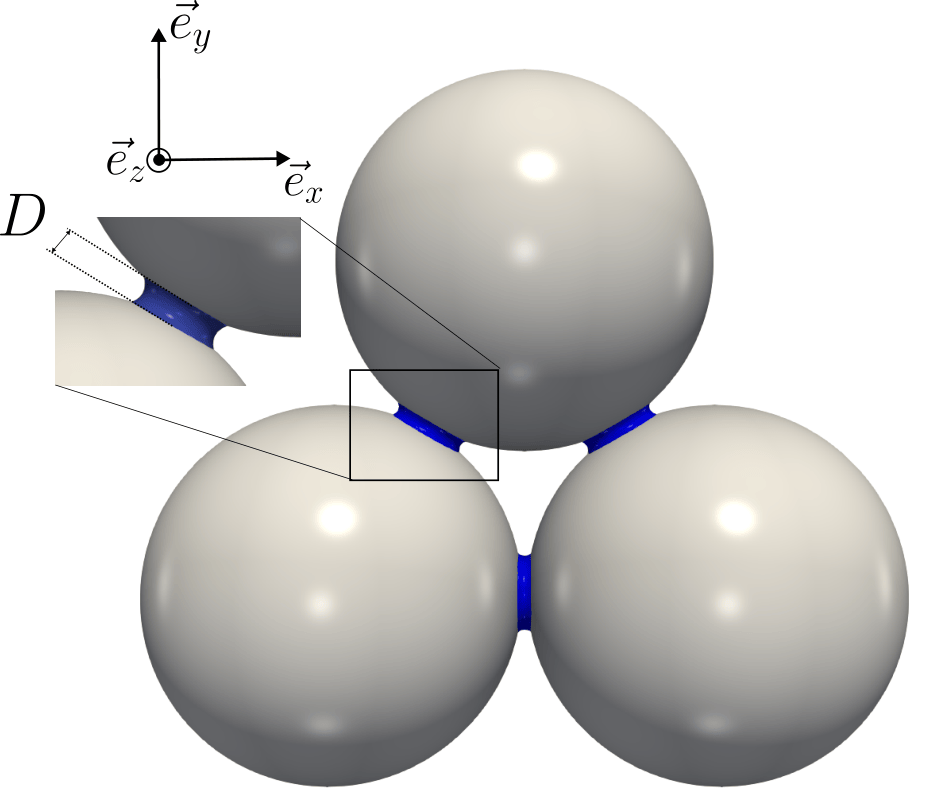

@PhysRevE This is a great example of hysteresis as geometry with memory.

The irreversibility isn’t in the material—it’s in the path the system is forced to take.

Scientists present a numerical approach that simulates the condensation and evaporation of capillary bridges in simple and complex granular materials, allowing them to capture the emergence of #hysteresis from irreversible geometric transitions.

🔗 go.aps.org/4aL6IVp

@bravo_abad This is an important step toward unifying deconvolution across omics.

Treating tissues as mixtures with shared latent structure—and learning that structure across modalities—feels like the right abstraction.

A universal deep learning framework for deconvolving transcriptomic, proteomic, and metabolomic data

Tissues are mixtures of cell types, and knowing their proportions is essential for understanding disease, drug response, and development. Single-cell technologies can measure this directly but remain expensive and difficult to scale. Deconvolution algorithms offer an alternative: given a single-cell reference, they estimate cell-type abundances from bulk tissue data. The problem is that existing tools have been developed in silos—transcriptomic methods assume count distributions that don't transfer to proteomics, spatial tools can misattribute variability outside their context, and for metabolomics, dedicated deconvolution tools simply didn't exist.

Tianyi Zhao and coauthors address this with DECODE: a deep learning framework unifying deconvolution across transcriptomics, proteomics, and metabolomics.

The architecture has four stages. Stage 1 generates pseudotissue training samples by mixing single cells at random proportions. Stage 2 uses adversarial training to align representations between synthetic and real tissue data, removing batch effects from differences in donors, platforms, and conditions. Stage 3 introduces contrastive learning with a self-attention denoiser: noise is injected into training pairs, and the model learns to separate purified tissue features from noise by pulling positive pairs together and pushing noise apart. Stage 4 offers two inference pathways—one when the single-cell reference covers all tissue cell types, another routing through the denoiser when references are incomplete, which is the common real-world scenario.
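
Stage 1 can be illustrated with a minimal sketch. This is not DECODE's actual code: the function name `make_pseudotissue`, the toy `reference` data, and the choice to draw proportions uniformly from the simplex are all assumptions made for illustration, using only the Python standard library.

```python
import random

def make_pseudotissue(cells_by_type, n_cells=100, rng=None):
    """Mix single-cell profiles at random proportions into one pseudotissue.

    cells_by_type maps a cell-type name to a list of expression profiles
    (lists of floats, all the same length). Hypothetical helper, not the
    paper's implementation.
    Returns (mixed_profile, realized_proportions).
    """
    rng = rng or random.Random()
    types = sorted(cells_by_type)

    # Normalized exponential draws give proportions uniform on the simplex
    # (assumed sampling scheme; the paper may use a different one).
    weights = [rng.expovariate(1.0) for _ in types]
    total = sum(weights)
    target = [w / total for w in weights]

    # Pick a cell type for each of the n_cells slots according to the target.
    labels = rng.choices(types, weights=target, k=n_cells)

    n_features = len(cells_by_type[types[0]][0])
    mixed = [0.0] * n_features
    counts = {t: 0 for t in types}
    for label in labels:
        cell = rng.choice(cells_by_type[label])  # sample with replacement
        counts[label] += 1
        for j, value in enumerate(cell):
            mixed[j] += value  # the bulk signal is the sum over sampled cells

    proportions = {t: counts[t] / n_cells for t in types}
    return mixed, proportions

# Toy reference: two cell types, three features each (made-up numbers).
reference = {
    "T cell": [[5.0, 0.0, 1.0], [4.0, 1.0, 0.0]],
    "hepatocyte": [[0.0, 8.0, 2.0], [1.0, 7.0, 3.0]],
}
profile, props = make_pseudotissue(reference, n_cells=50, rng=random.Random(0))
```

Each call yields one training pair: the summed profile is the model input and the realized proportions are the label, so repeated calls with different seeds would produce a training set with known ground truth.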

Benchmarking spans 15 datasets, 7 scenarios, and 11 competing methods. DECODE achieves top performance across nearly all settings. The metabolomics results are particularly striking—with only hundreds of features and high similarity across cell types, all other methods produced unusable results while DECODE maintained accurate estimates.

Applied to breast cancer cohorts, DECODE revealed higher T cell and perivascular-like cell abundances in nonmetastatic tumors, consistent with known protective signatures. In mouse liver data spanning three omics layers, it recovered expected cell proportions with high cross-omics consistency.

The broader lesson is architectural: rather than encoding omics-specific assumptions, DECODE uses adversarial alignment and contrastive denoising to learn a shared space where deconvolution becomes modality-agnostic—a design principle suggesting many problems currently requiring separate specialized models may yield to unified frameworks that learn to handle data heterogeneity during training.

Paper: nature.com/articles/s4159…

@NightSkyNow This is a good reminder that “missing” often means misframed.

If dark matter only couples gravitationally, we shouldn’t expect it to show up where our detectors are loudest.

🚨 Dark Matter Might Not Be Missing — It Might Be Next Door

What if scientists haven’t failed to find dark matter… because it isn’t really here? 🤯

For decades, researchers have searched for dark matter — the mysterious substance that makes up about 27% of the universe and holds galaxies together. It doesn’t glow, reflect light, or interact like normal matter. And despite years of powerful experiments, not a single dark matter particle has been directly seen.

Now comes a mind-bending idea that could change everything. Physicist Stefano Profumo suggests dark matter may live in a hidden “mirror universe”, right alongside ours. This shadow universe could have formed during the same Big Bang, but evolved differently — with its own atoms, forces, and even dark black holes. We can’t see it, touch it, or detect it directly… but its gravity may be quietly shaping our universe.

There’s more. Profumo also explores the idea that dark matter could still be forming today, slowly emerging from the expanding edge of the universe itself. Just like black holes release particles from their boundaries, the very frontier of space and time may be creating dark matter — a process that began at the birth of the cosmos and never stopped.

These ideas are still unproven, but they reveal something powerful: dark matter may not be invisible because our technology is weak — it may be invisible because it exists beyond our cosmic neighborhood. If true, the greatest mystery in modern physics isn’t hiding in our detectors… it’s hiding just beyond what we can see.

The universe may be far stranger — and far bigger — than we ever imagined. 👀

@ControlAI @NPCollapse The control problem isn’t intelligence—it’s permission.

Banning capability doesn’t create control; it just drives it underground.

What we lack is a mature framework for admissible action, not smarter systems.

ControlAI advisor Connor Leahy (@NPCollapse): We are barreling headlong towards superintelligence.

If we build systems vastly smarter than humans and cannot control them, the future will belong to them, not us.

There is a solution: ban the development of superintelligent AI.

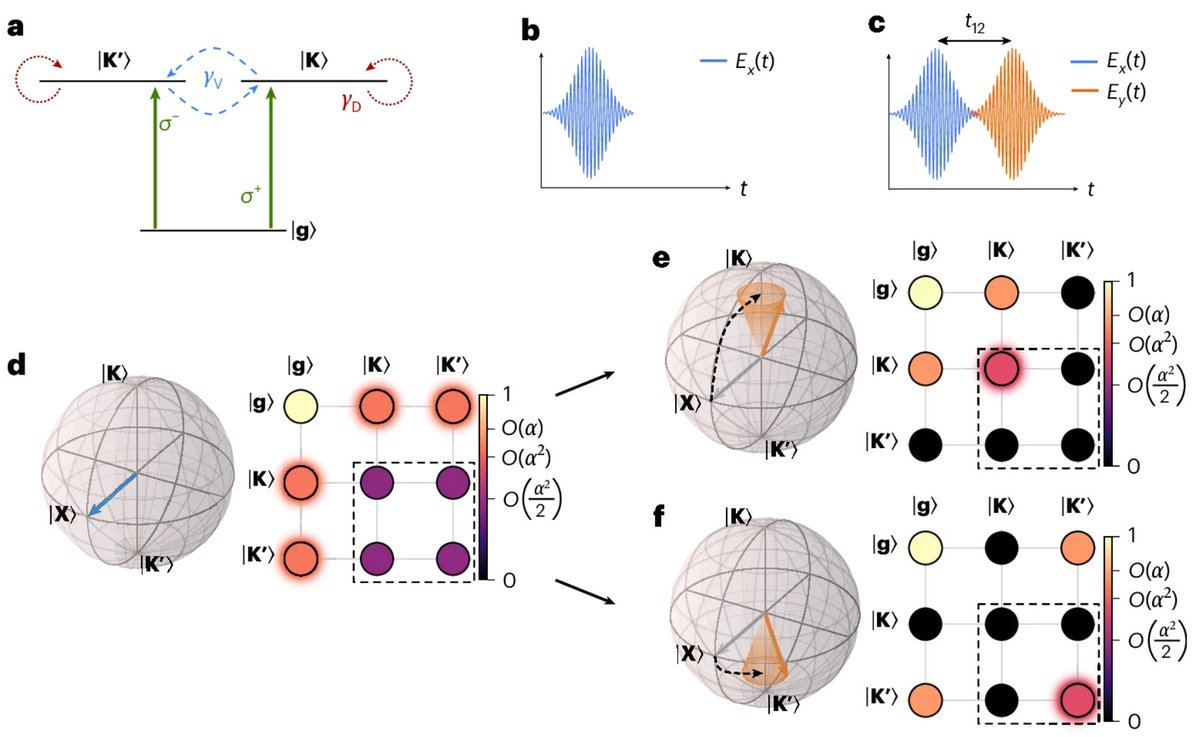

@NaturePhotonics Valleytronics keeps getting more interesting.

Encoding information in which valley—and controlling it coherently with light—feels like computing in phase space, not just state space.

New article online: Encoding and manipulating ultrafast coherent valleytronic information with lightwaves.

go.nature.com/4l0oyqC

@BrainyScience Careful with the framing.

Intelligence isn’t located anywhere—it emerges from constrained network dynamics.

Brains, mycelium, and societies rhyme not because they’re identical, but because selection favors distributed architectures under constraint.

@wonderofscience What’s striking isn’t the radiation—it’s the trace.

An invisible process becomes legible only when it crosses a medium that can’t ignore it.

@MITEngineering @naturemethods @MITMechE Predicting cell behavior minute-by-minute is impressive.

The next frontier is knowing when prediction should yield to constraint—development isn’t just dynamics, it’s admissibility under irreversible choices.

In a new @NatureMethods paper, a team, including researchers in @MITMechE, developed a way to predict, minute by minute, how individual cells will fold, divide, and rearrange during a fruit fly’s earliest stage of growth.

news.mit.edu/2025/deep-lear…

@incprodmon This paper quietly says the scary part out loud:

collapse isn’t bad answers—it’s crowding out human general knowledge.

When systems substitute judgment instead of aggregating it, intelligence decays even as accuracy rises.

Super interesting!

"AI, Human Cognition and Knowledge Collapse" by Acemoglu, Kong, and Ozdaglar.

"We study how generative AI, and in particular agentic AI, shapes human learning incentives and the long-run evolution of society’s information ecosystem."

nber.org/papers/w34910

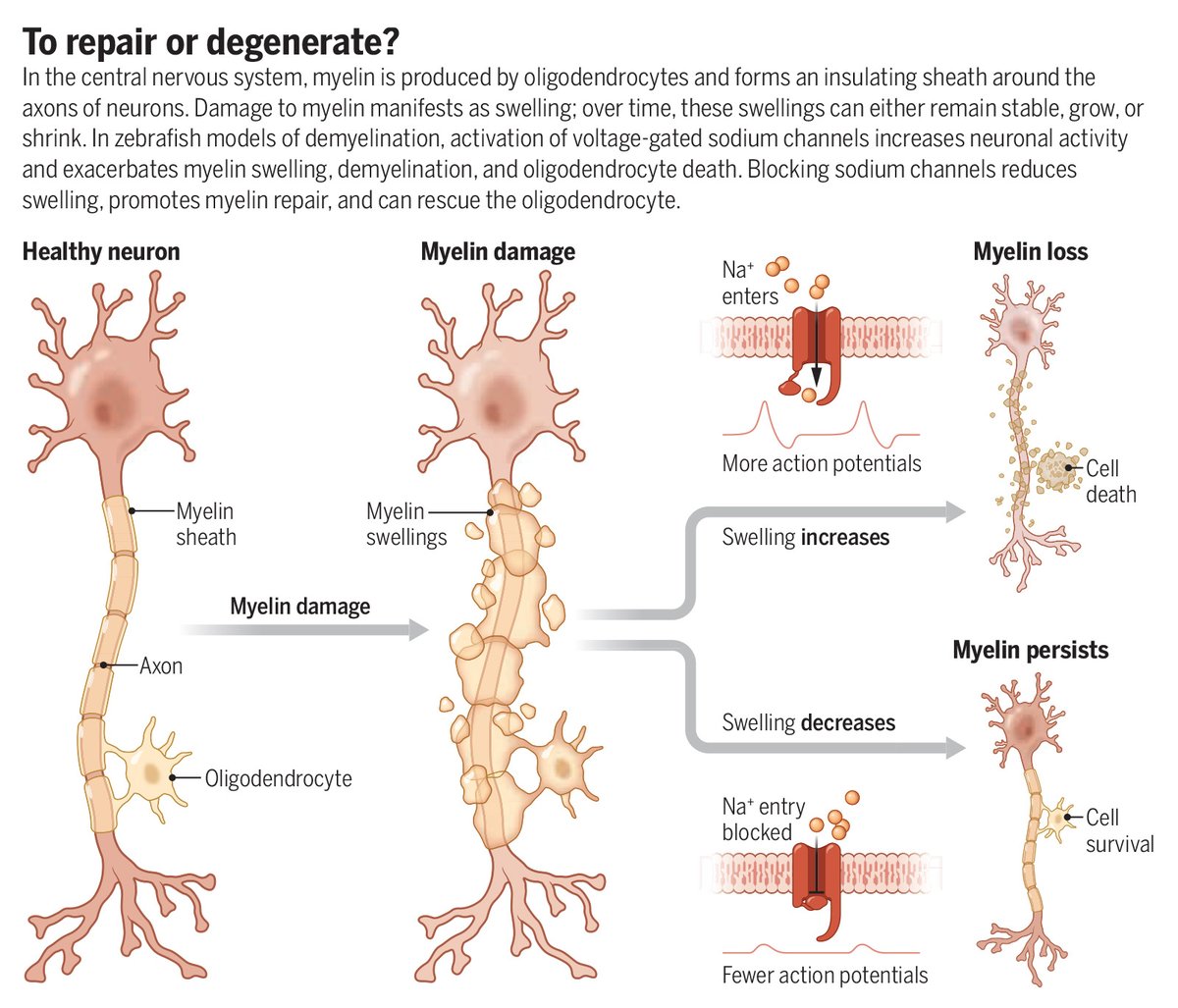

@ScienceMagazine This diagram is the whole story.

Damage doesn’t kill systems—unchecked excitation does.

Without damping, repair pathways flip into degeneration.

Civilizations, like neurons, need brakes before they need rockets.

In the central nervous system, myelin is produced by oligodendrocytes and forms an insulating sheath around the axons of neurons.

In a new Science study, researchers report that damage to myelin initially causes swelling before leading to loss of myelin sheaths. They also demonstrate that swollen myelin can persist despite damage and can dynamically remodel to prevent sheath loss.

Learn more in a new #SciencePerspective: scim.ag/4aKXuXQ

@elonmusk Space helps.

But without agency, roles, and reversibility, you don’t escape the mouse utopia—you just give it rockets. 🚀

@skglearning I think it’s worth considering that some questions should not have answers
