juliette pluto 🌌

2.4K posts

@foundjuliette

hacker, machine whisperer, typo-generator. Adversarial robustness @GoogleDeepMind. views mine.

Brooklyn, New York · Joined June 2014

713 Following · 5.5K Followers

When Anthropic released a complex 30K word doc and said Claude was trained to follow it, I was pretty sceptical. Turns out it kinda works!

We red teamed Claude's constitution following, and it's gotten much better! Positive update for the ability to align models in nuanced ways

arya@AJakkli

There's been a lot of buzz around Claude's 30K word constitution ("soul doc") and unusual ways Anthropic is integrating it into training. If we can robustly train complex values into a model, that's a big deal for safety. But does it actually work? Yes, surprisingly well!

We just launched Codex Security!

Probably a no-brainer for most teams to turn on. Some things I'm excited about:

- Agentic security review leveraging our SOTA models

- Always on codebase scanning

- Detailed reports with code paths on vulnerabilities

- Auto-fix any report with a PR

Teams and enterprises can try it out through Codex web.

@KentonVarda Capability is there; just temporarily bottlenecked by misaligned RL rewards.

@mahaoo_ASI @willccbb it’s okay; but you must print the tokens on paper first, which you can then scan, as long as you burn it after.

@rmcentush Reminds me of that time I inadvertently stole all of my bf’s saved passwords, bc he let me log into his iPad to set up a HomePod. iCloud auto sync did the deed.

apple is probably the only company i trust with basically my entire life — my ID, financial data, messages/contacts, health records, etc.

is that rational? probably not. but it might matter enormously as these models get embodied in the physical world + reach broader consumer use. a steady flow of tokens, all the time.

apple's core competency has always been designing computers that people trust. if everything becomes a computer and models continue to viciously compete, having that trust is probably a good place to be

aidan@aidanshandle

Says something about Apple's value that people are willing to make a one time $600 payment for their AI to be able to access the ecosystem

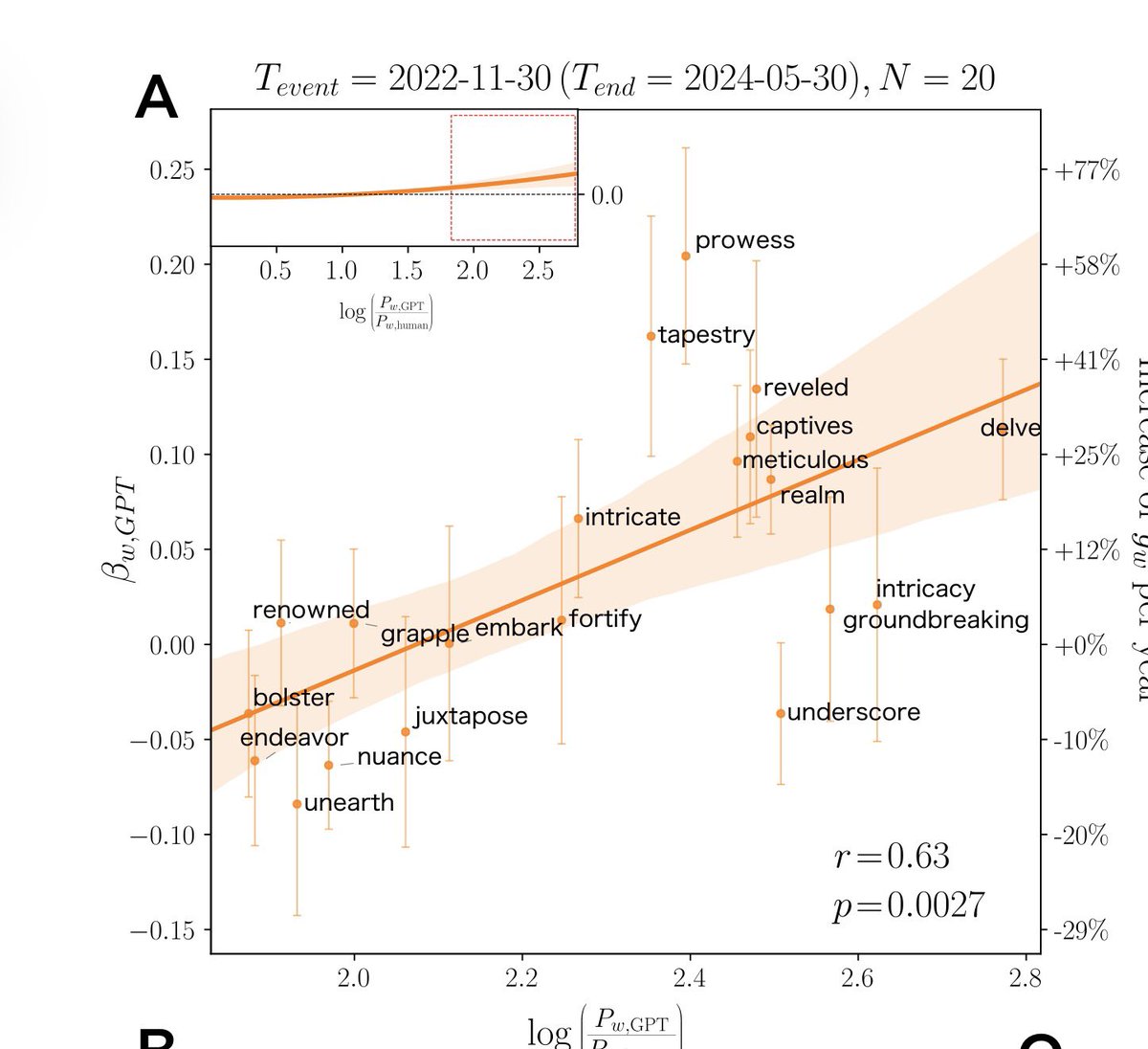

Everyone is starting to sound like AI, even in spoken language

Analysis of 280,000 transcripts of videos of talks & presentations from academic channels finds they increasingly used words that are favorites of ChatGPT

Model collapse, except for humans arxiv.org/pdf/2409.01754…

@_arohan_ It was a long time ago, so back then probably not

@foundjuliette That's an incredible achievement! Go ♊️!!

I wonder if the reviewer is also using gemini

Everyone is talking as if their code is pristine. But do you remember the flame wars?

In every good codebase, there are enforced rules, like style guides and review norms. Google has style guides with an incredible level of detail on how to write “good” code. To get the ability to approve code changes, you often had to go through a lengthy training/qualification process. I got my C++ one fairly easily after writing an Arena list (IIRC, Yonatan Zunger blessed me with the powers), but Python was very, very hard to get.

Once ML started taking off, ML codebases, especially the ones full of linear algebra, started conflicting with style guides that were originally written for servers and data processing code. That pushed ML in a different direction. Over time, ML codebases developed their own unofficial but commonly agreed patterns.

You see it in the basics. x for examples, w for weights. Then codebases matured and started using einsums more systematically. Later @NoamShazeer introduced Noam notation to make tensor algebra easier to read, like x_BLT.

Having experienced all of this and having worked across different parts of the stack, I’m pretty sure engineers and researchers from different layers would have called each other’s code slop. In fact, in my career, ML framework flame wars were pretty common.

Now what’s funny is that frontier models, especially Claude Code, when you prompt them with style guides and do decent context engineering, can write better rule-following code than I can. I get tired. I’d rather keep state for more interesting things in my head.
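For readers unfamiliar with the shape-suffix style mentioned above, here is a minimal sketch in NumPy. The axis meanings (B = batch, L = sequence length, T = input features, D = output features), the sizes, and the names `w_TD` and `y_BLD` are assumptions made for illustration; only `x_BLT` appears in the post.

```python
import numpy as np

# Hypothetical axis sizes; each suffix letter names one axis of the tensor.
B, L, T, D = 2, 3, 4, 5  # batch, length, input features, output features (assumed)

rng = np.random.default_rng(0)
x_BLT = rng.normal(size=(B, L, T))  # examples, shape [B, L, T]
w_TD = rng.normal(size=(T, D))      # weights, shape [T, D]

# The einsum subscripts mirror the suffixes, so the contraction over T
# is visible in the variable names as well as in the einsum string.
y_BLD = np.einsum("blt,td->bld", x_BLT, w_TD)

assert y_BLD.shape == (B, L, D)
```

The point of the convention is that a shape mismatch tends to show up as a naming mismatch at the call site, before the code is ever run.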

@_arohan_ In the process there was exactly one time when I received readability feedback that asked me to change something. And upon review, it was revealed that the code generated by Gemini had in fact correctly followed the guidelines, and the human had misinterpreted them.

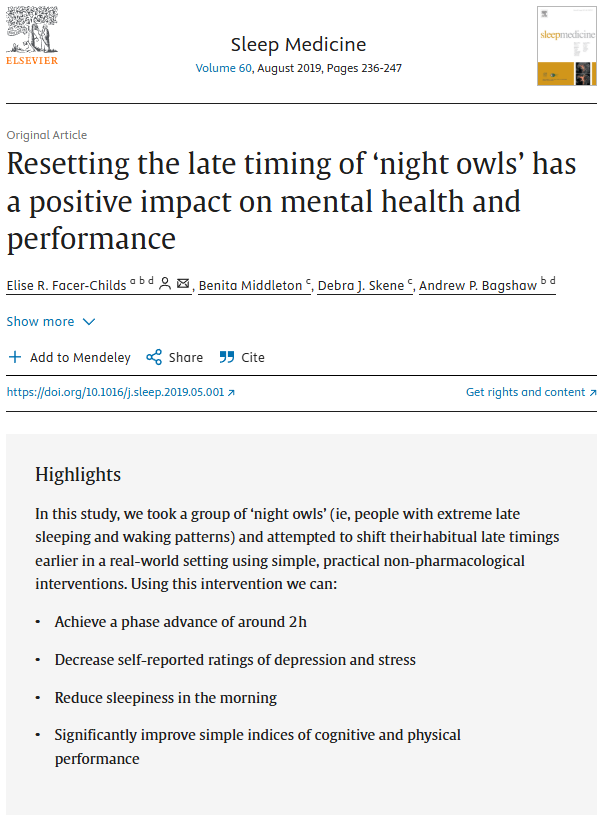

@NTFabiano @PaulaGhete n = 22 (12 in experimental arm); effectively unblinded; control group’s sleep delayed during study period suggesting confounding factors; depression improvements measured via self-report in participants who knew they were “fixing” their sleep; no follow-up on sustainability.

@joao_batalha copper (~400 W/m·K), lined with silver (for food safety), is just as good, and ~10x cheaper
