Roberto Garcia

29 posts

@garctrob

Computational Math @HazyResearch @ Stanford | Prev @ HRT, Jane Street

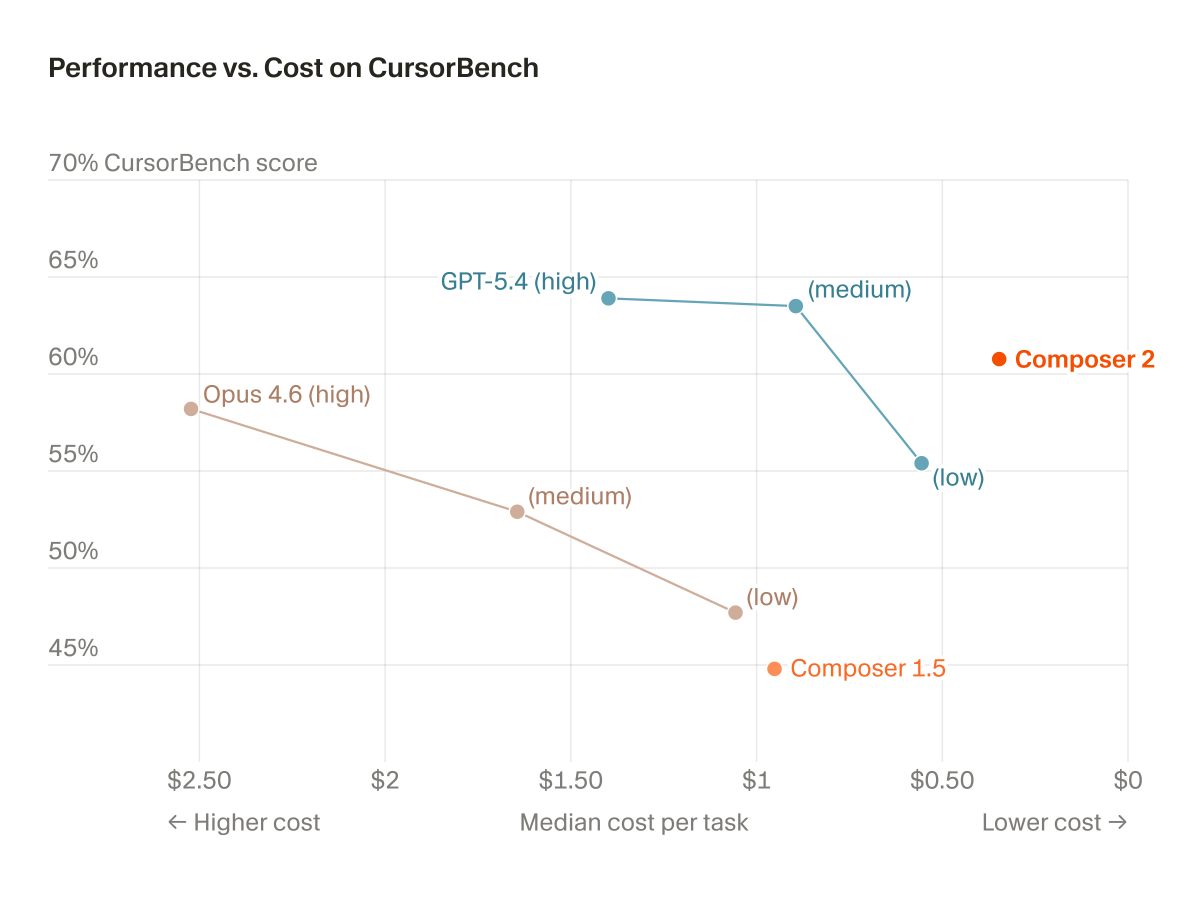

Composer 2 is now available in Cursor.

🎙️ First time doing this 🙂 — I filled in for François on a one-off podcast episode with @alex_damian_ and @jerrywliu! We had a really fun, wide-ranging conversation about AI, theory, and how research actually gets done. Watch here 👇 youtu.be/6eQiKI9Q-Sk

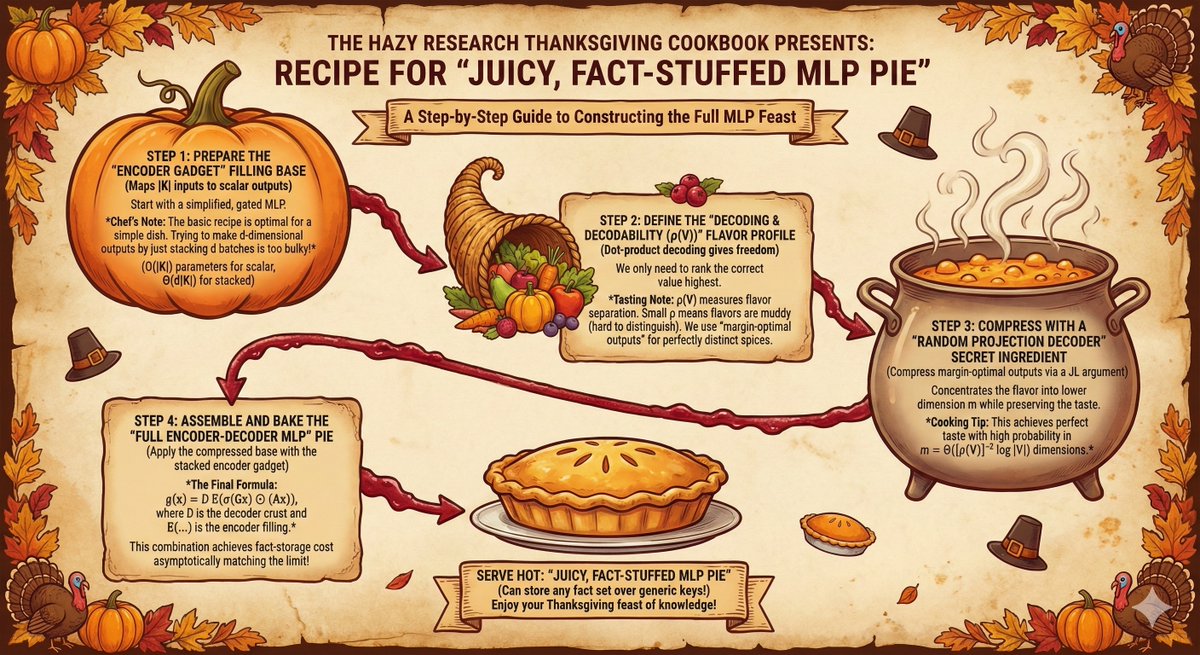

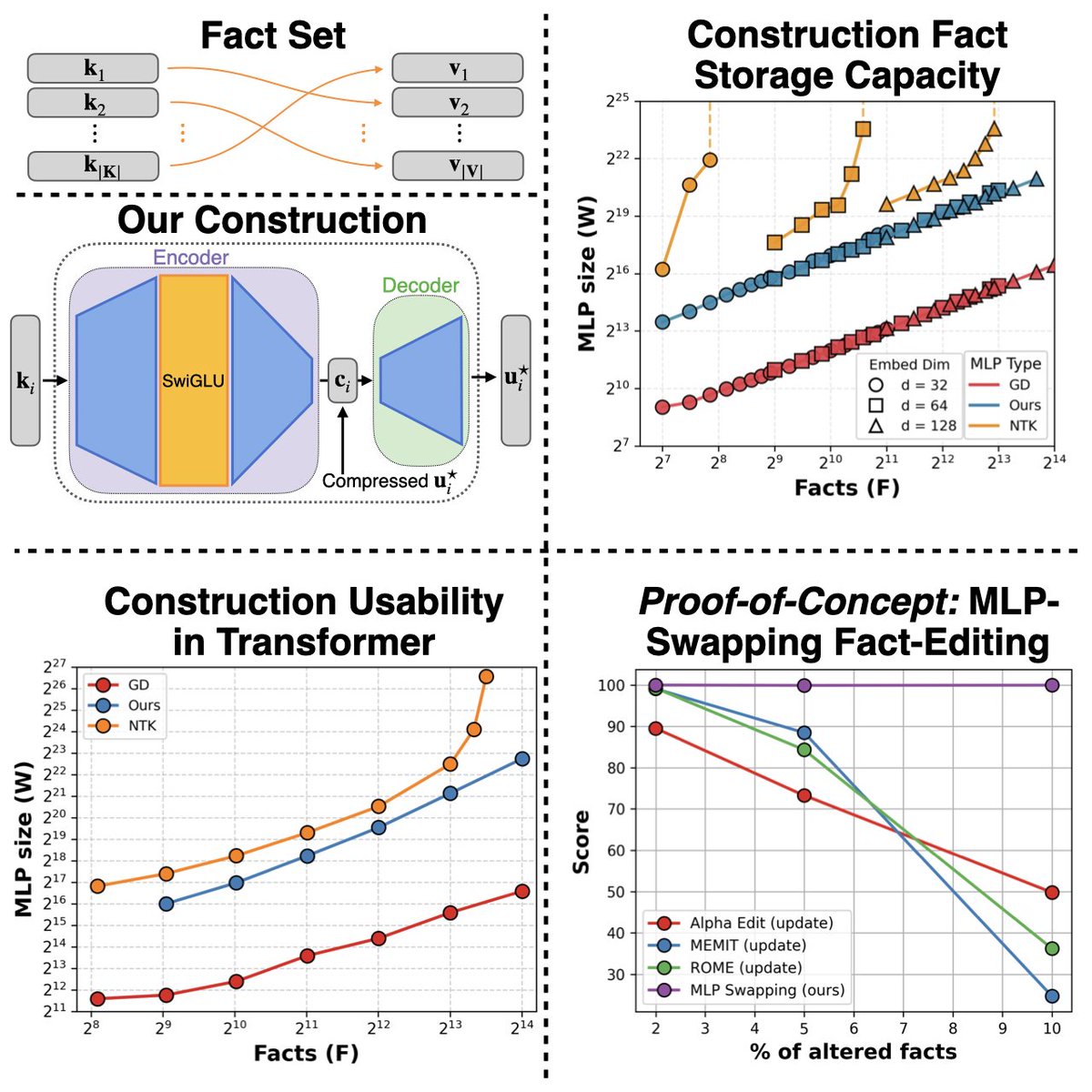

Happy 🦃 Thanksgiving weekend! 🍂 This year, we cooked up a new recipe for juicy fact-storing MLPs. Instead of picking apart trained models, we asked: Can we construct fact-storing MLPs from scratch? 🤔 Spoiler: we can & we figured out how to slot these hand-crafted MLPs into Transformer blocks as modular fact stores! 🧩 New work with @garctrob @ronnygjunkins @jerrywliu @dylan_zinsley @EyubogluSabri Atri Rudra @HazyResearch! 🧵👇

Part 2 of our MLPs blog post is out! 👀 This time, we’re here to tell you the story 📖 of our quest for a construction that: ✅ Handles general embeddings 🌐 ✅ Asymptotically matches the information-theoretic limit 📊📈 ✅ Is usable within transformers 🤖✨

ChatGPT set agreeableness level

Announcing Olmo 3, a leading fully open LM suite built for reasoning, chat, & tool use, and an open model flow—not just the final weights, but the entire training journey. Best fully open 32B reasoning model & best 32B base model. 🧵