Tae Hwan Jung

@graykoder

Former AI Engineer | Web3 Developer & Degen: https://t.co/MkJcrSYUac

Day 131/365 of GPU Programming

I've been spending time today working on inference for Qwen3.5 (24 GatedDeltaNet layers and 8 GatedAttention layers in a 3:1 pattern) with the goal of reducing latency on my local Nvidia machine without too much of a hit on benchmark quality.

Some notes to self from optimizing inference for a hybrid mamba+attention model:

- I'm learning that K/V head counts can differ inside the linear-attention block. For example, this model has 16 K heads but 32 V heads (GQA2 inside GDN). From what I can tell, a lot of kernels out there assume k_heads == v_heads, so they require modifications before they can be adopted in such a setting.
- Also noticed that moving AWQ from g32 to g128 can change quality benchmarks by quite a few percentage points. The g128 recipe is less aggressive but recoverable with the right calibration data.
- Learning that calibration data itself is a decision point. Switching from raw web text to an instruction-blended corpus seems to preserve instruction-following accuracy better at the same bit width (idk, maybe that's obvious to others).

A great resource on the Qwen3.5 model family is @rasbt's amazing Qwen3.5 0.8B From Scratch. Really recommend going through the Jupyter notebook to get a better feel for the model architecture.
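To make the K/V head mismatch in the notes above concrete, here is a minimal sketch of the index mapping a kernel would need when there are 16 K heads and 32 V heads. The function names are hypothetical, and the layout (consecutive V heads sharing one K head) is an assumption for illustration, not something confirmed for Qwen3.5 or any particular kernel.

```python
def k_head_for_v_head(v_head: int, num_k_heads: int = 16, num_v_heads: int = 32) -> int:
    """Return the K head that a given V head reads from.

    Assumes V heads are grouped consecutively, so with 16 K heads and
    32 V heads, V heads 0 and 1 share K head 0, V heads 2 and 3 share
    K head 1, and so on. This grouping is an assumption.
    """
    if num_v_heads % num_k_heads != 0:
        raise ValueError("num_v_heads must be a multiple of num_k_heads")
    if not 0 <= v_head < num_v_heads:
        raise IndexError("v_head out of range")
    group_size = num_v_heads // num_k_heads
    return v_head // group_size


def expand_k_heads(k_heads: list, num_v_heads: int) -> list:
    """Repeat each K head so the result lines up one-to-one with V heads.

    This is the simple (memory-hungry) workaround for code that assumes
    k_heads == v_heads; indexing with k_head_for_v_head avoids the copy.
    """
    if num_v_heads % len(k_heads) != 0:
        raise ValueError("num_v_heads must be a multiple of len(k_heads)")
    group_size = num_v_heads // len(k_heads)
    return [k for k in k_heads for _ in range(group_size)]
```

Expanding K up front is the quickest way to reuse a kernel that expects equal head counts, at the cost of duplicating K; computing the index inside the kernel avoids that copy.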

DS4 by @antirez is a great project! It would be great if @Apple would share an M5 Max 128GB MacBook with him to tune the Metal 4 kernels to make prefill faster on new hardware. 🙏

tested out @antirez' ds4.c this morning. so impressive and delivers.

on an M3 Max, 128GB, stock ds4 settings:

- 14–15 t/s at 62K pre-filled actual coding conversation
- memory usage was flat during gen, ~85GB res
- disk cache is ~8GB for a full 100K context window
- thermals were normal, light fan activity
- inference server is rock solid so far

biggest constraint: anytime there's a compact, we pay the wait-time price of a fresh prefill (~1min per 10k context) before we are back in action.

sequential inference + multiple agents in parallel performance is unclear, will report back. I'm so amped.
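As a rough back-of-the-envelope for the prefill cost mentioned above, the sketch below scales the quoted "~1min per 10k context" figure linearly. The function name is hypothetical, and linear scaling is an assumption; real prefill time may not grow linearly with context length.

```python
def estimate_prefill_seconds(context_tokens: int, seconds_per_10k: float = 60.0) -> float:
    """Estimate the wait for a fresh prefill, assuming linear scaling.

    The default of 60 seconds per 10k tokens is the rough figure quoted
    in the post for an M3 Max; treat it as an approximation.
    """
    if context_tokens < 0:
        raise ValueError("context_tokens must be non-negative")
    return context_tokens * seconds_per_10k / 10_000


# Example: the 62K-token conversation from the post.
wait = estimate_prefill_seconds(62_000)
print(f"~{wait / 60:.1f} min")
```

Under that assumption, a compact at 62K context would cost a bit over six minutes before generation resumes.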

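A PyPI package with 1.1 million monthly downloads was compromised and used to distribute information-stealing malware. Attackers published a malicious version of the popular elementary-data package on the Python Package Index (PyPI) to steal developers' sensitive data and cryptocurrency wallets. The dangerous release is version 0.23.3.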
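A minimal sketch for checking whether the flagged elementary-data release (0.23.3, as named in the post above) is installed in the current Python environment. The helper names and the blocklist structure are hypothetical, and the blocklist contains only the version mentioned in the post; other affected versions, if any, are not covered.

```python
import re
from importlib import metadata

# Only the release named in the post; extend as advisories are published.
COMPROMISED = {"elementary-data": {"0.23.3"}}


def normalize(name: str) -> str:
    """Normalize a distribution name the way PyPI does (PEP 503)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def is_compromised(name: str, version: str, blocklist=COMPROMISED) -> bool:
    """Return True if (name, version) appears in the blocklist."""
    return version in blocklist.get(normalize(name), set())


def check_installed(blocklist=COMPROMISED) -> list:
    """Return (name, version) pairs for blocklisted packages installed here."""
    hits = []
    for name in blocklist:
        try:
            version = metadata.version(name)
        except metadata.PackageNotFoundError:
            continue  # package is not installed in this environment
        if is_compromised(name, version, blocklist):
            hits.append((name, version))
    return hits
```

Calling `check_installed()` in each virtual environment returns an empty list when the flagged release is absent. A clean result only says the flagged version is not installed now; it does not show that it was never installed.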

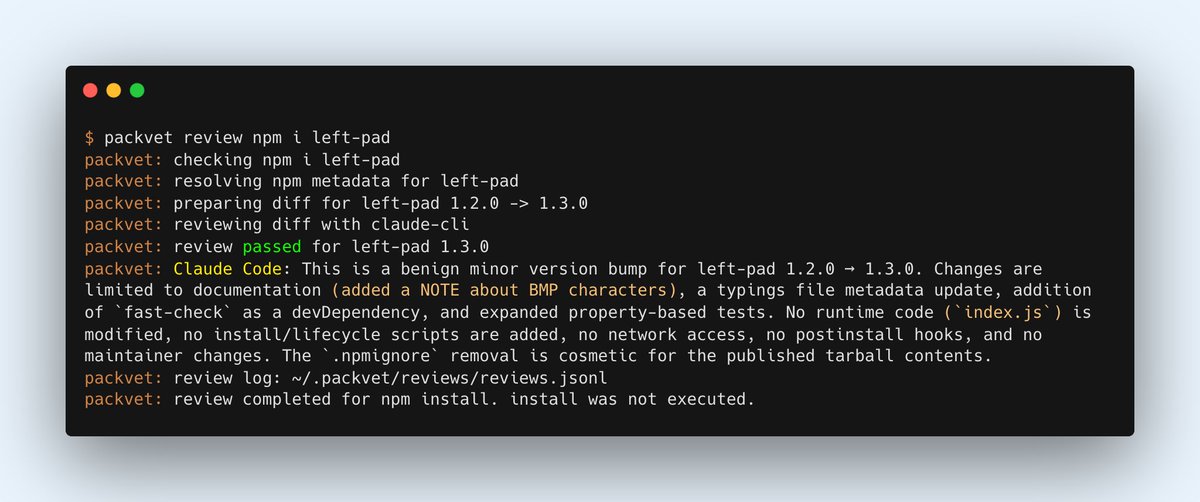

🚨 CRITICAL: Active supply chain attack on axios -- one of npm's most depended-on packages.

The latest axios@1.14.1 now pulls in plain-crypto-js@4.2.1, a package that did not exist before today. This is a live compromise. This is textbook supply chain installer malware. axios has 100M+ weekly downloads. Every npm install pulling the latest version is potentially compromised right now.

Socket AI analysis confirms this is malware. plain-crypto-js is an obfuscated dropper/loader that:

• Deobfuscates embedded payloads and operational strings at runtime
• Dynamically loads fs, os, and execSync to evade static analysis
• Executes decoded shell commands
• Stages and copies payload files into OS temp and Windows ProgramData directories
• Deletes and renames artifacts post-execution to destroy forensic evidence

If you use axios, pin your version immediately and audit your lockfiles. Do not upgrade.
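One way to act on the "audit your lockfiles" advice above is a small script that scans a `package-lock.json` for the two versions named in the post. This is a sketch under stated assumptions: it only reads the `packages` map used by npm lockfile versions 2 and 3 (not the older nested `dependencies` layout), the function names are hypothetical, and the flagged list contains only axios@1.14.1 and plain-crypto-js@4.2.1.

```python
import json

# Versions named in the post; extend if further advisories list more.
FLAGGED = {"axios": {"1.14.1"}, "plain-crypto-js": {"4.2.1"}}


def find_flagged(lock: dict, flagged=FLAGGED) -> list:
    """Return sorted (install_path, version) pairs for flagged packages.

    Expects the parsed contents of a package-lock.json with a top-level
    "packages" map keyed by paths such as "node_modules/axios".
    """
    hits = []
    for path, meta in lock.get("packages", {}).items():
        if not path:
            continue  # the empty key is the root project itself
        # The package name is whatever follows the last "node_modules/".
        name = path.rsplit("node_modules/", 1)[-1]
        version = meta.get("version")
        if version in flagged.get(name, ()):
            hits.append((path, version))
    return sorted(hits)


def scan_file(lockfile_path: str) -> list:
    """Load a lockfile from disk and return any flagged entries."""
    with open(lockfile_path, encoding="utf-8") as fh:
        return find_flagged(json.load(fh))
```

Running `scan_file("package-lock.json")` at the root of each project returns an empty list when neither version is locked. Nested paths are reported too, so a transitive copy under another package's `node_modules` is not missed.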

We’ve identified a security incident that involved unauthorized access to certain internal Vercel systems, impacting a limited subset of customers. Please see our security bulletin: vercel.com/kb/bulletin/ve…