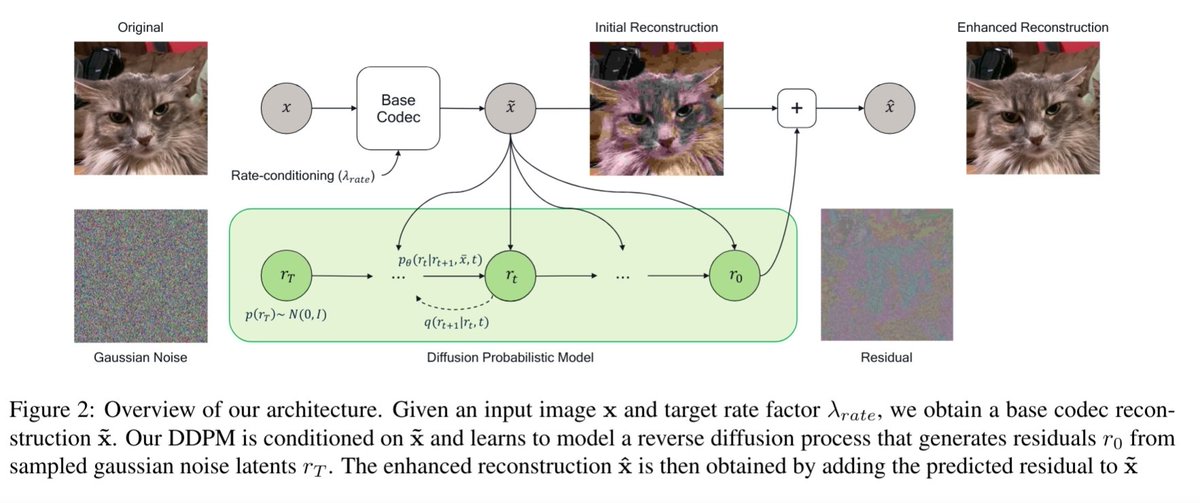

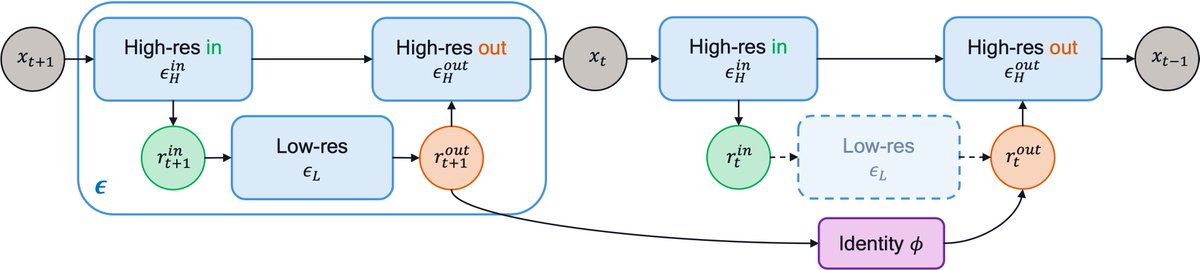

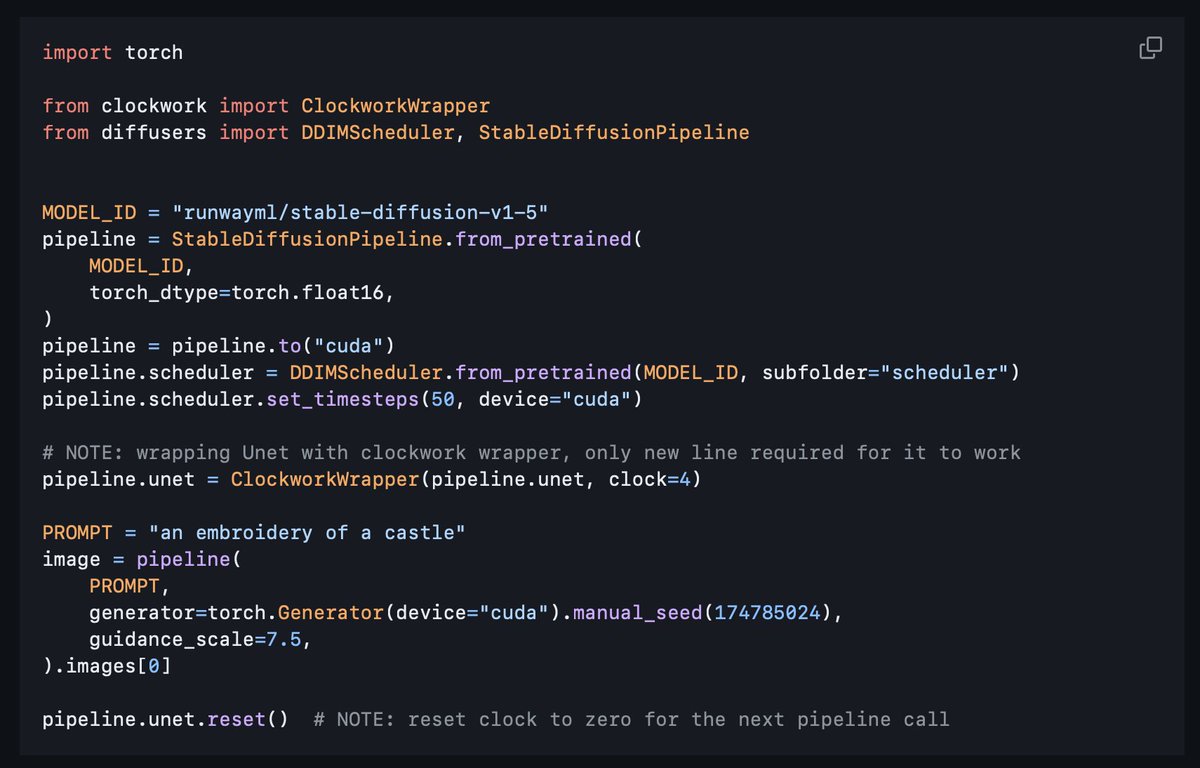

Do we need to run the whole UNet for every diffusion step? No! Accelerate your diffusion model with a simple trick, even without retraining?! Joint work w/ Amir Ghodrati, @noor_fathima_ , @gsautiere, Fatih Porikli and @peterjensen_

Paper: arxiv.org/abs/2312.08128
Code: stay tuned