augustus odena


@gstsdn

Something new. Previously: AI research at TBD Labs / Meta; cofounder at @AdeptAILabs; Invented Scratchpad / Chain-of-Thought; Google Brain

This is wild. theaustralian.com.au/business/techn…

You can just do things (genetically sequence your dog’s tumors and design a bespoke mRNA cancer vaccine to save her life)

@ProfSchleich @dbroockman @j_kalla If Democrats want to stay relevant, and to deliver for the public, they cannot wait for unions to change. They need to break more often with their friends. nytimes.com/2026/02/23/opi…

The proposed 175 Park Avenue skyscraper in Manhattan should not be allowed to mog the Chrysler building this bad

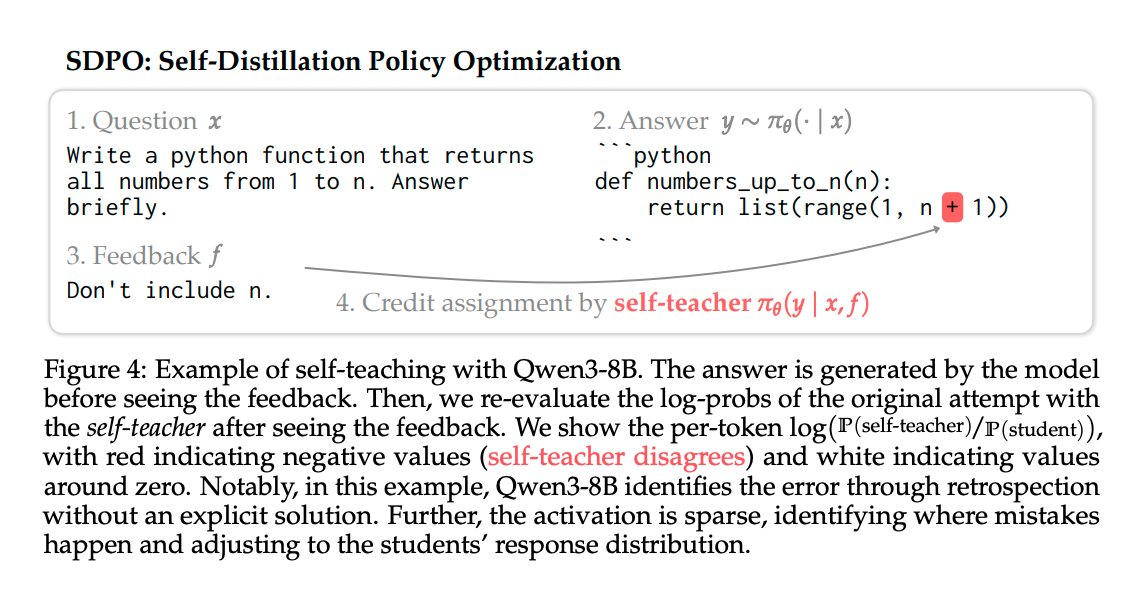

I have a bunch of thoughts about continual learning and nothing to do with them (I'm working on something else) so I figured I'd just turn them into a post:

First: I think people use "continual learning" to point at a cluster of issues that are related but distinct. I'll list the issues and then speculate about what might fix them.

a) Catastrophic Forgetting: If you train on a distribution D_1 and then do SFT on another distribution D_2, you'll often find that your performance on D_1 degrades. The extent of this issue is maybe overstated and is more true for SFT than for RL, but it's still real. There's also an important limit case that IMO is a "smell" for the way we train models currently: repeated data can seriously harm model performance. Humans don't have this problem - they eventually just stop updating on redundant information.

b) No integration of new knowledge into existing concepts: If I tell you that I'm from Michigan, you will update your representation of me to include that fact, but you will also change your representation of Michigan. Michigan becomes "a place where someone I know is from". If people ask you questions about Michigan in the future, you may answer those questions with this knowledge in mind. If I tell a chatbot that I'm from Michigan, that fact may get stored in a memory file about me, but it won't affect the model's representation of Michigan.

c) No consolidation from short-term memory to long-term memory: Models are good at accumulating information in context up to a point, but then they run out of context (or effective context) and performance degrades. They are missing a mechanism for deciding what's important to retain and then taking action to retain it.

d) No notion of timeliness: When you tell a human something, they also retain *when* they learned it, and that "time tag" becomes part of the representation. Humans experience a stream of facts unfolding through time. As a result we form an implicit model of history/causality. Many people can answer "who is the current Pope?" without doing a special search step.

Now that we've enumerated the issues, we can think about solutions. In AI it's always worth asking why the simplest solution can't work. The very simplest thing to try is what chatbots currently do: maintain a text file of memories. IMO it's obvious why this is unsatisfying relative to what humans are doing, so I won't dwell on it. I expect there are many refinements you could make here around learning to manually manage the text file, but I also expect these approaches to be brittle.

A slightly smarter thing that's still pretty simple is to just keep updating the model during deployment. I actually do think that something like this could work OK, but we probably need a few tweaks. Some combination of the following seems worth pursuing:

1. Sparser updates: Catastrophic forgetting is plausibly worsened by updating all parameters at once. I'd bet either selective parameter updates or making the models themselves sparser could help a lot here. @realJessyLin has some nice work here.

2. Update only on surprising data: Updating on every new datapoint feels wrong. We want a mechanism that decides what’s important/surprising and only updates on that subset. A crude version: automatically generate questions about a datapoint and only update if the model fails to answer them. The hippocampus also has interesting mechanisms for doing this that seem worth trying to emulate.

3. Don't train on the raw datapoint w/ the standard objective. Given that we've decided a datapoint is surprising, I don't think we should just train on it using the standard objective. We may want to automatically generate questions about a given corpus and train on the answers (as in e.g. the Cartridges work) and we may also want to modify the objective. One option is to do prompt distillation with the facts in context - the intuition being that the consolidated model ought to answer the question as though it has the facts on hand.

These are "in-paradigm" approaches compatible with LLMs. I bet they’ll yield real progress, but I’m also starting to suspect something less in-paradigm may be needed for a really satisfying solution. That’s for a different post though.
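
A rough sketch of how the first two tweaks in the post (sparser updates, and updating only on surprising data) might combine, shown on a toy linear model rather than an LLM. Everything here is an assumption made for illustration and not something the post specifies: squared error stands in for "the model fails to answer", a top-k gradient mask stands in for "selective parameter updates", and the names `sparse_surprise_update`, `threshold`, `k`, and `lr` are hypothetical.

```python
def predict(weights, features):
    """Toy linear model: dot product of weights and features."""
    return sum(w * x for w, x in zip(weights, features))


def surprise(weights, features, target):
    """Squared error, used here as a crude stand-in for 'the model got it wrong'."""
    return (predict(weights, features) - target) ** 2


def sparse_surprise_update(weights, features, target, threshold=0.1, k=1, lr=0.5):
    """Return (new_weights, updated).

    Skips the update when the datapoint is not surprising; otherwise takes one
    gradient step on only the k parameters with the largest gradient magnitude.
    """
    if surprise(weights, features, target) <= threshold:
        # Redundant datapoint: leave the model untouched.
        return list(weights), False

    error = predict(weights, features) - target
    grads = [2 * error * x for x in features]  # gradient of the squared error

    # Pick the k coordinates with the largest |gradient|; freeze the rest.
    top_k = sorted(range(len(grads)), key=lambda i: abs(grads[i]), reverse=True)[:k]

    new_weights = list(weights)
    for i in top_k:
        new_weights[i] -= lr * grads[i]
    return new_weights, True


weights = [1.0, 0.0, 0.0]

# Already-known datapoint: prediction is exact, so no update happens.
weights, updated_known = sparse_surprise_update(weights, [1.0, 0.0, 0.0], 1.0)

# Surprising datapoint: only the single most-implicated weight changes.
weights, updated_new = sparse_surprise_update(weights, [0.0, 1.0, 0.5], 2.0)
```

In this toy run the repeated datapoint leaves the weights untouched, and the surprising one changes a single coordinate while the weight that encoded the earlier datapoint stays fixed. Whether anything like this carries over to real models is exactly the open question the post raises.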