Eduardo Velasquez

2.1K posts

@guardivelasquez

Senior software engineer with over 35 years of experience in software development and architecture.

Lynnwood, WA, USA · Joined July 2009

477 Following · 206 Followers

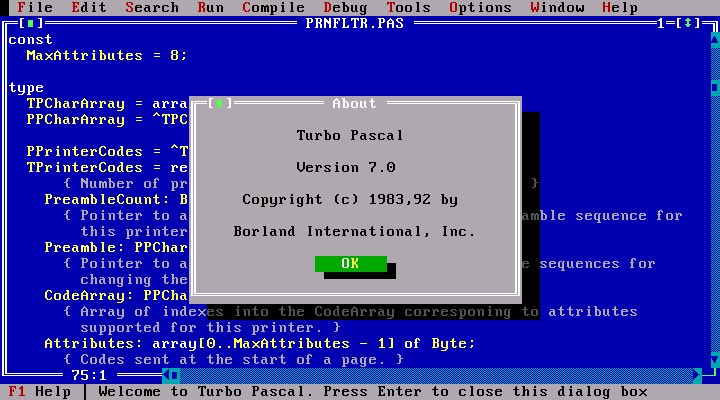

@ChShersh 7.0? That’s a newer version, I started with 2.0. I’ll get out of my sarcophagus and reorganize my wraps…

Eduardo Velasquez retweeted

@KooKiz @guardivelasquez It's not a great pattern, but the null-flow analysis doesn't handle certain things well, so it's kinda necessary in many cases (or the alternative is way more complexity).

@KooKiz @guardivelasquez Maybe the other person will know, but is that value ever going to be null? Because if so, it should be

`TheType? Name = null;`

If it's never supposed to be null, then it should be

`public TheType Name { get; init; }` which, with nullable reference types enabled, will gently remind you if you don't assign it in a constructor.

Eduardo Velasquez retweeted

The BIGGEST lie in AI LLMs right now is “It learns.”

We are confusing a Context Window with a Brain. They are not the same thing.

The cold reality is that AGI is much further away than the hype suggests.

1. The "Read-Only" Problem

Your brain physically changes when you learn. Synapses fire, pathways strengthen. You evolve.

An LLM is a read-only file.

Once training finishes, that model is stone cold frozen. It never learns another thing. When you correct it, it doesn't "get smarter." It just pretends to agree with you for the duration of that specific chat session. Close the tab, and the lesson is gone forever.

2. The "Context" Trap

"But it remembers what I said earlier!"

No, it doesn't.

Engineers are just re-feeding your previous sentences back into the prompt, over and over again, at massive compute cost.

That isn't memory. That is a scrolling teleprompter.

We are simulating continuity by burning GPU credits, not by building a persistent mind.
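
A minimal sketch of the re-feeding described above, written in C and assuming a hypothetical client that keeps the transcript itself (`Transcript`, `transcript_add`, and `build_prompt` are illustrative names, not any vendor's API). Each call rebuilds the prompt from every earlier message, so nothing persists inside the model; length is measured in bytes as a rough stand-in for tokens.

```c
#include <assert.h>
#include <string.h>

#define MAX_TURNS 16

/* Hypothetical client-side transcript. The model keeps nothing between
 * calls; this array is the only "memory" in the system. */
typedef struct {
    const char *messages[MAX_TURNS];
    int count;
} Transcript;

/* Record a finished message so it can be re-sent on later turns. */
static void transcript_add(Transcript *t, const char *msg) {
    assert(t->count < MAX_TURNS);
    t->messages[t->count++] = msg;
}

/* Rebuild the prompt from scratch for the next call: every earlier
 * message is copied in again, followed by the new one. Output is
 * truncated if it would overflow `cap`. Returns the prompt length in
 * bytes, a rough stand-in for token count. */
static size_t build_prompt(const Transcript *t, const char *new_msg,
                           char *out, size_t cap) {
    assert(cap > 0);
    out[0] = '\0';
    for (int i = 0; i < t->count; i++) {
        strncat(out, t->messages[i], cap - strlen(out) - 1);
        strncat(out, "\n", cap - strlen(out) - 1);
    }
    strncat(out, new_msg, cap - strlen(out) - 1);
    return strlen(out);
}
```

Called once per turn, `build_prompt` returns a longer prompt each time because the whole history is sent again; that growth is the cost the thread is pointing at.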

3. The RAG Band-Aid

Because models can't learn, we built an entire infrastructure of Vector DBs and RAG (Retrieval-Augmented Generation) to glue external data onto them.

It’s duct tape.

We are trying to fix a lack of intelligence with a search engine. We are building systems that are 90% scaffolding and 10% model, trying to force a static equation to act like a fluid thinker.
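
To make the "search engine" glue concrete, here is a minimal top-1 retrieval sketch in C. It assumes a tiny in-memory store with hand-picked, unit-length embeddings (real systems compute them with a separate embedding model), so the dot product equals cosine similarity; `Chunk`, `store`, `retrieve_top1`, and `build_rag_prompt` are hypothetical names. The model's weights are never touched: retrieved text is simply pasted into the prompt.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>
#include <string.h>

#define DIM 3

/* A stored text chunk with a precomputed embedding. The vectors are
 * hand-picked and unit-length (hypothetical); a real pipeline would get
 * them from a separate embedding model. */
typedef struct {
    const char *text;
    double embedding[DIM];
} Chunk;

static const Chunk store[] = {
    {"Dhrystone is a synthetic integer benchmark.", {1.0, 0.0, 0.0}},
    {"RAG pastes retrieved text into the prompt.", {0.0, 1.0, 0.0}},
    {"The context window is re-sent on every call.", {0.0, 0.0, 1.0}},
};
#define STORE_LEN (sizeof store / sizeof store[0])

/* Dot product; equals cosine similarity when both vectors are unit-length. */
static double dot(const double *a, const double *b) {
    double s = 0.0;
    for (int i = 0; i < DIM; i++) {
        s += a[i] * b[i];
    }
    return s;
}

/* Return the index of the chunk most similar to `query` (top-1 search). */
static size_t retrieve_top1(const Chunk *chunks, size_t count,
                            const double *query) {
    assert(count > 0);
    size_t best = 0;
    double best_score = dot(chunks[0].embedding, query);
    for (size_t i = 1; i < count; i++) {
        double score = dot(chunks[i].embedding, query);
        if (score > best_score) {
            best_score = score;
            best = i;
        }
    }
    return best;
}

/* Glue the retrieved text onto the user's question. The model only sees
 * a longer prompt. Returns snprintf's result (characters that would be
 * written, excluding the terminator). */
static int build_rag_prompt(char *out, size_t cap, const char *context,
                            const char *question) {
    return snprintf(out, cap, "Context: %s\nQuestion: %s", context, question);
}
```

Swapping the array for a vector database and the hand-picked vectors for real embeddings gives the usual RAG shape; the retrieval step itself stays an ordinary nearest-neighbor search.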

4. The Result?

We have built the world’s greatest improviser, but it has severe anterograde amnesia.

It can fake a conversation, but it cannot grow. It cannot compound knowledge.

True AGI requires Online Learning: the ability to update weights in real time without catastrophic forgetting.

We don't know how to do that yet. Not at scale. Not stably.

Until we solve the "Static Weight" problem, we aren't building a mind. We're just building a really fancy autocomplete.

Inference != Intelligence.

Eduardo Velasquez retweeted

holy sh*t. this is hands down the coolest website i have ever found in my life. it's a live feed of the freaking Hubble Telescope AND James Webb Space Telescope. and the resolution is honestly so incredible i didn't think it was real.

unbelievable.

spacetelescopelive.org

Eduardo Velasquez retweeted

@jaketapper Your struggle is not to see it…

@GamewithDave Mine starts with 196

Eduardo Velasquez retweeted

I finally got time to finish and publish a website that has been a long time coming. If you're into home automation, this might be something for you. homeautomationcookbook.com

@TheTNHoller Would shooting someone and not killing them be barely legal too? Their mental contortions to justify the unjustifiable are mind blowing. 🤦

@guardivelasquez @NeuralWave_ @trikcode Not by the OP. Come on man, do I need to spell out everything?

@NeuralWave_ @trikcode I came here to see the answer. No answer. I guess I should have known an account named wise/trikcode would be a troll.

Eduardo Velasquez retweeted

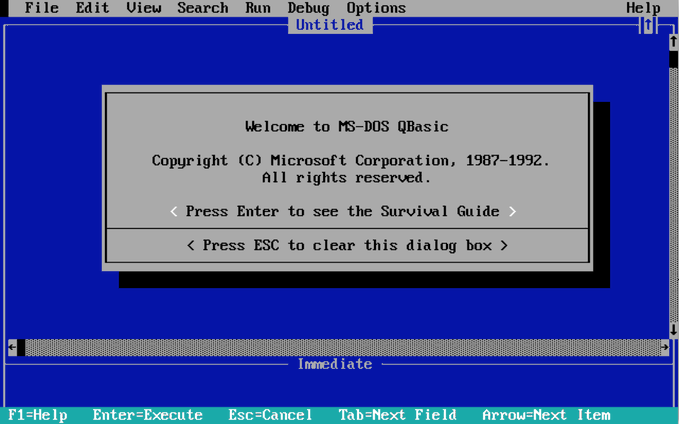

I restore old computers, and am always curious how their classic performance compares to modern PCs.

Are they a hundred times faster? A thousand? A million?

Here are the stats. I wrote a Dhrystone test in K&R C that runs unmodified on everything I own, from the PDP-11/34 up to my M2 Ultra Mac Pro.

FWIW, the Mac Pro is 200,000X as fast as the 11/34!

Code: github.com/davepl/pdpsrc/…
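
Assuming the test reports Dhrystones per second (the usual Dhrystone output), the comparison is a plain ratio of the two scores, and the common DMIPS normalization (dividing by 1757, the VAX-11/780 score) cancels out of it:

$$
\text{speedup} = \frac{D_{\text{Mac Pro}}}{D_{\text{PDP-11/34}}} = \frac{D_{\text{Mac Pro}} / 1757}{D_{\text{PDP-11/34}} / 1757} = \frac{\text{DMIPS}_{\text{Mac Pro}}}{\text{DMIPS}_{\text{PDP-11/34}}}
$$

Read this way, the 200,000X figure says the Mac Pro completes roughly 200,000 times as many Dhrystone iterations per second as the 11/34.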

@anton_fimin @Aaronontheweb Nah! Diagonal slices all the way! 😂