Pinned Tweet

hαndοfgσd🗻

2.5K posts

@handofg_d

Blockchain wanderer "𝗱𝗼 𝗻𝗼𝘁 𝗸𝗻𝗼𝘄 𝗮𝗻𝘆𝘁𝗵𝗶𝗻𝗴" @Fuji_Capital

1.618 Joined December 2019

2K Following 1.1K Followers

hαndοfgσd🗻 retweeted

Yesterday I analysed the Claude Code leak to find out why it hallucinates so badly. Thing is, the root cause isn't even Anthropic-specific - it's the same flaw breaking all multi-agent systems in production.

Actually, there is a fix, and the UAE government is already running it live.

Some background first. The math of agent systems is stupid simple - if your agent is 95% accurate per step... that's fine, right? Well, it sounds good until you chain ten steps and realise the compounding errors put you at about 60% accuracy in the end (0.95^10 ≈ 0.60, assuming each step fails independently). At a hundred steps, that's 0.6%. Might as well be zero tbh.
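To make the compounding concrete, here is a minimal Python sketch. It assumes each step succeeds independently with the same probability, which is a simplification, and `pipeline_success` is a name made up for this illustration.

```python
def pipeline_success(per_step_accuracy: float, steps: int) -> float:
    """Chance that every step in a chain succeeds, assuming independent errors."""
    return per_step_accuracy ** steps


for steps in (1, 10, 100):
    rate = pipeline_success(0.95, steps)
    print(f"{steps:>3} steps at 95% per step: {rate:.1%}")
# prints 95.0%, 59.9% and 0.6% for 1, 10 and 100 steps
```

Real pipelines can do better or worse than this, since errors are rarely fully independent and some steps can catch earlier mistakes.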

What's the solution? So far, the industry response has been "use a bigger, better, more expensive model".

One team came to us recently with exactly this problem. In their agent implementation, agent 3 hallucinated and fed wrong outputs to agent 4. That error compounded into something completely unusable by the time the pipeline was completed.

The team decided to fork out more $ for the most expensive model, using Opus 4.6 for all inference. Guess what... the accuracy went from 85% to 95% per step, the bill went up 30x, and the pipeline still collapsed because 95% compounded over a dozen or so steps is still a coin flip.
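A quick way to see where the "coin flip" point sits is to count how many chained steps it takes for end-to-end success to drop below 50%. This is a rough sketch under the same independence assumption; `steps_until_below` is a hypothetical helper, not something from the team's stack.

```python
def steps_until_below(per_step_accuracy: float, threshold: float = 0.5) -> int:
    """Smallest number of chained steps whose overall success rate is below threshold.

    Assumes 0 < per_step_accuracy < 1 and independent errors per step.
    """
    steps, success = 0, 1.0
    while success >= threshold:
        success *= per_step_accuracy
        steps += 1
    return steps


print(steps_until_below(0.85))  # 5
print(steps_until_below(0.95))  # 14
```

So under this simple model, going from 85% to 95% per step moves the coin-flip point from 5 steps to 14, which helps but does not save a long pipeline.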

Why is this happening? One thing you should understand is that the advanced "thinking" models with higher effort score >>identically<< to low-effort runs on hard benchmarks. They just burn more tokens getting there.

You're not paying for "reasoning" - in LLMs, there is no real reasoning. That's simply not how they work at the core. You're simply paying for a higher word count on a more verbose process. This isn't a controversial take, it's just how autoregressive models work. @ylecun would agree, I believe.

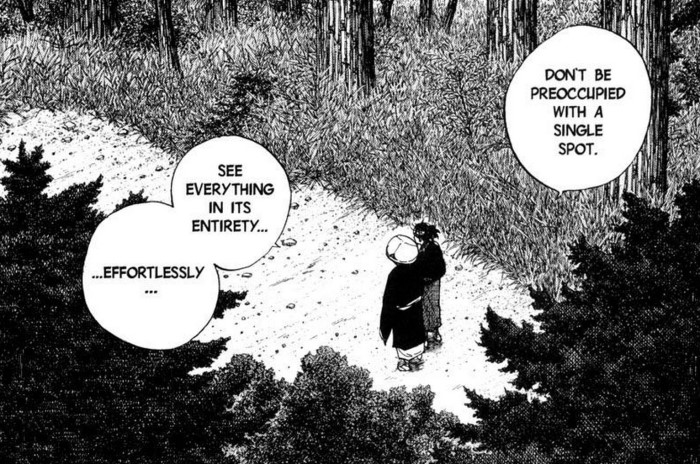

So, about two years ago one team looked at this and instead of making agents think harder, they decided to let them think like a machine does: with structured decision nodes, explicit transitions, and terminal states.

They invented a system where the agent cannot freestyle, cannot drift, and cannot invent states out of thin air. Within their platform, a strong blueprint is developed that gets followed by all agents in the workflow. Expensive models are used to draw the blueprint, cheaper ones can follow it with near 100% accuracy at scale.
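To illustrate the general idea of decision nodes, explicit transitions, and terminal states, here is a minimal Python sketch of a graph-constrained runner. It is NOT OpenServ's implementation: the `BLUEPRINT` contents, the state names, and the `choose_next` callback (a stand-in for a cheap model picking the next step) are all assumptions made up for this example.

```python
from typing import Callable

# Hypothetical blueprint: each node lists the only transitions it allows.
# Nodes with no outgoing transitions are terminal states.
BLUEPRINT: dict[str, set[str]] = {
    "classify": {"lookup", "escalate"},
    "lookup": {"answer", "escalate"},
    "answer": set(),
    "escalate": set(),
}


class InvalidTransition(Exception):
    """Raised when the agent proposes a move that is not in the blueprint."""


def run(start: str,
        choose_next: Callable[[str, set[str]], str],
        max_steps: int = 20) -> list[str]:
    """Walk the blueprint from `start` until a terminal state is reached."""
    state, path = start, [start]
    for _ in range(max_steps):
        allowed = BLUEPRINT[state]
        if not allowed:  # terminal state: stop here
            return path
        proposed = choose_next(state, allowed)
        if proposed not in allowed:  # the agent tried to invent a state or edge
            raise InvalidTransition(f"{state!r} -> {proposed!r} is not in the blueprint")
        state = proposed
        path.append(state)
    raise RuntimeError("no terminal state reached within max_steps")


# Usage: a trivial chooser that always takes the alphabetically first option.
print(run("classify", lambda state, allowed: sorted(allowed)[0]))
# ['classify', 'escalate']
```

The point of the design is that the follower only ever picks from a short list of allowed moves, so a bad choice is caught at the step where it happens instead of propagating downstream.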

The cost difference is NOT subtle: 74 to 122x cheaper than frontier models, with near-total reliability. We're talking nano-tier models on a structured graph beating GPT-class models that are just winging it. Benchmark links and arxiv paper in a comment below.

The team is @openservai. Their CTO has been building ML systems for 20+ years. Rest of the team came out of NVIDIA, Amazon AI, J.P. Morgan, TRON. The reasoning paper is in peer review at a top-1% AI journal right now.

The UAE government is running it in production through a tech partnership with Neol (not a pilot: its agent systems are already in production, with 10+ enterprises and multiple governments behind them).

Their architecture doesn't just solve the reasoning paradox. They built the full agent economy stack: shadow agents that audit every output against the graph before anyone sees it, a shared file system so agents stop playing telephone with each other's work, and an economic layer where agents discover, hire, and pay each other without a human scheduling the calls. And because machine economy and enterprise compliance require immutable audit trails, the execution layer is being built with full on-chain verifiability baked in.

You'll find the full technical breakdown of the OpenServ system, with pretty diagrams, pinned on my profile.

SERV Reasoning is in private beta right now. Soon, it'll be accessible through a public API, with six custom-trained models, from serv-nano to serv-ultra.

If your agents are collapsing in production and you're tired of paying frontier rates for a coin flip, DM me @iamfakeguru or follow @openservai.

hαndοfgσd🗻 retweeted

@cobie $KTA ex nano devs, backed/invested by exCEO of google @ericschmidt, 10mil tps [transactions not transfers], built in KYC compliance, anchor tech that connects FIs and blockchains

I don’t expect Keeta to ever see a new ATH from here. I never really understood the hype around it.

The whitepaper feels entirely AI generated and fails to reflect any real development or activity.

It lacks basic fundamentals you expect from a Layer 1:

No public code on GitHub, GitLab, etc.

No working or accessible testnet

No technical partnerships or independent audits

Mentions of a KeetaChain Layer without any concrete specs

The roadmap is ambitious and completely unverifiable:

“Mainnet Q4 2025 – Institutional node onboarding”

“Massive AI-agent integration at edge devices – 100M users targeted”

No external references, no confirmed partners, no completed milestones, no validation mechanisms.

The dates seem placed for effect, but there’s nothing real behind them.

People criticized me when I said at $8 that Solana would be the best investment of the next bull run, despite the massive developments that were clearly unfolding on Solana at the time.

They’ll probably criticize me again for what I’m saying now about Keeta. But I’m an investor, not a commentator.

I just follow real facts, data and numbers.
