BizAI

103 posts

A humanoid robot won a half-marathon in Beijing in 50 minutes and 26 seconds, finishing faster than Ugandan runner Jacob Kiplimo's world record. Read more: cnn.it/4dWXNC6

That was the case in December. Four months and thousands of work hours later, we have a great security concept: you can go all yolo or use a sandbox (Docker or OpenShell), and there are allow-lists and per-access exec allow/deny prompts. Hundreds of security researchers have pen-tested it.
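For readers unfamiliar with the terms, here is a minimal sketch of how an allow-list combined with a per-access exec allow/deny prompt could work. It is not the project's actual implementation: `ALLOW_LIST`, `DENY_LIST`, `check_exec`, and the `ask_user` callback are all hypothetical names chosen for illustration.

```python
import shlex
from typing import Callable

# Hypothetical policy sets; a real tool would load these from its own config.
ALLOW_LIST = {"ls", "cat", "git"}
DENY_LIST = {"rm", "sudo"}


def check_exec(command: str, ask_user: Callable[[str], bool]) -> bool:
    """Decide whether a shell command may run.

    Denied programs are always refused, allow-listed programs always pass,
    and anything else falls through to a per-access prompt (ask_user).
    """
    argv = shlex.split(command)
    if not argv:
        return False  # nothing to execute
    program = argv[0]
    if program in DENY_LIST:
        return False
    if program in ALLOW_LIST:
        return True
    # Unknown program: defer to the user for this single access.
    return ask_user(command)
```

In this sketch the deny-list is checked first, so a program that appears in both sets is refused. Checking only the program name is a simplification; a command such as `git` can still run other programs, which is one reason a sandbox is a useful extra layer.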

@jason_haugh @AlexFinn @garrytan Hermes is the way. Far less to maintain, and it is self-healing.

mlx-vlm v0.4.3 is here 🚀

Day-0 support:
- 🔥 Gemma 4 (vision, audio, MoE) by @GoogleDeepMind
- 🦅 Falcon-OCR + Falcon Perception by @TIIuae
- 🪨 Granite Vision 4.0 by @IBMResearch

New models:
- 🎯 SAM 3.1 with Object Multiplex by @facebook
- 🔍 RF-DETR detection & segmentation by @roboflow

Infra:
- ⚡ TurboQuant (KV cache compression)
- 🖥️ CUDA support for vision models (SAM and RF-DETR)

Get started today: `uv pip install -U mlx-vlm`

Leave us a star ⭐️ github.com/Blaizzy/mlx-vlm

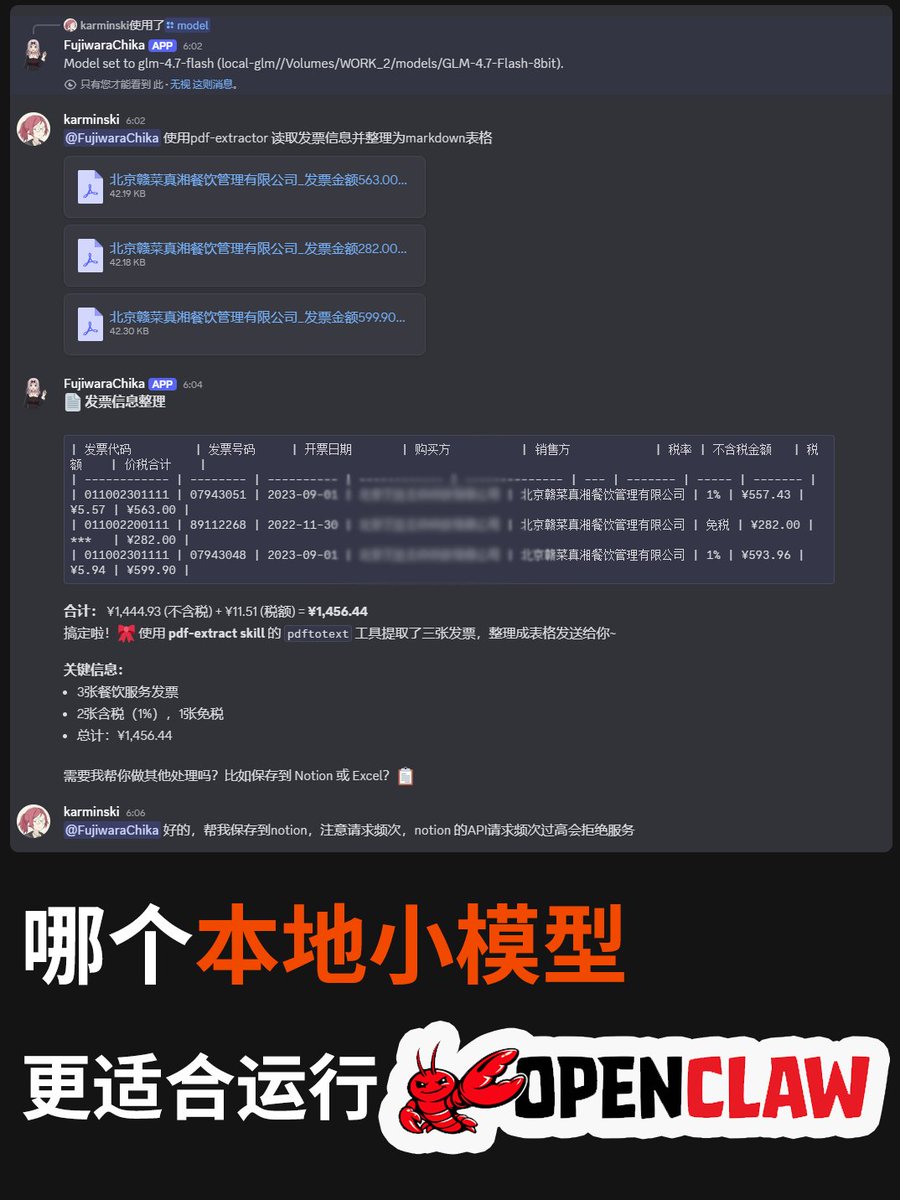

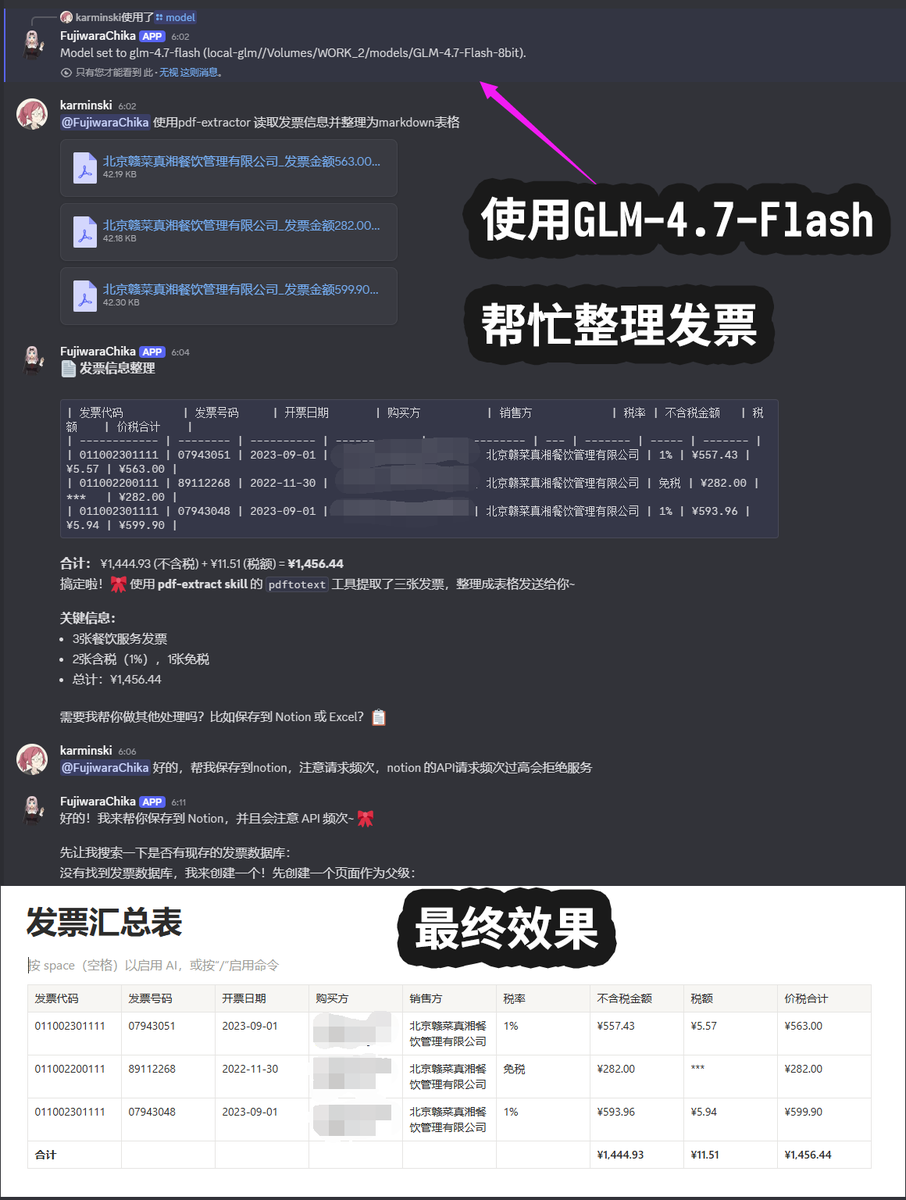

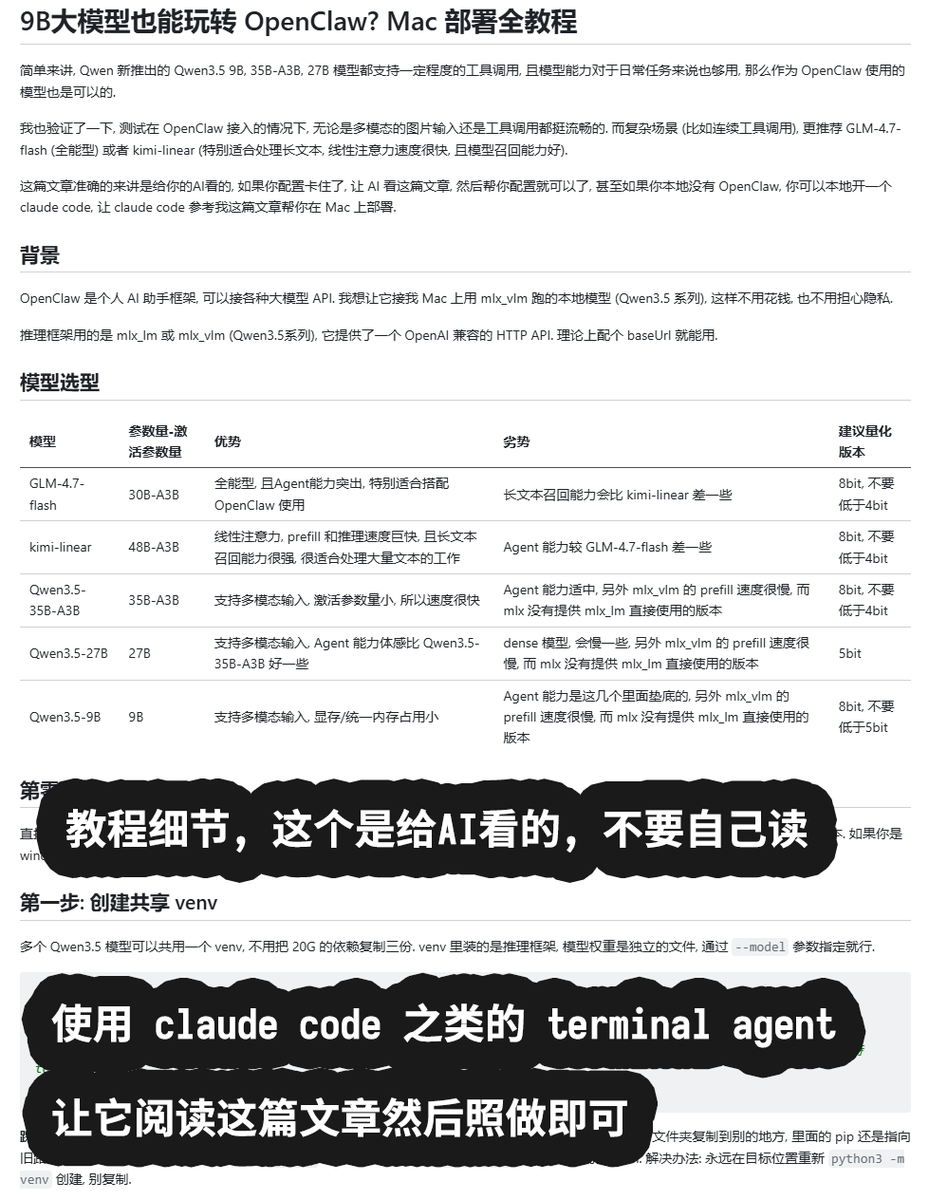

Introducing ClawWork 🚀: Transform your openclaw/nanobot from AI assistant into a money-earning AI coworker. Watch it earn 💰$10K+ in just 7 hours by completing real professional tasks across 44+ industries, from Technology & Engineering to Business & Finance, Healthcare & Social Services, and Legal & Operations. Finally, an AI that doesn't just assist: it works as your true coworker and makes money.

GitHub: github.com/HKUDS/ClawWork

ClawWork's Key Features:
- 🚀 AI Assistant → AI Coworker Evolution: Transforms AI assistants into true AI coworkers that complete real work tasks and create genuine economic value.
- 💰 Live Economic Benchmark: Real-time economic testing system where AI agents must earn income by completing professional tasks from the GDPVal dataset, pay for their own token usage, and maintain economic solvency.
- 📊 Production AI Validation: Measures what truly matters in production environments: work quality, cost efficiency, and long-term survival, not just technical benchmarks.
- 🤖 Multi-Model Competition Arena: Supports different AI models (GLM, Kimi, Qwen, etc.) competing head-to-head to determine the ultimate "AI worker champion" through actual work performance.

#clawwork #openclaw #nanobot #AIcoworker
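The "earn income, pay for tokens, stay solvent" loop described in the post can be pictured with a small ledger. This is a rough sketch under stated assumptions, not ClawWork's code: the `AgentLedger` class, its method names, the quality-scaled payout, and all prices are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AgentLedger:
    """Tracks one agent's balance in USD (hypothetical accounting model)."""

    balance: float

    def charge_tokens(self, tokens: int, usd_per_1k_tokens: float) -> None:
        # The agent pays for its own token usage.
        self.balance -= tokens / 1000 * usd_per_1k_tokens

    def credit_task(self, payment: float, quality: float) -> None:
        # Assumption: payout scales with a quality score clamped to [0, 1].
        self.balance += payment * max(0.0, min(1.0, quality))

    @property
    def solvent(self) -> bool:
        # The agent "survives" only while its balance stays positive.
        return self.balance > 0
```

An agent starting with a 10.0 balance that spends 2,000 tokens at 1.0 USD per 1K tokens and then completes a 50.0 USD task at quality 0.5 would end at 33.0 and remain solvent; an agent whose token costs outrun its task income would not.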