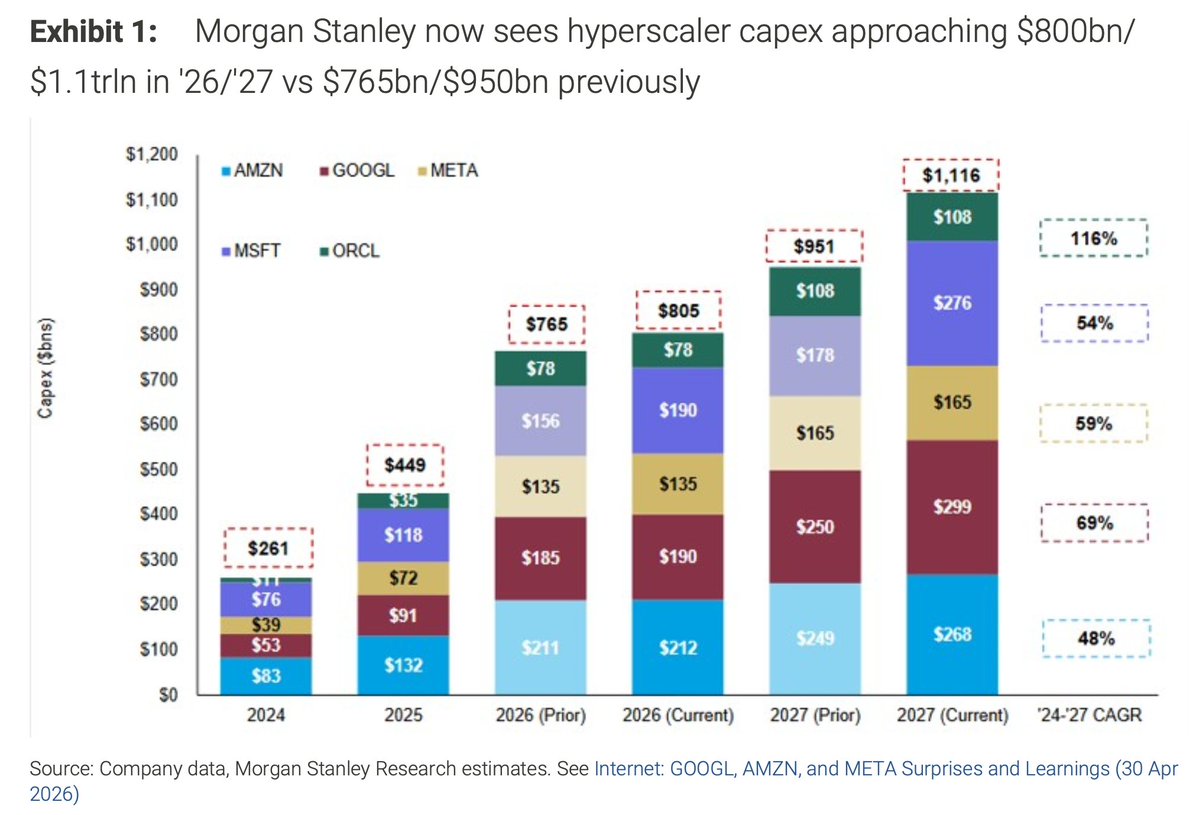

Supply volume is obviously a big factor, but the 11% utilization seems plausible given their demand. Grok just isn’t that popular compared to Gemini, ChatGPT/Codex, or Claude Code. And since most of the utilization comes from inference, GPU utilization is a rough proxy for overall demand (once you account for GPU supply somehow).
