huhhrsh


100k H100 cluster for the Whale, and we get open source AGI next year

The greatest innovation to come out of China was not paper, clocks, printing, banking, gunpowder, or even TikTok, DeepSeek, and the absolute army of drones, EVs, and robots. It was the government system that raised 1 billion people out of poverty and then proceeded to export their hard work and innovations globally.

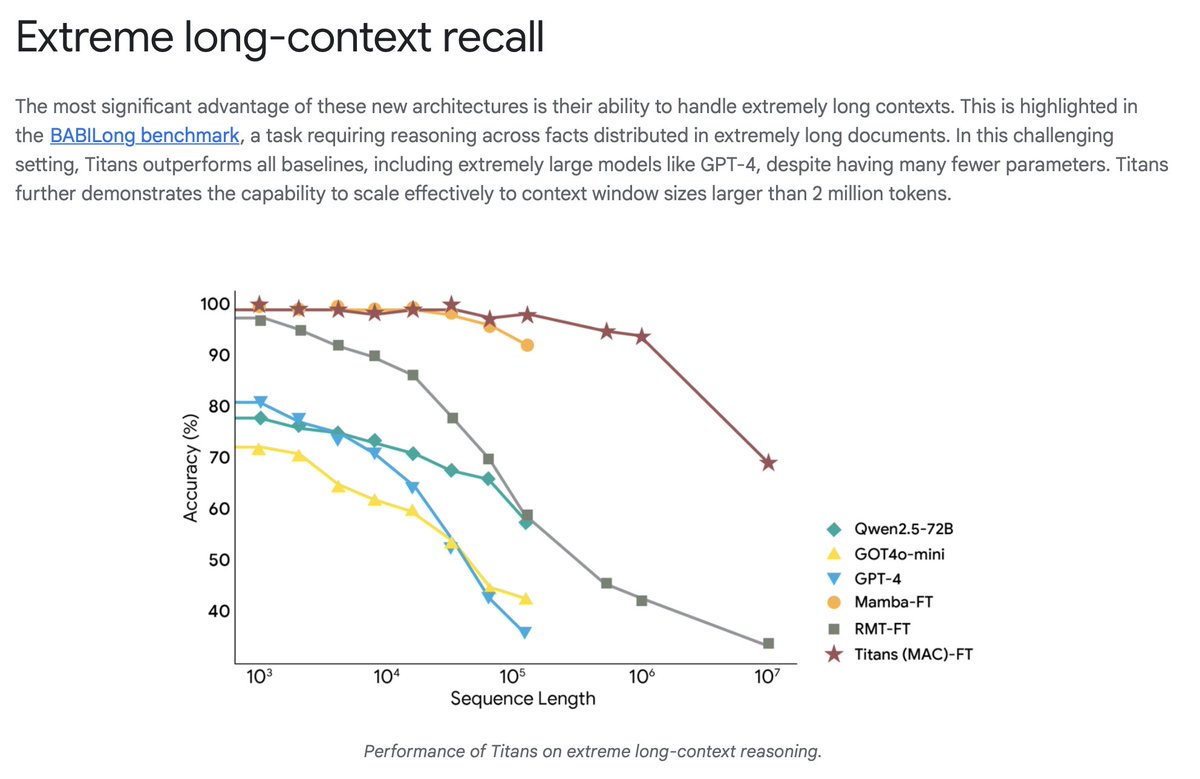

This morning at NeurIPS, Rich Sutton reminded us that we need continual learning to reach AGI. This afternoon, Ali Behrouz presented a Google poster paper, Nested Learning, which offers new ideas on the path to continual learning. I recorded the 40-minute talk as it might be useful for some researchers in the audience. For the rest of us, I subscribe to Andrej Karpathy's suspicion that it will take 5-10 papers like this to move us to AGI from where we are now, just like it took about 10 papers to move from 2012's AlexNet to ChatGPT. At the very end, I ask Ali how far along the road to continual learning this work gets us. Full paper link below, as well as a YouTube link. PS: sorry about the first 2 minutes of bad audio, since there were 2 idiots standing beside me having a conversation right in front of the presenter in a rather packed poster session. Honestly, tamp down your egos guys and show some common courtesy!

For the past year, my co-founder and I have been trying to build smart glasses like the Meta Ray-Ban, aimed at assisting with visual inspection in large-scale manufacturing to reduce takt time and make things more efficient. Back in July, @RealityLabs published their research "A generic non-invasive neuromotor interface for human-computer interaction", which was followed by announcements of Meta Ray-Ban Display and Meta Neural Bands. For the last few weeks we have been trying to reproduce the research with whatever resources we could gather, from DIY muscle sensor kits to researching VR headset controllers. We finally got some clarity and decided to make our own nerve bands from first principles. As an embedded systems engineer, I see it as a Bluetooth input device that connects to your computer, laptop, or smartphone and takes input from EMG electrodes and an IMU sensor. For the POC prototype I'm using an ESP32, with an RTOS to keep all the tasks deterministic and low latency. At the moment, I'm figuring out how to get a clean EMG signal from the sensor and visualize it, so that I can use that data plus the IMU data to build a machine learning model that better predicts control gestures. Will be sharing regular development updates here. Curious to know what you guys think.
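For anyone wondering what "getting a clean EMG signal" might look like on the host side, here is a minimal sketch of one common first pass: remove the DC offset, full-wave rectify, then smooth with a moving average to get an envelope you can plot. This is not our actual firmware or pipeline. The function names, the 50-sample window, and the assumption that samples arrive as raw ADC counts are all hypothetical choices for illustration.

```python
from collections import deque
from typing import List


def remove_dc_offset(samples: List[float]) -> List[float]:
    """Subtract the mean so the signal is centered on zero."""
    if not samples:
        return []
    mean = sum(samples) / len(samples)
    return [s - mean for s in samples]


def moving_average(samples: List[float], window: int) -> List[float]:
    """Causal moving average; early outputs use however many samples exist."""
    if window < 1:
        raise ValueError("window must be >= 1")
    buf = deque(maxlen=window)
    total = 0.0
    out = []
    for s in samples:
        if len(buf) == window:
            total -= buf[0]  # this sample is about to be evicted by append
        buf.append(s)
        total += s
        out.append(total / len(buf))
    return out


def emg_envelope(raw: List[float], window: int = 50) -> List[float]:
    """Hypothetical helper: DC removal -> rectification -> smoothing."""
    centered = remove_dc_offset(raw)
    rectified = [abs(s) for s in centered]  # full-wave rectification
    return moving_average(rectified, window)


if __name__ == "__main__":
    # Made-up ADC counts, only to show the call shape.
    print(emg_envelope([510, 514, 509, 515, 511, 513], window=3))
```

The envelope is usually easier to eyeball in a plot than the raw waveform, and it is one plausible input feature to combine with IMU data later. A real setup would likely also need mains-hum filtering, which this sketch leaves out.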

New model alert in Transformers: EoMT! EoMT greatly simplifies the design of ViTs for image segmentation 🙌 Unlike Mask2Former and OneFormer which add complex modules like an adapter, pixel decoder and Transformer decoder on top, EoMT is just a ViT with a set of query tokens ✅