Bhaktavaschal Samal

@bspectacledGOAT

As long as I offer an abundance of solutions in artificial intelligence, I’m alive; a lack of solutions will foreshadow my extinction.

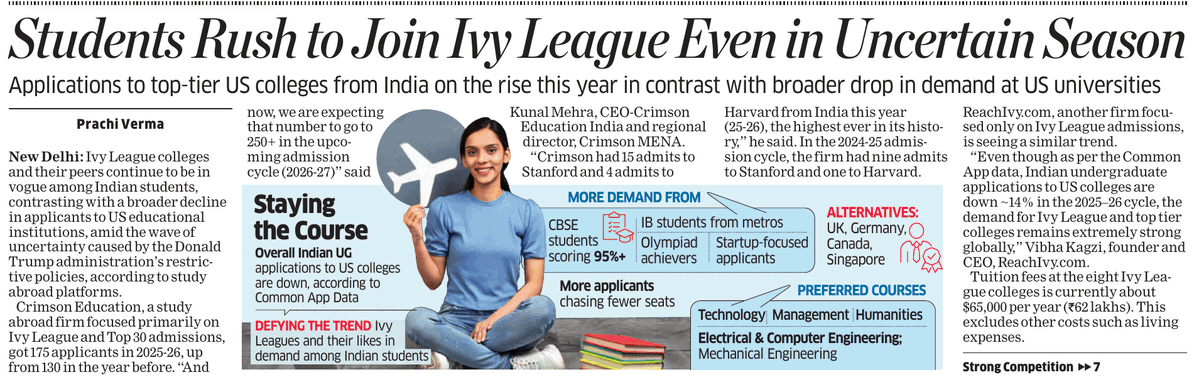

Narayana Murthy trashes AI as hype, asks IT leaders to be less greedy: ndtv.com/india-news/nar…

New Google paper: A forecast needs context, not just history. Some patterns are caused by events, not time.

Nexus reframes forecasting as a reasoning problem, where events and numbers have to explain each other. It argues that forecasting improves when models read the world around the numbers, not just the numbers themselves. In the Zillow tests, one Claude-based version cut average MAPE (mean absolute percentage error) by 86.6% versus direct chain-of-thought prompting.

That matters because most time series models are fluent in pattern but mute about cause. A housing inventory curve can reflect seasonality, mortgage pressure, migration, layoffs, and local supply, while a stock price can be bent by earnings, regulation, hype, and fear.

Nexus separates those jobs instead of asking one prompt to do everything. One agent turns messy historical text into a clean event timeline, one reads the broad regime, another tracks local shocks, and a synthesizer reconciles them with calibration from past errors. The interesting result is not merely that context helps, but that structure helps the language model use context without losing the time series.

The evidence is still narrow: Zillow counts, seven equities, post-cutoff data, and single-run evaluations, so this is not a universal law of forecasting. But the direction is clear: future forecasters will not only extrapolate curves; they will argue about what made the curve move.

----
Paper Link: arxiv.org/abs/2605.14389
Paper Title: "Nexus: An Agentic Framework for Time Series Forecasting"
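
The 86.6% headline number is a cut in average MAPE (mean absolute percentage error). As a minimal Python sketch of what that means, the block below computes MAPE and the relative reduction between two MAPE values. It assumes the 86.6% is a relative cut (the new MAPE is about 13.4% of the baseline) rather than a drop in percentage points; the post does not say which. The toy series and the 10% baseline are made-up illustration values, not numbers from the paper, and the function names are hypothetical.

```python
def mape(actual: list[float], forecast: list[float]) -> float:
    """Mean absolute percentage error, returned in percent."""
    if not actual or len(actual) != len(forecast):
        raise ValueError("need two non-empty sequences of equal length")
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    # Average of |actual - forecast| / |actual| over all points.
    errors = (abs((a - f) / a) for a, f in zip(actual, forecast))
    return 100.0 * sum(errors) / len(actual)


def relative_reduction(baseline: float, improved: float) -> float:
    """Percent by which `improved` lowers `baseline`.

    Assumption: "cut MAPE by X%" means a relative cut, not percentage points.
    """
    return 100.0 * (baseline - improved) / baseline


if __name__ == "__main__":
    # Hypothetical toy series, not Zillow data.
    actual = [100.0, 200.0, 400.0]
    forecast = [120.0, 150.0, 500.0]
    print(f"toy MAPE: {mape(actual, forecast):.2f}%")  # 23.33%

    # An 86.6% relative cut from a hypothetical 10% baseline leaves 1.34%.
    print(f"reduction: {relative_reduction(10.0, 1.34):.1f}%")  # 86.6%
```

MAPE is scale-free, which makes it convenient for comparing forecasts across series of different magnitudes, but it breaks down when actual values are zero or near zero.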

MBBS from AIIMS Delhi; completed the IITM online degree during college, then cleared the GATE exam with AIR-1 and did an M.Tech at IISc Bangalore. Now works as a director at a US-based medical informatics company.

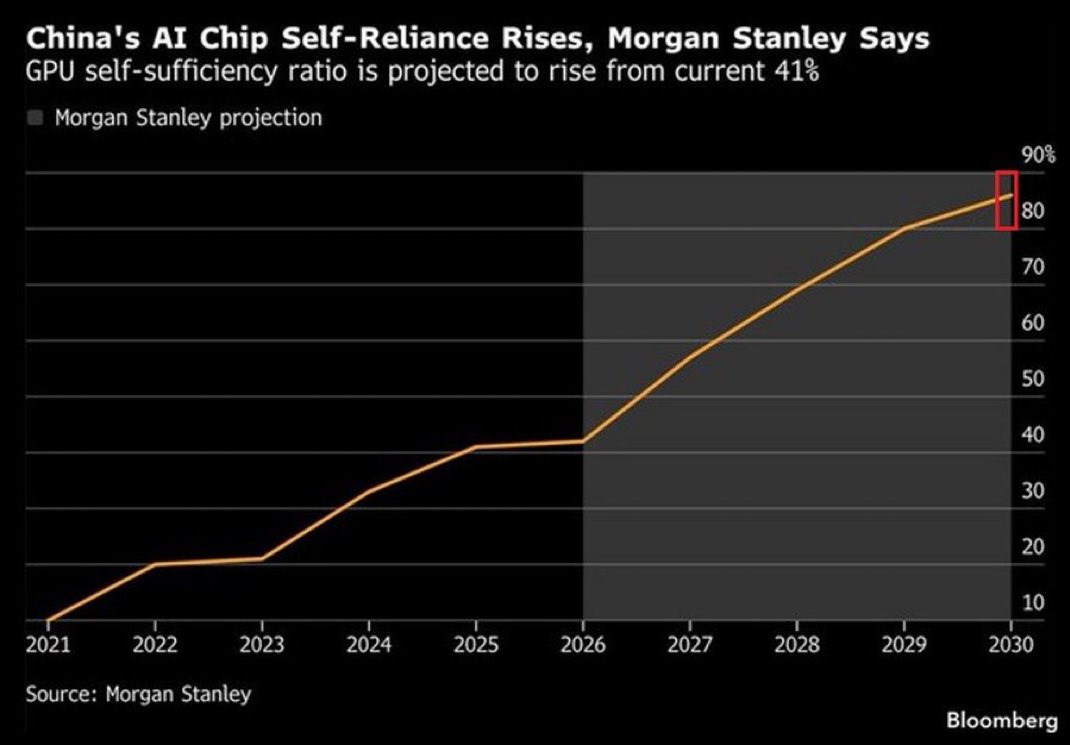

China is reducing its reliance on foreign AI chips: China's AI chip self-sufficiency ratio has risen to a record 41%. This measures the proportion of domestic AI chip demand met by locally produced chips rather than imported ones. This ratio has QUADRUPLED over the last 5 years. The AI chip self-sufficiency ratio is now projected to more than DOUBLE to ~85% by 2030, according to Morgan Stanley. In other words, China could meet roughly 85% of its own AI chip demand domestically within 5 years. China's AI chip independence is accelerating.
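
The ratios above can be cross-checked with a little arithmetic. This Python sketch takes only the post's figures (41% today, a fourfold rise over 5 years, ~85% projected by 2030) and derives what they imply. Treating both horizons as exactly 5 years and growth as compounding annually are assumptions for illustration; the derived values are not Morgan Stanley numbers, and the helper names are hypothetical.

```python
def implied_start(current: float, multiple: float) -> float:
    """Starting ratio implied by `current` after growing by `multiple`."""
    return current / multiple


def annual_growth(start: float, end: float, years: float) -> float:
    """Compound annual growth rate needed to go from `start` to `end`."""
    return (end / start) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    current = 0.41     # self-sufficiency ratio cited in the post
    projected = 0.85   # ~2030 projection cited in the post
    years = 5          # assumed horizon for both the past and the projection

    five_years_ago = implied_start(current, 4.0)  # "QUADRUPLED"
    print(f"implied ratio five years ago: {five_years_ago:.1%}")
    print(f"projected multiple: {projected / current:.2f}x")
    # Share of demand still met by imports = 1 - self-sufficiency ratio.
    print(f"import share now vs. projected: {1 - current:.0%} -> {1 - projected:.0%}")
    print(f"past growth: {annual_growth(five_years_ago, current, years):.1%}/yr")
    print(f"required growth: {annual_growth(current, projected, years):.1%}/yr")
```

With these inputs the implied ratio five years ago is about 10%, the projected multiple is about 2.07x, imports would fall from 59% to about 15% of demand, and the required growth is roughly 16% a year, about half the roughly 32% a year implied by the past quadrupling.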

continual learning is coming in 2026. i'd bet anything on it.

it won't necessarily be “just update the weights after every interaction.” it’ll be layered:
- context/memory adapts 'fast'
- weights adapt 'slow'
- the system preserves plasticity instead of overwriting itself

"Learning, fast and slow" is a signal in that direction. and if academia is publishing papers on it, you best believe the AI labs are on top of it too. it will be a 1000x more important breakthrough than reasoning. it's honestly terrifyingly awesome.
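
The fast/slow layering can be illustrated with a deliberately tiny Python toy, assuming nothing about how the labs or the "Learning, fast and slow" paper actually implement it. A single online estimator splits its prediction into a `fast` part (large step size, decays back toward zero, standing in for context/memory) and a `slow` part (small step size, standing in for weights). The class name, both components, and all three hyperparameters are hypothetical. After a long stable stretch, a single outlier moves `fast` about ten times as much as `slow`, and the decay then lets `fast` fade instead of permanently overwriting what `slow` has absorbed.

```python
from dataclasses import dataclass


@dataclass
class FastSlowEstimator:
    """Toy online estimator whose prediction is slow + fast (hypothetical)."""
    lr_fast: float = 0.5   # large step: reacts quickly to new observations
    lr_slow: float = 0.05  # small step: consolidates durable knowledge
    decay: float = 0.9     # share of the fast state kept after each step
    fast: float = 0.0
    slow: float = 0.0

    def predict(self) -> float:
        return self.slow + self.fast

    def observe(self, y: float) -> float:
        """Take one squared-error gradient step on both components."""
        err = self.predict() - y  # gradient of 0.5 * err**2 w.r.t. each part
        # Fast part: big step, then shrinks toward 0 so shocks are transient.
        self.fast = self.decay * self.fast - self.lr_fast * err
        # Slow part: small step, so one observation barely moves it.
        self.slow -= self.lr_slow * err
        return err


if __name__ == "__main__":
    model = FastSlowEstimator()
    for _ in range(2000):            # long, stable regime around 2.0
        model.observe(2.0)
    print(f"after stable regime: {model.predict():.3f}")

    fast_before, slow_before = model.fast, model.slow
    model.observe(10.0)              # a single shock
    print(f"fast moved {model.fast - fast_before:+.2f}, "
          f"slow moved {model.slow - slow_before:+.2f}")
```

This is only one crude way to split plasticity from stability; it is not meant to model how production systems would do it.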

Netherlands: PM Modi says this is a decade of crisis, expressing concern over the energy crisis. He warns that the gains of the last few decades could be wiped out if the situation doesn't improve.