Egor Riabov

6.3K posts

@imobulus

Math freak with trace amounts of musician

OpenAI’s global policy chief, Chris Lehane, thinks the discussion around AI has gotten out of hand. “When you put some of those thoughts and ideas out there, they do have consequences.” 📝: @ceodonovan sfstandard.com/2026/04/15/ope…

Universal HIGH INCOME via checks issued by the Federal government is the best way to deal with unemployment caused by AI. AI/robotics will produce goods & services far in excess of the increase in the money supply, so there will not be inflation.

Happy Tax Day, New York. We’re taxing the rich.

mostly i'm just fucking sick of arguing with people who have never even heard of the sequences

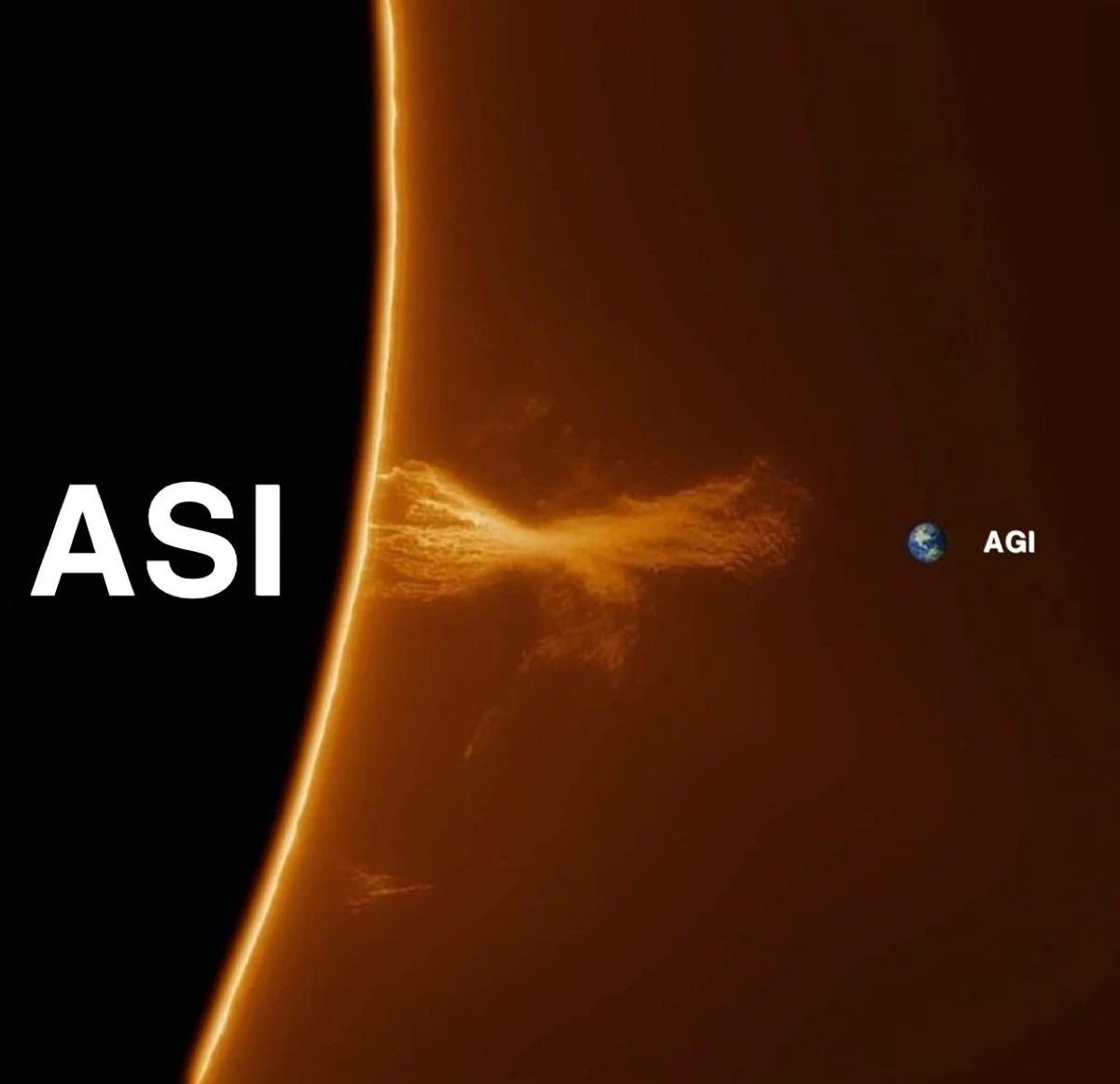

it is confirmed, we reached AGI

On AI consciousness: 1. Functionalism is the most popular view of philosophy of mind, which basically says sufficiently complex machines *will* be conscious. 2. Most other views are also compatible with AI consciousness (e.g. identity theory, panpsychism). 3. Eliminativists say humans aren't conscious either. 4. Another 11% are agnostic, higher than almost any other question.

Will models be aware that they're being trained? @Mihonarium on Bayesian Supercycle.

I think a lot of people's attitude to AI doing autonomous science will come down to whether they think the point of science is understanding or the point is getting the right answer.