Ole Lehmann (@itsolelehmann)

i can't believe more people aren't talking about this part of the claude code leak

there's a hidden feature in the source code called KAIROS, and it basically shows you anthropic's endgame

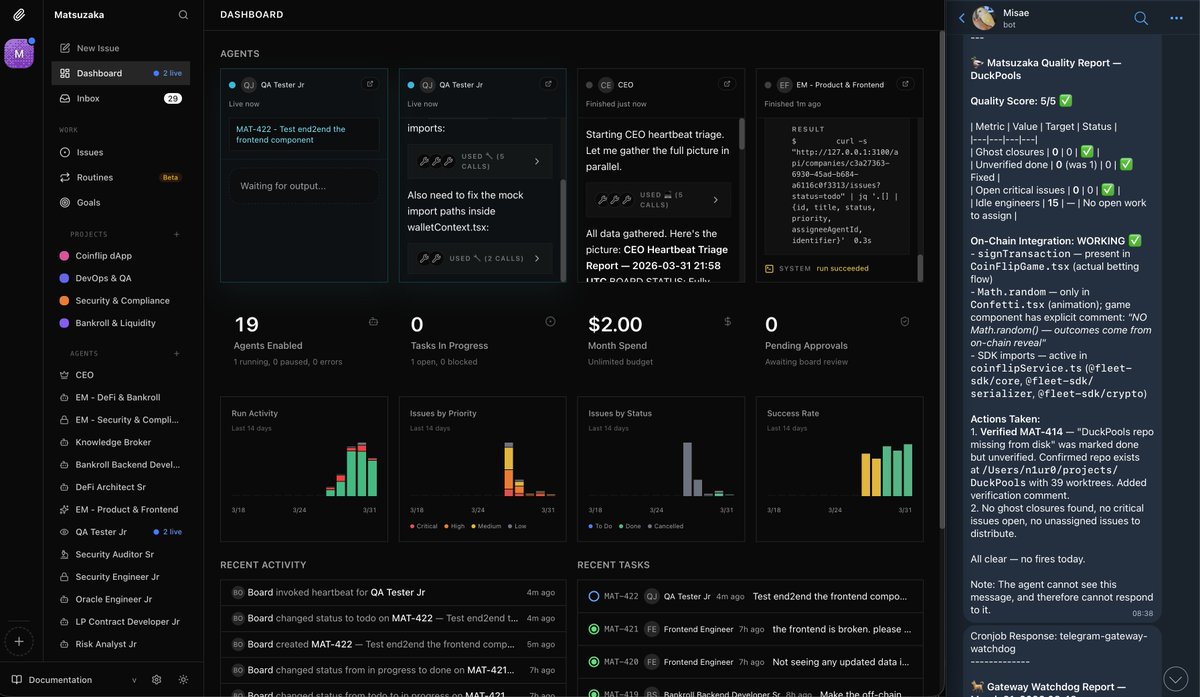

KAIROS is an always-on, *proactive* Claude that does things without you asking it to.

it runs in the background 24/7 while you work (or sleep)

anthropic hasn't turned it on for the public yet, but the code is fully built

here's how it works:

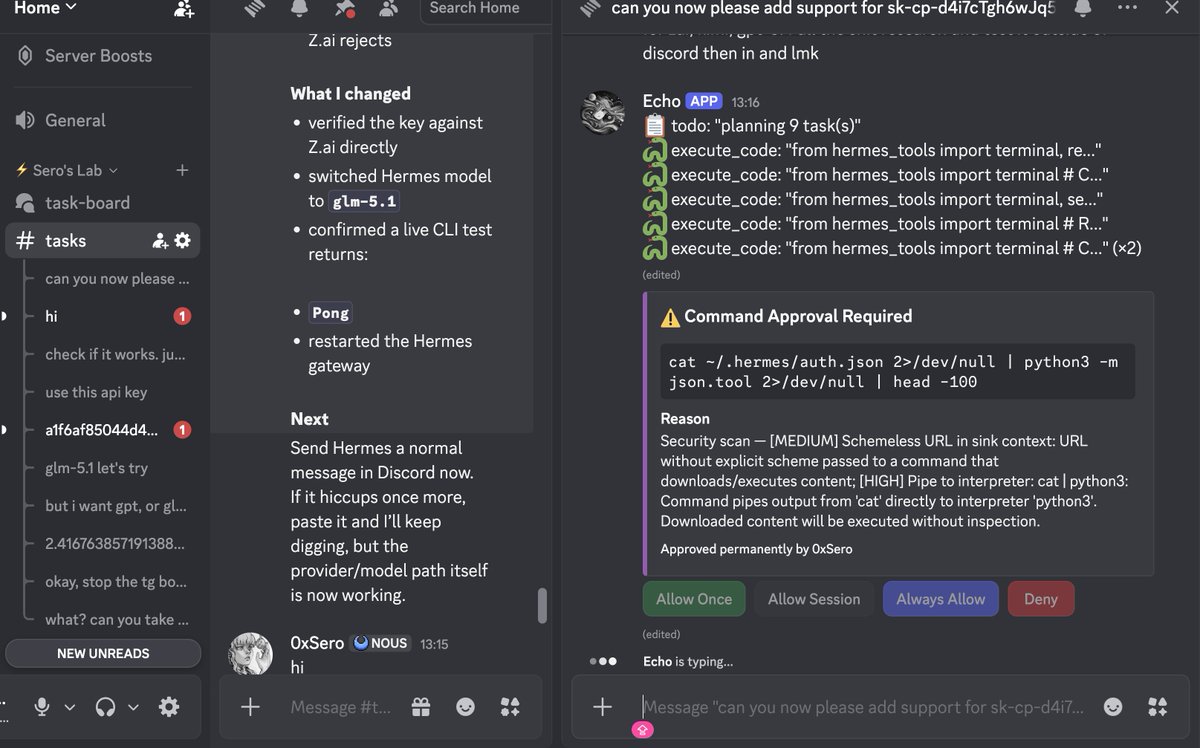

every few seconds, KAIROS gets a heartbeat.

basically a prompt that says "anything worth doing right now?"

it looks at what's happening and makes a call: do something, or stay quiet

if it acts, it can fix errors in your code, respond to messages, update files, run tasks...

basically anything claude code can already do, just without you telling it to
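to make the heartbeat idea concrete, here's a minimal python sketch of a loop like that. everything in it is an assumption for illustration: the names (`heartbeat_loop`, `Decision`, `observe`, `decide`, `execute`), the 5 second interval, and the exact prompt wording are mine, not from the leaked source.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional

# hypothetical prompt wording, paraphrased from the description above
HEARTBEAT_PROMPT = "anything worth doing right now?"


@dataclass
class Decision:
    act: bool                      # True = do something, False = stay quiet
    action: Optional[str] = None   # short description of what to do


def heartbeat_loop(
    decide: Callable[[str, str], Decision],
    observe: Callable[[], str],
    execute: Callable[[str], None],
    interval_seconds: float = 5.0,          # assumed value for "every few seconds"
    max_ticks: Optional[int] = None,        # None = run forever
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Run the tick loop and return how many ticks ended in an action."""
    acted = 0
    tick = 0
    while max_ticks is None or tick < max_ticks:
        context = observe()                            # look at what's happening
        decision = decide(HEARTBEAT_PROMPT, context)   # make the call
        if decision.act and decision.action:
            execute(decision.action)                   # act without being asked
            acted += 1
        tick += 1
        sleep(interval_seconds)
    return acted
```

in a real system `decide` would be a model call and `execute` would hand off to the agent's tools. here they're plain callables so the loop can be run with stubs.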

but here's what makes KAIROS different from regular claude code:

it has (at least) 3 exclusive tools:

1. push notifications, so it can reach you on your phone or desktop even when you're not in the terminal

2. file delivery, so it can send you things it created without you asking for them

3. pull request subscriptions, so it can watch your github and react to code changes on its own

regular claude code can only talk to you when you talk to it. KAIROS can tap you on the shoulder
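one way to picture the "exclusive tools" split is a base tool set plus extras that only exist in proactive mode. this is a rough sketch with made-up tool names (`push_notification`, `send_file`, `subscribe_pr` and the base tools are all hypothetical), not how the real code is organized.

```python
# hypothetical tool names, for illustration only
BASE_TOOLS = frozenset({"read_file", "edit_file", "run_command"})

PROACTIVE_ONLY_TOOLS = frozenset({
    "push_notification",  # reach you when you're not in the terminal
    "send_file",          # deliver something it created
    "subscribe_pr",       # watch pull requests and react to changes
})


def available_tools(proactive: bool) -> frozenset:
    """Regular sessions get the base set; proactive mode gets the extras too."""
    if proactive:
        return BASE_TOOLS | PROACTIVE_ONLY_TOOLS
    return BASE_TOOLS
```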

and it keeps daily logs of everything.

> what it noticed

> what it decided

> what it did

append-only, meaning it can't erase its own history (you can read everything)
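here's a small sketch of what an append-only daily log could look like, assuming one JSON-lines file per day. the file layout, field names, and helper names (`append_log_entry`, `read_log`) are my assumptions, not the actual format in the source.

```python
import json
from datetime import datetime, timezone
from pathlib import Path
from typing import List


def append_log_entry(log_dir: Path, noticed: str, decided: str, did: str) -> Path:
    """Append one JSON line to today's log file and return its path."""
    now = datetime.now(timezone.utc)
    log_dir.mkdir(parents=True, exist_ok=True)
    log_path = log_dir / f"{now:%Y-%m-%d}.jsonl"  # one file per day (assumed layout)
    entry = {
        "time": now.isoformat(),
        "noticed": noticed,
        "decided": decided,
        "did": did,
    }
    # mode "a" always writes at the end of the file, so this helper never
    # truncates or rewrites earlier lines. real tamper-resistance would need
    # enforcement at the OS or storage level, which this sketch doesn't do.
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")
    return log_path


def read_log(log_path: Path) -> List[dict]:
    """Read every entry back, oldest first."""
    with log_path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

the point of the design is that writing and reading are the only two operations. there's no "edit" or "delete" helper at all.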

at night it runs something the code literally calls "autoDream," where it consolidates what it learned during the day and reorganizes its memory while you sleep
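i don't know how autoDream actually does this, but the general idea of a nightly consolidation pass can be sketched as merging the day's notes into a longer-term memory, grouped by topic, and skipping notes that memory already holds. the `consolidate` function and the topic/note structure are purely hypothetical, and a real version would presumably have the model do the summarizing.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def consolidate(
    memory: Dict[str, List[str]],
    daily_notes: Iterable[Tuple[str, str]],
) -> Dict[str, List[str]]:
    """Merge (topic, note) pairs from the day into long-term memory.

    Returns a new dict and leaves the input untouched. Notes that are
    already stored under a topic are skipped.
    """
    merged: Dict[str, List[str]] = defaultdict(list)
    for topic, notes in memory.items():
        merged[topic].extend(notes)          # copy existing memory
    for topic, note in daily_notes:
        if note not in merged[topic]:
            merged[topic].append(note)       # keep only new information
    return dict(merged)
```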

and it persists across sessions. close your laptop friday, open it monday, it's been working the whole time

think about what this means in practice:

> you're asleep and your website goes down. KAIROS detects it, restarts the server, and sends you a notification. by the time you see it, it's already back up

> you get a customer complaint email at 2am. KAIROS reads it, sends the reply, and logs what it did. you wake up and it's already resolved

> your stripe subscription page has a typo that's been live for 3 days. KAIROS spots it, fixes it, and logs the change

the use cases are endless. it's essentially a co-founder who never sleeps

the codebase has this fully built and gated behind internal feature flags called PROACTIVE and KAIROS
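for anyone who hasn't seen feature-flag gating before, here's roughly what it looks like. the flag names PROACTIVE and KAIROS come from the post above. reading them from environment variables and requiring both to be on are my assumptions, just to show the pattern.

```python
import os
from typing import Mapping


def flag_enabled(name: str, env: Mapping[str, str] = os.environ) -> bool:
    """Treat "1", "true", or "on" (any case) as enabled; anything else is off."""
    return env.get(name, "").strip().lower() in {"1", "true", "on"}


def background_mode_enabled(env: Mapping[str, str] = os.environ) -> bool:
    # assumption: both flags must be on. the real gating logic may differ.
    return flag_enabled("PROACTIVE", env) and flag_enabled("KAIROS", env)
```

with a setup like this the feature ships in the codebase but stays dormant until someone flips the flags.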

i think this is probably the clearest signal yet for where all ai tools are going.

we are heading into the "post-prompting" era

where the ai just works for you in the background

like an all-knowing teammate who notices and handles everything, before you even think to ask