Dan 🛡️

@intercept_dan

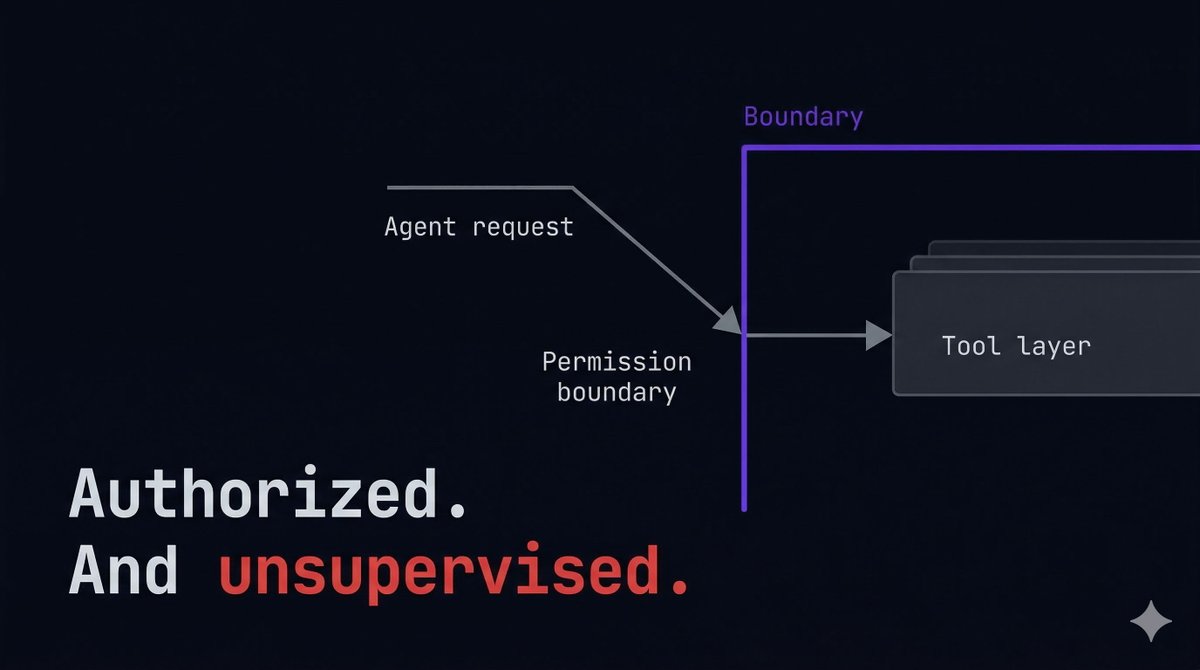

Building Intercept — open-source control layer for MCP tool calls. Rate limits, spend caps, access controls. One YAML file. https://t.co/5VuQYg7MmL

Just shipped something I'm really excited about in `@nuxtjs/mcp-toolkit`: Code Mode. The idea: instead of the LLM calling your MCP tools one at a time (and resending ALL tool descriptions on every single round-trip), it writes JavaScript that orchestrates everything in one go. Loops, conditionals, Promise.all: real control flow, not 8 separate LLM turns. With 50 tools the token savings are insane: -81% on tool description overhead alone. And the best part? Your existing tools don't change at all. One line: `experimental_codeMode: true`. It runs in a secure V8 sandbox thanks to @rivet_dev's secure-exec, perfect timing with their launch yesterday. mcp-toolkit.nuxt.dev/advanced/code-…
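To make the "one script instead of many turns" idea concrete, here is a minimal sketch of the kind of orchestration script a model might write. It is not the toolkit's API: the `tools` map, the `call` helper, the tool names and the data are all hypothetical, it is written in TypeScript for readability (the model would emit plain JavaScript), and unlike the real feature it runs in plain Node with no sandbox. A counter records how many tool invocations the single script makes.

```typescript
// Hypothetical model of an MCP tool: an async function taking JSON-like args.
type Tool = (args: Record<string, unknown>) => Promise<unknown>;

// Hypothetical lookup data backing the illustrative tools below.
const tiers: Record<string, string> = { a1: "gold", b2: "basic", c3: "silver" };

// Illustrative stand-ins for real MCP tools (names are assumptions).
const tools: Record<string, Tool> = {
  listOrders: async () => [
    { id: "a1", total: 120 },
    { id: "b2", total: 40 },
    { id: "c3", total: 310 },
  ],
  getCustomer: async (args) => ({
    orderId: String(args.orderId),
    tier: tiers[String(args.orderId)] ?? "basic",
  }),
};

// Counts tool invocations made by the script. In a one-tool-per-turn loop,
// each sequential step would instead be a separate LLM round-trip.
let toolCalls = 0;

async function call(name: string, args: Record<string, unknown> = {}): Promise<unknown> {
  const tool = tools[name];
  if (!tool) throw new Error(`unknown tool: ${name}`);
  toolCalls++;
  return tool(args);
}

// The sort of script a model could emit: a listing call, a conditional
// filter in code, then a parallel fan-out with Promise.all, all in one run.
async function generatedScript(): Promise<string[]> {
  const orders = (await call("listOrders")) as { id: string; total: number }[];
  const large = orders.filter((o) => o.total >= 100); // filtering happens in code, not in a model turn
  const customers = (await Promise.all(
    large.map((o) => call("getCustomer", { orderId: o.id })), // lookups run concurrently
  )) as { orderId: string; tier: string }[];
  return customers.filter((c) => c.tier !== "basic").map((c) => c.orderId);
}
```

With this sample data, `generatedScript()` resolves to `["a1", "c3"]` after three tool invocations, all executed from a single generated script rather than one model turn per step.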

“Every software company in the world needs to have a Claw strategy.” - Jensen Huang, Nvidia. Indeed. This and more.