Hebb Rule is Enough for AGI/A Creative I - Jayan


@isolvedagi2

creation = subjective higher order novelty maximization = obj function of all life/intelligence/AGI. A Hebbian SNN reservoir does this by default. see blog

India made a movie trying to aura farm their war with Pakistan and I’m sorry but I can’t stop laughing 🤣

Daily reminder the average Indian man has less grip strength than the average Brazilian woman.

Madhavan became famous as Farhan in Three Idiots. Ranveer Singh became famous as Murad in Gully Boy. Look at them now.

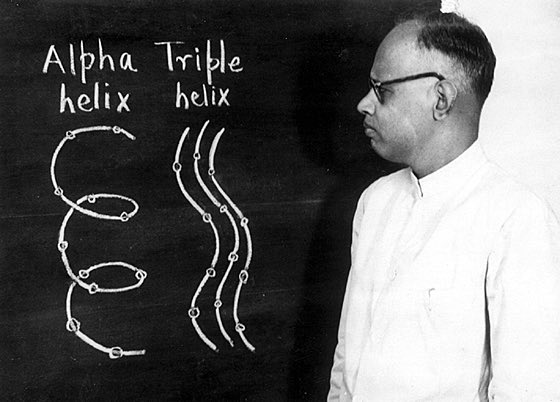

Neuroscience has become increasingly concerned with prediction, and machine learning with causal explanation, with each field adopting methods from the other, writes @gershbrain. Will this bring us closer to understanding neural systems? thetransmitter.org/the-big-pictur…

Who is this illiterate now? 1. India had fully developed Calculus at least 250 years before Newton in the Kerala school, starting with Madhava. The development started much before. 2. India had developed Algebra (Bij Ganit) almost 2000 years before Gauss. 3. It was India whose Trigonometry the West learnt & Regiomontanus copied our Sanskrit textbooks which helped Euler even devise a trigonometric formula centuries later. I can keep on giving you fact checks. But please move ur lazy ass and study yourself instead of asking these stupid embarrassing qs. Ur lack of education isn't the fault of Indians.

Former OpenAI researcher Scott Aaronson: AI superintelligence may render humans as obsolete as baboons in zoos. It is a potential successor species. He says global leadership is unprepared to manage this existential shift over the next 25 years.

Books would be shorter if the average IQ was higher. Higher fluid intelligence seems to be highly correlated with more rapid generalization from fewer samples. Thus, books are often long just to repeat ideas enough times, and in enough different ways, for more typical brains to grasp them.