Thomas Paul Mann (@thomaspaulmann)

Your computer, finally personal.

Today we're launching Glaze, the second product in Raycast's history. It's a big moment for us, and I want to share the thinking behind it.

Something is fundamentally changing about software. We see it every day inside our own team. People who never wrote a line of code are now contributing directly to our codebase. The barrier between "having an idea" and "making it real" is collapsing. And that changes everything.

For six years, we've obsessed over what makes a great desktop app. The speed. The polish. The feeling of something that truly belongs on your computer. We've poured that into Raycast, and hundreds of thousands of people use it every day. But all that knowledge was locked inside our team.

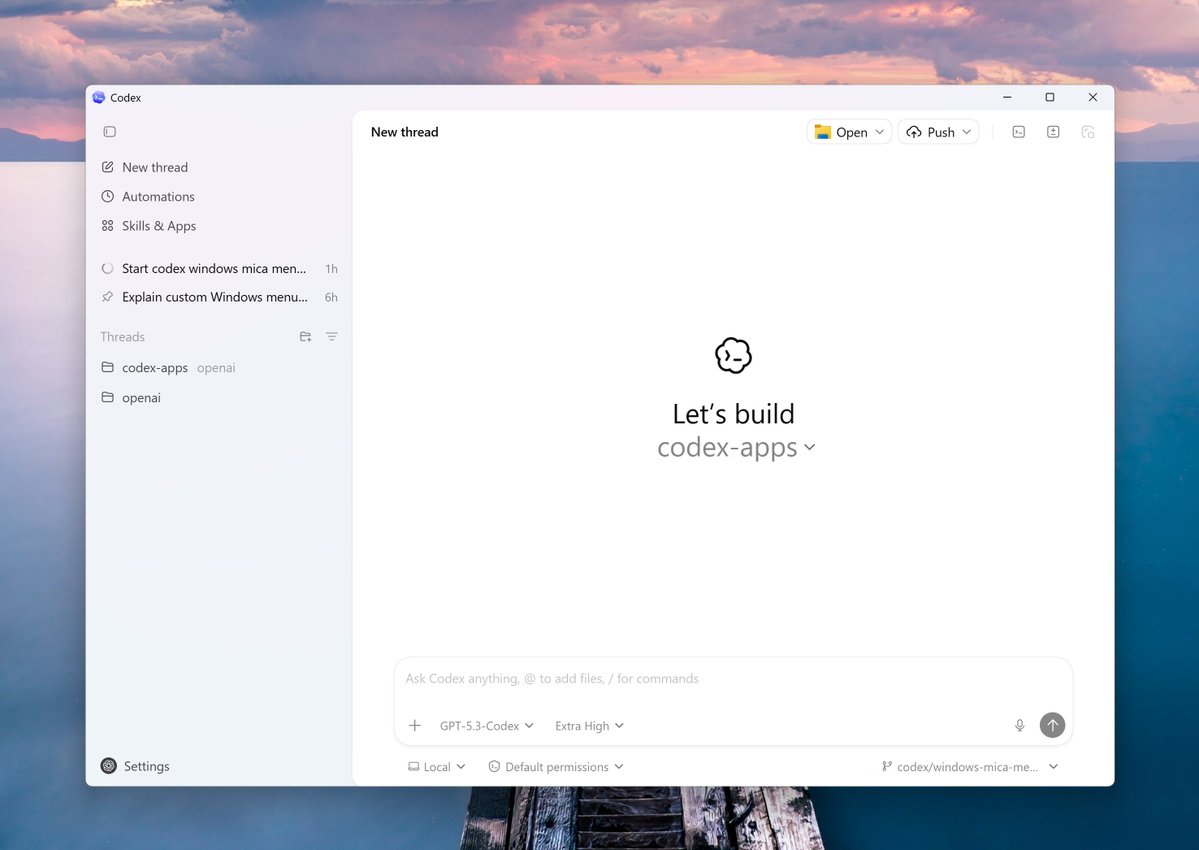

With Glaze, we're commoditizing it. Everything we've learned about building beautiful, capable desktop apps is now available to everyone. Tell Glaze what you want and it builds a real app that lives in your dock or taskbar. It launches instantly, works offline, and taps into the full power of your desktop. Beautiful by default and personal when you want it to be.

It's fun for individuals and works just as well for teams. Our support team built a Glaze app connected to GitHub that runs their entire extension review workflow. Others have built dozens of internal tools. When you can shape software around how your team actually works, everything clicks.

Here's what gets me most excited: we think Raycast becomes even more important in a world full of Glaze apps. Glaze apps will be deeply integrated with Raycast, connecting them all together in ways nobody else can. The two products make each other better.

A small team started building Glaze from scratch last summer. What they've shipped in that time still blows my mind. When we started Raycast, we set out to change how people use their computers. Glaze is the next chapter of that mission.

We're opening the private beta today, March 4th. Mac only to start. Existing Raycast users will get priority access soon. We can't wait to see what you create, and I'll share some of my apps over the next couple of days.

💠