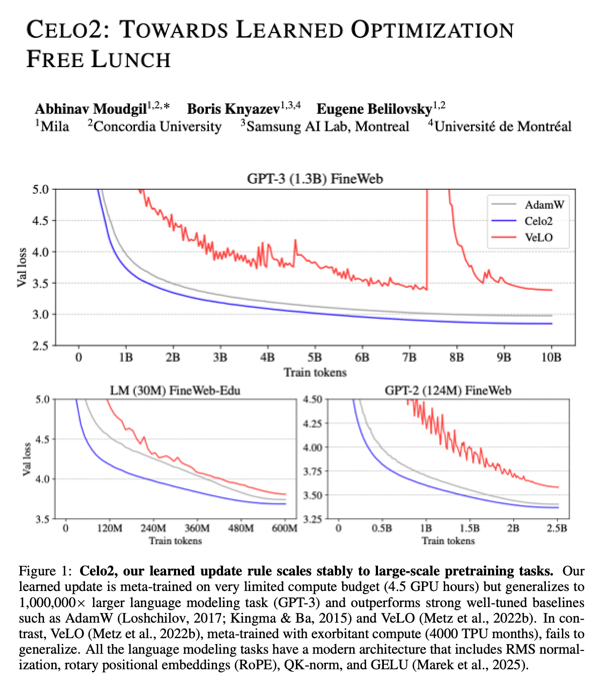

Have you ever trained a neural network using a learned optimizer instead of AdamW? Doubt it: you're probably coding in PyTorch! Excited to introduce PyLO: Towards Accessible Learned Optimizers in PyTorch! Accepted at the @icmlconf ICML 2025 CODEML workshop 🧵1/N