Jim Bohnslav

7.1K posts

Jim Bohnslav

@jbohnslav

training VLMs @zoox

Ghostty 1.3 is now out! Scrollback search, native scrollbars, click-to-move cursor, rich clipboard copy, AppleScript, split drag/drop, Unicode 17 and international text improvements, massive performance improvements, and hundreds more changes. ghostty.org/docs/install/r…

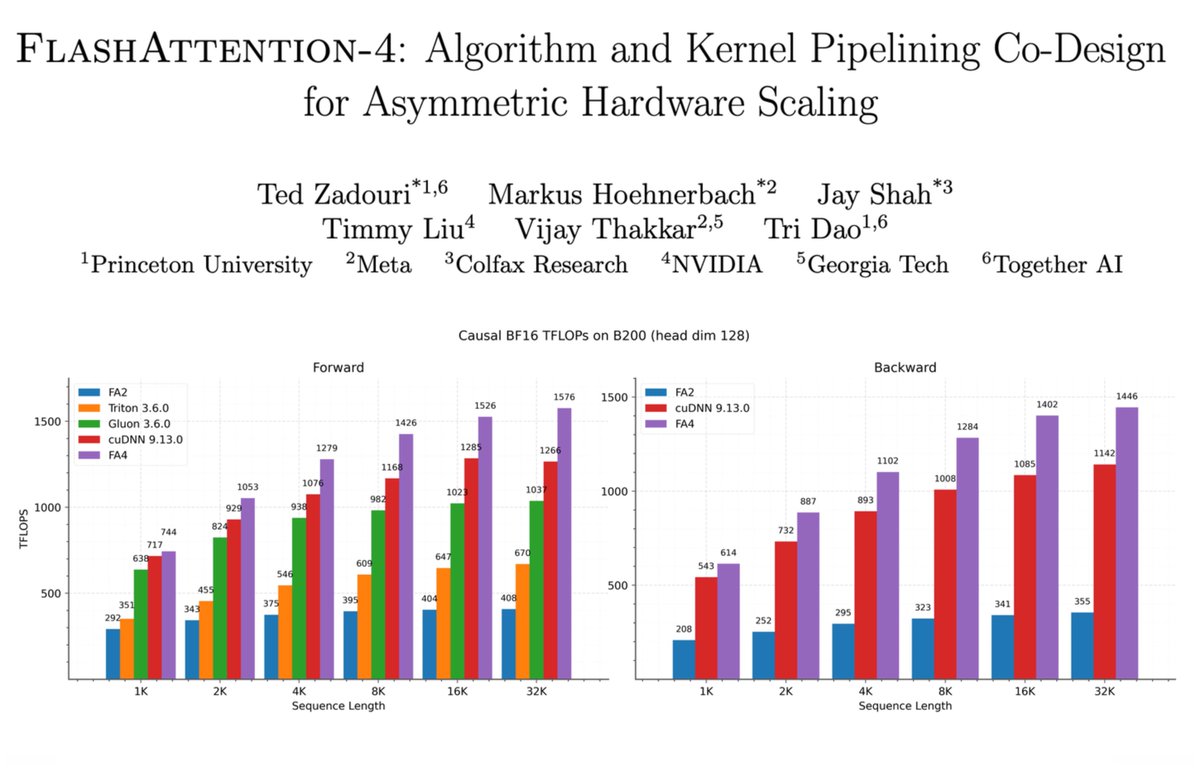

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then: - the human iterates on the prompt (.md) - the AI agent iterates on the training code (.py) The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc. github.com/karpathy/autor… Part code, part sci-fi, and a pinch of psychosis :)

Today we're showing Helix 02 that can tidy a living room fully autonomously Figure is designed so when you leave the house, your home resets exactly how you like it

i’m joining forces with @ylecun and an incredible group of people to start AMI Labs @amilabs. AMI isn’t a conventional lab. we don’t intend to become one. a lot to say about why this moment matters, but for now we’re heads down building. join us: amilabs.xyz

Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe. We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. This round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, along with other investors and angels across the world. We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one. Read more: amilabs.xyz AMI - Real world. Real intelligence.

Um… Claude Code just created a .claire directory? For its git worktrees. Who is Claire?