Dylan Huang

@dphuang2

Thinky. Passionate nerd. Developer experience.

Mantic used Tinker to RL gpt-oss-120b on judgmental forecasting; the result outperformed frontier models on event predictions. Combined with @_Mantic_AI's forecasting architecture, task-specific training takes us to the cusp of automated superforecasting.

Good piece on the "war time" at Cursor. Some interesting quotes:

- The company’s new mandate was labeled “P0 #1”—priority zero: “Build the best coding model.”
- Cursor estimated last year that a $200-per-month Claude Code subscription could use up to $2,000 in compute, suggesting significant subsidization by Anthropic. Today, that subsidization appears to be even more aggressive, with that $200 plan able to consume about $5,000 in compute, according to a different person who has seen analyses on the company’s compute spend patterns.

forbes.com/sites/annatong…

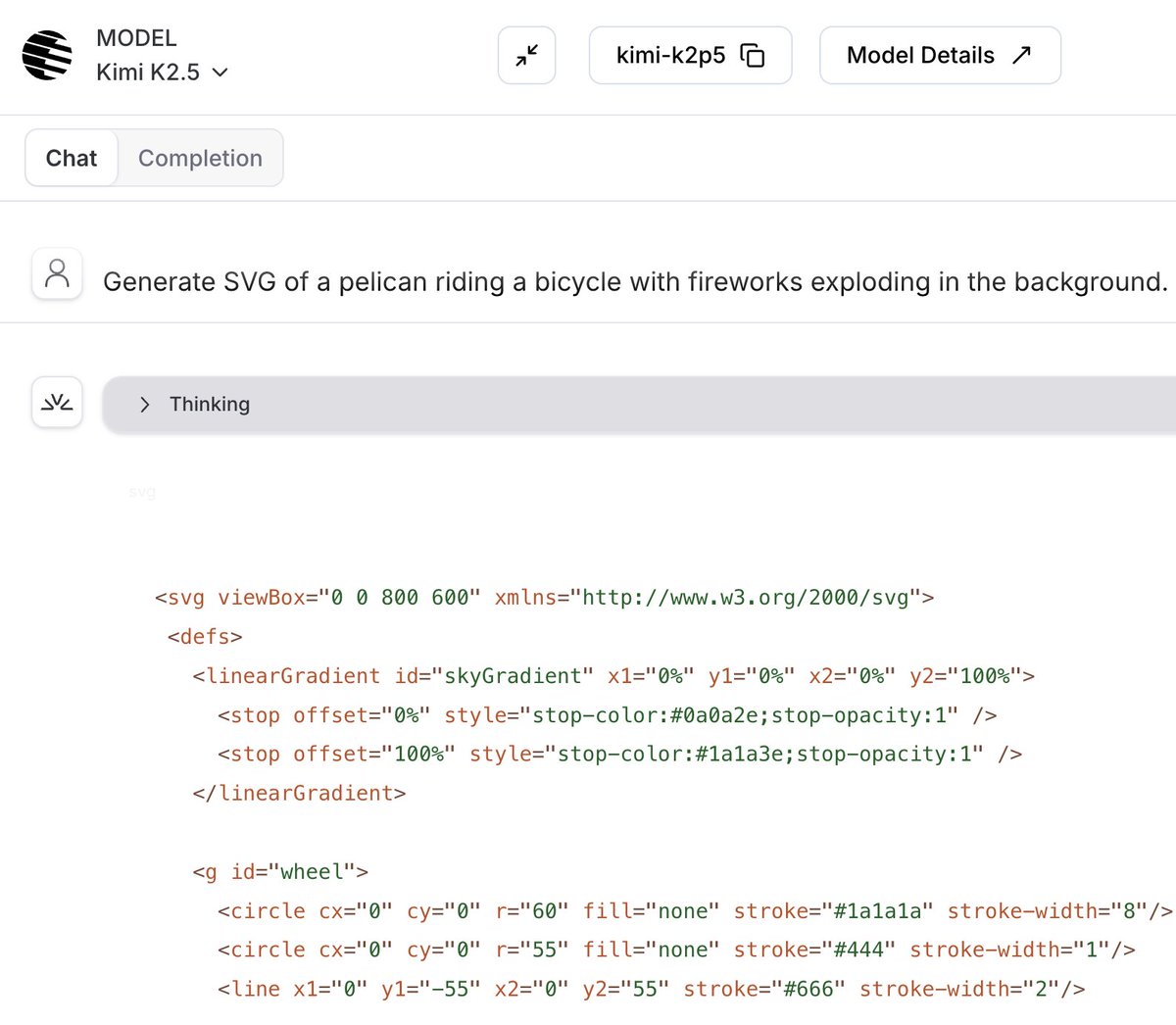

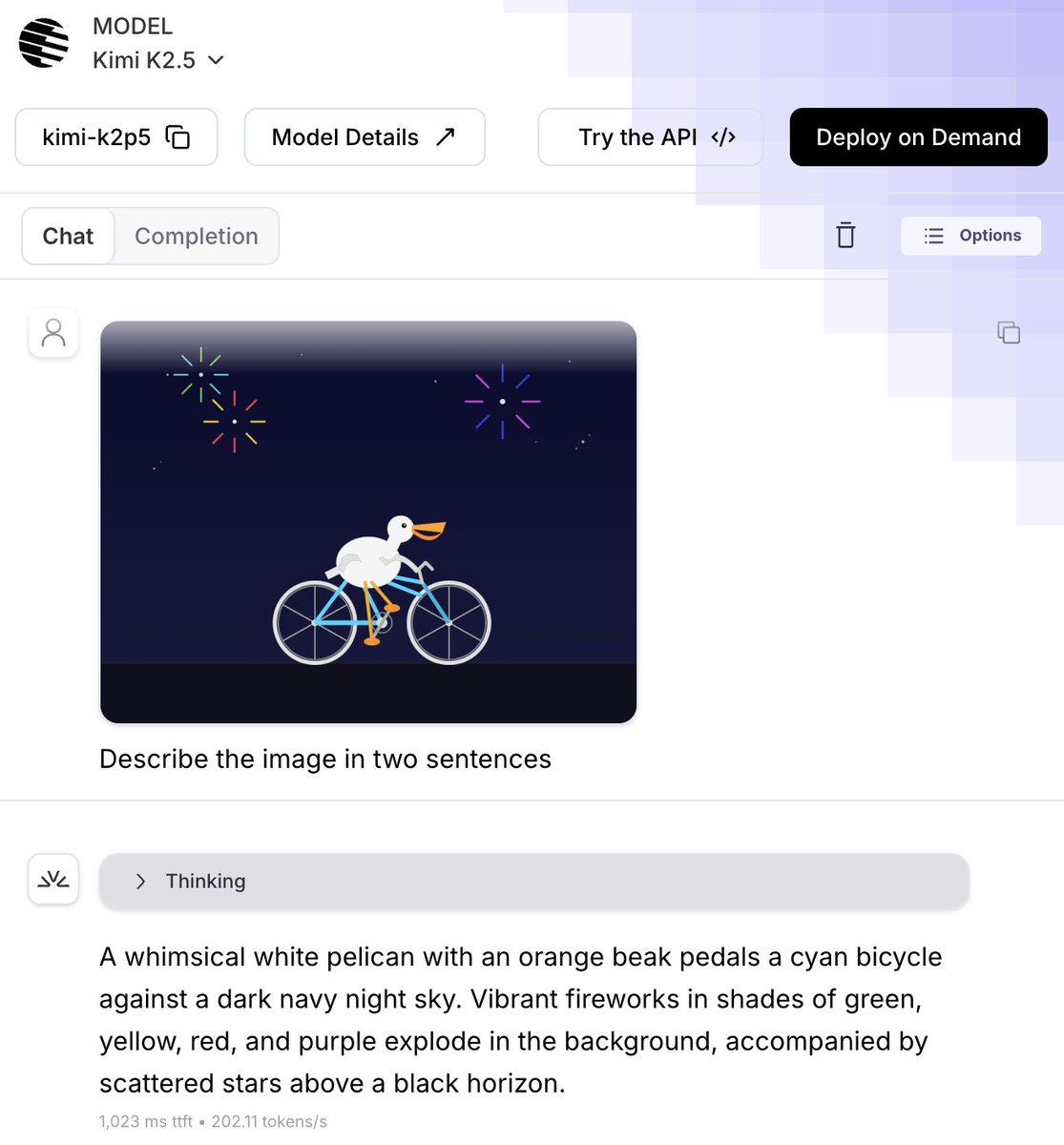

Search agents, whether they're powering deep research or multi-step QA over a private corpus, spend most of their time and compute in the research loop: query, search, reason, repeat. We wanted to make that loop faster and more accurate. So we optimized two things jointly: the retrieval stack itself, and the planner that decides when and how to search. A trained planner on our fastest retrieval config matches an untrained planner on the most expensive one, at half the latency. Every arrow in this plot points up and to the left. [1/n]
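
For readers who want the loop spelled out, here is a minimal sketch of "query, search, reason, repeat" with a planner that decides whether to search again. It is only an illustration: `research_loop`, `toy_planner`, `toy_search`, and the tiny `CORPUS` are hypothetical names made up for this example, not the system described in the thread.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical in-memory corpus standing in for a real retrieval stack.
CORPUS = {
    "capital of france": ["Paris is the capital of France."],
}


def toy_search(query: str) -> List[str]:
    """Return passages for an exact (case-insensitive) query match."""
    return CORPUS.get(query.lower(), [])


def toy_planner(question: str, notes: List[str]) -> Optional[str]:
    """Decide the next query, or return None to stop searching.

    Assumption: this planner stops as soon as any evidence is found.
    A trained planner would make this decision from learned behavior.
    """
    return None if notes else question


def research_loop(
    question: str,
    plan: Callable[[str, List[str]], Optional[str]],
    search: Callable[[str], List[str]],
    max_steps: int = 5,
) -> Tuple[List[str], int]:
    """Run plan -> search -> accumulate until the planner stops or the budget ends."""
    notes: List[str] = []
    searches = 0
    for _ in range(max_steps):
        query = plan(question, notes)
        if query is None:  # planner decided it has enough evidence
            break
        notes.extend(search(query))
        searches += 1
    return notes, searches


notes, searches = research_loop("capital of France", toy_planner, toy_search)
print(notes, searches)
```

In this framing, latency depends on both how expensive each `search` call is and how many times the planner chooses to call it, which is why the two can be tuned together.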