Jennifer Hsia

21 posts

@jen_hsia

PhD student @mldcmu | Prev. @PrincetonCS

Pittsburgh, PA · Joined December 2021

180 Following · 181 Followers

Jennifer Hsia retweeted

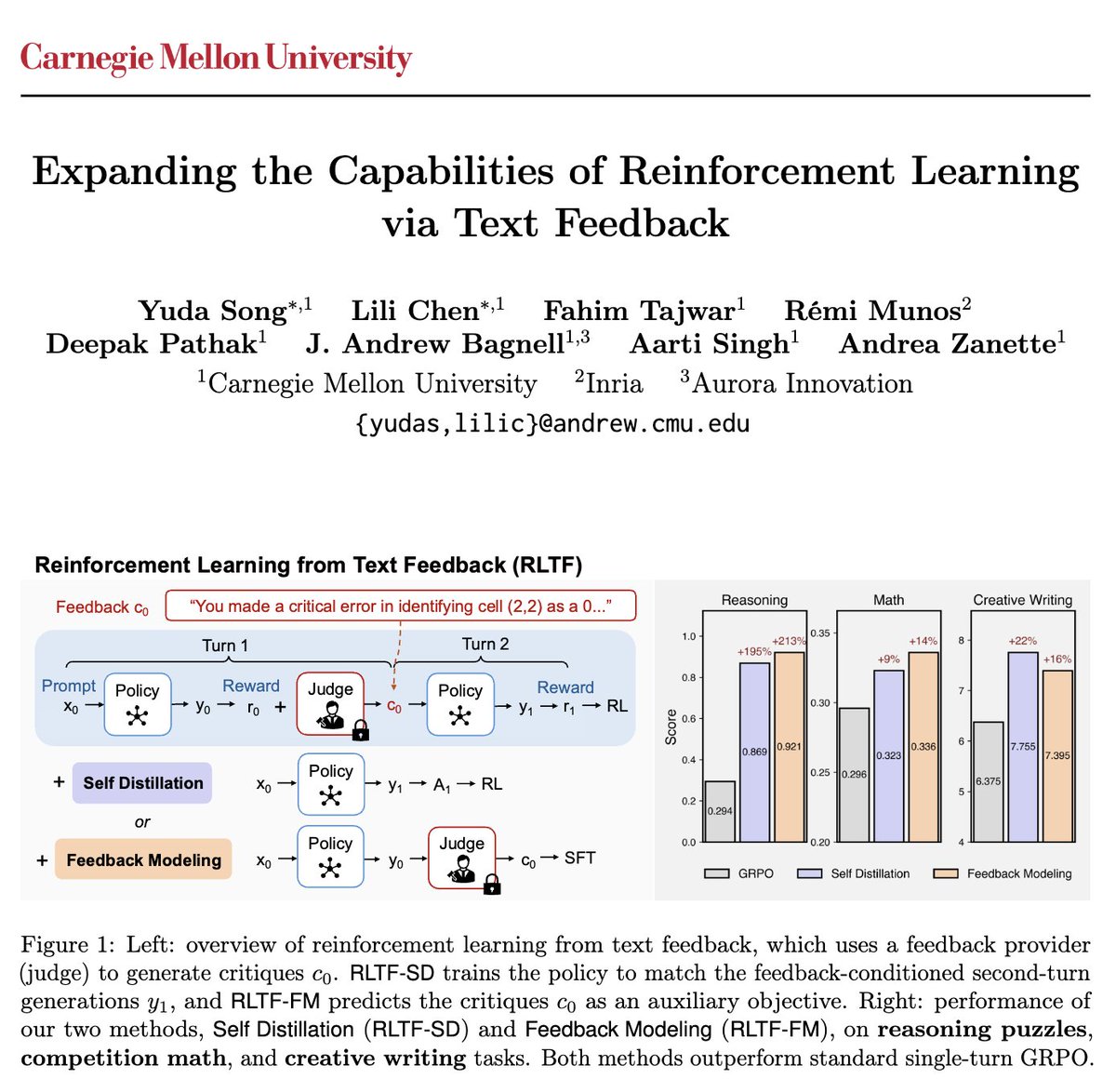

Are we done with new RL algorithms? Turns out we might have been optimizing the wrong objective.

Introducing MaxRL, a framework to bring maximum likelihood optimization to RL settings.

Paper + code + project website: zanette-labs.github.io/MaxRL/

🧵 1/n

Jennifer Hsia retweeted

Excited to share our work at #ICML2025!

📍 East Exhibition Hall A-B E-1707

🗓️ Wed July 16, 11am–1:30pm

📄 github.com/neulab/ragged

Jennifer Hsia @jen_hsia

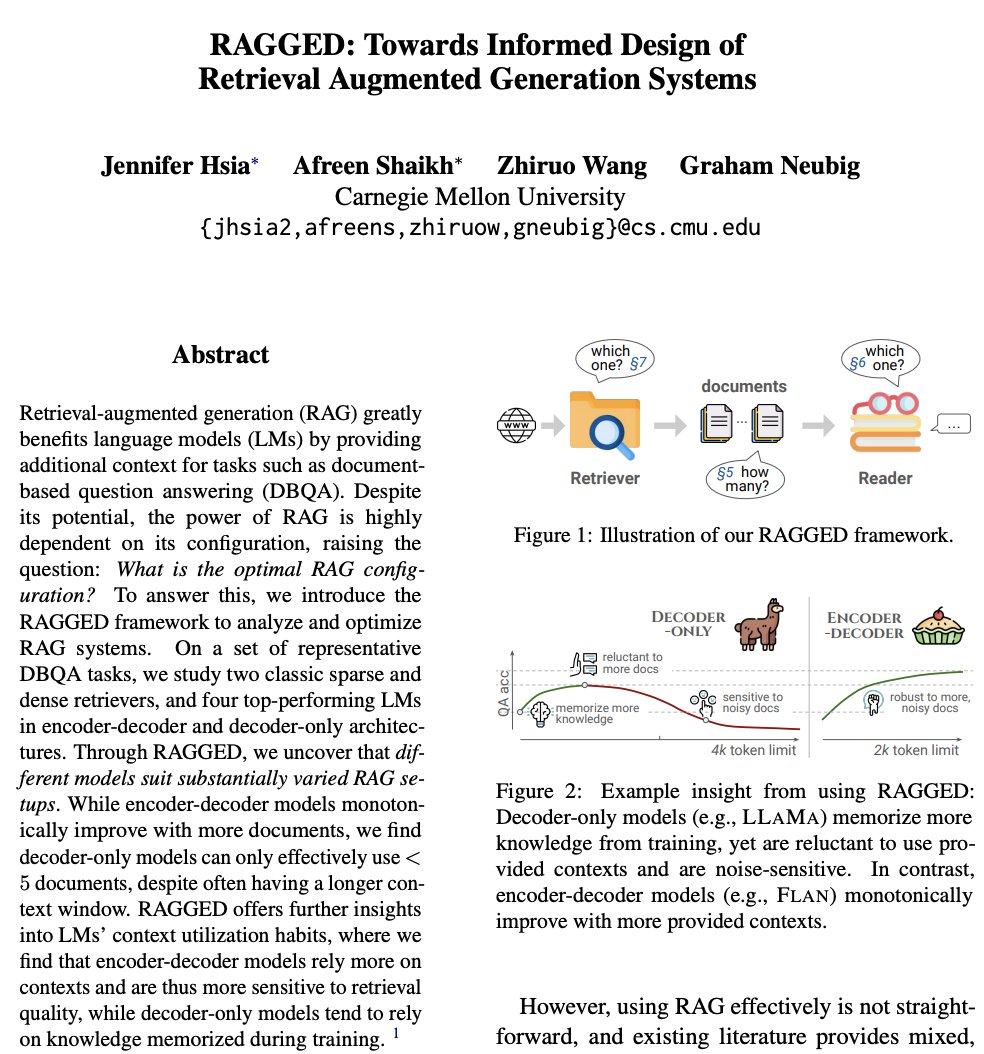

1/6 Retrieval is supposed to improve generation in RAG systems. But in practice, adding more documents can hurt performance, even when relevant ones are retrieved. We introduce RAGGED, a framework to measure and diagnose when retrieval helps and when it hurts.

6/6 Thankful for the collaboration w/ Afreen Shaikh, @ZhiruoW, @gneubig!

🔗Paper: arxiv.org/abs/2403.09040

🔗Project page: github.com/neulab/ragged

Jennifer Hsia retweeted

1/6 🚀 Excited to share that BrainNRDS has been accepted as an oral at #CVPR2025!

We decode motion from fMRI activity and use it to generate realistic reconstructions of videos people watched, outperforming strong existing baselines like MindVideo and Stable Video Diffusion.🧠🎥

Jennifer Hsia retweeted

🧵 Are "medical" LLMs/VLMs *adapted* from general-domain models, always better at answering medical questions than the original models?

In our oral presentation at #EMNLP2024 today (2:30pm in Tuttle), we'll show that surprisingly, the answer is "no".

arxiv.org/abs/2411.04118

Jennifer Hsia retweeted

Estimating notions of unfairness/inequity is hard, as it requires that the data capture all features that influenced decision-making. But what if it doesn't? In our work (arxiv.org/abs/2403.14713), we answer this question.

w/ @dylanjsam @MichaelOberst @zacharylipton @brwilder

Jennifer Hsia retweeted

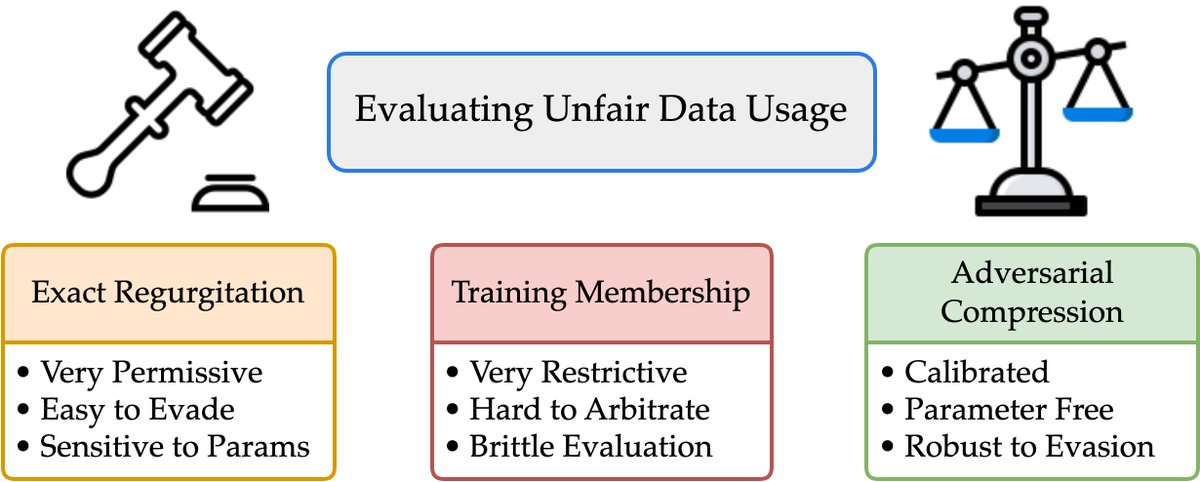

1/ What does it mean for an LLM to “memorize” a doc? Exactly regurgitating a NYT article? Of course. Just training on NYT? Harder to say.

We take big strides in this discourse w/*Adversarial Compression*

w/@A_v_i__S @zhilifeng @zacharylipton @zicokolter

🌐:locuslab.github.io/acr-memorizati…🧵

Jennifer Hsia retweeted

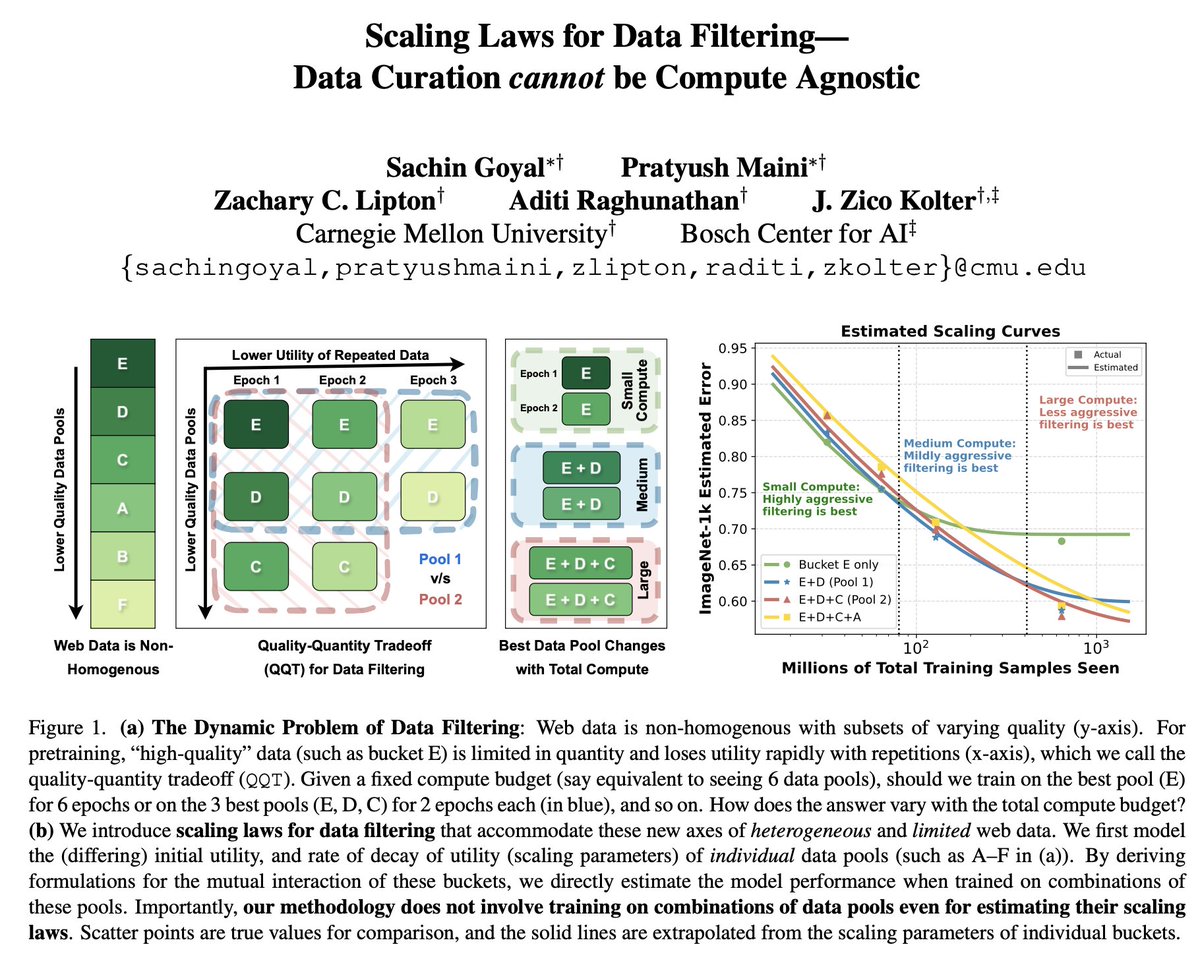

1/ 🥁Scaling Laws for Data Filtering 🥁

TLDR: Data Curation *cannot* be compute agnostic!

In our #CVPR2024 paper, we develop the first scaling laws for heterogeneous & limited web data.

w/@goyalsachin007 @zacharylipton @AdtRaghunathan @zicokolter

📝:arxiv.org/abs/2404.07177

Jennifer Hsia retweeted

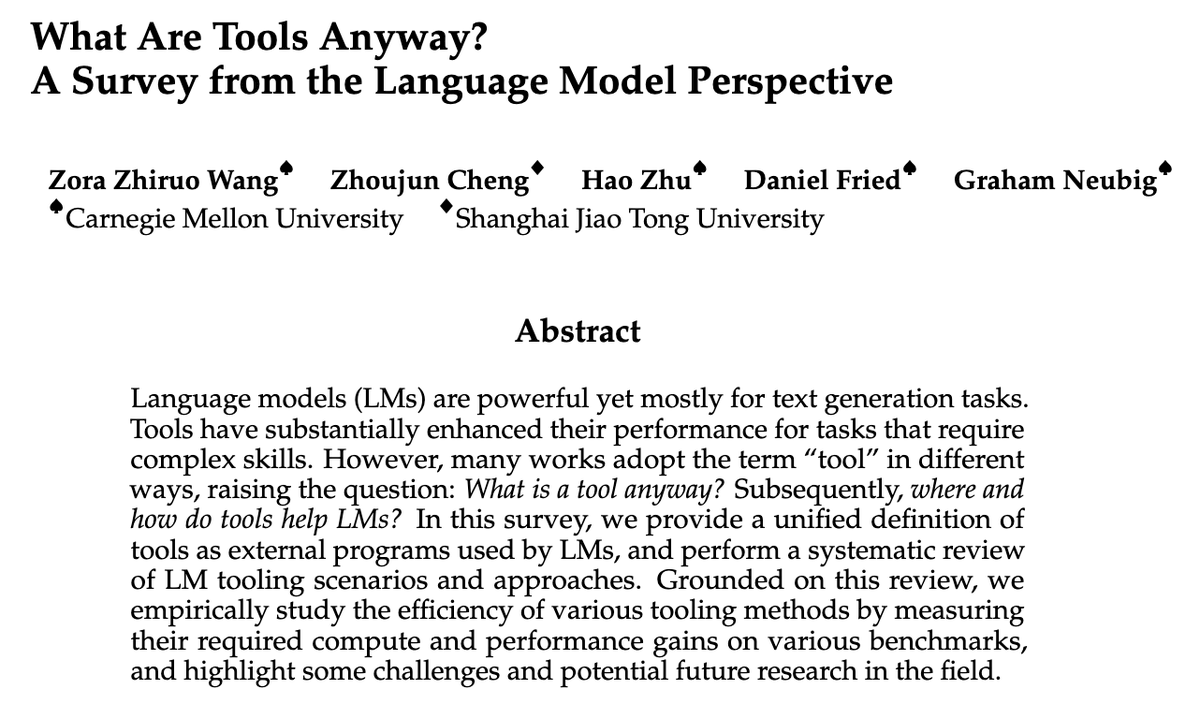

Tools can empower LMs to solve many tasks. But what are tools anyway?

github.com/zorazrw/awesom…

Our survey studies tools for LLM agents w/

–A formal def. of tools

–Methods/scenarios to use & make tools

–Issues in testbeds and eval metrics

–Empirical analysis of cost-gain trade-off

Jennifer Hsia retweeted

Quite an interesting analysis by Jennifer Hsia, Afreen Shaikh, @ZhiruoW & @gneubig: arxiv.org/abs/2403.09040

Awaiting the RAGGED repo to be published: github.com/neulab/ragged

5/5 With RAGGED, you can easily optimize your RAG systems, analyze data slices with common features, and more.

Try out our RAGGED framework and let us know what you think!

GitHub: github.com/neulab/ragged

Joint work w/ Afreen Shaikh, @ZhiruoW, @gneubig

4/5 Finding #2: Retriever-Reader synergy 🤝

The synergy between retriever and reader models can make or break your RAG system. Its effectiveness depends on the domain, question type, and reader's sensitivity to retrieval quality.

RAGGED helps you pinpoint the best pairings.

1/5 Unleash the full power of RAG systems! 🔥

Introducing RAGGED, a framework for finding the optimal RAG configurations and bypassing common pitfalls.

Dive deep into our findings: arxiv.org/pdf/2403.09040…
