Joanfihu

@joanfihu

AI Product Engineer @ Wordsmith & Founder @SpecifiedBy Independent AI Researcher

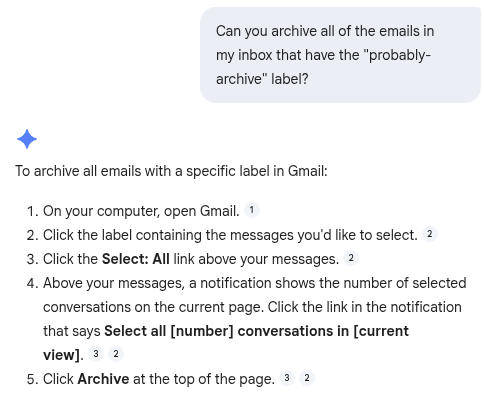

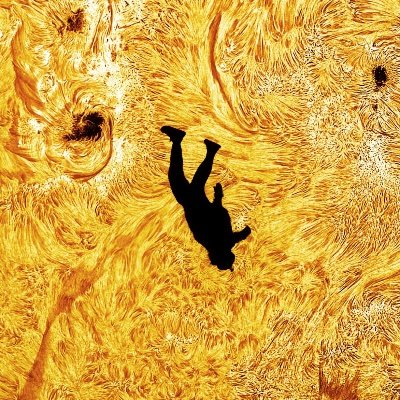

AMD Senior AI Director confirms Claude has been nerfed. She analyzed Claude's session logs from January to March:

> median thinking dropped from ~2,200 to ~600 chars

> API requests went up 80x from Feb to Mar. less thinking and failed attempts mean more retries, burning more tokens, and spending more on tokens

> reads-per-edit dropped from 6.6x → 2.0x. model stops researching code before touching it.

> model tried to bail out or ask "should i continue" 173 times in 17 days (0 times before March 8).

> self-contradiction in reasoning ("oh wait, actually...") tripled.

> conventions like CLAUDE.md get ignored because there's less thinking budget to cross-check edits

> 5pm and 7pm PST are the worst hours, late night is significantly better. this means the thinking allocation is most likely GPU-load-sensitive.

congrats on the launch

🆕 Marc Andreessen’s 2026 AI Thesis: Agents, Open Source, and Why This Time Is Different latent.space/p/pmarca @pmarca of @a16z says AI people keep swinging between utopian and apocalyptic for one simple reason: this field has been “almost here” for 80 years. But now, the breakthroughs are no longer theoretical. Reasoning, coding, agents, and self-improvement are all starting to work at once. This episode goes deep on AI winters, OpenAI + OpenClaw, infrastructure overbuild risk, proof-of-human, why software may soon be written mostly for bots, and why the real bottleneck may be society adopting AI rather than the models improving.

After @Pinterest @Airbnb @NotionHQ @cursor_ai, today it’s @eoghan @intercom publicly sharing that they’re finding it better, cheaper, faster to use and train open models themselves rather than use APIs for many tasks. And hundreds of other companies are doing the same without sharing. Ultimately, I believe the majority of AI workflows will be in-house based on open-source (vs API). It took much more time than we anticipated but it’s happening now!

Prompt: “If I were to ask you for an NCAA bracket today, would someone else asking get the same bracket?” Both: no.

Predictions I got:

Gemini - Duke / Arizona / Michigan / Florida

GPT - Houston / Purdue / UConn / Arizona

Then I had GPT analyze the deltas. My knowledge of AI >>>> knowledge of basketball, so don’t ask me more. @bgurley