Joe Wu retweeted

Joe Wu

1.2K posts

Joe Wu retweeted

I quite like the new DeepSeek-OCR paper. It's a good OCR model (maybe a bit worse than dots), and yes data collection etc., but anyway it doesn't matter.

The more interesting part for me (esp. as a computer vision person at heart who is temporarily masquerading as a natural language person) is whether pixels are better inputs to LLMs than text. Whether text tokens are wasteful and just terrible, at the input.

Maybe it makes more sense that all inputs to LLMs should only ever be images. Even if you happen to have pure text input, maybe you'd prefer to render it and then feed that in:

- more information compression (see paper) => shorter context windows, more efficiency

- significantly more general information stream => not just text, but e.g. bold text, colored text, arbitrary images.

- input can now be processed with bidirectional attention easily and as default, not autoregressive attention - a lot more powerful.

- delete the tokenizer (at the input)!! I already ranted about how much I dislike the tokenizer. Tokenizers are an ugly, separate, not end-to-end stage. They "import" all the ugliness of Unicode and byte encodings, and inherit a lot of historical baggage and security/jailbreak risk (e.g. continuation bytes). They make two characters that look identical to the eye look like two completely different tokens internally in the network. A smiling emoji looks like a weird token, not an... actual smiling face, pixels and all, and all the transfer learning that brings along. The tokenizer must go.
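The point about visually identical characters can be checked with a few lines of Python. This is a minimal sketch using only the standard library. The choice of Latin "a" versus Cyrillic "а" is an illustrative assumption, not an example from the post, and the code shows the raw bytes a byte-level tokenizer would start from, not the output of any specific tokenizer.

```python
import unicodedata

latin_a = "a"           # U+0061 LATIN SMALL LETTER A
cyrillic_a = "\u0430"   # U+0430 CYRILLIC SMALL LETTER A, renders like "a"
smiley = "\U0001F600"   # U+1F600 GRINNING FACE


def describe(ch: str) -> dict:
    """Return the code point, Unicode name, and UTF-8 bytes of one character."""
    return {
        "codepoint": f"U+{ord(ch):04X}",
        "name": unicodedata.name(ch),
        "utf8": list(ch.encode("utf-8")),
    }


def continuation_bytes(data: bytes) -> int:
    """Count UTF-8 continuation bytes (bit pattern 10xxxxxx)."""
    return sum(1 for b in data if (b & 0b1100_0000) == 0b1000_0000)


if __name__ == "__main__":
    for ch in (latin_a, cyrillic_a, smiley):
        info = describe(ch)
        info["continuation_bytes"] = continuation_bytes(ch.encode("utf-8"))
        print(info)
```

The two "a" glyphs look the same on screen but arrive as different byte sequences, and the emoji arrives as four bytes with no visual resemblance to a face. A rendered image of the same text would not carry that distinction.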

OCR is just one of many useful vision -> text tasks. And text -> text tasks can be made to be vision -> text tasks. Not vice versa.

So maybe the User message is images, but the decoder (the Assistant response) remains text. It's a lot less obvious how to output pixels realistically... or if you'd want to.

Now I have to also fight the urge to side quest an image-input-only version of nanochat...

vLLM @vllm_project

🚀 DeepSeek-OCR, the new frontier of OCR from @deepseek_ai, exploring optical context compression for LLMs, is running blazingly fast on vLLM ⚡ (~2500 tokens/s on A100-40G), powered by vllm==0.8.5 for day-0 model support.

🧠 Compresses visual contexts up to 20× while keeping 97% OCR accuracy at <10×.

📄 Outperforms GOT-OCR2.0 & MinerU2.0 on OmniDocBench using fewer vision tokens.

🤝 The vLLM team is working with DeepSeek to bring official DeepSeek-OCR support into the next vLLM release, making multimodal inference even faster and easier to scale.

🔗 github.com/deepseek-ai/De…

#vLLM #DeepSeek #OCR #LLM #VisionAI #DeepLearning
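To make the "up to 20×" and "<10×" figures concrete, here is a small arithmetic sketch. It assumes the compression ratio means text tokens divided by the vision tokens that replace them for the same page. The token counts are made-up illustrative numbers, not results from the paper or from vLLM, and the function names are hypothetical.

```python
def compression_ratio(text_tokens: int, vision_tokens: int) -> float:
    """Ratio of text tokens to the vision tokens that stand in for them."""
    if vision_tokens <= 0:
        raise ValueError("vision_tokens must be positive")
    return text_tokens / vision_tokens


def in_high_accuracy_range(ratio: float, limit: float = 10.0) -> bool:
    """True when the ratio is below the <10x range the post ties to 97% OCR accuracy."""
    return ratio < limit


# Hypothetical page: 900 text tokens represented by 100 vision tokens.
page_ratio = compression_ratio(900, 100)

# Hypothetical denser page: 2000 text tokens squeezed into the same 100 vision tokens.
dense_ratio = compression_ratio(2000, 100)

if __name__ == "__main__":
    print(page_ratio, in_high_accuracy_range(page_ratio))
    print(dense_ratio, in_high_accuracy_range(dense_ratio))
```

Under these assumptions the first page sits inside the range the post associates with 97% accuracy, while the second sits at the 20× upper end, where the post makes no accuracy claim.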

Joe Wu retweeted

Dearest MCP developers,

You can now monetize your MCP in a few lines of code with @stripe, available today. 💸

- Bill subscriptions or usage-based

- Works with any client

- Supports @Cloudflare's Agents SDK (more to come)

MCP, a new AI-native customer channel. Get started. ⤵️

Joe Wu retweeted

Claude, via MCP, has full control of ChatGPT 4o to generate a full storyboard in Ghibli style! It is all automatic, I am doing nothing at all. We live in pretty crazy times.

@AnthropicAI @OpenAI

Joe Wu retweeted

This is it: The world’s smartest AI, Grok 3, now available for free (until our servers melt).

Try Grok 3 now: x.com/i/grok

X Premium+ and SuperGrok users will have increased access to Grok 3, in addition to early access to advanced features like Voice Mode

Joe Wu retweeted

Sora v2 release is impending:

* 1-minute video outputs

* text-to-video

* text+image-to-video

* text+video-to-video

OpenAI's Chad Nelson showed this at the C21Media Keynote in London. And he said we will see it very very soon, as @sama has foreshadowed.

Joe Wu retweeted

You likely missed it if you only follow ML Twitter, but there's a series of mind-blowing tech reports and open-source models coming from China (DeepSeek, MiniCPM, UltraFeedback...) with so many lessons learned and experiments openly shared together with models, data, etc.

This level of candid sharing of knowledge and insights is something we've lost in most recent Western tech model releases and reports (with the notable exception of a few places like the recent AllenAI OLMo release).

Just take a look for instance at these two fresh examples published in the past few days:

- the new MiniCPM blog (amazing super small model – deep dive in the experiments): shengdinghu.notion.site/MiniCPM-Unveil…

- the new DeepSeek Math paper achieving over 60% on MATH: huggingface.co/papers/2402.03…

Joe Wu retweeted

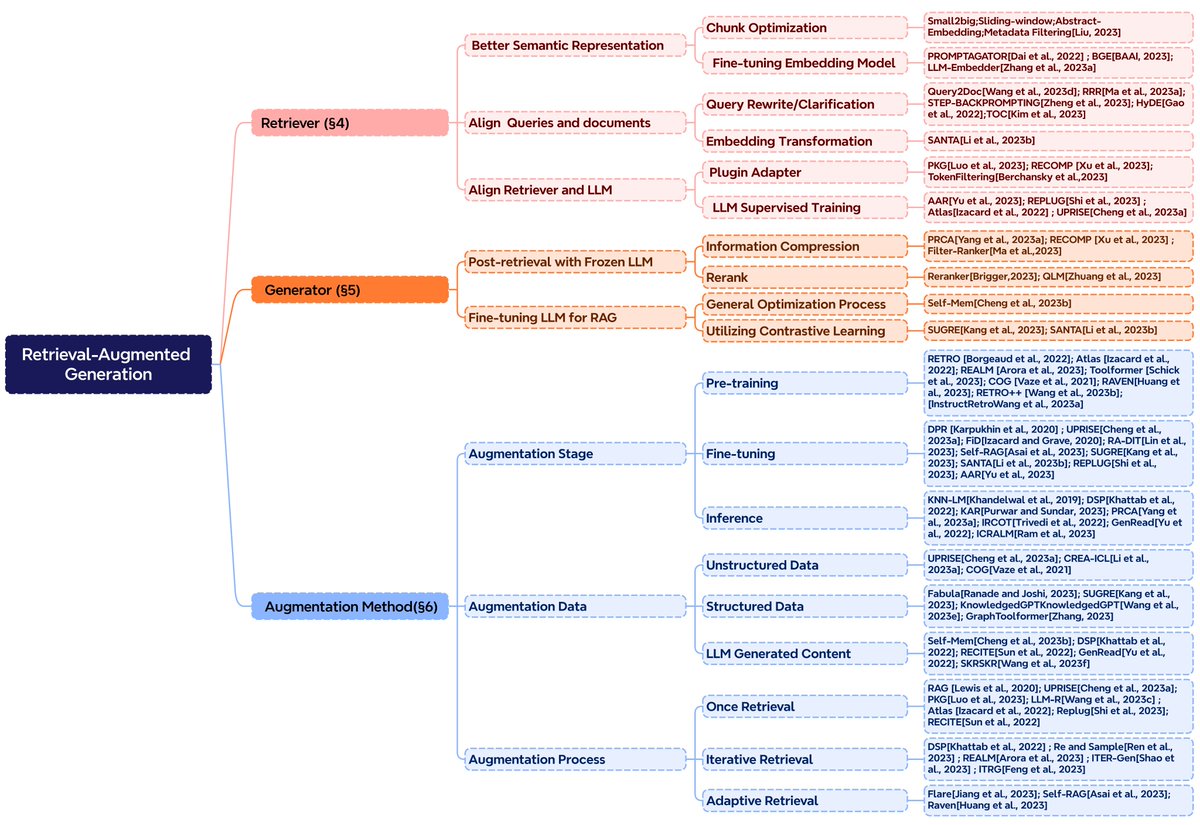

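The RAG paper most worth reading at the end of the year:

"Retrieval-Augmented Generation for Large Language Models: A Survey" [Chinese translation]

Abstract:

In this survey, we focus on retrieval-augmented generation for large language models. By incorporating a retrieval mechanism, this technique strengthens large language models' ability to handle complex queries and generate more accurate information. Starting from the relevant research teams at Tongji University and Fudan University, we comprehensively analyze the latest progress and future trends in the field.

Some oversights are inevitable in proofreading, so please point out any translation errors!

baoyu.io/translations/a…

九原客 @9hills

A RAG survey. I recommend that everyone building LLM applications read it. A very good summary. arxiv.org/abs/2312.10997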

Joe Wu retweeted

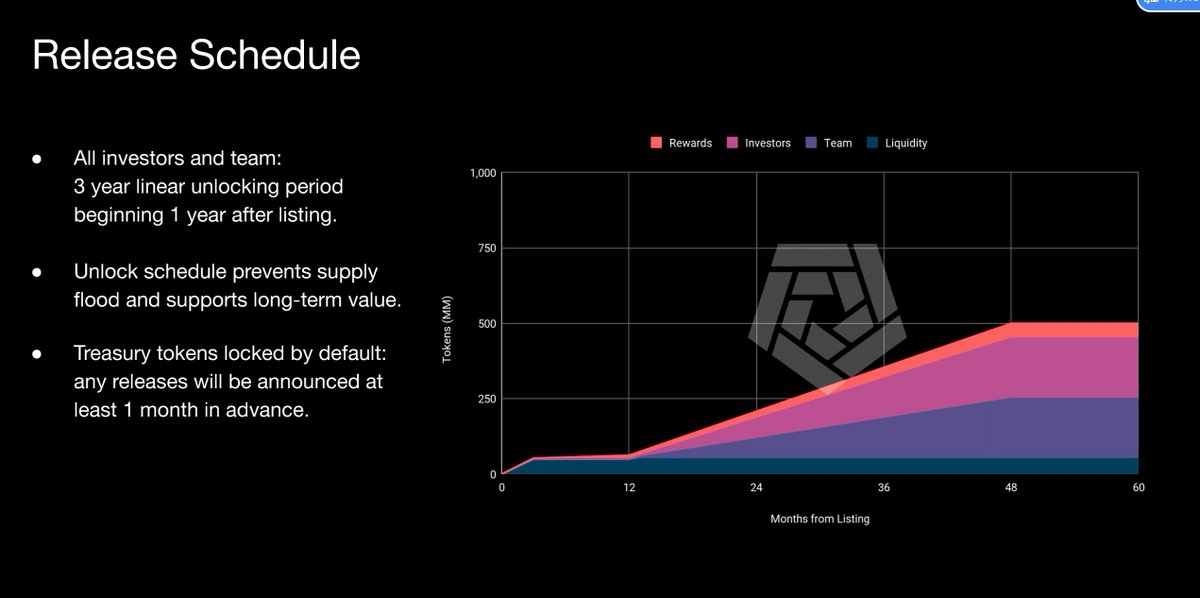

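$Arkm, a Binance IEO: an on-chain data analytics tool similar to Nansen. Its data cleaning and tracking both look more thorough, and it uses a whitelist invitation mechanism.

Link to try the product: platform.arkhamintelligence.com/waitlist?refer…

As for the tokenomics, the supply is quite tightly controlled. It is simple, with no tricks: in the first year after listing on exchanges, the team's and VCs' tokens are still locked, so there is not much circulating supply. Well, suitable for speculation.

Probably the first of these tools to issue a token.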

Joe Wu retweeted

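[A killer ChatGPT-4 plugin: @DefiLlama]

I have spent more than 1,000 hours using DefiLlama, and wasted more than 60% of that time on interacting with the interface and filtering information.

Now a big step forward in combining crypto and #AI is finally here! #ChatGpt can connect to #DefiLlama, so all the relevant data can be queried directly in Chinese, and the AI can summarize it for you! 🚀

🧵 This thread introduces the plugin in detail, with a video tutorial attached.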

#DeFi

Joe Wu retweeted