joël

2K posts

Pinned Tweet

joël retweeted

hello I'm new to the stock market is it good when the intel ceo starts praying

Pat Gelsinger@PGelsinger

“Let your eyes look straight ahead; fix your gaze directly before you. Give careful thought to the paths for your feet and be steadfast in all your ways” Proverbs 4:25-26

joël retweeted

@a16z @illscience We see use cases for voice agents across B2B and B2C.

For businesses - replace labor with software!

For consumers - provide access to previously expensive services (therapy, coaching, etc.) + unlock new types of conversations.

Read our full thesis here: gamma.app/docs/a16z-Real…

joël retweeted

IMHO, Anthropic and OpenAI are playing a losing game trying to continue scaling compute by hemorrhaging equity capital losses (massively underwater CAC) as their core competitive strategy. Let me unpack this view:

Hyperscalers (specifically MSFT, META and GOOG) have orders of magnitude more resources (talent), users (billions in MAUs) and capital ($200B+ of working capital between them, to be exact, and this could be 2-3X that quite easily with their liquid stock factored in) to build and scale SOTA models themselves. Additionally, they are in the lovely position of not needing to monetize the models whatsoever - they can infuse them in their existing cash cow businesses and transform those value propositions for customers, boosting engagement, revenue expansion, data acquisition and a lot more. "How do we get customers to pay for the models??" is not even a question. You give the models away entirely. META does this explicitly with zero issue in the beautiful absence of a business model conflict.

As a result, I don't see a rosy future for Anthropic or OpenAI at all. Even in the enterprise market. They serve a useful purpose to give interesting product ideas to the hyperscale incumbents. And to entertain the industry. But not much else.

So... where is the real opportunity?

Radically more compute efficient approaches for achieving whatever approximates as "AGI" or "ASI"... which cannot be defined by anyone, but will indeed bring immense value for humanity. Some risks, but vastly more benefits than risks.

In the absence of massively (and I mean orders of magnitude) more compute efficient approaches being unlocked by a startup for scaling machine intelligence without training runs that cost tens of billions of dollars (which is the current regime in the next 6 months), the hyperscaler incumbents will absolutely take 90%+ of the market share.

There is no other path that I can realistically see happening.

joël retweeted

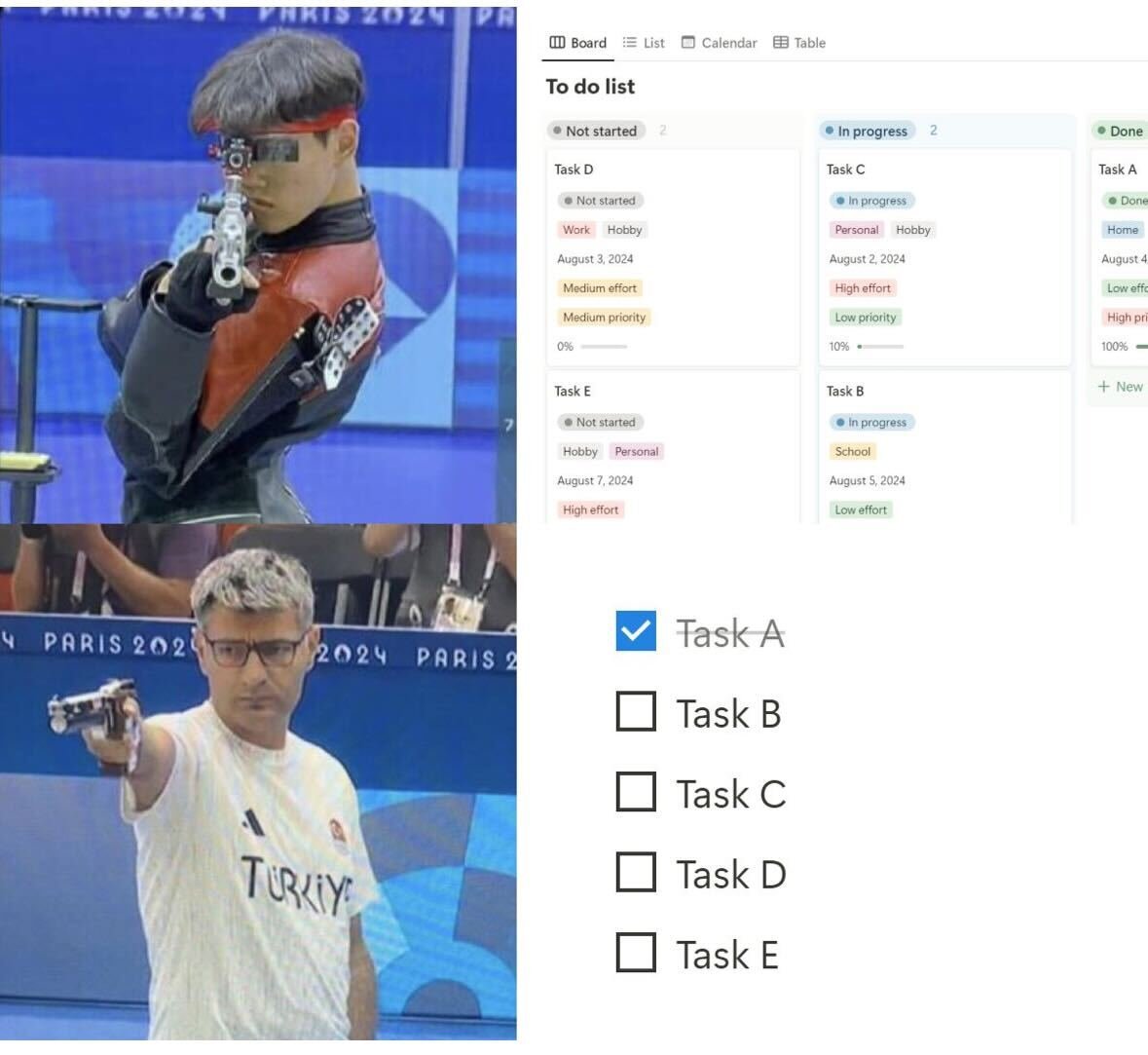

high agency. you could just do things

Dexerto@Dexerto

Taiwanese Parliament member reportedly stole a bill and ran away with it to stop it from being passed

joël retweeted

@bigstrongthumbs agree it sounds more human and (probably!) more accurate - i think there's just a broader sense in which this isn't really solving a problem that exists in the way in which it used to - there must be more interesting applications of what's been developed than a slightly faster GT

I feel like I’m actually going insane - this has existed in google translate for about 10 years. Are we being gaslit into re-living 2015???

Morning Brew ☕️@MorningBrew

GPT-4o translating a conversation in real-time Incredible how clean it sounds

joël retweeted