Joseph W Pope


DocuSign Personal: $10 to $15 per month. DocuSign Standard: $25 to $45 per user per month. DocuSign Business Pro: $40 to $65 per user per month.

A 10-person team on Business Pro pays $4,800 to $7,800 a year. To put signatures on PDFs. A team of 50 pays $24,000 to $39,000 a year.

And there is a 100-envelopes-per-year cap on most plans. Send more contracts and you pay extra. Need SMS delivery? $0.40 per send. Need ID verification? $2.50 per attempt. Need premium support? A $5,000 to $50,000 per year add-on.

You are rationing digital signatures in 2026. DocuSign is a $10 billion company built entirely on this pricing model.

Now meet DocuSeal. A free and open source alternative to DocuSign.

Created in 2023 by a Ruby developer named Alex who was simply trying to sign one document and realised every solution online was overpriced or required a subscription. Three weeks later he had a working alternative. He pushed it to GitHub under the AGPL-3.0 license. Today it has 11,800+ stars and over 1,000 forks. Bootstrapped. No VCs. No paywalls.

Here is what DocuSeal does:

- Upload any PDF and turn it into a fillable, signable form
- Drag and drop signature fields, dates, checkboxes, file uploads, and more (13 field types)
- Send to multiple signers with custom signing order
- Automated email reminders
- Mobile signing on any device
- PDF signature verification built in
- Audit trail for every document
- Bulk send and templates
- Full API access
- Self-host with one Docker command

Here is what DocuSeal costs: Zero. Forever. Unlimited documents. Unlimited signers. Unlimited storage.

DocuSign limits envelopes. DocuSeal doesn't. DocuSign charges per SMS. DocuSeal doesn't. DocuSign charges for ID checks. DocuSeal doesn't. DocuSign sees your contracts on their servers. DocuSeal doesn't.

Here is the wildest part: The median DocuSign contract, per Vendr, is $17,250 per year. One Reddit thread has people saying "they want me to pay $4.80 per e-signature."

Self-host DocuSeal on a $5 cloud server and a 50-person team can sign as many contracts as they want without paying a single dollar in licence fees. Your contracts never leave your server. Your client lists. Your NDAs. Your employment agreements. None of it touches a third-party company.

Individuals who only sign a few contracts a year save up to $180. Small teams of 10 save up to $7,800 a year. A 50-person company saves up to $39,000 a year.

Your documents. Your signatures. Your server. 100% Open Source. (Link in the comments)
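For anyone who wants to check the arithmetic behind those yearly figures, here is a minimal Python sketch. It assumes the per-user monthly price ranges quoted in the post (not verified against current DocuSign pricing) and ignores envelope overages, SMS, ID checks and support add-ons. The names `PLANS` and `annual_cost_range` are made up for this illustration.

```python
# Monthly price ranges in USD per user, as quoted in the post.
# Assumption: these are the post's figures, not verified current pricing.
PLANS = {
    "Personal": (10, 15),
    "Standard": (25, 45),
    "Business Pro": (40, 65),
}


def annual_cost_range(plan, users=1):
    """Return (low, high) yearly cost in USD for `users` seats on `plan`."""
    low, high = PLANS[plan]
    return (low * users * 12, high * users * 12)


if __name__ == "__main__":
    for users in (10, 50):
        low, high = annual_cost_range("Business Pro", users)
        print(f"{users} users on Business Pro: ${low:,} to ${high:,} per year")
```

The upper bound of each range is what the post calls the "up to" saving; a $5 per month server would still cost about $60 a year, which this sketch does not subtract.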

Boy, 7, dies of brain condition caused by world's most contagious disease - years after he had it as a baby trib.al/Hse12fu

JUST IN: Use of AI in the office is reportedly creating a flood of “workslop” that takes longer to fix than to do from scratch.

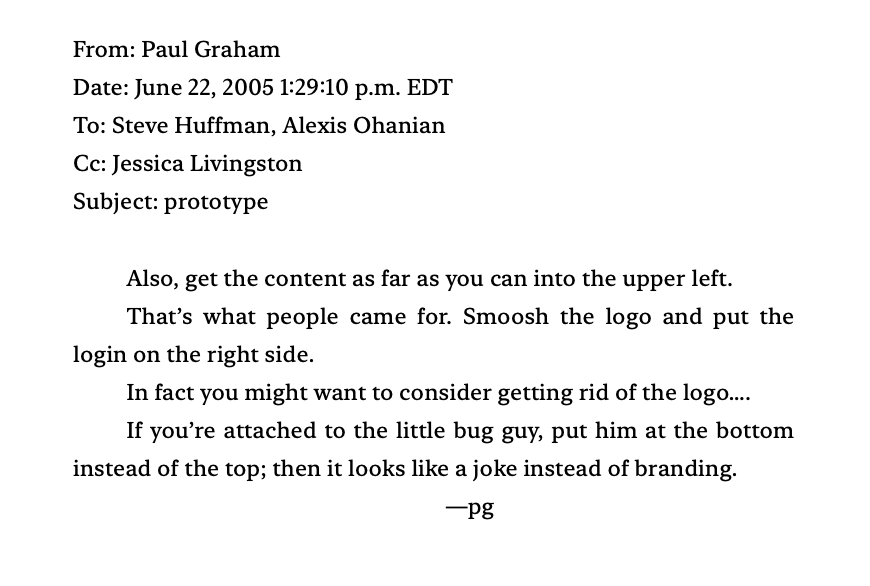

Someone asked what advice founders ignore. That they: 1. Should change their name. 2. Should launch fast. 3. Shouldn't treat fundraising as success. 4. Shouldn't assume they can raise because it's time to. 5. Should fire bad people quickly. 6. Shouldn't talk to acquirers.

@miriamkdaniel Hey Miriam, it would be amazing if we could fix this so people don't get mugged: x.com/sailaunderscor…