Pinned Tweet

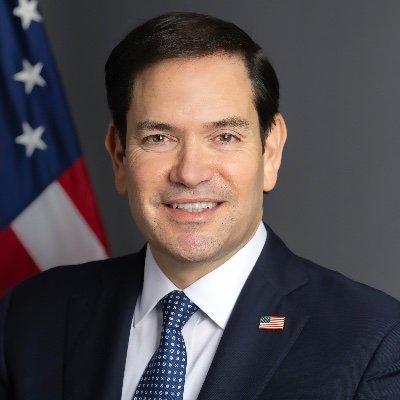

Paramendra Kumar Bhagat

127.5K posts

@paramendra

Entrepreneur/Author/Activist Books https://t.co/dUGQBiAUND paramendra at gmail Himalayan Compute: Grand Solara Vision https://t.co/pCYJsEmgd5

TX · Joined January 2009

34.7K Following · 32.6K Followers

Paramendra Kumar Bhagat retweeted

Their meteoric rise reshaped the Bay Area and powered Silicon Valley. Is it at an end? sfchronicle.com/projects/2026/…

Paramendra Kumar Bhagat retweeted

No region of the country is as Indian as the Bay Area, and no city in the Bay Area is as Indian as Fremont.

Here, visitors crowd into Hindu temples to pray to Ganesha and other gods. Some public schools teach Hindi and the thwacks of cricket bats on balls ricochet across the biggest park. Inside the city’s many strip malls, Hyderabadi and Maharashtrian food are devoured by homesick immigrants. Women in saris pop in and out of groceries that sell spices and samosas, dal and ghee.

The mayor of this Silicon Valley bedroom community of 227,000 is himself an immigrant from Punjab. Its voters helped send an Indian American — Rep. Ro Khanna — to Congress.

Just a few decades ago, Fremont was as white as any other middle-class East Bay suburb. Today, 30% of residents are of Indian ancestry, the highest share of any city in the Bay Area. Silicon Valley’s hunger for talent, India’s deep bench of tech workers and the immigration liberalization of the 1990s reshaped Fremont — and much of the Bay Area with it.

Similar transformations have unfolded in Dublin and Danville, Livermore and Albany. From 2010 to 2024, Indian Americans and immigrants from India were the fastest-growing ethnic group in a region that now has a larger share of Indians than anywhere else in the country.

So far, the story of this community — whose members are diverse, with varied languages and religions — has been one of rapid success. But that trajectory is now at risk. The engine that powered its rise is threatened as President Donald Trump attacks H-1B visas and paths to citizenship. The anti-immigrant backlash has at times descended into hate against H-1B holders, and Indians specifically, prompting some in the community to consider leaving the U.S. and deterring others from coming here at all.

@PeterDiamandis Please find me a $1M investor for this:

Himalayan Compute: 10 Years To A Trillion: Detailed Roadmap himalayancompute.substack.com/p/himalayan-co…

@ssankar This is the difference between active listening and passive listening. Passive AI use is not going to lead to major productivity gains.

Paramendra Kumar Bhagat retweeted

Today we announced the most ambitious housing plan in our City's modern history: Block by Block.

We're building 200,000 new affordable homes. We're overhauling code enforcement. We're cracking down on bad landlords. We're creating tens of thousands of good-paying jobs. We're building new paths to homeownership. We're investing $5.6 billion in NYCHA — the largest City capital investment in recent history.

New York is facing a historic housing crisis. We're pursuing a historic solution.

All hands on deck. All-of-the-above. All for New York City.

Read more: cbsnews.com/newyork/news/n…

Paramendra Kumar Bhagat retweeted

I just got back from SF and I FEEL INSPIRED.

I spent 5 days with frontier AI model teams, AI startup founders, and 3 billionaires.

My takeaways:

1. I had lunch with 3 billionaires. All of them are buying SaaS companies and rebuilding them agent-first. They were deeply inspired by Bending Spoons and Ryan Cohen's eBay deal. Buy the company, cut the headcount, rebuild the tech, add agents, add features, build a more valuable experience, raise prices.

2. The frontier model companies are hungry for usage data from the field. They can see API calls and token counts. They can't see the actual workflows. If you're deep in a niche using these models in ways the model companies haven't seen, that understanding is incredibly valuable. Usage intelligence is the new alpha.

3. Consumer AI is massively underbuilt. Every billboard in SF is either B2B inference infrastructure or vertical agent companies. The entire city is optimized for enterprise. Meanwhile you have companies like Cal AI doing $50M ARR in 18 months as a consumer app. I met with a few cool teams doing consumer AI (@paulscherer / @ekuyda).

4. MCP came up in literally every conversation. The companies exposing their product as MCP endpoints are getting pulled into deals they never pitched for. The ones that aren't are becoming invisible to agents. This is the new SEO. If agents can't find you, you don't exist. Building products for agents is the new zeitgeist in general.

5. It's not uncommon for hot seed rounds to be at $25-50 million valuations. I saw a Series A at $450 million.

6. If I had a dollar for every time someone mentioned "forward-deployed engineer" on this trip, I could have funded a seed round. It's the hottest role in SF right now: the person who sits between the agent and the customer, making sure everything actually works.

7. The mood around open source shifted. A year ago it felt like open source was chasing the frontier models. Now founders are telling me Gemma and DeepSeek are good enough for 80% of what they need at a fraction of the cost. The "which model do you use" conversation is being replaced by "which model for which task." Model loyalty kinda feels dead.

8. Voice agents came up more than I expected. Multiple founders told me voice is the interface for the next billion users. The billion people who will never type a prompt will absolutely talk to one.

9. The Obsidian community in SF is weirdly intense. Multiple founders showed me their vaults unprompted. Like showing someone your home gym. It's a flex now. The quality of your knowledge base (second brain?) is becoming a status symbol among builders.

10. Maybe it was just the people I met, but the age of founders is shifting. I met more founders over 40 on this trip than on any trip before, and more founders under 21 than ever before. Founders are getting older and younger at the same time.

11. I spoke to a lot of fast-growing startups, VCs and frontier model companies that are hiring content creators right now.

12. The restaurant scene in SF is actually better than it's been in years. Founders are going out more. Alcohol is out, not surprisingly.

13. SF doesn't feel like the only place anymore. We all have access to the same frontier models. We all read the same X feed. A founder in NYC or Lagos is calling the same APIs as a founder in SoMa. In the past it felt like SF was always light-years ahead; it doesn't feel that way anymore. It's okay not to live in SF and have BIG DREAMS.

14. The coworking spaces in SF are half empty but the coffee shops are packed. People want to be around people. I had a few startup ideas here...

15. Walking around the Mission I noticed something: the street-level businesses, the taquerias, the barbershops, the laundromats, none of them use any AI at all.

16. I heard the phrase "agent debt" for the first time. Like technical debt but for agents. When you hack together an agent workflow fast and never clean it up, the system prompts conflict, the memory gets polluted, the tools overlap. 6 months later the agent is doing weird things and nobody knows why lol.

17. Met a few people who carry two phones now. One for personal. One that's basically an agent terminal running Telegram or iMessage connections to their agent fleet.

It's always amazing to get that dose of inspiration in SF. I FEEL INSPIRED.

But I'm so happy to be back home, locked in and building.

We're 12-18 months into a shift that will take 15 years to play out. The urgency in every conversation was real.

What an incredible time to be building.

Paramendra Kumar Bhagat retweeted

I watched a billionaire carry a food tray for his employees.

No assistant. No ego. Just a Harsh Mariwala thing 🙏

I worked under him for 19 years.

12 of those reporting to him directly or one level below. What I learnt from him, no MBA class can teach.

During market visits, he would walk to the counter, buy food for the team, pick up the tray himself, and serve us.

A man worth $6.9 billion carrying a tray for people 8 levels below him.

No fancy watch. No designer belt. No expensive pen.

The richest person in the room always looked like the simplest.

He gave me ₹1 crore and said go build a company in Bangladesh.

I built it from nothing. He never micromanaged. Just trusted.

That ₹1 crore business is worth ₹16,000 crores today.

Three things I learned from him that no business school will teach you:

1. Have a Right to Win before you enter any market.

2. Innovation is not a department, it is survival.

3. Hire people better than you. Delegate fully. Review relentlessly.

He did not build a ₹1 lakh crore company by being the smartest.

He built it by being the most humble, and through a “let go” approach.

Humility is not weakness. It is the highest form of confidence.

Paramendra Kumar Bhagat retweeted

Hear, hear!

It was so frowned upon in our last raise as our only GTM channel!

Now LinkedIn is under 10% of our overall new ARR, but man, it has a massive influence on existing customers, talent and potential investors!

Garry Tan @garrytan

Look guys, LinkedIn is many things, but it is a place where you can find real customers

I’m joining @OpenAI as Chief Marketing Officer, Business.

Some companies build great products. A few change what people believe is possible.

OpenAI is one of them.

Lots to learn. Lots to build. Sleeves rolled up. @thsottiaux @sama @gdb @dhdresser @DaneVahey @jasonkwon

Trishla Ostwal @trishlaostwal

This just in: OpenAI taps ServiceNow's chief marketing officer Colin Fleming as CMO for its business unit adweek.com/media/openai-h…

@yonashav Hire me as an outside consultant to help you with it. technbiz.blogspot.com/2026/05/inner-…

Paramendra Kumar Bhagat retweeted

@NayrhitB The AI Marketing Revolution: How Artificial Intelligence is Transforming Content, Creativity, and Customer Engagement a.co/d/5T9aKEm

The AI Marketing Revolution youtube.com/watch?v=YbYRN2…

The 8-Step Sales Playbook: From Prospecting to Closing paramendra.gumroad.com/l/selling

Paramendra Kumar Bhagat retweeted

@NayrhitB Digital Marketing Minimum: Channels, Optimization, and Analytics youtube.com/watch?v=LAFTQf…

Digital Marketing Minimum a.co/d/2zLKh8B

Marketing Principles Plus AI a.co/d/b07vhfm