Julia Rozanova @ ICLR

@juliaroz

Working on Payments Foundation Models at @Visa in Cambridge, UK! ~ PhD in Natural Language Processing, Uni of Manchester ~ ~ Catch me on the dance floor! ~

Introducing ml-intern, the agent that just automated the post-training team @huggingface

It's an open-source implementation of the real research loop that our ML researchers do every day. You give it a prompt, it researches papers, goes through citations, implements ideas in GPU sandboxes, iterates and builds deeply research-backed models for any use case. All built on the Hugging Face ecosystem.

It can pull off crazy things:

We made it train the best model for scientific reasoning. It went through citations from the official benchmark paper. Found OpenScience and NemoTron-CrossThink, added 7 difficulty-filtered dataset variants from ARC/SciQ/MMLU, and ran 12 SFT runs on Qwen3-1.7B. This pushed the score 10% → 32% on GPQA in under 10h. Claude Code's best: 22.99%.

In healthcare settings it inspected available datasets, concluded they were too low quality, and wrote a script to generate 1100 synthetic data points from scratch for emergencies, hedging, multilingual etc. Then upsampled 50x for training. Beat Codex on HealthBench by 60%.

For competitive mathematics, it wrote a full GRPO script, launched training with A100 GPUs on hf.co/spaces, watched rewards climb and then collapse, and ran ablations until it succeeded.

All fully backed by papers, autonomously.

How does it work? ml-intern makes full use of the HF ecosystem:

- finds papers on arxiv and hf.co/papers, reads them fully, walks citation graphs, pulls datasets referenced in methodology sections and on hf.co/datasets
- browses the Hub, reads recent docs, inspects datasets and reformats them before training so it doesn't waste GPU hours on bad data
- launches training jobs on HF Jobs if no local GPUs are available, monitors runs, reads its own eval outputs, diagnoses failures, retrains

ml-intern deeply embodies how researchers work and think. It knows what data should look like and what good models feel like.

Releasing it today as a CLI and a web app you can use from your phone/desktop.

CLI: github.com/huggingface/ml…
Web + mobile: huggingface.co/spaces/smolage…

And the best part? We also provisioned $1k in GPU resources and Anthropic credits for the quickest among you to use.

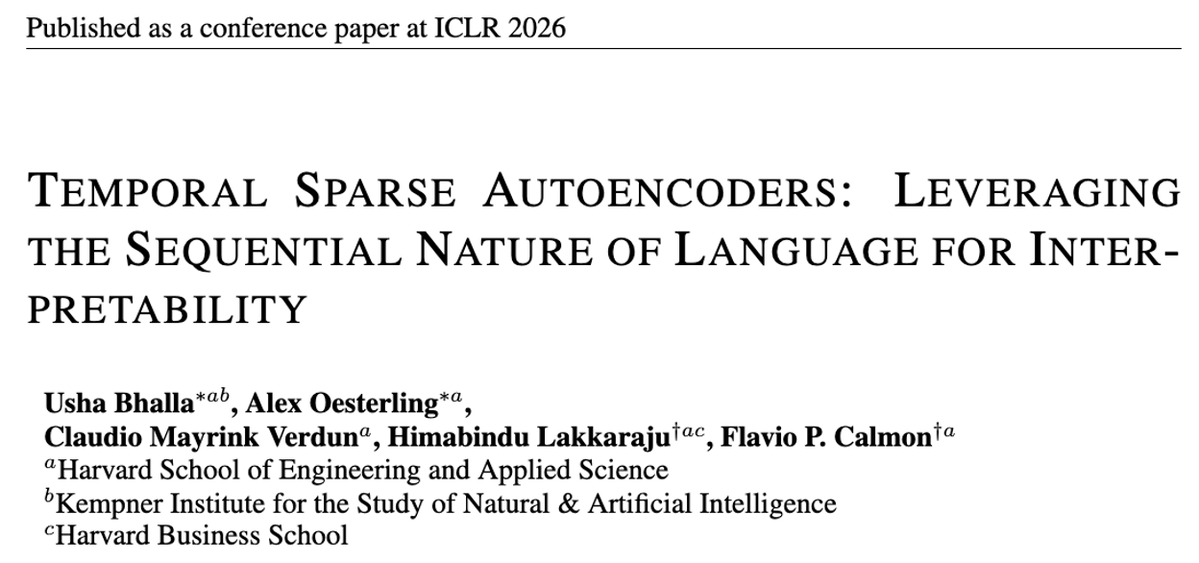

I'm at ICLR with a poster on *DMAP: A Distribution Map for Text* led by the excellent Tom Kempton (@UncleKempez), together with @Visa colleagues. Pop by for a cool story on how our method detected a crucial data error in several major synthetic text detection papers!

really good post about MRCR vs graphwalk, made some visualizations to better understand both evals. still can't explain the MRCR downgrade on opus 4.7 tho: if it's a data thing, they could have just included it? and if it's not, does that mean their modeling choices have a fundamentally different impact on graphwalk vs MRCR?

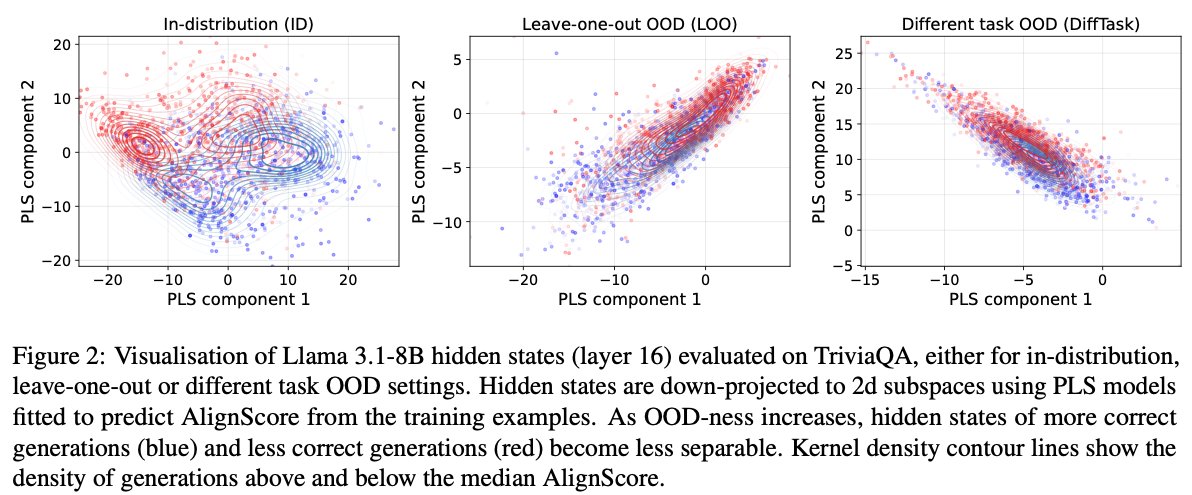

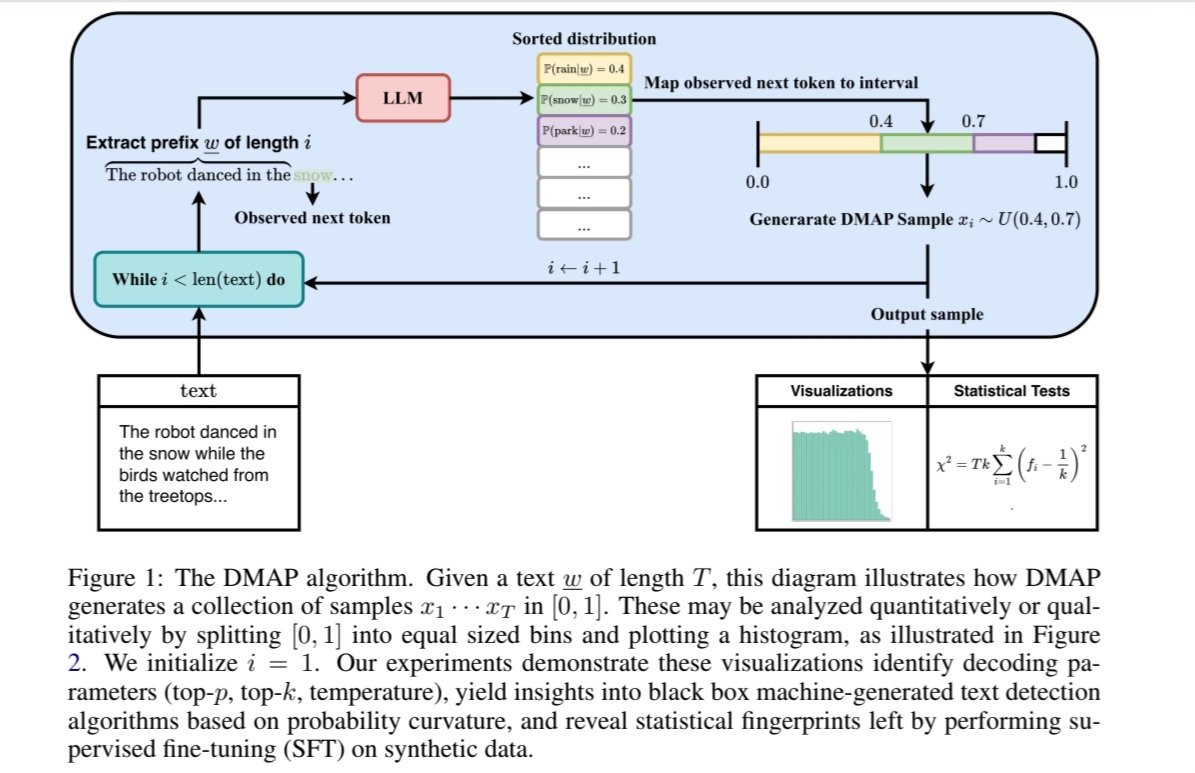

Now reading:

DON’T LET CLAUDE READ YOUR ENV FILE DON’T LET CLAUDE READ YOUR ENV FILE DON’T LET CLAUDE READ YOUR ENV FILE DON’T LET CLAUDE READ YOUR ENV FILE DON’T LET CLAUDE READ YOUR ENV FILE