Matt Karrmann

146 posts

@karrmannmatt

Sucker for a good mental model. Working on Presto @Meta. All views expressed are a superposition of my own and my employer's.

San Francisco, CA · Joined March 2023

1.4K Following · 81 Followers

Pinned Tweet

@ThePrimeagen > * Now coordination is harder because things can "move faster"

I've been thinking this too

I assume management is just hoping that power users will steamroll the coordination gap

Will be interesting to see how it plays out...

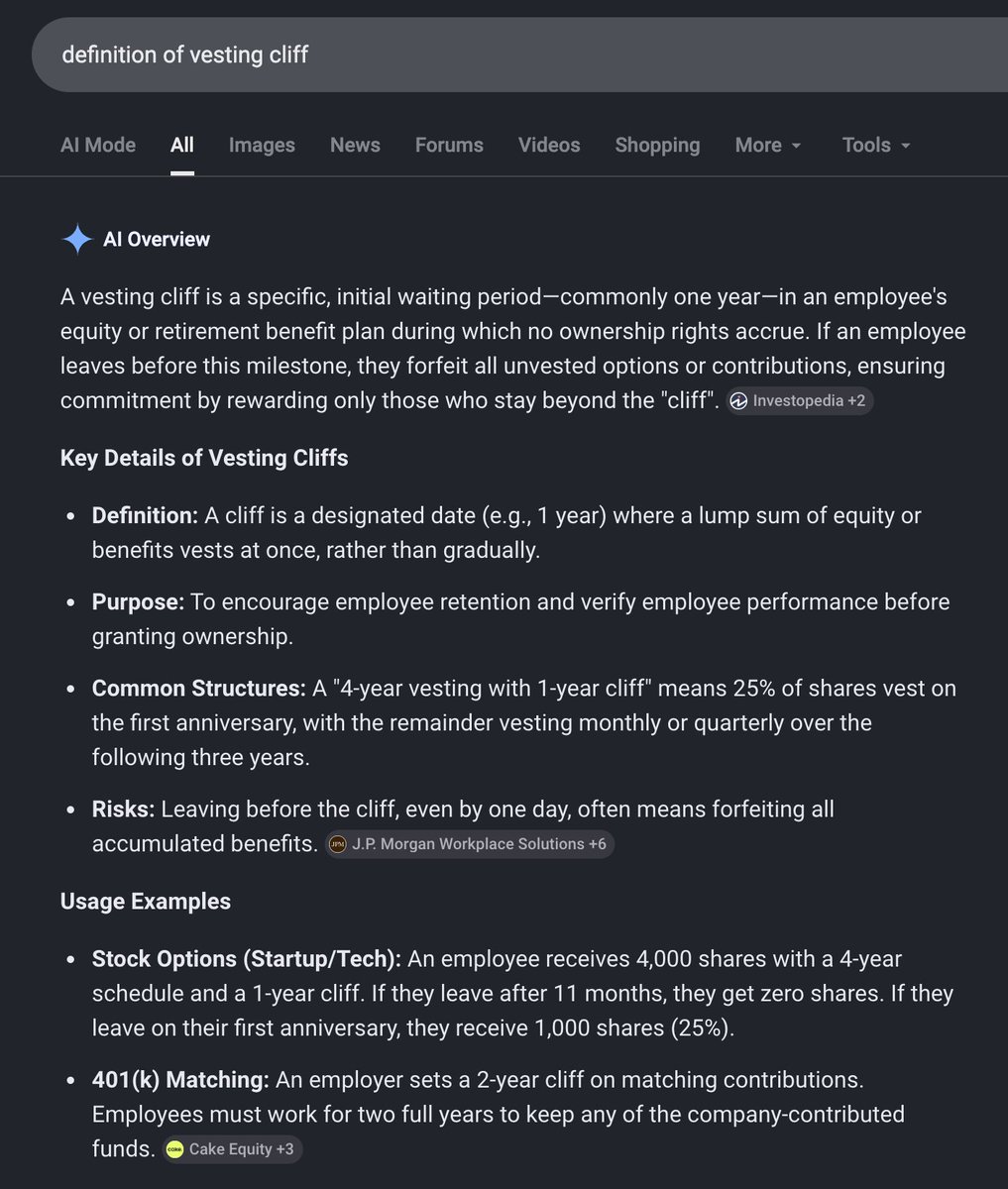

@theo @kunchenguid @ThePedroProenca @mil000 Usually just "cliff". I've heard "vesting cliff" in this context, but it's less common and I agree it's wrong.

Fwiw most of the comments you called dumb/wrong only used the word "cliff"...

@kunchenguid @karrmannmatt @ThePedroProenca @mil000 Are they saying “cliff” or “vesting cliff”? Because one of those terms has a very specific legal definition.

Your office chair is a “seat”, but it is not a “bike seat”.

?

The “Cliff” usually refers to the point where you go from 0 equity to actual equity. Industry standard is 1 year. OAI follows that afaik.

From that point, you vest on a schedule. Every month or every 6 months. Never seen a continued vest schedule that is yearly, but it wouldn’t be a “cliff” either way.
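
A minimal sketch of the arithmetic behind that definition. It assumes a 1-year cliff, vesting every 6 months afterwards, a 4-year grant, and linear vesting. The linear split and all parameter names are illustrative assumptions; real grants can be weighted differently.

```python
def vested_fraction(months_employed: int,
                    cliff_months: int = 12,
                    period_months: int = 6,
                    total_months: int = 48) -> float:
    """Fraction of a grant vested, assuming linear vesting (an assumption)."""
    if months_employed < cliff_months:
        # Before the cliff, nothing has vested.
        return 0.0
    months = min(months_employed, total_months)
    # At the cliff, everything accrued so far vests at once. After that,
    # more vests only at the end of each full vesting period.
    periods_after_cliff = (months - cliff_months) // period_months
    vested_months = cliff_months + periods_after_cliff * period_months
    return vested_months / total_months
```

Under these assumptions, month 11 gives 0.0, month 12 gives 0.25, and the grant is fully vested at month 48, which is the end of the schedule rather than a second cliff.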

@theo @ThePedroProenca @mil000 > Nobody uses them this way

Evidently some people use the term this way! It's standard terminology inside some companies, apparently not Amazon

Happy to concede it's "wrong" to use it that way, but I can promise it's common

You are wrong and idk why you're being so insistent about it.

I worked at Amazon. My vesting cliff was 1 year, and then my vesting schedule was every 6 months. The 4 year window wasn't my "cliff", it was the end of my schedule.

You're using the terms wrong. Please stop misinforming my audience. Nobody uses them this way and you are just wrong.

@theo 🫤 ha never thought I'd get dunked on by you! Especially over something as silly as this...

Are you saying that all of us are collectively "wrong" for using this phrase? What's the correct phrase for the drop in TC at Big Tech after 4 years?

@theo @ThePedroProenca @mil000 It means different things in different contexts!

In Big Tech, it refers to the drop in TC after your initial grant vests. In most startup contexts, it means what you're saying.

I'm not trying to argue one definition is "correct", but there's a miscommunication here

@karrmannmatt @ThePedroProenca @mil000 You are wrong. The “cliff” has never meant “when your stock fully vests”.

It refers to the temporary hold on all vesting for new hires. The rest is just your vesting schedule.

@theo @ThePedroProenca @mil000 Sorry Theo, the above comment is standard terminology in some places, e.g. Big Tech

(I have no idea whether it applies to OAI or not)

@ThePedroProenca @mil000 You have no idea what any of these terms mean

@knowclarified Demand has plummeted, most doing big layoffs and/or trying to pivot

This got me genuinely wondering, do coding bootcamps still exist? Are there any that have survived the aipocalypse?

Yonan @yonann

Dave Ramsey says a $600 pressure washing job can be the first step to making 150K a year: "You don’t want to be 63 years old still pressure washing. But to get through this week, you can do a lot of pressure washing." "Use the pressure washing money to pay $10,000 for code school, then go make 150K a year coding. Every move should be a step toward where you want to be in 10 years."

@athenaeumbc I'd expect that the easiest SAT Reading questions merely test basic literacy. Seems fine

@davidad Few people have a consistent stance on numerical ontology.

The phrase "imaginary number" is the worst marketing blunder in history. No surprise most people think mathematicians are just fucking with them.

There is a hypothesis that birth order effects (on things like income and educational attainment) are in part respiratory pathogen effects: younger kids get more of them from their older siblings. This cool recent paper uses Danish administrative data to argue that this is true and a pretty large part of the story. (They claim 70% of the birth order effect on long-run wages.)

Other work has previously shown that severe infections matter for long-run outcomes, and it's well-established that birth order matters, but I haven't until now seen anyone convincingly show that standard respiratory pathogens impose long-term costs on infant siblings.

nber.org/system/files/w…

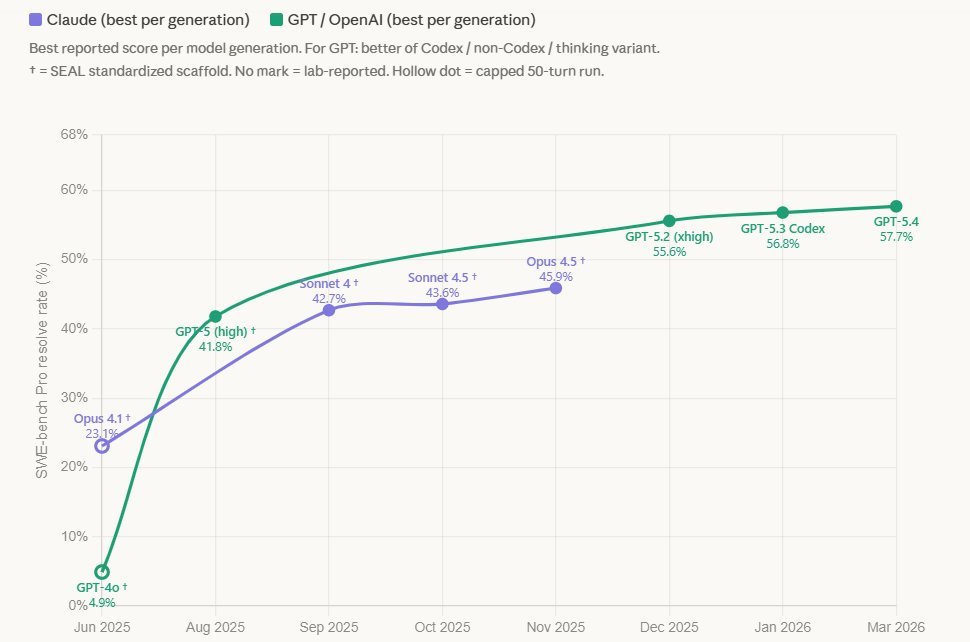

@dodgelander @GaryMarcus Maybe there is a plateau, I'm not arguing about that.

It's just silly to point to a benchmark which has been saturated as evidence of a plateau (and even sillier to say no one wants to talk about it when it was very publicly talked about by OpenAI)

@karrmannmatt @GaryMarcus plateau in the "recommended" swe bench pro by openai for your entertainment

Matt Karrmann retweeted

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.
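
A minimal sketch of the ingest step, assuming the raw sources are already `.md` files. The `summarize` function is a hypothetical stand-in for the LLM call, and the `index.md` name and `[[link]]` format are illustrative choices, not details from the post.

```python
from pathlib import Path


def summarize(text: str) -> str:
    """Hypothetical placeholder for an LLM summary: first non-empty line."""
    for line in text.splitlines():
        if line.strip():
            return line.strip()[:120]
    return "(empty)"


def compile_index(raw_dir: Path, wiki_dir: Path) -> Path:
    """Write wiki/index.md with one summary line per source in raw/."""
    wiki_dir.mkdir(parents=True, exist_ok=True)
    lines = ["# Index", ""]
    # Only .md sources are handled here (a simplifying assumption).
    for src in sorted(raw_dir.rglob("*.md")):
        summary = summarize(src.read_text(encoding="utf-8"))
        lines.append(f"- [[{src.stem}]]: {summary}")
    index = wiki_dir / "index.md"
    index.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return index
```

Rerunning `compile_index(Path("raw"), Path("wiki"))` after adding sources regenerates the index, which is the simplest possible form of the incremental "compile" idea.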

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.
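
A sketch of one mechanical check that could run before any LLM-based health check: finding links that point at pages that do not exist. It assumes Obsidian-style `[[wikilinks]]` and one page per `.md` file; the function name and report shape are illustrative, not from the post.

```python
import re
from pathlib import Path

# Captures the target of [[Target]], [[Target|alias]] and [[Target#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def broken_links(wiki_dir: Path) -> dict[str, list[str]]:
    """Map each page file name to the link targets that have no page."""
    pages = {p.stem for p in wiki_dir.rglob("*.md")}
    report: dict[str, list[str]] = {}
    for page in sorted(wiki_dir.rglob("*.md")):
        targets = WIKILINK.findall(page.read_text(encoding="utf-8"))
        missing = sorted({t.strip() for t in targets} - pages)
        if missing:
            report[page.name] = missing
    return report
```

The resulting report could be handed to the LLM as a list of article candidates to write or links to repair.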

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
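
A sketch of what a small, naive search over the wiki might look like. This is an assumption about the general shape, not the tool described above: it scores each `.md` file by how often the query terms appear in it, with no stemming or ranking model.

```python
import re
from collections import Counter
from pathlib import Path


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def search(wiki_dir: Path, query: str, top_k: int = 5) -> list[tuple[str, int]]:
    """Return up to top_k (file name, score) pairs, best match first."""
    terms = tokenize(query)
    scores = []
    for page in wiki_dir.rglob("*.md"):
        counts = Counter(tokenize(page.read_text(encoding="utf-8")))
        score = sum(counts[t] for t in terms)  # raw term frequency
        if score:
            scores.append((page.name, score))
    # Highest score first; ties are broken alphabetically by file name.
    scores.sort(key=lambda item: (-item[1], item[0]))
    return scores[:top_k]
```

Wrapping `search` in a small command-line entry point would let an LLM agent call it as a tool, in the spirit of the CLI handoff described above.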

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

Matt Karrmann retweeted

A lot of folks talk about "escaping the permanent underclass". If AGI pans out, the future class divide won't be based on wealth, but on cognitive agency. There will be a "focus class" (those who control their attention and actually do things) and a "slop class" (those whose reward loops are fully RL-managed by AI)

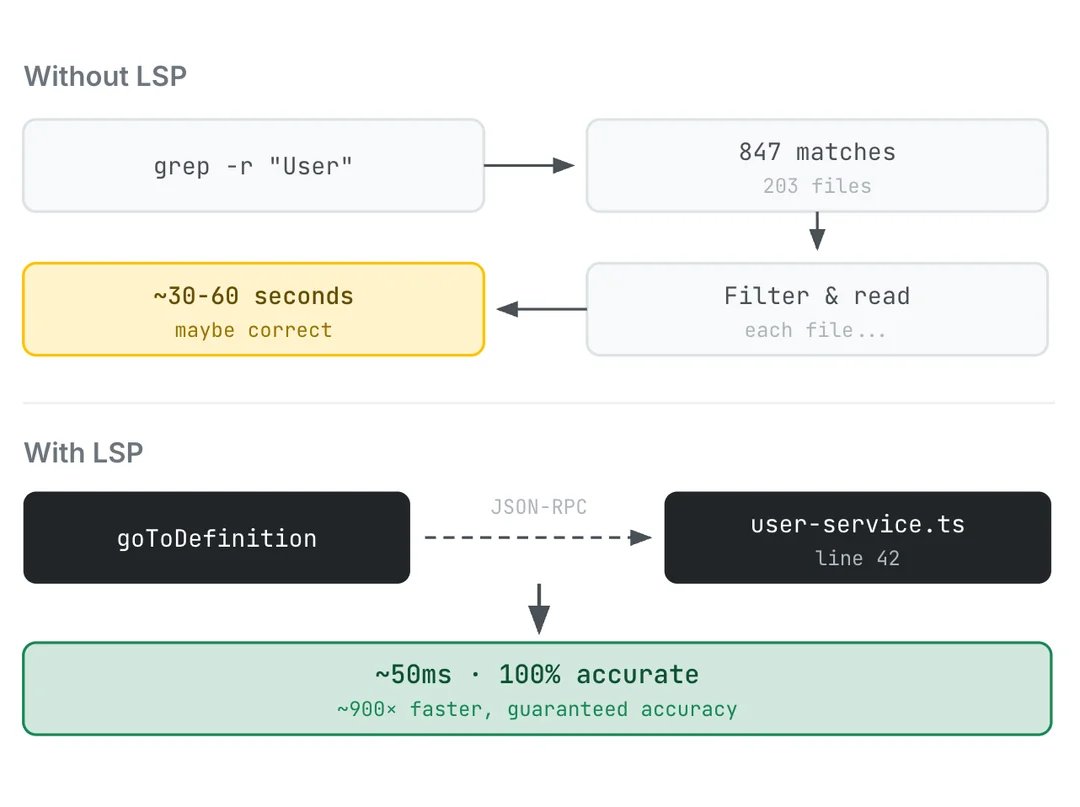

@micahtid @om_patel5 Cuz it's not just a switch to flip. You need to set up LSP integration, which is non-trivial and varies a lot between environments

claude code has a hidden setting that makes it 600x faster and almost nobody knows about it

by default it uses text grep to find functions.

it doesn't understand your code at all. that's why it takes 30-60 seconds and sometimes returns the wrong file

there's a flag called ENABLE_LSP_TOOL that connects it to language servers. same tech that powers vscode's ctrl+click to jump straight to the definition

after enabling it:

> "add a stripe webhook to my payments page" - claude finds your existing payment logic in 50ms instead of grepping through hundreds of files

> "fix the auth bug on my dashboard" - traces the actual call hierarchy instead of guessing which file handles auth

> after every edit it auto-catches type errors immediately instead of you finding them 10 prompts later

also saves tokens because claude stops wasting context searching for the wrong files

2 minute setup and it works for 11 languages
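
A toy illustration of the difference between text search and definition lookup that the post is pointing at. It does not show how Claude Code or any language server works. It uses Python's standard `ast` module as a stand-in for semantic lookup, and the sample source and function names are made up for the example.

```python
import ast

SOURCE = '''
def charge(amount):
    return amount * 100

def refund(amount):
    # undo a charge
    return -charge(amount)
'''


def grep_lines(source: str, name: str) -> list[int]:
    """Text search: every line number where the name appears at all."""
    return [i for i, line in enumerate(source.splitlines(), start=1)
            if name in line]


def definition_line(source: str, name: str):
    """Syntax-aware lookup: the line where the function is defined."""
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)) and node.name == name:
            return node.lineno
    return None
```

Here `grep_lines(SOURCE, "charge")` matches the definition, a comment, and a call site, while `definition_line(SOURCE, "charge")` returns only the definition.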
