Miralib Balamar

@KaspaCrypto

This is a private account re the amazing Kaspa cryptocurrency and related stuff. Visit @kaspaunchained for the closest thing to an "official" account.

Coming soon ... #on𐤊

@binance,

Thanks for including me in the top 100 blockchain people list, appreciate the signal!

I must decline the Dubai invite, though. I mean no disrespect, but many of the award voters are avid kaspians who rooted for my kaspa status at least as much as for my research. Let them win, or count me out.

Crypto has turned from a euphoric cypherpunk project to a house-friendly casino. You may not be the culprit, but as a top player you hold the lion’s share of the responsibility to correct this, and the October crash your USDe oracle glitch helped trigger adds to what needs to be addressed.

There are three classes of crypto, as @mert put it recently: commercial crypto, casino crypto, cypherpunk crypto.

DAG Industrial | Episode #006: The Agentic Web 🤖🔗

In 2026, the workforce has changed. 82:1. That is the ratio of Autonomous AI Agents to human employees. But these agents have a problem: They are unbanked ghosts. 👻

Episode 006 breaks down how #Kaspa provides the financial nervous system for the $26 Trillion Machine Economy.

The 2026 Tech Stack:
🔹 The Problem: You can't run a fleet of million-transaction-per-second AI agents on a 15-minute block time.
🔹 The Solution: KIP-17 Covenants. We can now program "Spending Limits" directly into the UTXO. Your AI agent can hold money, but it can only spend it on approved services (Data, Energy, Compute).
🔹 The Scale: From V2X (Vehicle-to-Everything) to DePIN, Kaspa is the only PoW layer fast enough to catch the "stream" of machine payments.

The future isn't just about "Human-to-Human" transactions. It's about Machine-to-Machine (M2M) settlement.

Watch the full briefing below. 👇

#KAS #BlockDAG #AI #MachineEconomy #DePIN #Web3 #FutureOfWork

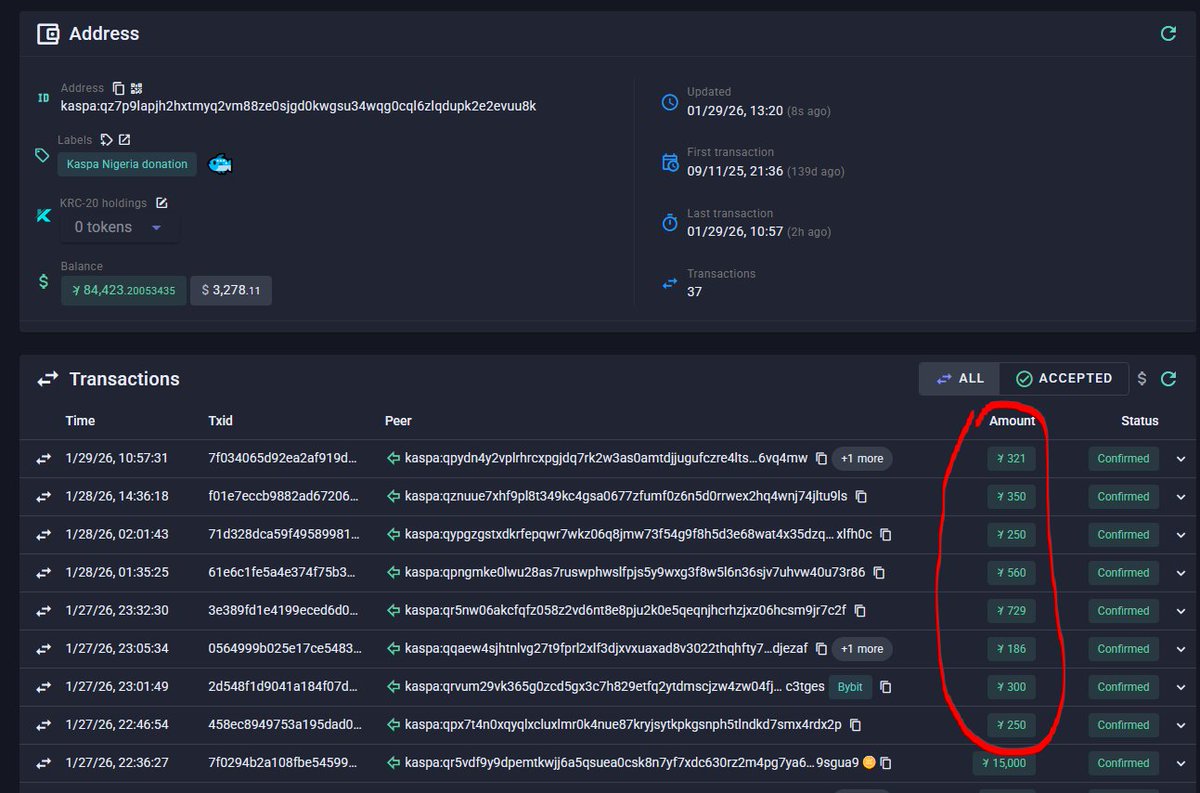

$KAS Wake up, community! Each time, hundreds vote 'YES' but LITERALLY almost no one donates. This has to stop! I’m matching the current balance RIGHT NOW. After that, I’ll add 50% of every new total between my updates. Stop voting, start sending! kas.coffee/kaspa_nigeria