Kaahan Radia

18 posts

@kradisme

Building something new @keyframelabs. Ex-Zipline.

@ycombinator @KeyframeLabs @parthnradia Try it live at keyframelabs.com!

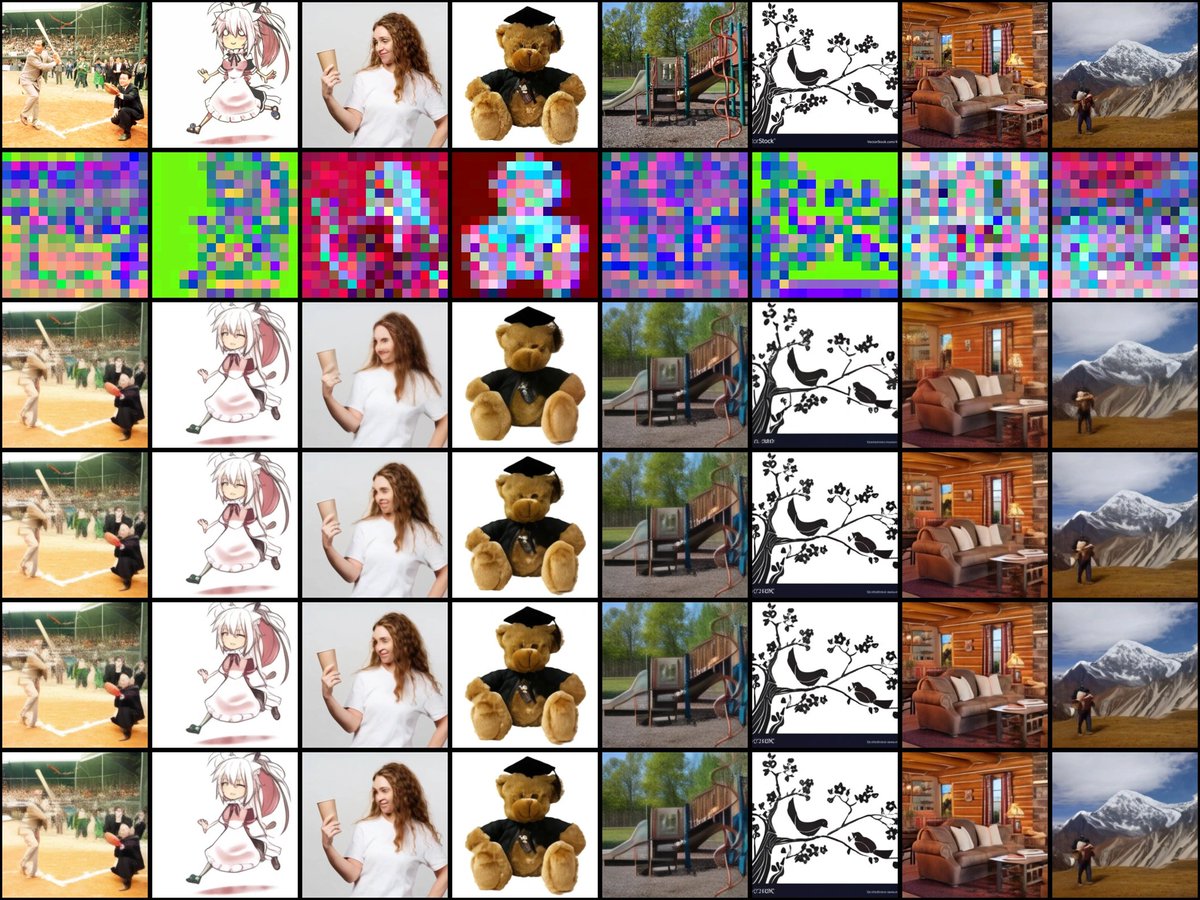

What's the right space to diffuse in: Raw Data or Latents? Why not both! In Latent Forcing, we order a joint diffusion trajectory to reveal Latents before Pixels, leading to improved convergence while being lossless at encoding and end-to-end at inference. w/ @drfeifei+... 1/n

any audiophile equipment better than airpods is 100% a waste of time and if you disagree you're literally just addicted

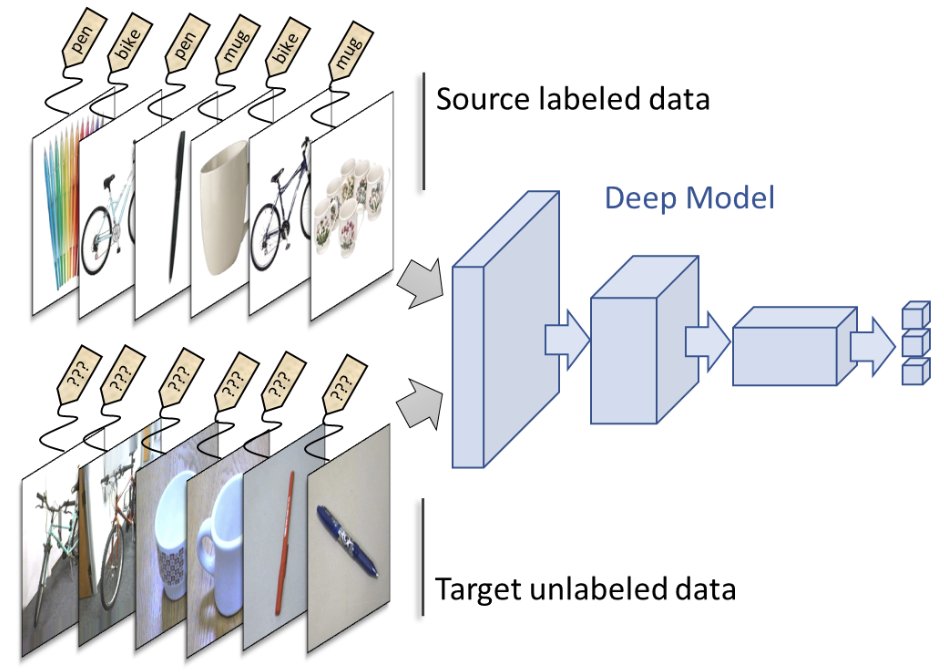

Writing this gave me flashbacks of when CLIP came out. Part of my lab was working on Domain Adaptation, i.e. adapting models to unseen domains. CLIP killed that field: CLIP has seen everything, so suddenly there was this model with no unseen domain. [1/2]