LangRouter

292 posts

@langrouter

The Amazon for AI agents. Products include LLM tokens, web search API, computing power, electricity, etc.

🚀 DeepSeek-V4 Tech Report Deep Dive: Building Tiny LLM Operators Efficiently with TileLang
Insights from Zhihu contributor SiriusNEO

🎬 Opening Insight
• The DeepSeek-V4 technical report has sparked heated discussion, with TileLang standing out as a key highlight
• Covers community technical progress and production-hardened experience with compilers/DSLs in LLM infrastructure
• Includes the official TileLang repo and DeepSeek's open-source TileKernels, a library of high-performance tiny LLM operators implemented in TileLang

⚖️ DSL vs Expert Handwritten CUDA Kernels
• LLM architectures are converging, so computation patterns are largely fixed; mainstream infra prefers handwritten kernels to reach peak performance
• Extreme approaches such as MegaKernel pursue fully manual scheduling optimization
• This leaves DSLs and traditional compilers in an awkward position
• TileKernels focuses on non-Tensor-Core operators: elementwise+reduce combinations, type casts, indexing, and other fine-grained tiny ops
• DeepSeek-V4 infra strategy:
✅ TileLang fused kernels replace scattered small operators
✅ Expert manual optimization for heavy Attention and GEMM kernels
✅ A healthy hybrid infra architecture for production deployment

✨ Core Advantages of TileLang for Small Operators
1️⃣ Development Edge
• Memory-bound small ops avoid the classic tradeoff between performance and development cost
• No complex warp specialization is needed; TileLang matches the performance ceiling of handwritten kernels
• Dramatically faster development with no performance loss
2️⃣ Maintenance & Hardware Migration
• Low mental burden for maintaining an operator library
• Compiler-side bugs can be fixed without modifying the original operator code
• Weak hardware dependency; smooth cross-backend deployment and migration
3️⃣ Strong Capability for Tensor-Core Operators
• Only 80 lines of Python in TileLang implement FlashMLA at 95% of native performance
• Qwen FlashQLA + TileLang GDN outperforms FlashInfer in specific scenarios
• Well suited to rapid verification of academic ideas: 80%-90% performance with minimal coding cost
• Clean dataflow modeling, friendly for open-source learning and reference
• The compile stack outputs standard source code (.cu for CUDA), so experts can hand-tune starting from the TileLang-generated template

🤖 TileLang & Future AI Agent Coding
• It is still unclear whether a DSL is more readable than raw CUDA for agents
• AI already writes CUDA well thanks to a large corpus
• TileLang lacks sufficient public training data for now
• Early practice: AI writes simple TileLang kernels better than zero-shot CUDA
• Long-term potential: TileLang abstracts dataflow logic and, once a corpus accumulates, frees agents from complex memory layout design

🧠 Core Design Essence of TileLang
• Obvious difference from Triton: explicit exposure of the memory hierarchy (L0/L1/L2)
• Two most iconic abstractions: Fragment + Parallel
Fragment Abstraction
• Abstracts the registers of all threads within a single CUDA block as one whole
• Avoids tedious manual task splitting across warp/thread levels
• The compiler automatically maps logical tensor accesses to the physical register layout
Parallel Abstraction
• Supports fine-grained element-wise operations (A[i,j]), going beyond Triton's tile-level ops
• Unifies shared memory and registers as tiles at different levels of the memory hierarchy
• Simplifies programming: focus only on tile data movement and tile computation
• Builds on the deep learning compiler research accumulated at MSRA

📌 Key TileLang Highlights in DeepSeek-V4 Report
1️⃣ Host CodeGen Optimization
• Leverages TVM-FFI to cut host-side kernel launch and tensor validation overhead
• Migrates Python-side logic to C++ and compiles it into the kernel host runtime
• Brings clear end-to-end latency benefits
2️⃣ Z3 Prover Integration
• Replaces TVM's weak built-in arithmetic solver with the Z3 prover
• Automatically eliminates redundant boundary checks when an out-of-bounds index is mathematically impossible
• Supports vectorization optimization and integer expression proofs
• Exposed more hidden bugs after integration (the previous conservative logic had masked potential issues)
3️⃣ Precision & Bitwise Consistency
• Critical for high-precision scenarios such as RL inference
• Disables fast-math by default and adopts standard IEEE intrinsics
• Aligns algebraic transformation logic with NVCC
• Resolves bit mismatches caused by implicit FMA fusion in native CUDA compilers

📝 Closing Summary
• TileLang plays an indispensable role in DeepSeek-V4 production infrastructure
• Sets a clear positioning template for modern DSLs in the LLM era
• Rich learning resources available: official docs, TileLang-Puzzles, TileOPs, XPUOJ
• Ideal for developers learning LLM kernel design and compiler DSL thinking

#DeepSeekV4 #LLM #DSL #AIInfra #CUDA #MoE
🔗 Full article: zhuanlan.zhihu.com/p/203303420272…
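
The bit-mismatch point under Precision & Bitwise Consistency can be illustrated in plain Python. This is a minimal sketch, not TileLang or NVCC code: `fma_exact` and `mul_then_add` are hypothetical helper names, the inputs are chosen by hand, and the fused operation is emulated with exact rational arithmetic (one rounding at the end) rather than a hardware FMA instruction. It only shows why a compiler that silently fuses `a * b + c` can produce different bits from one that does not.

```python
from fractions import Fraction


def fma_exact(a: float, b: float, c: float) -> float:
    """Emulate a fused multiply-add: compute a*b + c exactly, then round once."""
    return float(Fraction(a) * Fraction(b) + Fraction(c))


def mul_then_add(a: float, b: float, c: float) -> float:
    """Unfused version: the product is rounded to a double before the add."""
    product = a * b
    return product + c


# Hand-picked inputs (an assumption for illustration): the exact product is
# 1 - 2**-54, which is not representable as a double and rounds to 1.0.
a = 1.0 + 2.0 ** -27
b = 1.0 - 2.0 ** -27
c = -1.0

unfused = mul_then_add(a, b, c)  # product rounds to 1.0, so the sum is 0.0
fused = fma_exact(a, b, c)       # keeps the exact product, giving -2**-54

print("unfused:", unfused.hex())
print("fused:  ", fused.hex())
print("bitwise identical:", unfused.hex() == fused.hex())
```

The two results differ in every bit of the significand even though both are "correct" IEEE results for their respective operation sequences, which is why pinning the fusion behavior matters when outputs must match bit for bit.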

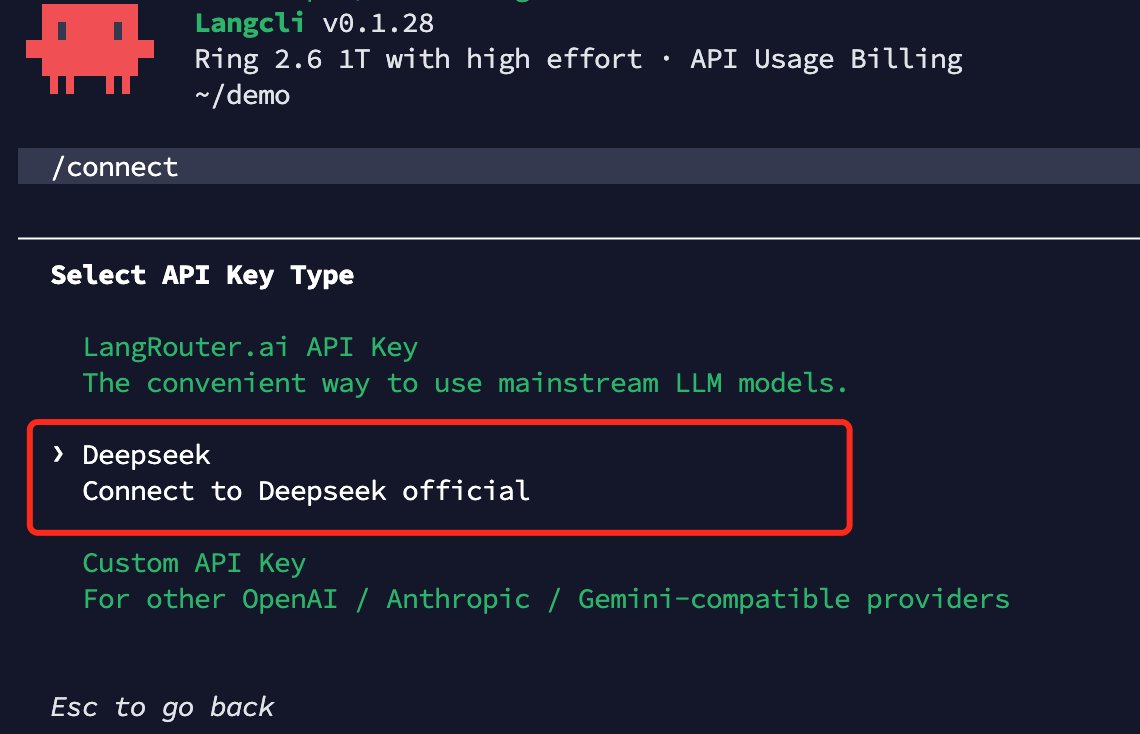

We are launching Ring-2.6-1T, a trillion-parameter flagship thinking model engineered for real-world complex tasks and production environments: 🚀
- Adjustable Thinking Effort: a dynamic compute mechanism to flexibly balance cognitive depth, token cost, and execution speed
- Agent-Optimized: built for high-frequency workflows, delivering rapid multi-step execution and tool orchestration with SOTA stability
- Deep Thinking: unlocks the model's maximum capability ceiling for rigorous mathematical logic and scientific research

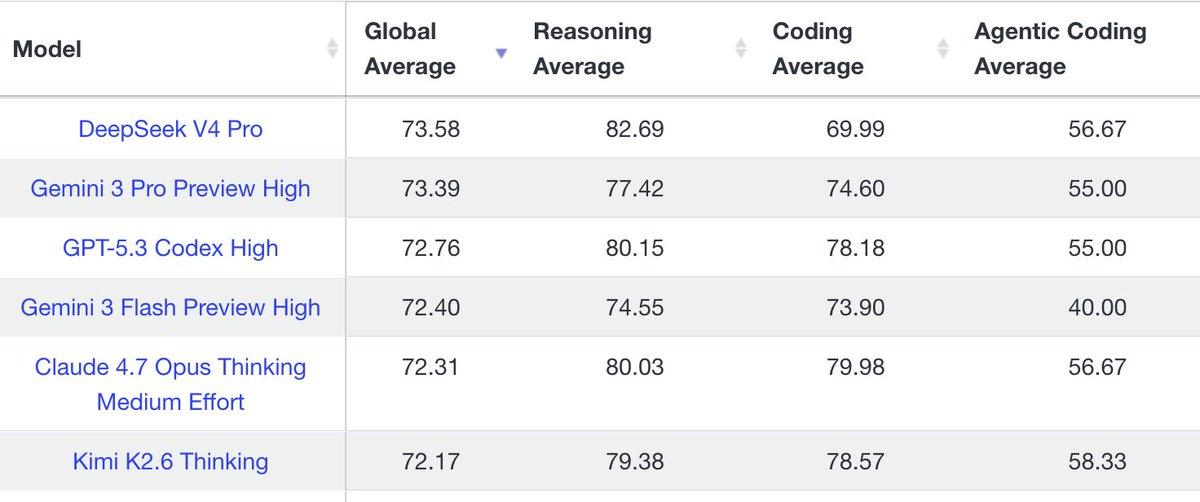

After trying out how fast DeepSeek V4 Flash is with Hermes Agent, it's a bit hard to go back to Kimi 2.6 / GPT 5.5....

Finally an interesting report from Baidu. A unique move at this scale – ERNIE 5.1 is basically a REAP'd 5.0. But what surprises me is this: V4 is somehow super-dominant on DeepSearchQA. This is unlikely to be benchmaxed (DS doesn't report this score anywhere).