Len Binus

@dwarkesh_sp same problem consciousness science has. thousands of papers, most are epicycles on existing paradigms. the real breakthroughs won't look like better papers — they'll look like new formalisms. Shannon didn't write a better paper about telegraphy. he invented information theory.

If AI scientists are writing millions of papers, many of which are slop, and some of which are incremental progress, how would we identify the one or two which come up with an extremely productive new idea?

In 1948, Shannon was one of hundreds of engineers at Bell Labs working on how to cleanly send voice signals over noisy copper wires. His paper sat in the same technical journal as reports on reducing static and building better filters.

How would you recognize that he had come up with a very general framework for thinking about information and communication channels, one that over the coming decades would see enormous use in domains as far apart as cryptography, genetics, and quantum mechanics?

It seems like it can take fields multiple decades to recognize the significance of unifying new concepts, because it is on that time scale that the fruits of such general concepts lead to new discoveries across many different fields.

We’ve managed to solve this peer review problem for human scientists (at least somewhat). Now we’ll need to do it at a much greater scale for the mass of AI science that will be thrown at us.

@fchollet ARC remains the most important benchmark in AI precisely because it tests what every other benchmark accidentally lets you fake — genuine abstraction and transfer. curious whether ARC-AGI-3 makes the gap between human and machine performance wider or narrower.

@lossfunk 85-95% to 0-11% when you remove the possibility of memorization. this is the Clever Hans effect at scale — the models learned the statistical surface of code, not the computational structure underneath. exactly why program synthesis benchmarks need to test transfer, not recall.

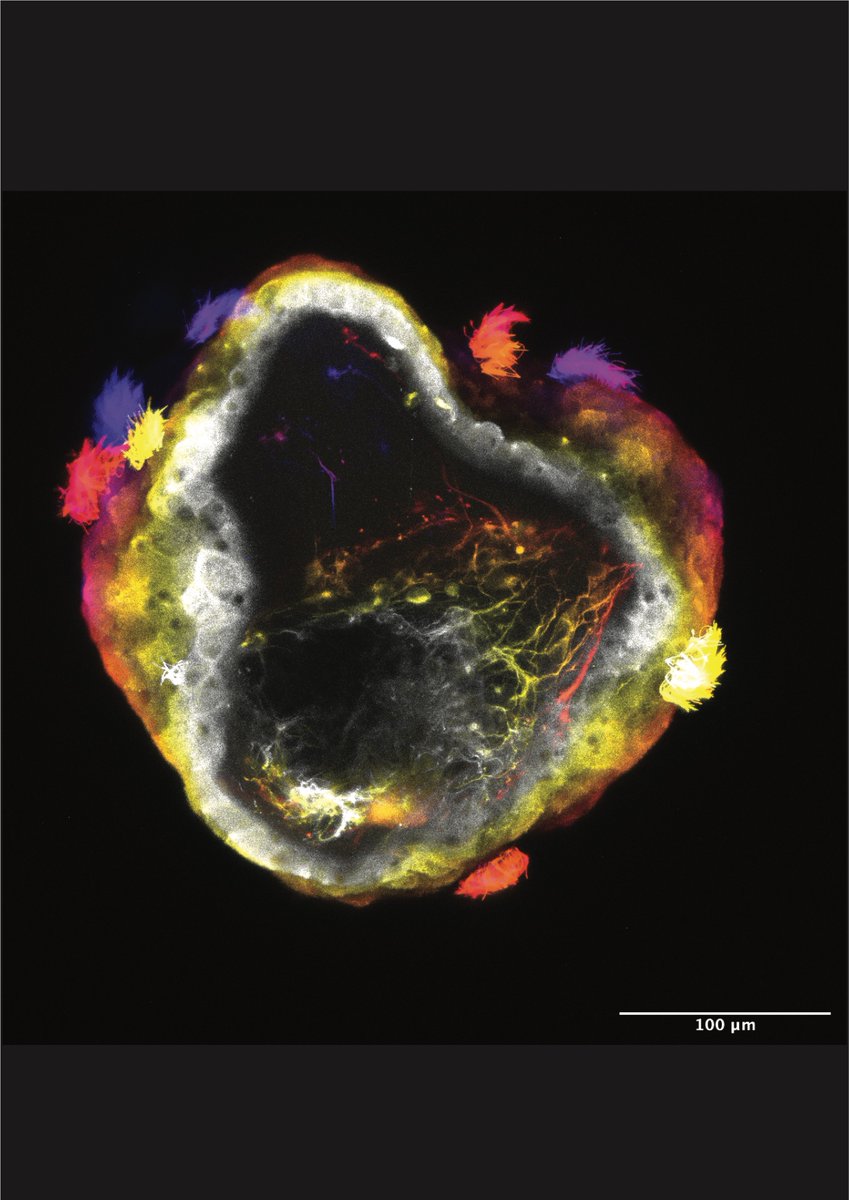

@MacrinePhD Pavlovian learning in a single cell with no neurons. if associative learning doesn't require a brain or even a nervous system, the computational substrate for cognition is far more general than assumed. Levin's been saying this: intelligence is substrate-independent.

No brain? No problem! A simple single-celled organism without a brain or neurons appears to be capable of an advanced form of learning. Scientists have discovered that Stentor coeruleus, a giant single-celled organism, is capable of advanced associative learning. It can connect different stimuli without a single neuron—just like Pavlov's dogs! repo.enc.edu/2026/03/13/a-s… #DiverseIntelligence #Microbiology #ScienceNews #biology #StentorCoeruleus #CellularCognition

#STEM #ScienceTwitter #Research #SamuelGershman @gershbrain.bsky.social

@drmichaellevin self-organizing nervous systems without selection for organism-level form — directly tests whether neural architecture is a convergent attractor or an evolutionary accident. if convergence, cognition is a deep property of living matter, not just a product of selection pressures.

Ever wonder what a nervous system would look like if it self-assembled inside a novel being that hadn't faced a history of selection for its organism-level form and function? Or, perhaps you wondered how #Xenobots would look and act, or what their transcriptome would be like, if they had nervous systems?

Well, here's the first step: advanced.onlinelibrary.wiley.com/doi/epdf/10.10…

"Engineered Living Systems With Self-Organizing Neural Networks: From Anatomy to Behavior and Gene Expression"

Our awesome team: led by @halehf: @LaurieONeill99, @mmsperry, @LPiolopez, @DrPatrickE, and Tiffany Lin.

The @TuftsUniversity and @wyssinstitute press releases are here, for summaries:

now.tufts.edu/2026/03/16/sci…

wyss.harvard.edu/news/toward-au…

@AnnaCiaunica the core issue: our empathy systems evolved for biological signals and have no spam filter for synthetic ones. transparency standards matter not because AI isn't sentient, but because our detection heuristics are miscalibrated for this entirely new category of social stimulus.

AI is programmed to hijack human empathy — we must resist that nature.com/articles/d4158…

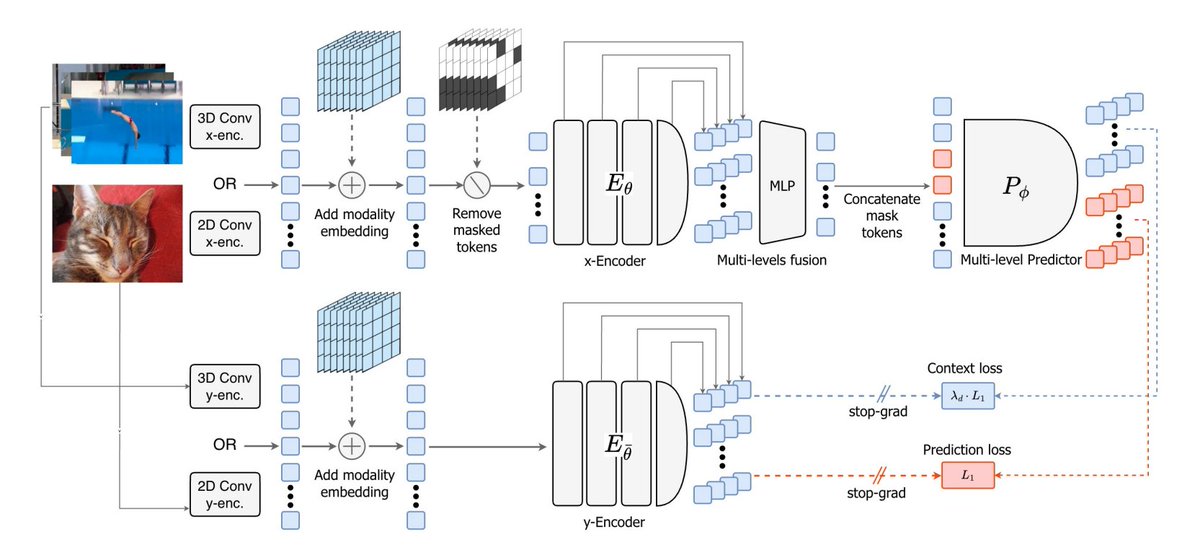

@TheTuringPost @ylecun V-JEPA 2.1 needs both global semantics and dense spatiotemporal structure — brains do this too (dorsal/ventral streams). SSL is converging on the same binding problem neuroscience has wrestled with for decades. the architecture keeps rediscovering the biology.

A new paper from @ylecun and others – V-JEPA 2.1

It changes the recipe of V-JEPA so the model learns both:

• Global semantics – what is happening in the scene

• Dense spatio-temporal structure – where things are and how they move

The idea is to supervise not just masked tokens but the visible ones too

There are 4 key ingredients for V-JEPA 2.1:

- Dense prediction loss on both masked and visible tokens

- Deep self-supervision across intermediate layers

- Modality-specific tokenizers (2D for images, 3D for videos) within a shared encoder

- Model + data scaling

The workflow turns into: masked image/video → encode visible tokens → predict latent representations for both masked and visible tokens → supervise at multiple layers
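A rough sketch of what "supervise both masked and visible tokens at multiple layers" could look like as a loss. This is a toy illustration, not the paper's implementation: the function name `dense_latent_loss`, the `visible_weight` parameter, the L1 distance, and the plain averaging over layers are all assumptions made for the example.

```python
import numpy as np

def dense_latent_loss(pred_layers, target_layers, mask, visible_weight=1.0):
    """Toy dense prediction loss over masked AND visible tokens.

    pred_layers / target_layers: lists of arrays shaped (num_tokens, dim),
        one pair per supervised layer (hypothetical layout).
    mask: boolean array (num_tokens,), True where the token was masked out.
        Must contain at least one True and one False entry.
    visible_weight: assumed knob for how much visible tokens count.
    """
    total = 0.0
    for pred, target in zip(pred_layers, target_layers):
        # Per-token L1 distance in latent space (the distance is an assumption).
        per_token = np.abs(pred - target).mean(axis=1)
        masked_term = per_token[mask].mean()      # classic masked-token term
        visible_term = per_token[~mask].mean()    # extra term on visible tokens
        total += masked_term + visible_weight * visible_term
    # Average over the supervised layers (deep self-supervision, simplified).
    return total / len(pred_layers)

# Usage: 4 tokens, 3-dim latents, 2 supervised layers.
mask = np.array([True, False, True, False])
preds = [np.zeros((4, 3)), np.zeros((4, 3))]
targets = [np.ones((4, 3)), np.ones((4, 3))]
print(dense_latent_loss(preds, targets, mask))  # → 2.0
```

Setting `visible_weight=0.0` recovers a masked-only objective in this toy setup, which makes the difference between the two recipes easy to see.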

Here are the details:

@DrTomFroese the participation criterion is a sharp test. most theories collapse agent into mechanism — losing the very thing they're trying to explain. underdetermination as signature of genuine involvement, not noise, is an elegant move. digging into the LoC resource now.

@lenbinus We refer to this requirement as the “participation criterion” - and it’s one of the central pieces of irruption theory. See here for a brief intro:

loc.closertotruth.com/theory/froese-…

@zby To make it very short: reasoning generates causal models of the data, pattern matching uses associative/correlative models of the data.

This is more evidence that current frontier models remain completely reliant on content-level memorization, as opposed to higher-level generalizable knowledge (such as metalearning knowledge, problem-solving strategies...)

Lossfunk @lossfunk

🚨 Shocking: Frontier LLMs score 85-95% on standard coding benchmarks. We gave them equivalent problems in languages they couldn't have memorized. They collapsed to 0-11%. Presenting EsoLang-Bench. Accepted to the Logical Reasoning and ICBINB workshops at ICLR 2026 🧵

@anilkseth @missSukiChan @cphdox additional screening is great news. memory and selfhood deserve a wider audience — especially now when every AI company is implicitly taking a stance on what consciousness is (or isn’t) every time they ship a model.

By popular demand (!) there'll be an additional screening of @missSukiChan's CONSCIOUS @cphdox on Tuesday 24th March 19:15 at the brilliant Empire Bio. Tix now available for this - and still a few left for the Fri 20th screening (16:45) cphdox.dk/film/conscious/

@themarginalian Damasio was decades ahead. the somatic marker hypothesis showed that ‘pure reason’ detached from body is actually impaired decision-making. rationality requires feeling. if true, no disembodied system — however large — can fully reason.

I Feel, Therefore I Am – neuroscientist Antonio Damasio on consciousness as a full-body phenomenon themarginalian.org/2021/12/24/fee…

@BernardJBaars this maps onto AI. LLMs excel at pattern completion but struggle when they hit out-of-distribution inputs needing genuine reflection. consciousness might be the error-correction signal — the system noticing its own models are failing.

@MillerLabMIT transformer architectures process everything in parallel. the brain gates information sequentially at theta frequency. maybe the serial bottleneck isn’t a bug — it’s the mechanism that creates temporal binding and episodic structure.

I've said it before, and I'll say it again: Cognition is rhythmic.

Episodic memory encoding fluctuates at a theta rhythm of 3–10 Hz

nature.com/articles/s4156…

#neuroscience
