Giovanni Petri

@lordgrilo

Topology, complex networks, neuroscience; Professor @NUnetsi; PI @NPLab_; PI @ProjectCETI; Science Comm Fellow @museumofscience; Angoleiro, wine-drinker.

The @Stanford biosciences affirmation taken by all of our PhD grads is inspiring, with pledges to: 1) Do science with rigor, integrity, and uncompromising respect for truth 2) Show kindness and compassion to colleagues 3) Show honesty and respect to the public 4) Place public trust in science above self 5) Foster inclusiveness to drive progress 6) Through our actions, honor the legacy of scientists who precede us and earn the respect of those who follow us. 7) Work to advance knowledge for the benefit of all humanity and the world. Seriously brought a tear to my eye.

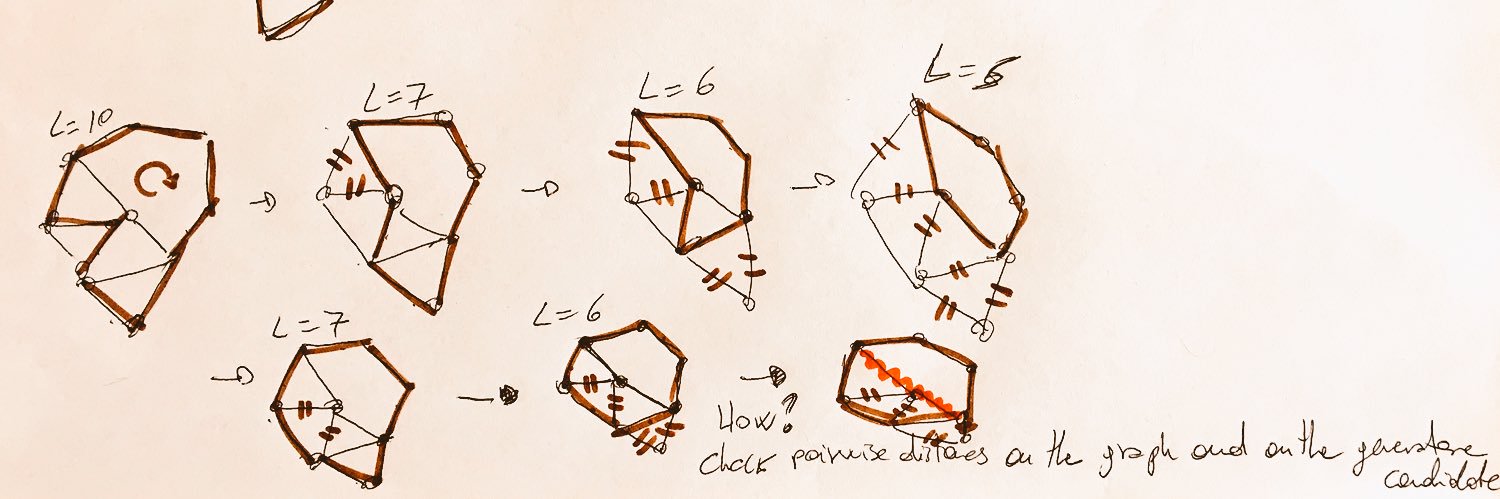

New preprint! "It's all about covers — Persistent Homology of Cover Refinements" We argue that covers are the natural level of abstraction for building filtrations and comparing persistence modules. arxiv.org/abs/2602.22784

📌Save the Date! The flagship conference of the Network Science Society, NetSci 2026, is coming to Northeastern University’s Network Science Institute, June 1-5, 2026. Registration opens soon! 🔗 netsci2026.com

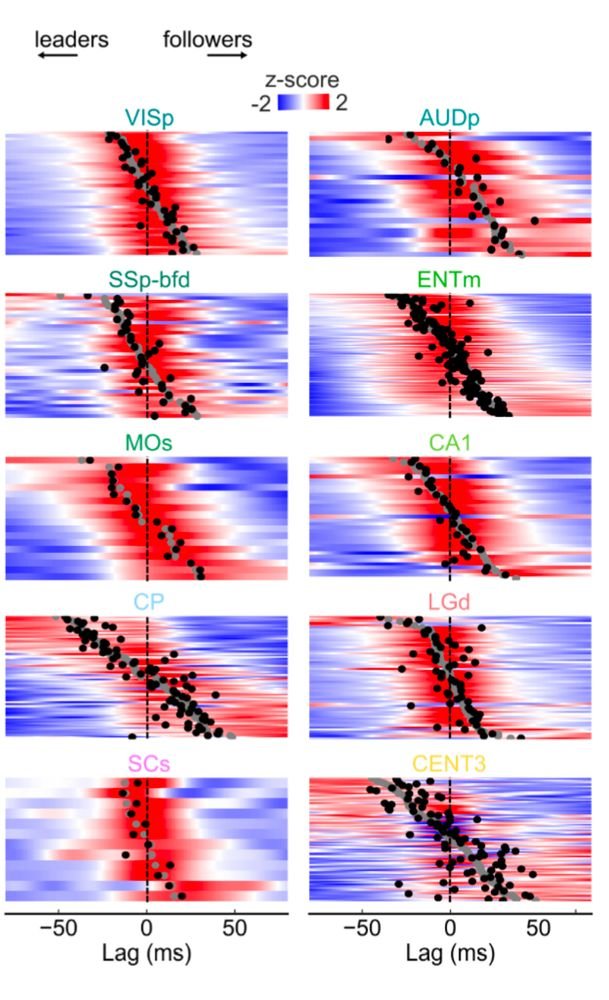

Latest work at #EUSIPCO25!🚀 We bring Topological Signal Processing to the brain 🧠 — showing that edge-based approaches outperform classical GSP in task decoding. Great collab with @MarcoNurisso & @lordgrilo Paper👉 eusipco2025.org/wp-content/upl… #Neuroimaging #SignalProcessing #TSP