Dan Lorenc

13.2K posts

@lorenc_dan

OSS Supply Chain Security. Founder/CEO/Primary Ariba Admin at https://t.co/sGmuUU9JbG Sigstore: https://t.co/dWKlyYu6kv

Chainguard 🤝 @cursor_ai Our new partnership is making trusted OSS the foundation for AI-driven development. Now, devs using Cursor can pull from our CVE-free containers and malware-resistant libraries instead of public registries. See it in action 👇 chainguard.dev/unchained/chai…

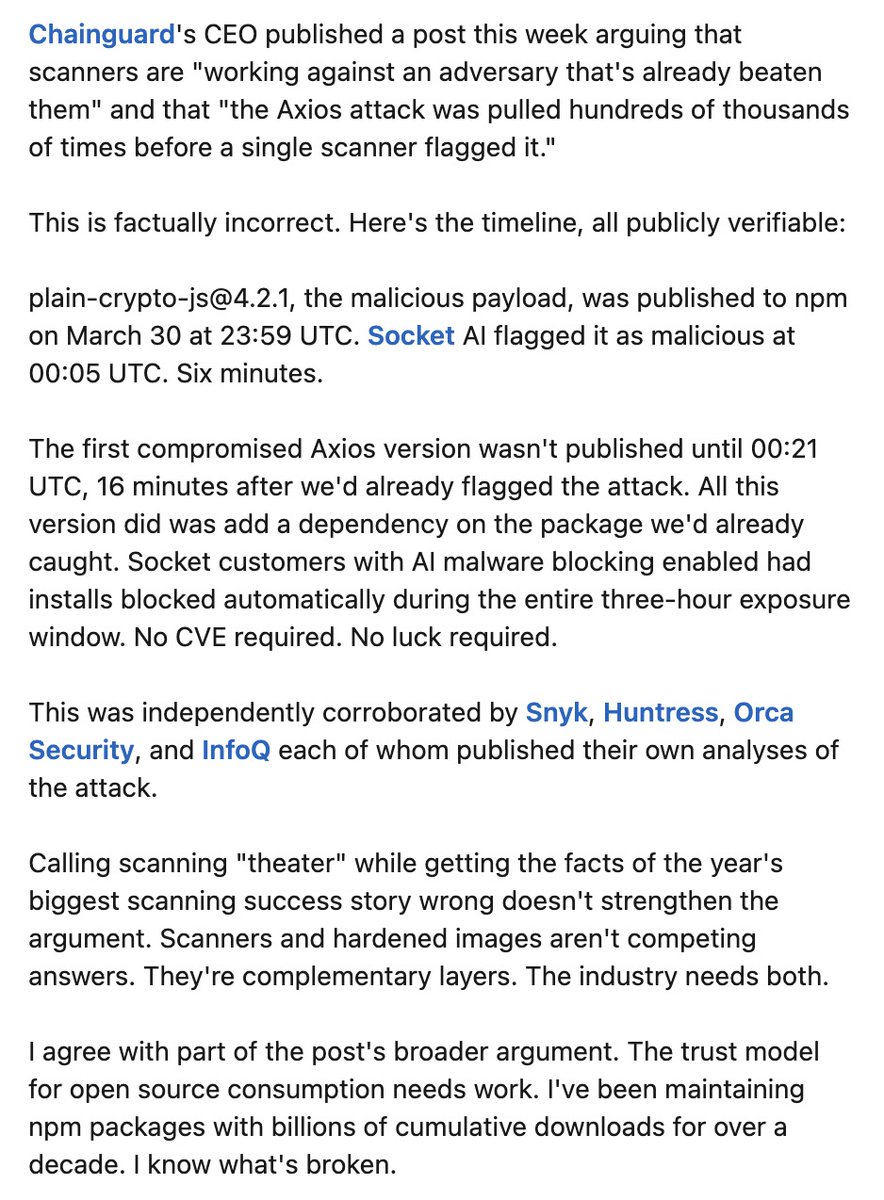

🚨 CRITICAL: Active supply chain attack on axios -- one of npm's most depended-on packages.

The latest axios@1.14.1 now pulls in plain-crypto-js@4.2.1, a package that did not exist before today. This is a live compromise. This is textbook supply chain installer malware.

axios has 100M+ weekly downloads. Every npm install pulling the latest version is potentially compromised right now.

Socket AI analysis confirms this is malware. plain-crypto-js is an obfuscated dropper/loader that:
• Deobfuscates embedded payloads and operational strings at runtime
• Dynamically loads fs, os, and execSync to evade static analysis
• Executes decoded shell commands
• Stages and copies payload files into OS temp and Windows ProgramData directories
• Deletes and renames artifacts post-execution to destroy forensic evidence

If you use axios, pin your version immediately and audit your lockfiles. Do not upgrade.
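The "audit your lockfiles" step could be sketched roughly as follows. This is a minimal illustration, not an official detection tool: the function name `find_suspect_packages` and the `SUSPECT` table are hypothetical, the package names and versions are taken only from the post above, and the script assumes an npm `package-lock.json` with lockfileVersion 2 or 3 (the format with a top-level `packages` map).

```python
import json
from pathlib import Path

# Names and versions come from the post above (assumption: they are the only
# indicators to check). None means "flag any version of this package".
SUSPECT = {
    "axios": {"1.14.1"},
    "plain-crypto-js": None,
}


def find_suspect_packages(lock: dict) -> list[tuple[str, str, str]]:
    """Return (path, name, version) for each suspect entry in a parsed
    package-lock.json that uses the "packages" map (lockfileVersion 2 or 3)."""
    hits = []
    for path, meta in lock.get("packages", {}).items():
        # Keys look like "node_modules/a/node_modules/b"; the package name is
        # whatever follows the last "node_modules/" segment.
        name = path.rpartition("node_modules/")[2]
        version = meta.get("version", "")
        if name in SUSPECT:
            bad_versions = SUSPECT[name]
            if bad_versions is None or version in bad_versions:
                hits.append((path, name, version))
    return hits


if __name__ == "__main__":
    lock_path = Path("package-lock.json")
    if lock_path.exists():
        lock = json.loads(lock_path.read_text())
        for path, name, version in find_suspect_packages(lock):
            print(f"SUSPECT {name}@{version} at {path}")
```

Run from a project root, this prints one line per matching entry, including transitive copies nested under other packages. An empty result only means these specific indicators were not found in that lockfile. Pinning, as the post suggests, means listing an exact version in `package.json` rather than a `^` or `~` range.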

I am the main developer fixing security issues in FFmpeg. I have fixed over 2700 Google OSS-Fuzz issues. I have fixed most of the BIGSLEEP issues. And I disagree with the comments @ffmpeg (Kieran) has made about Google. Of all companies, Google has been the most helpful & nice

AI bug hunting as Microsoft EEE. Embrace - Commit to open source. Extend - Use replaceable FOSS components in your workflow. Extinguish - Release AI hounds to make so many bug reports they cannot innovate before you outfox. Oh hi, @Google.